Pinned Tweet

SAINT CLAIR

514 posts

@clairclancy

Somewhere between tired and in

Joined May 2018

175 Following · 100 Followers

Building a simple neural network from scratch in Java, which includes feedforward, backpropagation, a loss function, and activation functions

github.com/Irotochukwusam…

#Java #coding #AI #dev

Neural network feedforward (one layer) in Python vs Java

Python: “I’ll just guess your types and do the magic”

Java: “Type. Type. Type. Now… compile. Wait… fix that. Compile again.”

#CodingLife #PythonVsJava #ProgrammerHumor
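For reference, a one-layer feedforward pass on the Python side might look roughly like this. It is a minimal sketch, not the code from the post: the sigmoid activation, the layer sizes, and the name `dense_forward` are all assumptions made for illustration.

```python
import numpy as np

def dense_forward(x, W, b):
    """One fully connected layer: sigmoid(x @ W + b). Hypothetical helper."""
    z = x @ W + b                      # affine transform, shape (n_samples, n_units)
    return 1.0 / (1.0 + np.exp(-z))    # element-wise sigmoid (assumed activation)

rng = np.random.default_rng(0)
x = rng.normal(size=(4, 3))   # 4 samples, 3 features (made-up sizes)
W = rng.normal(size=(3, 2))   # 3 inputs -> 2 units
b = np.zeros(2)
out = dense_forward(x, W, b)  # activations, one row per sample
```

No type declarations are needed here; the shapes are only checked when the matrix product runs.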

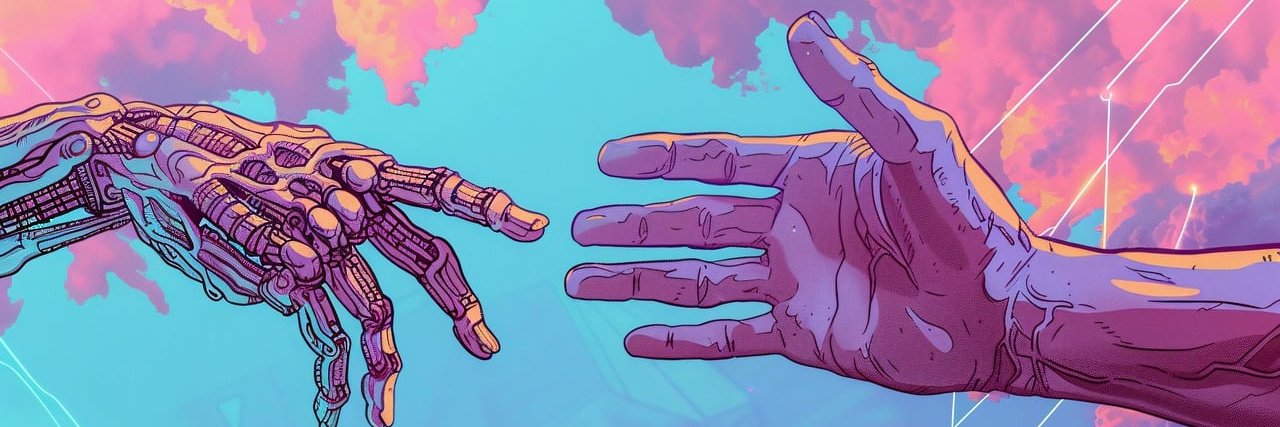

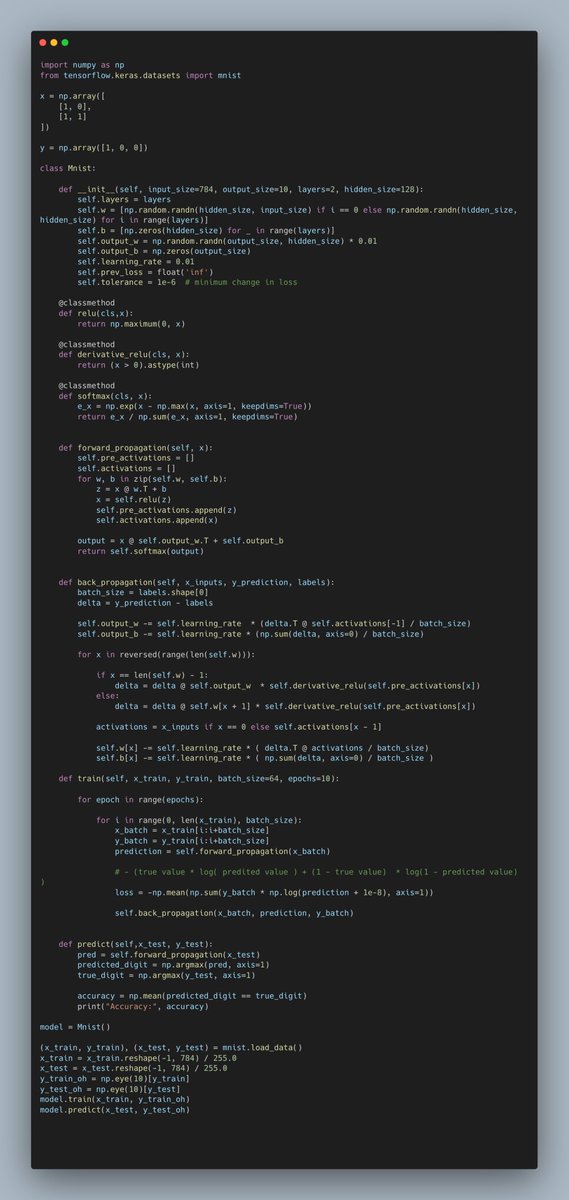

Built a tiny neural net from scratch to read handwritten digits, 0-9.

784 inputs → 2 hidden layers → 10 outputs

1. Forward pass

2. Backprop

Watching it go from random noise → recognizing numbers feels like magic!

#ML #DeepLearning #Python
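The forward pass and backprop steps for a 784 → 2 hidden layers → 10 network could be sketched as below. The post does not give the hidden sizes, activations, or loss, so the 64 and 32 hidden units, ReLU, softmax cross-entropy, He-style initialisation, and all function names are assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)
sizes = [784, 64, 32, 10]  # hidden sizes 64 and 32 are assumed, not from the post
params = [(rng.normal(0.0, np.sqrt(2.0 / m), size=(m, n)), np.zeros(n))
          for m, n in zip(sizes[:-1], sizes[1:])]

def forward(X, params):
    """Return the list of activations, starting with the input itself."""
    acts = [X]
    for i, (W, b) in enumerate(params):
        z = acts[-1] @ W + b
        if i < len(params) - 1:
            acts.append(np.maximum(z, 0.0))            # ReLU on hidden layers
        else:
            z = z - z.max(axis=1, keepdims=True)       # stabilise the softmax
            e = np.exp(z)
            acts.append(e / e.sum(axis=1, keepdims=True))
    return acts

def backward(acts, y, params):
    """Gradients of the mean cross-entropy loss w.r.t. every (W, b)."""
    n = y.shape[0]
    delta = acts[-1].copy()
    delta[np.arange(n), y] -= 1.0                      # softmax output minus one-hot
    delta /= n
    grads = []
    for i in reversed(range(len(params))):
        W, _ = params[i]
        grads.append((acts[i].T @ delta, delta.sum(axis=0)))
        if i > 0:
            delta = (delta @ W.T) * (acts[i] > 0)      # ReLU derivative
    return grads[::-1]

def train_step(X, y, params, lr=0.01):
    """One full-batch gradient descent step; returns new params and the loss."""
    acts = forward(X, params)
    loss = -np.log(acts[-1][np.arange(len(y)), y] + 1e-12).mean()
    grads = backward(acts, y, params)
    new_params = [(W - lr * dW, b - lr * db)
                  for (W, b), (dW, db) in zip(params, grads)]
    return new_params, loss
```

Calling `train_step` repeatedly on batches of flattened 28×28 images with integer labels is the whole training loop in this sketch.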

@TheVixhal This is absolutely amazing, man. I actually built the same thing in Python, but used sigmoid as the activation and Xavier initialisation to handle the vanishing gradient issue
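A rough sketch of that combination, assuming the uniform variant of Xavier (Glorot) initialisation. The helper names and the 784 → 128 layer size are made up for illustration.

```python
import numpy as np

def xavier_uniform(fan_in, fan_out, rng):
    """Sample weights from U(-limit, limit) with limit = sqrt(6 / (fan_in + fan_out))."""
    limit = np.sqrt(6.0 / (fan_in + fan_out))
    return rng.uniform(-limit, limit, size=(fan_in, fan_out))

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

rng = np.random.default_rng(0)
W = xavier_uniform(784, 128, rng)   # 128 hidden units is an assumed size
```

With this limit the weight variance is 2 / (fan_in + fan_out), which keeps the pre-activations in a range where sigmoid is less likely to saturate at the start of training.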

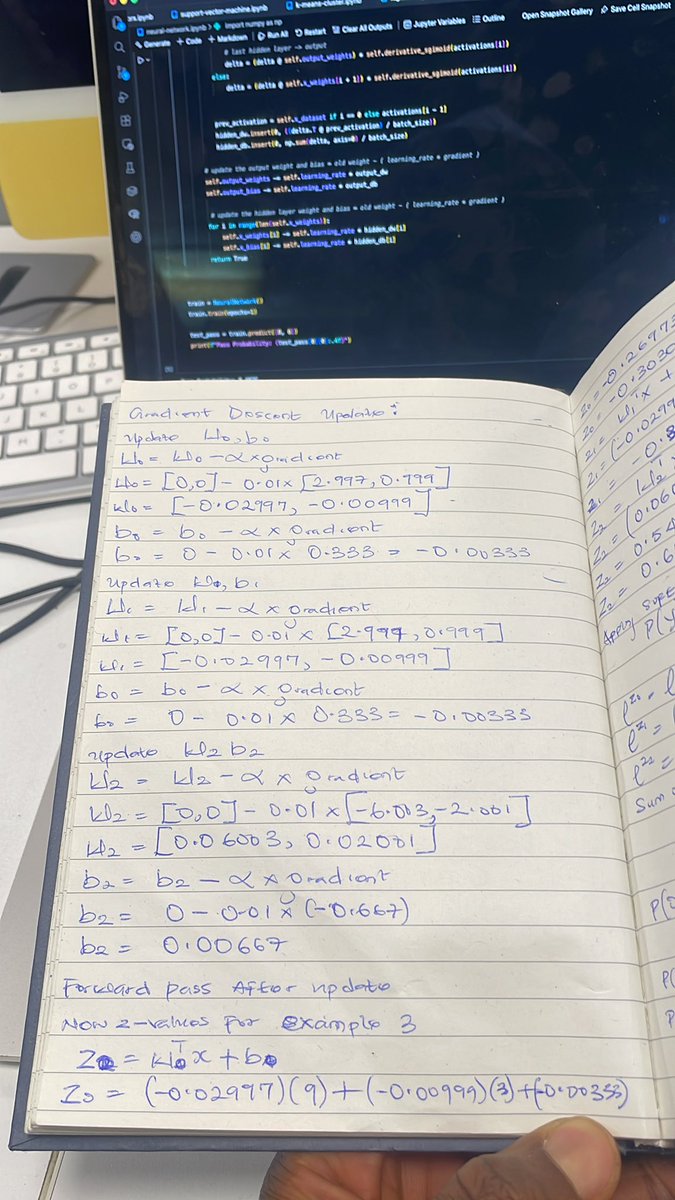

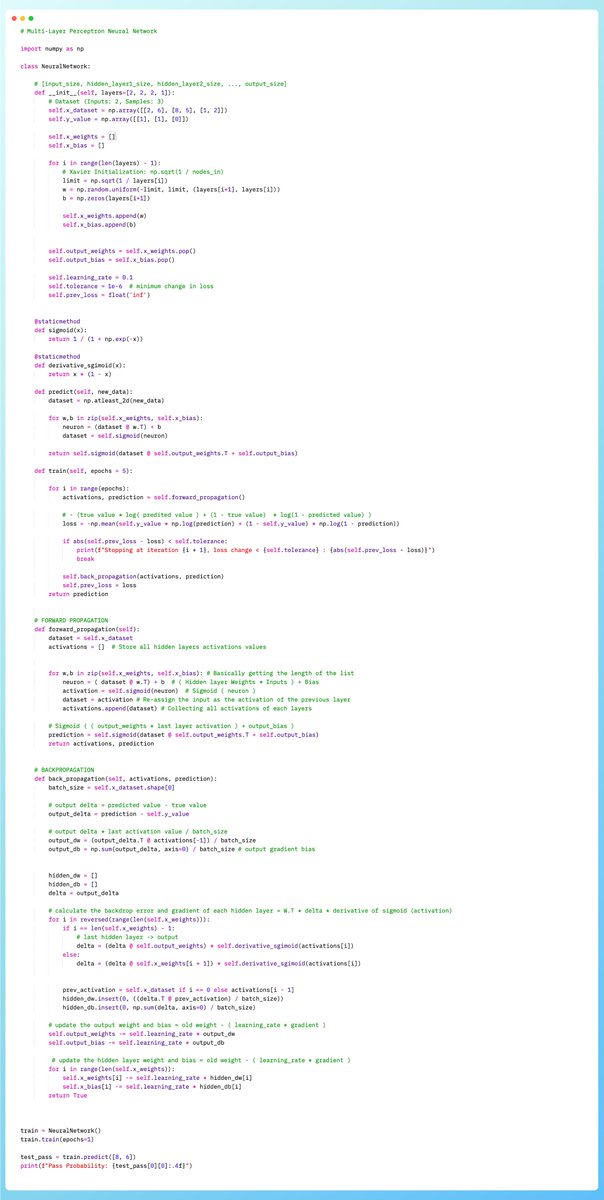

🚀 Building intelligence from scratch. (Neural Network)

I implemented a Multi-Layer Perceptron (MLP) in Python without TensorFlow or PyTorch, to truly understand how neural networks learn.

#MachineLearning #DeepLearning #NeuralNetworks #Python #AI #NapierUniversity 🧠

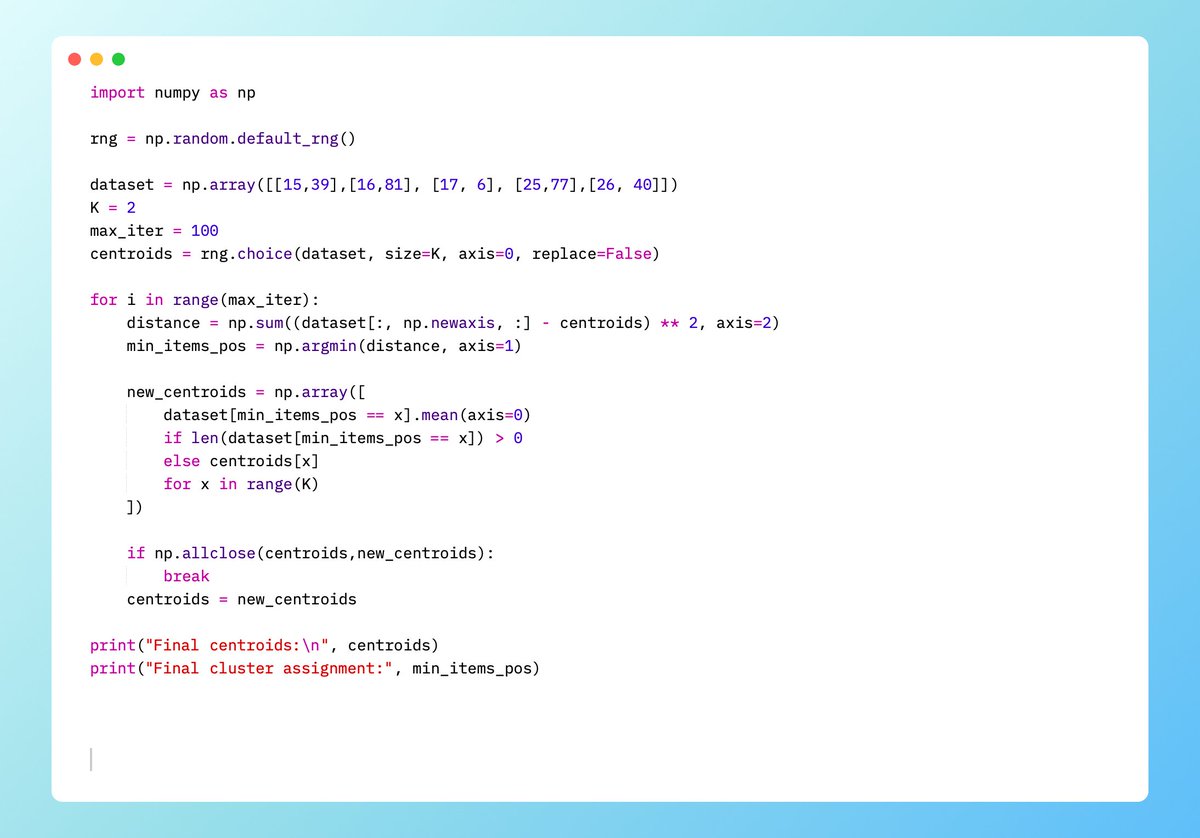

Just implemented k-Means clustering in Python from scratch!

Used it on a small customer dataset: Income vs Spending Score.

2 clusters found automatically

Centroids updated iteratively

Each customer assigned to the closest centroid

#MachineLearning #DataScience #Python #Cluster
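The assign-then-update loop described above could be sketched like this. It is not the code from the post: `kmeans` is a hypothetical function, the number of clusters is passed in explicitly as `k`, and the customer values are invented for illustration.

```python
import numpy as np

def kmeans(X, k, n_iter=100, seed=0):
    """Plain k-means: random data points as initial centroids, then iterate."""
    rng = np.random.default_rng(seed)
    centroids = X[rng.choice(len(X), size=k, replace=False)].astype(float)
    for _ in range(n_iter):
        # Distance from every point to every centroid, shape (n_points, k)
        dists = np.linalg.norm(X[:, None, :] - centroids[None, :, :], axis=2)
        labels = dists.argmin(axis=1)            # closest centroid per point
        new_centroids = np.array([
            X[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
            for j in range(k)
        ])
        if np.allclose(new_centroids, centroids):
            break                                # assignments have stabilised
        centroids = new_centroids
    return centroids, labels

# Made-up (income, spending score) rows, not the dataset from the post
customers = np.array([[15, 20], [16, 25], [17, 18],
                      [80, 85], [85, 90], [82, 88]], dtype=float)
centroids, labels = kmeans(customers, k=2)
```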

Just built a Weighted K-Nearest Neighbors (KNN) Regressor from scratch using NumPy!

Unlike simple KNN, this version handles:

✅ Feature Scaling (Z-score)

✅ Vectorized Distance Math

✅ Custom K selection

✅ Weighted Averaging

#MachineLearning #Python #NumPy #DataScience #Coding
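A minimal sketch of how those four pieces might fit together in NumPy. Inverse-distance weighting is an assumption (the post does not say which weighting it uses), and `weighted_knn_regress` is a hypothetical name.

```python
import numpy as np

def weighted_knn_regress(X_train, y_train, X_query, k=3, eps=1e-9):
    # Z-score scaling with statistics from the training set only
    mu, sigma = X_train.mean(axis=0), X_train.std(axis=0)
    sigma = np.where(sigma == 0, 1.0, sigma)
    A = (X_train - mu) / sigma
    B = (X_query - mu) / sigma
    # Vectorized Euclidean distances, shape (n_query, n_train)
    d = np.sqrt(((B[:, None, :] - A[None, :, :]) ** 2).sum(axis=2))
    idx = np.argsort(d, axis=1)[:, :k]              # k nearest per query row
    nearest_d = np.take_along_axis(d, idx, axis=1)
    w = 1.0 / (nearest_d + eps)                     # assumed inverse-distance weights
    return (w * y_train[idx]).sum(axis=1) / w.sum(axis=1)

X_train = np.array([[0.0], [1.0], [2.0], [3.0]])
y_train = np.array([0.0, 1.0, 2.0, 3.0])
pred = weighted_knn_regress(X_train, y_train, np.array([[1.5]]), k=2)
```

The `eps` term only guards against division by zero when a query coincides with a training point.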

Built K-Nearest Neighbors from first principles for Classification

• Feature scaling (Very important)

• Euclidean distance (calculated by hand)

• Choosing K

• Weighted KNN voting

#MachineLearning #python #datascience #AI #coding
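For the classification case, the same ingredients end in a weighted vote instead of an average. The sketch below assumes inverse-distance votes; `knn_classify` and the toy points are hypothetical.

```python
import numpy as np

def knn_classify(X_train, y_train, x, k=3, eps=1e-9):
    # Scale features so no single column dominates the distance
    mu, sigma = X_train.mean(axis=0), X_train.std(axis=0)
    sigma[sigma == 0] = 1.0
    A = (X_train - mu) / sigma
    q = (x - mu) / sigma
    d = np.sqrt(((A - q) ** 2).sum(axis=1))   # Euclidean distance, written out
    votes = {}
    for i in np.argsort(d)[:k]:               # indices of the k nearest points
        label = int(y_train[i])
        votes[label] = votes.get(label, 0.0) + 1.0 / (d[i] + eps)
    return max(votes, key=votes.get)          # label with the largest total weight

X_train = np.array([[1, 1], [1, 2], [2, 1], [8, 8], [9, 8], [8, 9]], dtype=float)
y_train = np.array([0, 0, 0, 1, 1, 1])
label = knn_classify(X_train, y_train, np.array([2.0, 2.0]), k=3)
```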

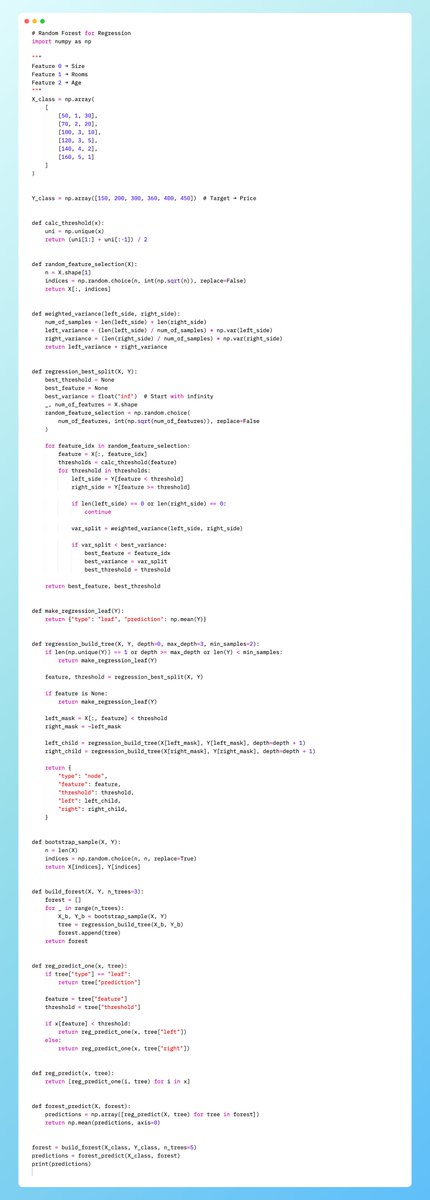

I just built a Random Forest Regressor from scratch in NumPy by extending the regression tree I built earlier.

✔️ Bootstrap sampling

✔️ Random feature selection

✔️ Variance-based splits

✔️ Recursive decision trees

✔️ Ensemble averaging

#MachineLearning #RandomForest #FromScratch #NumPy #DataScience #AI #LearningML
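The five pieces in that checklist could be combined roughly as follows. This is a compact sketch under assumptions, not the author's implementation: trees are stored as nested tuples, thresholds are taken from observed feature values, and every name and default here is hypothetical.

```python
import numpy as np

def best_split(X, y, feat_ids):
    """Pick the (feature, threshold) with the lowest weighted child variance."""
    best_f, best_t, best_sse = None, None, np.inf
    for f in feat_ids:
        for t in np.unique(X[:, f])[:-1]:      # skip the max so the right side is non-empty
            left = X[:, f] <= t
            sse = y[left].var() * left.sum() + y[~left].var() * (~left).sum()
            if sse < best_sse:
                best_f, best_t, best_sse = f, t, sse
    return best_f, best_t

def build_tree(X, y, rng, max_depth=4, min_samples=2, n_feats=None):
    n_feats = n_feats or X.shape[1]
    if max_depth == 0 or len(y) < min_samples or np.all(y == y[0]):
        return float(y.mean())                 # leaf: mean of the targets
    feat_ids = rng.choice(X.shape[1], size=n_feats, replace=False)  # random feature subset
    f, t = best_split(X, y, feat_ids)
    if f is None:
        return float(y.mean())
    left = X[:, f] <= t
    return (f, t,
            build_tree(X[left], y[left], rng, max_depth - 1, min_samples, n_feats),
            build_tree(X[~left], y[~left], rng, max_depth - 1, min_samples, n_feats))

def predict_tree(node, x):
    while isinstance(node, tuple):             # descend until a leaf value is reached
        f, t, left, right = node
        node = left if x[f] <= t else right
    return node

def fit_forest(X, y, n_trees=20, max_depth=4, n_feats=None, seed=0):
    rng = np.random.default_rng(seed)
    trees = []
    for _ in range(n_trees):
        idx = rng.integers(0, len(X), size=len(X))   # bootstrap sample with replacement
        trees.append(build_tree(X[idx], y[idx], rng, max_depth, 2, n_feats))
    return trees

def predict_forest(trees, X):
    """Ensemble averaging: mean of the per-tree predictions."""
    return np.array([np.mean([predict_tree(t, x) for t in trees]) for x in X])
```

Passing `n_feats` smaller than the number of columns turns on per-node feature subsampling; the default uses every feature.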

@PriyaChakr87701 but sklearn will be faster on larger datasets due to its Cython-optimized backend

@PriyaChakr87701 On the synthetic test data, both models achieved an MSE of ~0.813 and an R² of ~0.96. The match is expected because both implementations use variance reduction (mean squared error) as the splitting criterion and follow the CART algorithm to build a piecewise-constant prediction model.
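To make the criterion concrete, here is a small sketch of variance reduction for one candidate split. The function name and the toy numbers are assumptions for illustration, not taken from either implementation.

```python
import numpy as np

def variance_reduction(y, left_mask):
    """Parent variance minus the size-weighted variance of the two children."""
    n = len(y)
    n_left = left_mask.sum()
    n_right = n - n_left
    if n_left == 0 or n_right == 0:
        return 0.0                       # degenerate split, nothing gained
    return (y.var()
            - (n_left / n) * y[left_mask].var()
            - (n_right / n) * y[~left_mask].var())

x = np.array([0.1, 0.2, 0.3, 0.7, 0.8, 0.9])   # made-up feature values
y = np.array([1.0, 1.0, 1.0, 5.0, 5.0, 5.0])   # made-up targets
gain = variance_reduction(y, x <= 0.3)
```

Because each leaf predicts its mean, the variance inside a leaf is that leaf's MSE, so maximising this gain is the same as minimising squared error.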

Built a Decision Tree Regressor from scratch in Python

✔ Variance-based splits

✔ Recursive tree construction

✔ Mean-value leaf predictions

✔ Piecewise constant regression

#MachineLearning #DataScience #Python #FromScratch

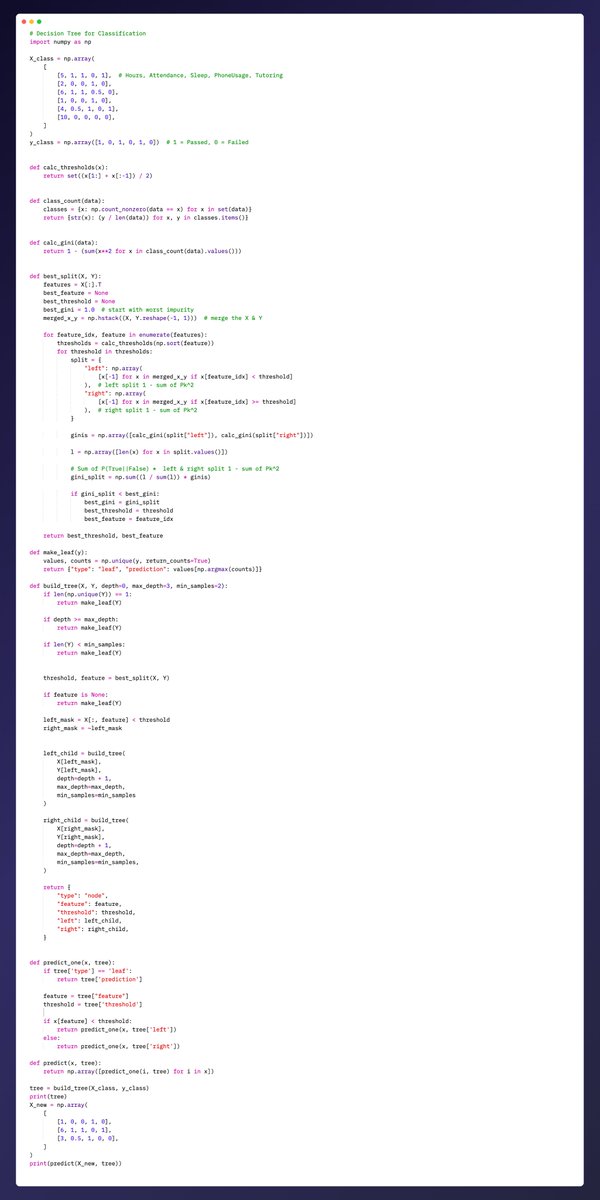

Built a Decision Tree classifier from scratch in Python

✔ Gini impurity

✔ Threshold generation

✔ Best split selection

✔ Recursive tree building

✔ Leaf prediction & inference

#MachineLearning #DataScience #Python #MLFromScratch
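The first three items could look something like this. Using midpoints between sorted unique values as thresholds is an assumption (the post does not say how thresholds are generated), and the function names and toy data are hypothetical.

```python
import numpy as np

def gini(y):
    """Gini impurity: 1 minus the sum of squared class proportions."""
    _, counts = np.unique(y, return_counts=True)
    p = counts / counts.sum()
    return 1.0 - np.sum(p ** 2)

def best_split(X, y):
    """Return (feature, threshold, weighted impurity) of the best split found."""
    best_f, best_t, best_score = None, None, gini(y)
    n = len(y)
    for f in range(X.shape[1]):
        vals = np.unique(X[:, f])
        for t in (vals[:-1] + vals[1:]) / 2.0:       # midpoints as candidate thresholds
            left = X[:, f] <= t
            score = (left.sum() * gini(y[left])
                     + (~left).sum() * gini(y[~left])) / n
            if score < best_score:                   # keep only strict improvements
                best_f, best_t, best_score = f, t, score
    return best_f, best_t, best_score

X = np.array([[1, 5], [2, 1], [3, 7], [6, 2], [7, 6], [8, 3]], dtype=float)
y = np.array([0, 0, 0, 1, 1, 1])
feature, threshold, score = best_split(X, y)
```

Recursive tree building then applies `best_split` to each side until a node is pure or a depth limit is reached.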

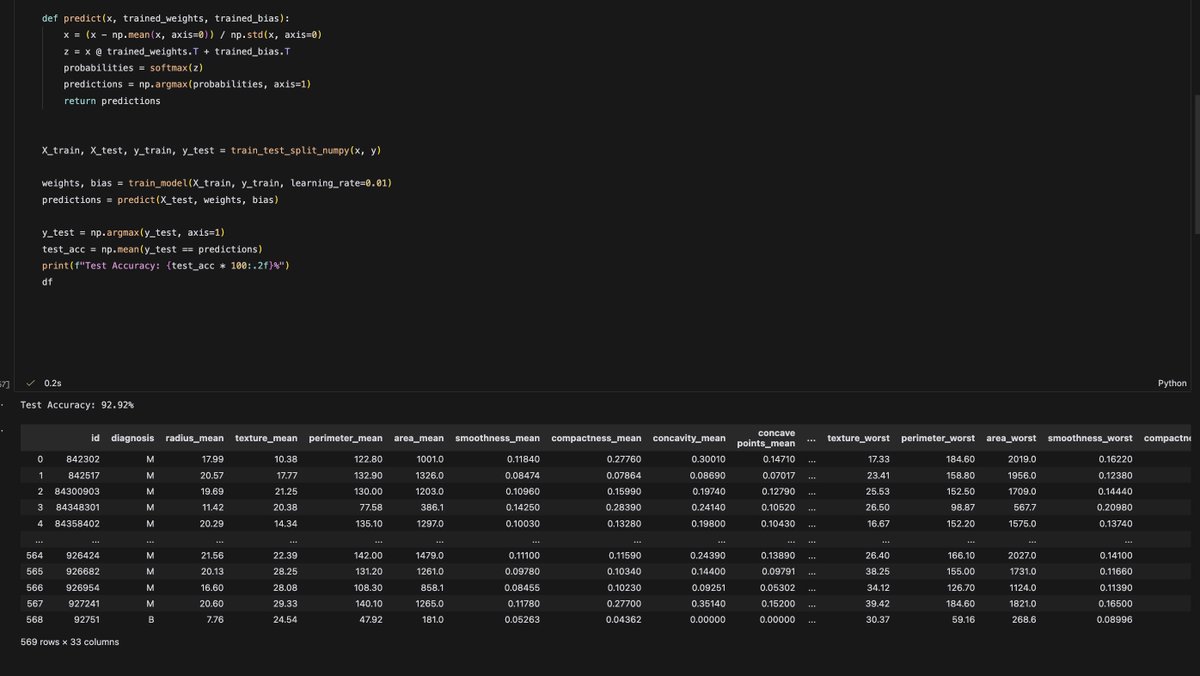

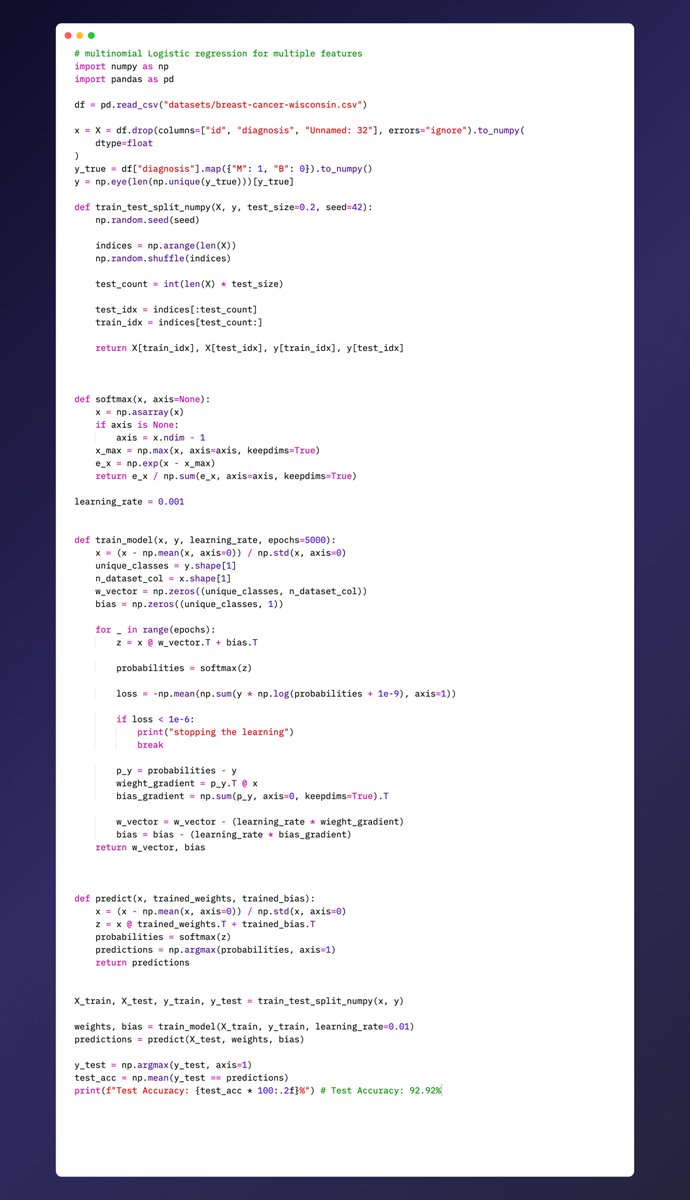

🚀 Built a Multinomial Logistic Regression from scratch in Python to classify breast cancer!

✅ Train/Test split

✅ One-hot encoding

✅ Softmax & gradient descent

Accuracy on test set: 92.9%

#python #code #ArtificialInteligence
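The one-hot, softmax, and gradient descent steps might be sketched as below. The learning rate, iteration count, zero initialisation, and function names are assumptions, and no dataset or accuracy figure from the post is reproduced here.

```python
import numpy as np

def one_hot(y, n_classes):
    return np.eye(n_classes)[y]                 # row i is the indicator vector for y[i]

def softmax(z):
    z = z - z.max(axis=1, keepdims=True)        # subtract the row max for stability
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)

def fit_softmax(X, y, n_classes, lr=0.1, n_iter=500):
    """Full-batch gradient descent on the mean cross-entropy loss."""
    n, d = X.shape
    W, b = np.zeros((d, n_classes)), np.zeros(n_classes)
    Y = one_hot(y, n_classes)
    for _ in range(n_iter):
        P = softmax(X @ W + b)
        W -= lr * X.T @ (P - Y) / n             # gradient w.r.t. the weights
        b -= lr * (P - Y).mean(axis=0)          # gradient w.r.t. the bias
    return W, b

def predict(X, W, b):
    return softmax(X @ W + b).argmax(axis=1)
```

With two classes this reduces to ordinary logistic regression, just written in the multinomial form.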

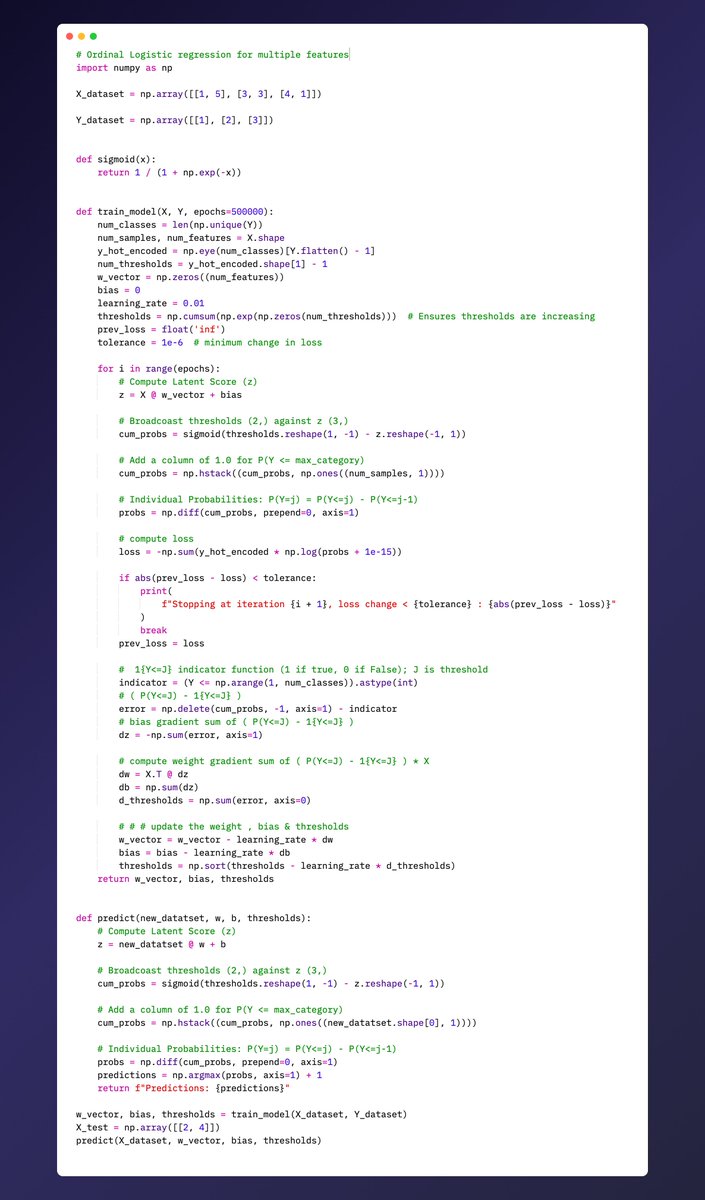

🚀 Just implemented Ordinal Logistic Regression from scratch in Python! 🐍

✅ Supports multiple features

✅ Learns weights, bias & thresholds

✅ Predicts ordinal outcomes (1,2,3…)

✅ Fully vectorized & easy to extend

Perfect for ranking problems or ratings!

#MachineLearning #Python #DataScience
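One common way to set this up is the cumulative-link (proportional odds) model, where P(y ≤ k) = sigmoid(θ_k − w·x). The sketch below assumes that formulation, which the post does not confirm. It also labels classes 0, 1, 2… rather than 1, 2, 3…, folds the bias into the thresholds, and keeps the thresholds ordered by sorting after each step; all names are hypothetical.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def cumulative_probs(X, w, theta):
    """Padded cumulative probabilities, shape (n, K + 1): first column 0, last column 1."""
    eta = X @ w
    C = sigmoid(theta[None, :] - eta[:, None])           # P(y <= k) for each cut point
    n = len(X)
    return np.hstack([np.zeros((n, 1)), C, np.ones((n, 1))])

def fit_ordinal(X, y, n_classes, lr=0.1, n_iter=2000):
    n, d = X.shape
    w = np.zeros(d)
    theta = np.linspace(-1.0, 1.0, n_classes - 1)        # assumed starting thresholds
    rows = np.arange(n)
    for _ in range(n_iter):
        C = cumulative_probs(X, w, theta)
        G = C * (1.0 - C)                                # sigmoid derivative, 0 at the padding
        p = np.clip(C[rows, y + 1] - C[rows, y], 1e-12, None)   # P(y_i) per sample
        upper, lower = G[rows, y + 1] / p, G[rows, y] / p
        grad_w = X.T @ (upper - lower) / n
        grad_theta = np.zeros_like(theta)
        has_upper, has_lower = y < n_classes - 1, y > 0
        np.add.at(grad_theta, y[has_upper], -upper[has_upper])    # cut point above class y
        np.add.at(grad_theta, y[has_lower] - 1, lower[has_lower]) # cut point below class y
        w -= lr * grad_w
        theta = np.sort(theta - lr * grad_theta / n)     # keep thresholds ordered
    return w, theta

def predict_ordinal(X, w, theta):
    """Most probable class under the fitted cumulative-link model."""
    return np.diff(cumulative_probs(X, w, theta), axis=1).argmax(axis=1)
```

Here `y` must be an integer NumPy array with values in 0…K−1, and `X` can have any number of feature columns.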
