Ramya Chinnadurai 🚀

8K posts

@code_rams

Indie hacker. Building in public @tweetsmashApp | https://t.co/YO5CRQrT6b Linkedmash | https://t.co/pbOsWhaJl3

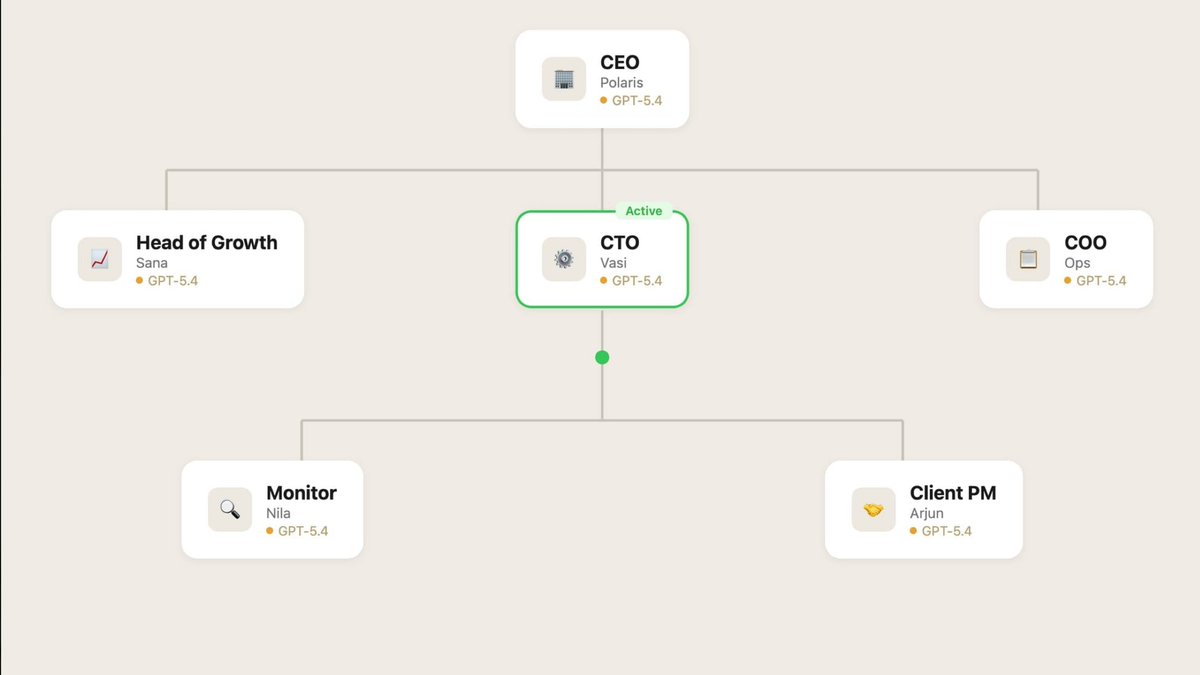

Why would anyone choose OpenClaw vs Claude Code?
Claude now has:
• Discord/Telegram integration
• Cron Jobs (/loop)
• 1M token memory
• Webhooks to phone
• Can run 24/7 on any computer or Mac Mini
This covers 95% of what people actually use OpenClaw for, with better security and easier setup.
The only reason to stick with OpenClaw is if you want a multi-agent setup. That's the only difference I could think of.
Going to stick with OpenClaw for now because of this, but the gap is almost at zero.

We just released Claude Code channels, which allows you to control your Claude Code session through select MCPs, starting with Telegram and Discord. Use this to message Claude Code directly from your phone.

IF YOU'RE ON OPENCLAW DO THIS NOW:
I just sped up my OpenClaw by 95% with a single prompt.
Over the past week my claw has been unbelievably slow. Turns out the output of EVERY cron job gets loaded into context. Months of cron outputs were being sent with every message.
Do this prompt now:
"Check how many session files are in ~/.openclaw/agents/main/sessions/ and how big sessions.json is. If there are thousands of old cron session files bloating it, delete all the old .jsonl files except the main session, then rebuild sessions.json to only reference sessions that still exist on disk."
This will delete all the session data around your cron outputs. If you run a ton of cron jobs, this is a tremendous amount of bloat that does not need to be loaded into context and is MAJORLY slowing down your OpenClaw.
If for some reason you want to keep some of this cron session data in memory, don't have your OpenClaw delete ALL of the files. For me, all the outputs are automatically saved to a Convex database anyway, so there was no reason to keep them in context.
Instantly sped up my OpenClaw from unusable to lightning quick.
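For anyone who would rather inspect the directory before letting an agent delete things, here is a minimal Python sketch of the check-and-prune step described in the prompt above. It is not an OpenClaw tool. The function names `summarize_sessions` and `prune_sessions` are made up for illustration, and the name of the main session file is an assumption you have to supply yourself. Pruning defaults to a dry run.

```python
from pathlib import Path


def summarize_sessions(sessions_dir: Path) -> dict:
    """Report how many .jsonl files exist and how large they and the index are."""
    jsonl_files = sorted(sessions_dir.glob("*.jsonl"))
    index_file = sessions_dir / "sessions.json"
    return {
        "jsonl_count": len(jsonl_files),
        "jsonl_bytes": sum(f.stat().st_size for f in jsonl_files),
        "index_bytes": index_file.stat().st_size if index_file.exists() else 0,
    }


def prune_sessions(sessions_dir: Path, keep_names: set, dry_run: bool = True) -> list:
    """Return the .jsonl files not listed in keep_names.

    Files are deleted only when dry_run is False. keep_names must contain
    the file name of the main session (hypothetical; check your own
    directory to find it).
    """
    targets = [
        f for f in sorted(sessions_dir.glob("*.jsonl"))
        if f.name not in keep_names
    ]
    if not dry_run:
        for f in targets:
            f.unlink()
    return targets
```

A reasonable first step is `summarize_sessions(Path.home() / ".openclaw/agents/main/sessions")` to see the file count and sizes, then `prune_sessions(...)` with the default `dry_run=True` to list what would be removed. Rebuilding sessions.json is deliberately left out, since the post does not describe that file's structure.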

Found this project called OpenMAIC - an open source multi-agent interactive classroom platform that uses AI models of your choice, which creates a full interactive classroom experience within minutes on ANYTHING. Super cool project, video out now!

We invited Claude users to share how they use AI, what they dream it could make possible, and what they fear it might do. Nearly 81,000 people responded in one week—the largest qualitative study of its kind. Read more: anthropic.com/features/81k-i…

One plugin. One command. Every skill:
▲ ~/ npx plugins add vercel/vercel-plugin
The Vercel plugin for coding agents turns isolated capabilities into coordinated expertise, with:
• 47+ specialized skills
• Sub-agents for deployments, performance, and more
• Dynamic context management for precision and cost control
From single tasks to full workflows, agents like Claude Code and Cursor can further understand how to build and ship on Vercel.
vercel.com/changelog/intr…

There's a hidden tax on every knowledge worker in the world, and nobody talks about it: The design tax.
You're a strategist, a sales lead, a marketer. You were hired for what you know. But every meeting, every pitch, every proposal expects you to show up with something that looks like a designer made it.
I lived this. Before Gamma, I spent time in consulting and investment banking. I spent more hours formatting slides than on the analysis that went into them.
When my cofounders and I started Gamma, we asked: what if you never had to be a designer in the first place?
Five years and nearly 100 million users later, we've refunded billions of hours of the design tax. Today, we're eliminating it for good with our biggest launch ever.
Gamma Imagine: a powerful, AI-native visual creation tool directly in Gamma. Posters, logos, infographics, visuals from a single prompt. On brand, every time.
AI-Native Templates. Templates were supposed to save you from design work. Instead you spent the time filling them in. So we completely rebuilt the template experience. Modify a whole deck with a single prompt, with your brand and style intact every time.
Gamma Connectors. You're already thinking in ChatGPT and Claude. Now Gamma sits inside the most popular work apps in the world. No more context-switching.
You were hired for your ideas, not to resize text boxes. Let Gamma pay the design tax.

"Every software company in the world needs to have an @openclaw strategy" - Jensen at @NVIDIAAI GTC
Framing OpenClaw as one of the most important open source releases ever, NVIDIA announced NemoClaw, a reference platform for enterprise-grade, secure OpenClaw with OpenShell, network boundaries, and security baked in.

Subagents are now available in Codex. You can accelerate your workflow by spinning up specialized agents to:
• Keep your main context window clean
• Tackle different parts of a task in parallel
• Steer individual agents as work unfolds

#NVIDIAGTC news: NVIDIA announces NemoClaw for the OpenClaw agent platform. NVIDIA NemoClaw installs NVIDIA Nemotron models and the NVIDIA OpenShell runtime in a single command, adding privacy and security controls to run secure, always-on AI assistants. nvda.ws/47xOPqQ