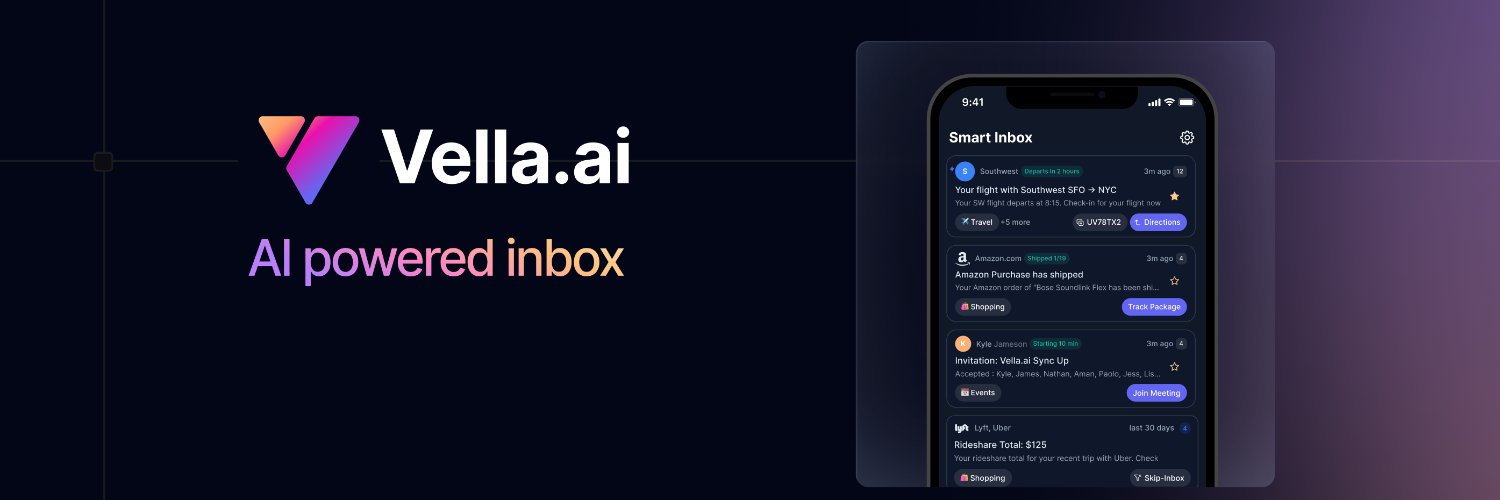

Your inbox never stops filling up & ⭐️ 🚩 📧 📥 can only get you so far.

Yonatan

2.2K posts

@devYonz

Co-founder @vella_ai | CEO @LegendAppHQ | https://t.co/ifnNzQPRQi @Harvard & x-dev @LinkedIn Passionate about user-owned auth, storage, and personalization.

I'm excited to share that I've teamed up with @jmeistrich to make 📘 Legend notebook better than ever. It is the notes & to-do list app that you end up with after starting with OneNote, Evernote, Apple Notes, and trying Craft, Tana, Todoist, and Things.

We're still reviewing the CFP submissions, but we've already dropped the first speakers on the website! 👀 We can't wait to see what the @expo folks have cooked up for us this year & we're all fired up for @wcandillon making his comeback to App.js 🔥 Stay tuned for more! PS. The price will go up in May, so don't wait too long!

The cofounder and CTO of Perplexity, @denisyarats, just said that internally at Perplexity they’re moving away from MCPs and instead using APIs and CLIs 👀

Thought this Opus 4.6 distilled Qwen 27B might be a gimmick, but I had to try it, and I am IMPRESSED! The video below compares two long-context prompted single-page HTML designs. More details below, but TLDR: it seems to be another significant improvement over the base model! For the sake of simplicity, the Qwen Opus 4.6 Distillation, posted by "Jackrong", will now be referred to as Qwopus.

Test Details: I ran it against base Qwen 27B, both in Claude Code, using my own personal benchmark that showed me on day one that Qwen 27B was significantly better than the other models launched that day, before benchmarks even hit. I uploaded an extensive summary of a highly speculative preprint I'm working on, spanning multiple disciplines and involving lots of intricate LaTeX math equations and verbose explanation.

The LaTeX rendering is a big milestone because less than a year ago, SOTA models were still struggling to render equations properly in HTML. Qwen 27B was the first local model I tried that nailed them on the first go and didn't misrepresent them or error out, which is why I was so impressed with it on day one. Real big boy Opus 4.6 rocks at them.

But while both of these 27B models did well in one shot, the Claude Code experience seemed smoother with Qwopus, and the design also seems, to me, to be significantly nicer: much less of a "Las Vegas" vibe, let's say, and the color palette is much less jarring, but still exciting. The verbose text explanations in the Qwopus version are also orders of magnitude more detailed and organized than in base Qwen 27B, which can be seen in the greater length of the Qwopus presentation. Qwen threw in a table where Qwopus put a nice section with summaries.

This video shows them both throughout, then compared section by section, then I briefly scroll through the Claude Code output from each. You can tell that the Qwopus responses were formatted really nicely, almost exactly like Opus 4.6 seems to output, especially in planning mode. I did have to steer Qwen once, but that was a file naming issue that was kind of my fault, so I wouldn't count it.

This would run well on a 3090, as shown by @sudoingX. It rips on my 5090 at 50 t/s and feels like a genuine Claude experience. Need to test more, but try it in Claude Code, and I think you'll be surprised. I am wondering if it also just plays nicer with the closed-source Claude Code "secret sauce", since it's distilled on a Claude model.

So with that, I'm tagging the legend @TheAhmadOsman, curious if you've messed with this yet, but I think this is the new local inference king! AND THIS IS THE WORST IT'LL EVER BE!

Language Benchmark

🧘🏽stay in the flow