Very excited to announce that the early release of my book "Create your own programming language with @rustlang" is available at createlang.rs. Accompanying code is on my GitHub. @ThisWeekInRust @read_rust #rustlang github.com/ehsanmok/creat…

𝔈𝔥𝔰𝔞𝔫

1.6K posts

@ehsanmok

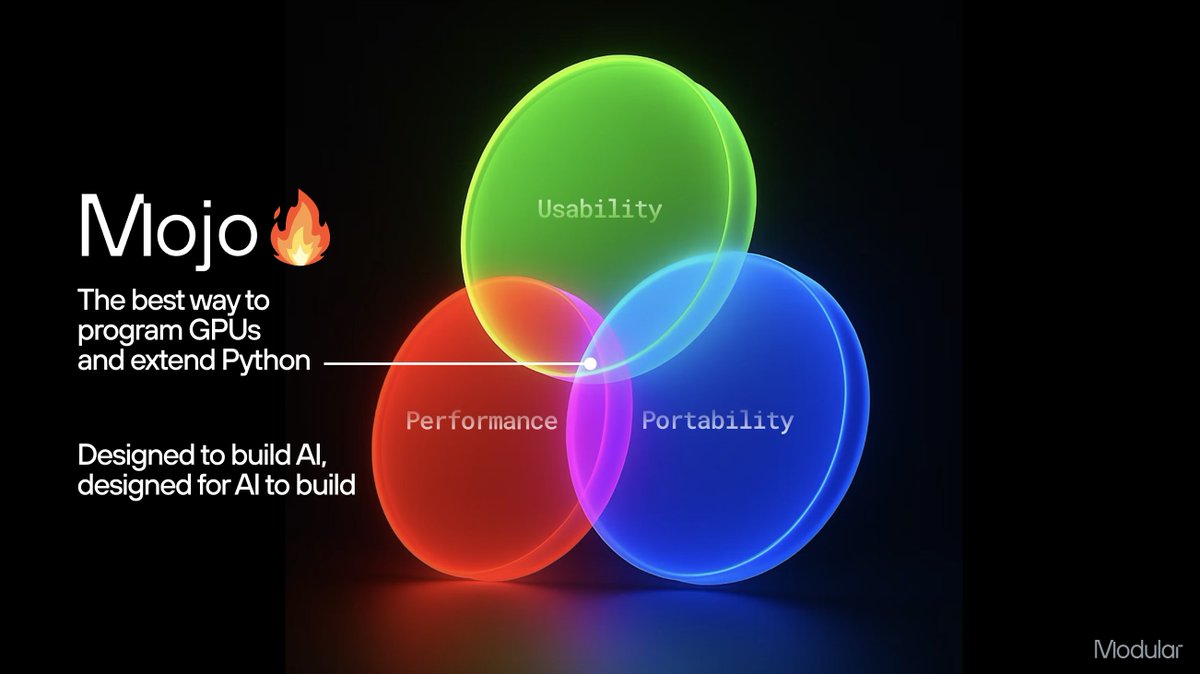

Mojo🔥 maximalist @Modular. Teacher at heart. https://t.co/WvqWAgfsLP, https://t.co/yx2pGCIcYw. Into powerlifting. Used to know some Math. Opinions are mine.

Programming Massively Parallel Processors is the gold standard GPU programming textbook and the 5th edition just dropped. Mojo 🔥 solutions to every exercise are in today's nightly release. Check out the repo and see what GPU programming looks like in Mojo ⬇️ github.com/modular/modula…

Today, in Iran, in the middle of a war, the regime executed a 19-year-old national wrestling champion for the crime of joining January protests. 💔 After signaling to the world, including President @realDonaldTrump, that they would halt executions of protesters, the regime has done the exact opposite. Three young protesters, Saleh Mohammadi, Mehdi Ghasemi, and Saeed Davoudi, were hanged in Qom after a sham trial. Reports indicate torture. Forced confessions. No access to chosen lawyers. Closed-door proceedings. No right to appeal. I call on @GlobalAthleteHQ to stand with Iranian athletes who are being silenced, imprisoned, and executed simply for raising their voices. This is not just about sports. This is about human dignity.