Akhilesh Mishra

@livingdevops

Founder LivingDevOps | DevOps Lead | Educator | Mentor | Tech Writer

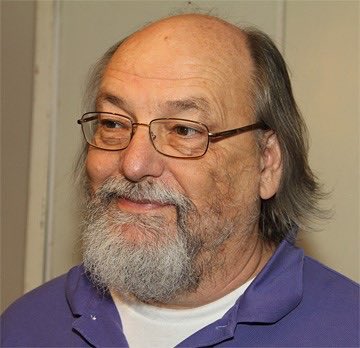

Dennis Ritchie created C in the early 1970s without Google, Stack Overflow, GitHub, or any AI assistant (Claude, Cursor, Codex).

- No VC funding.
- No viral launch.
- No TED talk.
- Just two engineers at Bell Labs, Ritchie and Ken Thompson. A terminal. And a problem to solve.

He built a language small enough to run on machines with only kilobytes of memory.

More than 50 years later, it still runs almost everything. The Linux kernel. Windows. macOS. Every iPhone. Every Android phone. NASA's deep-space probes. The International Space Station.

> Python borrowed from it.
> Java borrowed from it.
> JavaScript borrowed from it.

If you have ever written a single line of code in any language, you did it in Dennis Ritchie's shadow.

He died in 2011, about a week after Steve Jobs. Jobs got the front pages. Ritchie got silence.

This legend deserves to be celebrated.
