Tom Fiddaman

We need single-payer health care. I've looked at the numbers, and there's no other way to bring costs down.

here is the video on the Riemann hypothesis that YouTube took down

@SkippyStone @mzjacobson That's a silly fallacy of small numbers. Would you eat an apple with 122 ppm of plutonium? 122 ppm over many kilometers of air is a lot. Anyway, the radiative effects of gases are experimental science, so if you had evidence you could refute all sorts of things and win a Nobel.

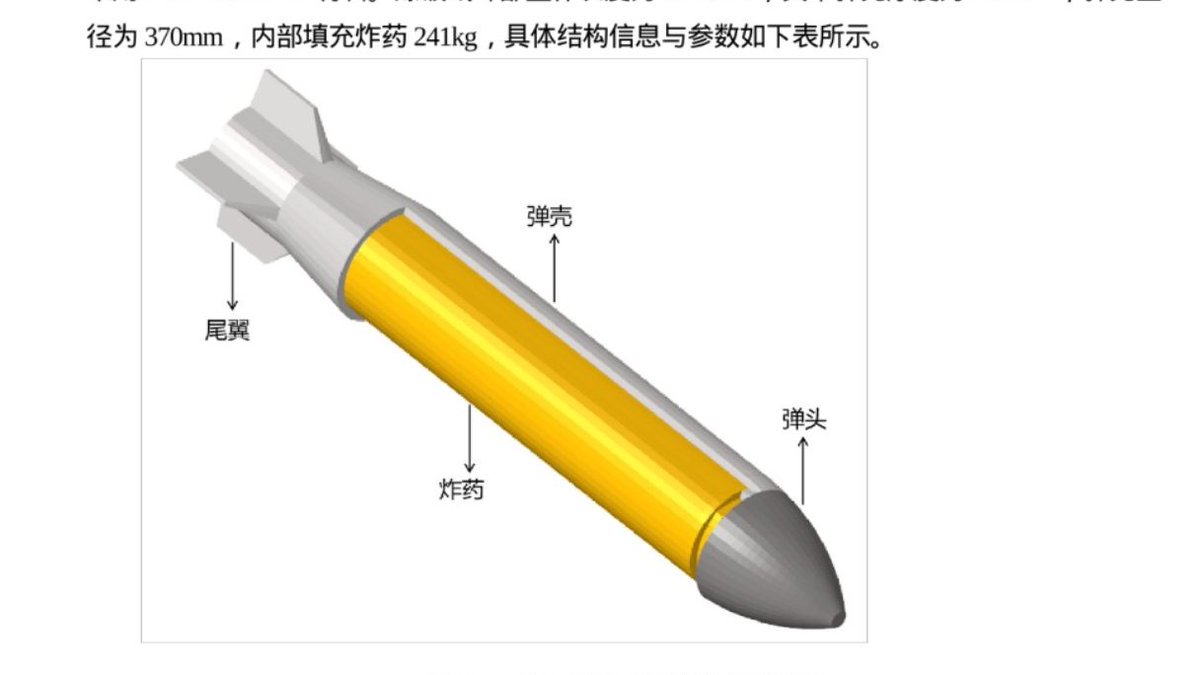

If this is real, it could be one of the largest data breaches in China’s history.

A hacker group claims it extracted over 10 petabytes of data from a state-run supercomputing facility, widely believed by experts to be the National Supercomputing Center in Tianjin. This center supports thousands of clients, including research institutes, aerospace programs, and defense-linked organizations.

What’s reportedly in the data:

- Documents marked “secret” in Chinese
- Missile and bomb schematics
- Aerospace and aviation research
- Bioinformatics and fusion simulation data
- Files linked to major state entities like AVIC and COMAC

Cybersecurity experts who reviewed sample data say it matches what you would expect from such a facility, though the full breach is not independently verified.

Even more concerning:

- The attacker claims access lasted months without detection
- Sample datasets were posted online via Telegram
- Full access is reportedly being sold for hundreds of thousands of dollars in crypto

At this stage, the scale and origin are still being verified. But if even partially true, it points to a serious vulnerability in infrastructure tied to China’s scientific and defense ecosystem. If a centralized system like this can be penetrated, what does that say about the security of the data it was processing?

#China #Cybersecurity #CCP #DataBreach #Geopolitics #Tech cnn.com/2026/04/08/chi…

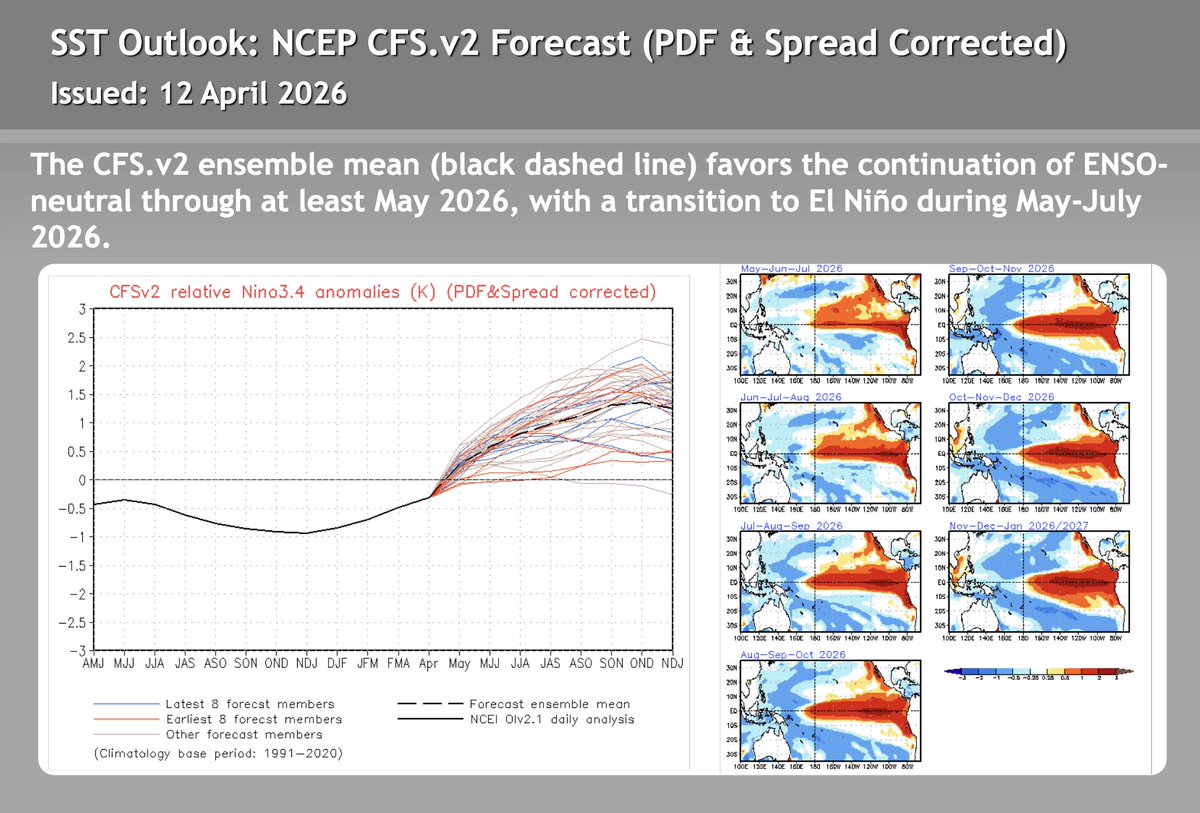

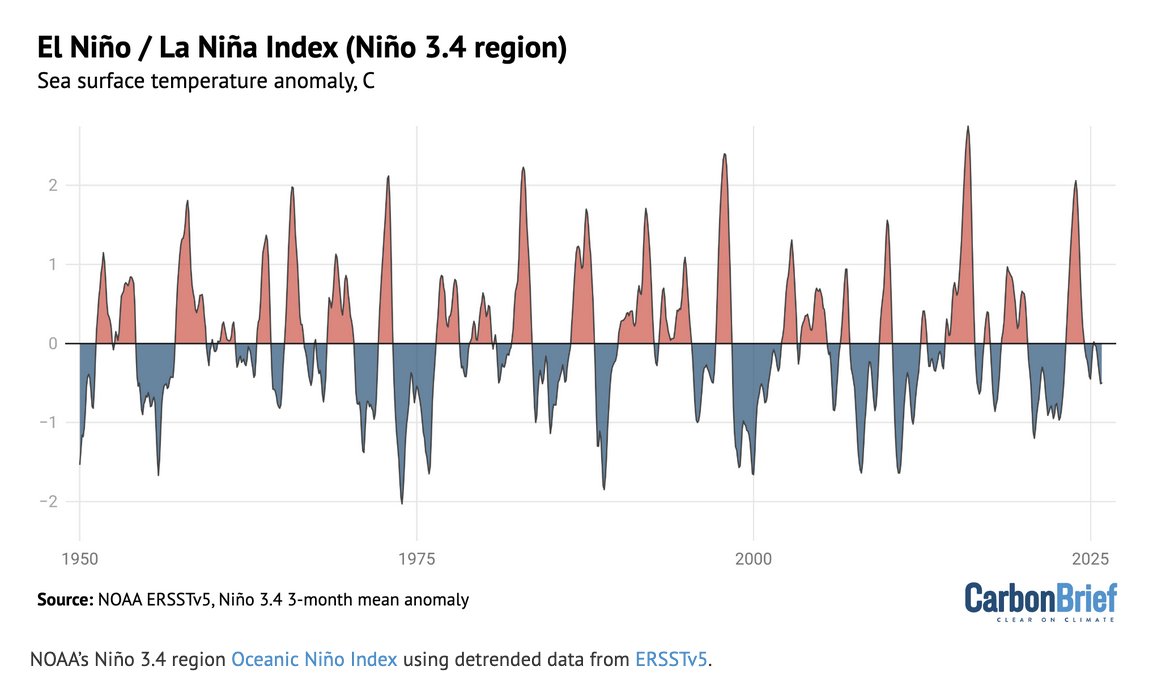

People who deny climate change deny the obvious data showing that warming rates today are faster than at any time since even the deglaciation from the last ice age. They also deny the obvious fact that the upper atmosphere (stratosphere) is cooling because greenhouse gases trap heat in the lower atmosphere (troposphere), warming it and preventing that heat from warming the stratosphere. On top of that, they pretend that their brains operate better than the solutions to the physical equations of the atmosphere solved by the largest computer models in the world. x.com/mzjacobson/sta…

Vance: We want you to make a decision about your future with no outside forces pressuring you or telling you what to do. I am not telling you exactly who to vote for.