Zhoujun (Jorge) Cheng

400 posts

@ChengZhoujun

Ph.D. @UCSanDiego | Scaling RL and agents

🍫 CocoaBench is calling for contributions from the community! Join us and help shape how next-generation agents are evaluated and built🚀✨ #LLM #AI #Agent #CocoaBench More details in the threads 👇

🍫 CocoaBench v1.0 is out! CocoaBench is a benchmark for unified digital agents, built around open-world tasks that require composing 💻 coding, 👀 vision, and 🌐 search. Since our first research preview last December, we have expanded the benchmark substantially with community-contributed tasks and spent months testing and refining the tasks, evaluations, and agent runs.

Some takeaways:
• Even the best agent system reaches only 45.1% on CocoaBench v1.0.
• Coding agents like Codex are already surprisingly strong on general tasks beyond software engineering.
• Stronger agents tend to push more of the work into code.
• Open-source models still lag behind leading frontier models on these general tasks.

👇 More on the website and in the paper #AI #Agents #LLM #Benchmark #CocoaBench

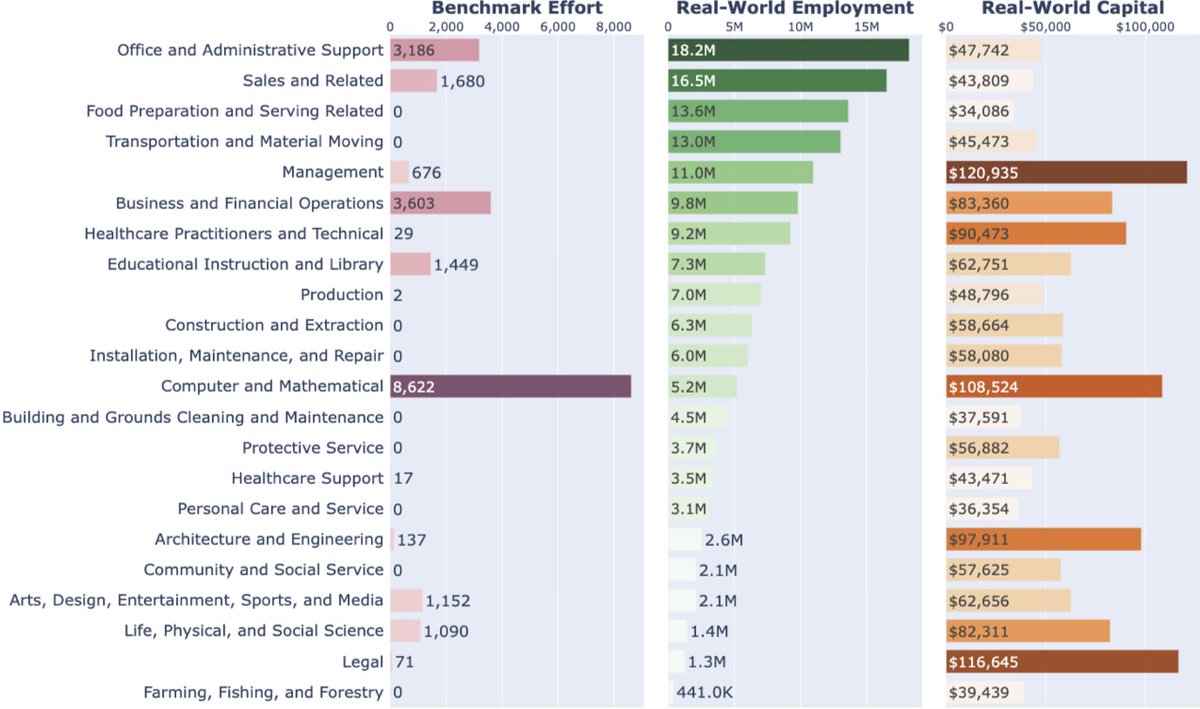

Excited to share that 𝘁𝗵𝗲 𝟱𝘁𝗵 @DL4Code 𝘄𝗼𝗿𝗸𝘀𝗵𝗼𝗽 𝗶𝘀 𝗰𝗼𝗺𝗶𝗻𝗴 𝘁𝗼 #ICML2026🇰🇷. Grateful to everyone who helped make this such an energizing space for the field! Also, a belated update on our position paper 𝗛𝘂𝗺𝗮𝗻𝘀 𝗮𝗿𝗲 𝗠𝗶𝘀𝘀𝗶𝗻𝗴 𝗳𝗿𝗼𝗺 𝗔𝗜 𝗖𝗼𝗱𝗶𝗻𝗴 𝗔𝗴𝗲𝗻𝘁 𝗥𝗲𝘀𝗲𝗮𝗿𝗰𝗵. What I’m especially proud of in the paper is the core argument: the next frontier for coding agents is not just more autonomy, but better human collaboration. As agents get stronger, the real bottleneck is increasingly whether people can align with them, steer them, verify their outputs, and trust them in real workflows. We turn that observation into a concrete research agenda for building coding agents that are genuinely more useful. The seed for this paper came from an early conversation with the awesome @KLieret at #NeurIPS. I later discussed the idea with @jyangballin, which led to further conversations with @ZhiruoW and @Diyi_Yang, and the final paper was led by these folks together with a fantastic group of co-authors. Parts of the argument were also shaped by wonderful discussions at the #DL4C workshops at #ICLR and #NeurIPS last year. Check it out! zorazrw.github.io/files/position…

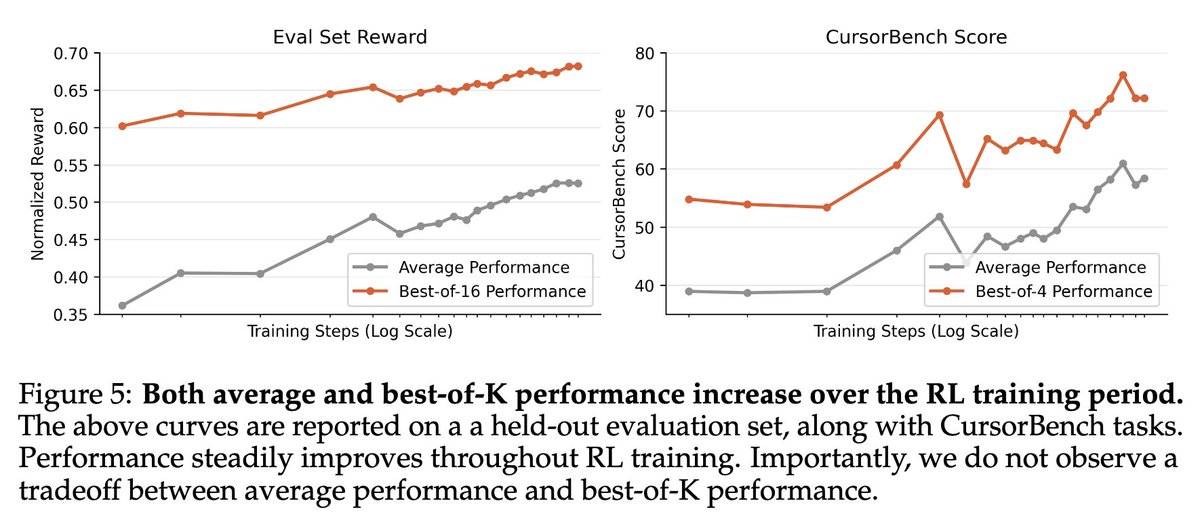

We're releasing a technical report describing how Composer 2 was trained.

In Agent RL, models suffer from Template Collapse. They generate vast, diverse outputs (High Entropy) that lose all meaningful connection to the input prompt (Low Mutual Information). In other words, agents learn different ways to say nothing. 🚀 Introducing RAGEN-v2 -- Here's how we define and fix such silent failure modes in Agent RL. 🧵
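To make the entropy versus mutual information distinction concrete, here is a minimal Python sketch using the standard empirical definitions. It is an illustration only, not the RAGEN-v2 metric or method: the toy `(prompt, template)` pairs and the names `collapsed` and `grounded` are hypothetical, and the actual paper may define or estimate these quantities differently.

```python
import math
from collections import Counter


def entropy(samples):
    """Shannon entropy (in bits) of the empirical distribution of samples."""
    counts = Counter(samples)
    n = len(samples)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


def mutual_information(pairs):
    """Empirical I(X; Y) in bits, computed as H(X) + H(Y) - H(X, Y)."""
    xs = [x for x, _ in pairs]
    ys = [y for _, y in pairs]
    return entropy(xs) + entropy(ys) - entropy(pairs)


# Hypothetical toy data: (prompt, output template) pairs.
# Collapsed policy: every prompt gets the same spread of four templates,
# so the outputs are diverse but carry no information about the prompt.
collapsed = [(p, t) for p in ("p1", "p2") for t in ("a", "b", "c", "d")]

# Grounded policy: which templates appear depends on the prompt.
grounded = [("p1", "a"), ("p1", "b"), ("p1", "a"), ("p1", "b"),
            ("p2", "c"), ("p2", "d"), ("p2", "c"), ("p2", "d")]

for name, pairs in (("collapsed", collapsed), ("grounded", grounded)):
    outputs = [y for _, y in pairs]
    print(name, "H(output) =", entropy(outputs),
          "I(prompt; output) =", mutual_information(pairs))
```

Both toy policies have the same output entropy (2 bits), but only the grounded one has nonzero mutual information with the prompt (1 bit versus 0), which is why output entropy alone would not reveal this kind of failure.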

What if coding agents could build entire virtual worlds? 🌍🏙️ SimWorld makes it possible — enabling agents like Claude Code 🤖 to generate and interact with scenes directly inside an Unreal Engine simulation 🎮 World simulation for embodied agents just became much easier and more accessible 🚀 Stay tuned — more models and capabilities coming soon ⚡️

🚀 Introducing Qwen3-Coder-Next, an open-weight LM built for coding agents & local development. What’s new: 🤖 Scaling agentic training: 800K verifiable tasks + executable envs 📈 Efficiency–Performance Tradeoff: achieves strong results on SWE-Bench Pro with 80B total params and 3B active ✨ Supports OpenClaw, Qwen Code, Claude Code, web dev, browser use, Cline, etc 🤗 Hugging Face: huggingface.co/collections/Qw… 🤖 ModelScope: modelscope.cn/collections/Qw… 📝 Blog: qwen.ai/blog?id=qwen3-… 📄 Tech report: github.com/QwenLM/Qwen3-C…