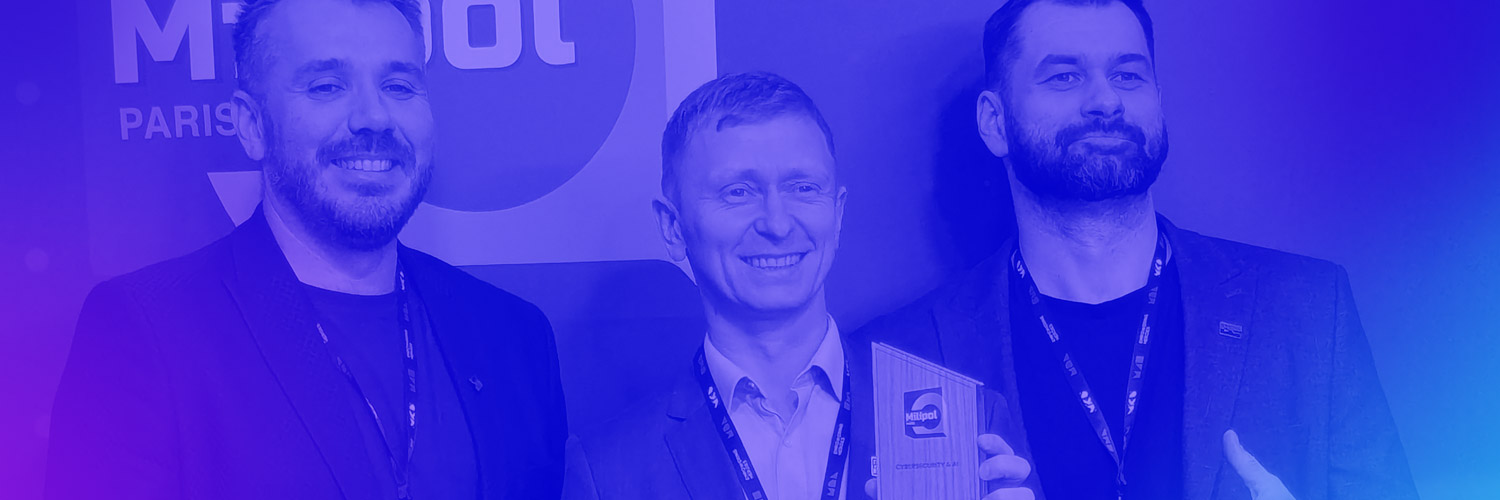

We won the @Milipol_Paris Innovation Award 2025 in Cybersecurity & AI. This sends a clear signal from the world’s leading homeland security stage that #neurosymbolic AI is a promising direction for delivering safe and explainable AI in the field.

While others debate “Prompt Engineering,” we use “Logic Engineering.” We bring mathematical and logical precision to domains where failure is not an option: compliance, national security, infrastructure and autonomous systems.

During our talks in Paris with defence officials and equipment manufacturers, the consensus was clear: black-box AI has hit its breaking point. The demand for transparent, auditable logic has become the industry’s priority.
