Mark Van der Merwe

31 posts

@MarkVanderMerwe

PhD @UMRobotics | Previously: Intern @NASAJPL, Intern @Mila_Quebec, Undergraduate @UUtah. Computer Vision, Robotics, and Machine/Deep Learning.

Joined October 2019

284 Following · 142 Followers

Mark Van der Merwe retweeted

Earlier this year, @NimaFazeli7 received a @NSF CAREER Award.

We took the chance to catch up with Professor Fazeli to learn a bit more about the fascinating research on "intelligent and dexterous robots that seamlessly integrate vision and touch."

Mark Van der Merwe retweeted

Is dynamics model mismatch breaking your robotic safety guarantees? We used Conformal Prediction to construct probabilistically safe trajectories given approximate Gaussian dynamics models.

Learn more at um-arm-lab.github.io/lucca

Presented @wafr_conf & @michigan_AI's Symposium

Mark Van der Merwe retweeted

The testing code for the Video Diffusion Model from our paper, This&That: Language-Gesture Controlled Video Generation for Robot Planning, is now released on GitHub:

github.com/Kiteretsu77/Th…

The rest will be coming soon (working hard on organizing the code😆)

Mark Van der Merwe retweeted

Imagine controlling robots with simple gestures! We've developed a system that lets you point at objects and tell robots to 'move this' or 'close that,' with language-gesture-controlled video generation! Check out our project dubbed "this&that": cfeng16.github.io/this-and-that/

Mark Van der Merwe retweeted

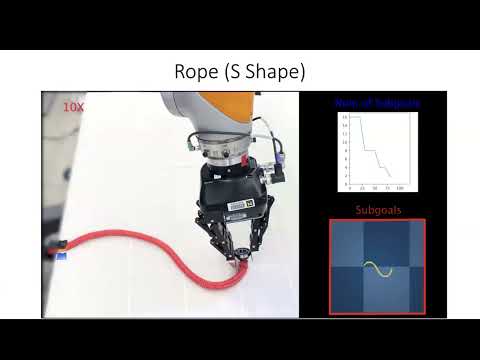

MPC is powerful but doesn’t work well for long-horizon tasks without reference trajectories, which are usually expensive to compute online

Introducing Subgoal Diffuser to generate subgoals at appropriate temporal resolution dynamically to guide MPC

sites.google.com/view/subgoal-d…

🧵↓

Mark Van der Merwe retweeted

Zixuan Huang et al.'s new #ICRA2024 paper uses diffusion to guide MPC for complex manipulation tasks! Subgoal-Diffuser breaks the task down into reachable subgoals to guide MPC, thus avoiding local minima. Paper: arxiv.org/abs/2403.13085 Video: youtube.com/watch?v=M0gmBt…

Mark Van der Merwe retweeted

I successfully defended my PhD! Thank you to my advisor Dmitry Berenson, and my lab mates in the @umicharmlab ! 🎓🔬🤖

If you're interested in hiring me to work on learning & planning for robotic manipulation, send me a message!

Mark Van der Merwe retweeted

How can a 🤖 plan and control tools (e.g., 🧽 🧹) for contact-rich tasks given visual-language inputs? CALAMARI 🦑 shows how we can handle this problem in a very generalizable and data-efficient way via a spatial-action map representation! (1/n)

@corl_conf

Mark Van der Merwe retweeted

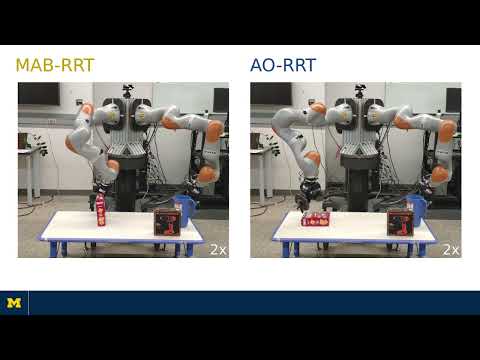

📢A new way to look at motion planning as online learning in Marco Faroni's RA-L paper! *Key idea*: Bias sampling in a Kinodynamic RRT via a non-stationary multi-armed bandit, where arms are clusters of transitions.

Paper: ieeexplore.ieee.org/document/10238… . Video: youtube.com/watch?v=JUfL7I…

Check out our work on detecting contact between a deforming tool and its (unknown) environment! #RSS2023

Nima Fazeli @NimaFazeli7

Home robots need to learn how to use our compliant tools (e.g., sponges). @MarkVanderMerwe's #RSS2023 paper enables simultaneous deformation and contact estimation via implicit representations, paving the way for next-gen dexterous tool-use @umicharmlab @UMRobotics @WiYoungsun

Mark Van der Merwe retweeted

Robots can slide objects on flat surfaces when they are too big/heavy to lift. But what if they cannot push on the side of the object? Xili Yi shows how robots can use top contact to certifiably push the object to any desired configuration #RSS2023 arxiv.org/abs/2305.14289

Mark Van der Merwe retweeted

Close your eyes and pick up two objects, one in each hand. Can you guess their poses just from poking them against each other? Our robots can! Check out MultiSCOPE, Andrea Sipos' paper accepted at RSS 2023: arxiv.org/abs/2305.14204 #RSS2023 @UMRobotics @UMengineering

Mark Van der Merwe retweeted

Tactile pose estimation is difficult when we have only a few contacts on the object. Johnson Zhong's CHSEL uses a Quality-Diversity alg. to produce diverse plausible poses from contact and free space info, outperforming previous work. @NimaFazeli7 #RSS2023 johnsonzhong.me/projects/chsel/

Mark Van der Merwe retweeted

I'll be presenting our paper on FOCUS tomorrow morning at #ICRA2023. Come see how a clever tweak to the fine-tuning process can improve data efficiency for sim2real transfer!

Mark Van der Merwe retweeted

📢 How can robots use compliant tools in contact-rich tasks without explicitly modeling their complex mechanics? @MarkVanderMerwe shows a novel multimodal contact-centric approach #CoRL2022! Paper: arxiv.org/abs/2210.03836 & Page: tinyurl.com/2688pv28 @umicharmlab @UMRobotics

Mark Van der Merwe retweeted

📢 How can we enable robots to dexterously manipulate tools with high resolution and high deformation tactile sensors? Check out our paper arxiv.org/abs/2209.13432 at CoRL 2022! Project Page: mmintlab.com/manipulation-v… @UMRobotics @umicharmlab

Mark Van der Merwe retweeted

📢 Interested in learning to use sight and touch to model deformable objects? Check out our paper VIRDO++ arxiv.org/abs/2210.03701 #CoRL2022 to see how implicit representations seamlessly integrate multimodal sensing in the real world. @WiYoungsun @andyzengtweets @peteflorence
