Professor_blackpill

I might be too much of an optimist, but I just don’t buy the permanent underclass thing. I just think that no matter how smart AI gets, there’s no way a motivated person will wake up each day and be unable to contribute to society.

No way, a product that sucks doesn’t work as expected! idnfinancials.com/news/61918/zuc…

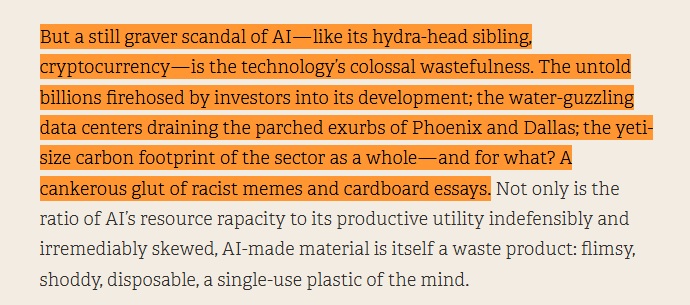

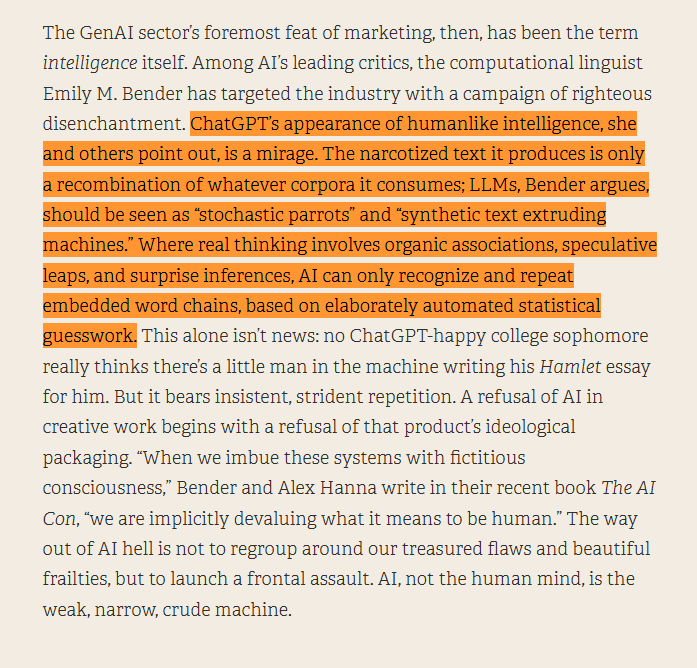

It is kind of interesting: the East Coast magazine types are seemingly intelligent people, seemingly selected through very tight funnels (great universities, great SAT scores); they can often think critically, follow long and established traditions of thought, etc. But here we are, moving at breakneck pace through this unprecedented technological revolution, which by any reasonable consensus has decent odds of literally ending biological life as we know it in all sorts of horrible ways, and all they can think about is that... prose is getting kinda worse along the way? That some writing sounds a little sloppier now? That they are not fans of the style? This is somehow their elephant in the room? What? Is this just what happens when you compound bad epistemic habits for decades? Functional paralysis in otherwise sane minds?

Computer Science went from one of the absolute best degrees to pursue to one of the worst, all within a decade. Absolutely nuts.

Habermas has a surprising place in the intellectual genealogy of the Tech Right. Alex Karp claims he studied with him, which is disputed, but he definitely studied with students of his; Hans-Hermann Hoppe, source of Yarvinite neo-monarchism, was originally a Habermas protégé.

I never saw it from this perspective, and now I’m mad.

🚨🚨 Just hours ago (April 16, 2026): gas pipeline explodes in Haripur, Pakistan. 8 dead (including children), massive fireball engulfs homes. On the very same day, Australia’s Geelong refinery (one of our ONLY 2 left) erupts in flames, slashing fuel output amid the Iran war chaos. Energy infrastructure is burning worldwide. Coincidence, or coordinated attacks hitting us all???

Billionaire hedge fund manager Ray Dalio just told Tucker Carlson that central bank digital currencies are coming: "There will be no privacy... all transactions will be known... and if you're politically disfavored, you could be shut off."

😱 OMG They're not building a currency... They're building a control grid. Watch Catherine Austin Fitts drop truth at Hillsdale College: CBDC = programmable permission slip for your own money. One second it's "convenience"... the next it's total control over what you can buy, when, and from whom. The BIS in Switzerland is coordinating it all. This is financial freedom on the line. Would you accept programmable money? Let me know below and don't forget to share this! 👇