Tensor-Slayer

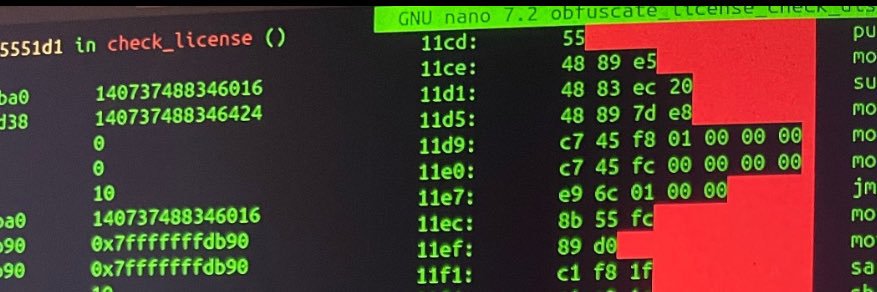

Codex App ported to Linux. Fully functional. How to reverse engineer and replicate it is detailed below:

hmm I sort of disagree, and I am bullish on TML. I think they really, really have the top talent that I admire in the field, e.g. Jeremy and Sam for optimization, Songlin for Attn, Lia for MoE, Andrew for FSDPv2, and a bunch more folks. It's just natural that it takes a while to publish good models:
- dpsk started publishing papers in 2023 and even published dpskv2 (which I think was already amazing) in mid 2024, and nobody cared until dpskv3 and r1
- msh took 10+ months to deliver a first not-bad long-ctx model in 2023, stayed silent for the whole of 2024, and started to catch up gradually in 2025
- qwen only became a much better model than llama with qwen2.5, in mid or late 2024, even though the lab has been around forever

It takes time to get infra and data done, but as long as you have good folks and principled ways of doing science and experiments, sooner or later scaling laws will pay off.

🚨 ANTHROPIC CEO WARNS: THE COMPANY IS NO LONGER SURE CLAUDE ISN’T CONSCIOUS.

And the underlying reason seems to be that Alibaba has unified the entire team under the Qwen umbrella, with everything reporting directly to Alibaba’s CEO. That may have made the leadership above Junyang concerned that he could grow beyond their control, even though they rely heavily on Junyang and Qwen as core partners.

huh. Well. Good job, babus *Alibaba*. Qwen is pretty much over. Ironic, it wasn't even a startup. Burned purely by a lack of ethics.

me stepping down. bye my beloved qwen.

The next wave of enshittification is slowly rolling in, with TUI apps eating more CPU cycles than actual GUI apps. They also waste tons of cycles in the terminal that has to display them. Profile your crap. Optimize it. Test on ancient terminals. It is not that difficult.

All Telegram chatbots can now stream responses to users in real time — great for AI assistants.

Qwen3.5 2B and 0.8B benchmarks: it almost seems like progress on tiny models has come to a stop.

TIL that Linux isn't free 'cause I had to buy the computer to run it 😭. This is the biggest load of cope I have ever heard. I hope Opus gets smoked by DeepSeek v4 and the only people who keep using closed-source models are Windows users.