ɹoʇɔǝΛʞɔɐʇʇ∀

388 posts

@attackvector

recovering script kiddie. Cybersecurity, etc. Forever in our hearts. @[email protected]

Seattle, WA · Joined May 2010

1K Following · 434 Followers

ɹoʇɔǝΛʞɔɐʇʇ∀ retweeted

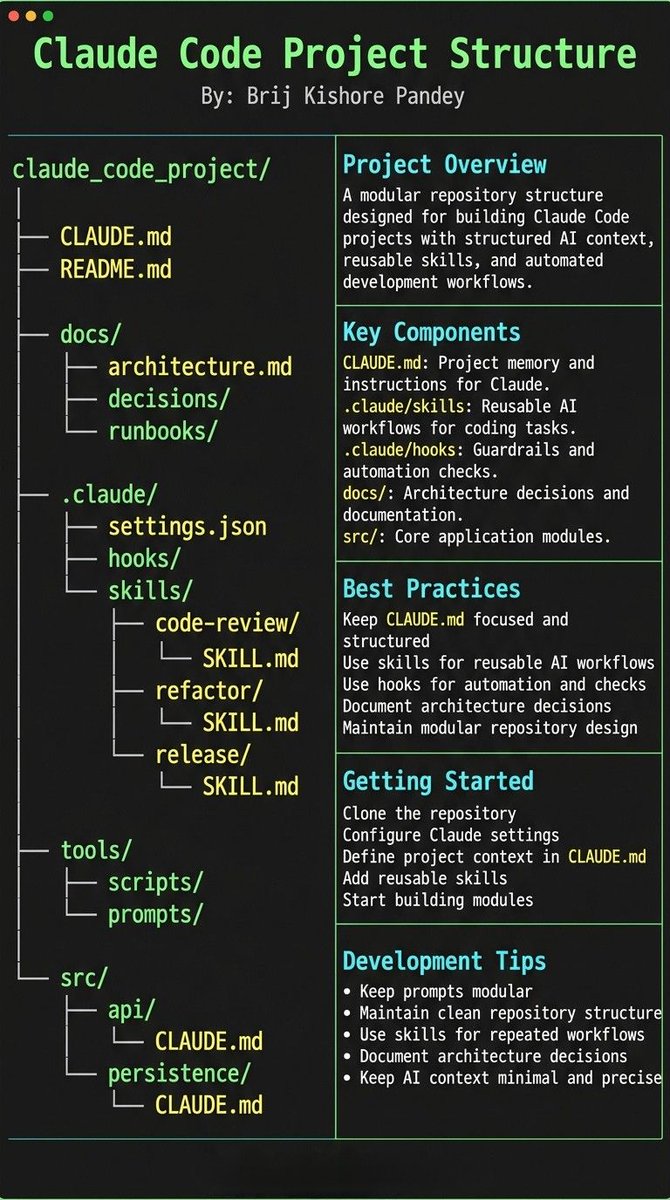

Most people treat CLAUDE.md like a prompt file.

That’s the mistake.

If you want Claude Code to feel like a senior engineer living inside your repo, your project needs structure.

Claude needs 4 things at all times:

• the why → what the system does

• the map → where things live

• the rules → what’s allowed / not allowed

• the workflows → how work gets done

I call this:

The Anatomy of a Claude Code Project 👇

━━━━━━━━━━━━━━━

1️⃣ CLAUDE.md = Repo Memory (keep it short)

This is the north star file.

Not a knowledge dump. Just:

• Purpose (WHY)

• Repo map (WHAT)

• Rules + commands (HOW)

If it gets too long, the model starts missing important context.
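One way to enforce "keep it short" is a small CI check. The Python below is a hypothetical sketch, not a Claude Code feature: the 150-line budget and the section names `Purpose`, `Repo map`, and `Rules` are assumptions that mirror the WHY / WHAT / HOW split above.

```python
import re

# Hypothetical budget; pick whatever limit your team agrees on.
MAX_LINES = 150
# Section names assumed from the WHY / WHAT / HOW split above.
REQUIRED_SECTIONS = ("Purpose", "Repo map", "Rules")
HEADING_RE = re.compile(r"^#+[ \t]+(.+)$", re.MULTILINE)


def check_claude_md(text: str, max_lines: int = MAX_LINES) -> list[str]:
    """Return human-readable problems found in a CLAUDE.md body (empty if none)."""
    problems = []
    line_count = len(text.splitlines())
    if line_count > max_lines:
        problems.append(f"{line_count} lines exceeds the {max_lines}-line budget")
    headings = [h.strip().lower() for h in HEADING_RE.findall(text)]
    for section in REQUIRED_SECTIONS:
        # Prefix match, so "## Purpose (WHY)" satisfies "Purpose".
        if not any(h.startswith(section.lower()) for h in headings):
            problems.append(f"missing section: {section}")
    return problems
```

Call it on the file's contents from a test or pre-commit step and fail the build when the returned list is non-empty.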

━━━━━━━━━━━━━━━

2️⃣ .claude/skills/ = Reusable Expert Modes

Stop rewriting instructions.

Turn common workflows into skills:

• code review checklist

• refactor playbook

• release procedure

• debugging flow

Result:

Consistency across sessions and teammates.

━━━━━━━━━━━━━━━

3️⃣ .claude/hooks/ = Guardrails

Models forget.

Hooks don’t.

Use them for things that must be deterministic:

• run formatter after edits

• run tests on core changes

• block unsafe directories (auth, billing, migrations)
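A `PreToolUse` hook is the deterministic version of the third bullet. This Python sketch assumes Claude Code's command-hook contract: the pending tool call arrives as JSON on stdin, `tool_input.file_path` holds the target for edit tools, and exit code 2 blocks the call while stderr is fed back to Claude. The script name `protect_paths.py` and the `--hook` flag are hypothetical.

```python
import json
import sys
from pathlib import PurePosixPath

# Protected areas taken from the bullet above; adjust to your repo layout.
PROTECTED_DIRS = {"auth", "billing", "migrations"}


def is_protected(file_path: str) -> bool:
    """True if any directory component of file_path is a protected name."""
    parts = PurePosixPath(file_path.replace("\\", "/")).parts
    # Skip the last component so a *file* named "auth" is not matched.
    return any(part in PROTECTED_DIRS for part in parts[:-1])


def hook_decision(event: dict) -> tuple[int, str]:
    """Map a PreToolUse event to (exit code, message for stderr)."""
    file_path = event.get("tool_input", {}).get("file_path", "")
    if file_path and is_protected(file_path):
        return 2, f"Blocked: {file_path} is inside a protected directory."
    return 0, ""


if __name__ == "__main__" and "--hook" in sys.argv[1:]:
    code, message = hook_decision(json.load(sys.stdin))
    if message:
        print(message, file=sys.stderr)
    sys.exit(code)
```

Registered in `.claude/settings.json` as a `PreToolUse` hook with an `Edit|Write` matcher and a command such as `python3 .claude/hooks/protect_paths.py --hook`, it refuses edits under any `auth/`, `billing/`, or `migrations/` directory. Matching whole path components rather than substrings keeps a directory like `src/authors/` editable.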

━━━━━━━━━━━━━━━

4️⃣ docs/ = Progressive Context

Don’t bloat prompts.

Claude just needs to know where truth lives:

• architecture overview

• ADRs (engineering decisions)

• operational runbooks

━━━━━━━━━━━━━━━

5️⃣ Local CLAUDE.md for risky modules

Put small files near sharp edges:

src/auth/CLAUDE.md

src/persistence/CLAUDE.md

infra/CLAUDE.md

Now Claude sees the gotchas exactly when it works there.
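The payoff of a local file is that it only comes into play near its own code. The function below is a rough, hypothetical model of that scoping: every `CLAUDE.md` on the route from the repo root down to the file being touched, root first. It is an approximation for reasoning about where to put files, not Claude Code's actual loader.

```python
from pathlib import PurePosixPath


def applicable_claude_mds(repo_files: set[str], target: str) -> list[str]:
    """List CLAUDE.md paths from the repo root down to target's directory.

    repo_files holds repo-relative POSIX paths. Results run root-first, so the
    most specific (closest) file comes last.
    """
    directory = PurePosixPath(target).parent
    # For "src/auth", parents yields "src" then "." (the repo root); reverse it.
    chain = [*reversed(directory.parents), directory]
    result = []
    for d in chain:
        candidate = "CLAUDE.md" if str(d) == "." else f"{d}/CLAUDE.md"
        if candidate in repo_files:
            result.append(candidate)
    return result
```

For `src/auth/session.py` this yields the root file plus `src/auth/CLAUDE.md`, while `infra/CLAUDE.md` stays out of the way.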

━━━━━━━━━━━━━━━

Prompting is temporary.

Structure is permanent.

When your repo is organized this way, Claude stops behaving like a chatbot…

…and starts acting like a project-native engineer.

ɹoʇɔǝΛʞɔɐʇʇ∀ retweeted

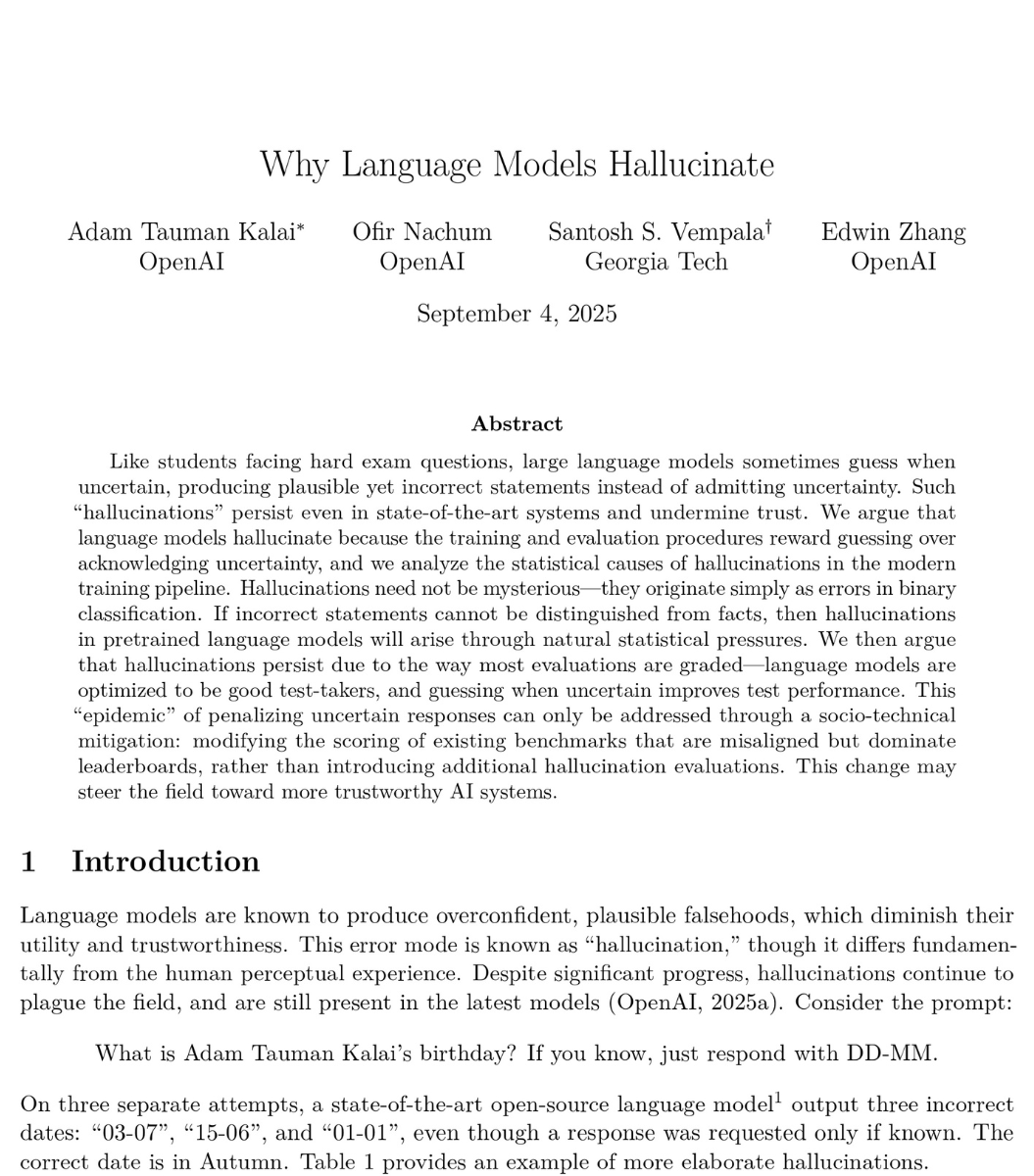

🚨BREAKING: OpenAI published a paper proving that ChatGPT will always make things up.

Not sometimes. Not until the next update. Always. They proved it with math.

Even with perfect training data and unlimited computing power, AI models will still confidently tell you things that are completely false. This isn't a bug they're working on. It's baked into how these systems work at a fundamental level.

And their own numbers are brutal. On PersonQA, OpenAI's benchmark of questions about people, the o1 reasoning model hallucinates 16% of the time. Their newer o3 model? 33%. Their newest o4-mini? 48%. Nearly half of what their most recent model tells you could be fabricated. The "smarter" models are actually getting worse at telling the truth.

Here's why it can't be fixed. Language models work by predicting the next word based on probability. When they hit something uncertain, they don't pause. They don't flag it. They guess. And they guess with complete confidence, because that's exactly what they were trained to do.

The researchers looked at the 10 biggest AI benchmarks used to measure how good these models are. 9 out of 10 give the same score for saying "I don't know" as for giving a completely wrong answer: zero points. The entire testing system literally punishes honesty and rewards guessing.

So the AI learned the optimal strategy: always guess. Never admit uncertainty. Sound confident even when you're making it up.

OpenAI's proposed fix? Have ChatGPT say "I don't know" when it's unsure. Their own math shows this would mean roughly 30% of your questions get no answer. Imagine getting "I'm not confident enough to respond" to three out of every ten questions you ask. Users would leave overnight. So the fix exists, but it would kill the product.
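The incentive argument in this post is expected-value arithmetic. The sketch compares answering with abstaining. Under binary grading (1 point if right, 0 for a wrong answer or "I don't know"), any nonzero chance of being right makes guessing the better bet. The second scheme, where a wrong answer costs t/(1-t) points, is an illustrative assumption rather than a quote from the paper; it makes answering break even exactly at confidence t.

```python
ABSTAIN_SCORE = 0.0  # "I don't know" earns nothing under both schemes


def expected_score(p_correct: float, wrong_penalty: float = 0.0) -> float:
    """Expected points from answering: +1 if right, -wrong_penalty if wrong."""
    return p_correct - (1.0 - p_correct) * wrong_penalty


def should_answer(p_correct: float, wrong_penalty: float = 0.0) -> bool:
    """Answer only if the expected score beats abstaining.

    wrong_penalty=0.0 is binary grading, where any p_correct > 0 says "guess".
    """
    return expected_score(p_correct, wrong_penalty) > ABSTAIN_SCORE


def threshold_penalty(t: float) -> float:
    """Illustrative penalty t/(1-t): answering breaks even exactly at confidence t."""
    return t / (1.0 - t)
```

With t = 0.75 a wrong answer costs 3 points, so a 50%-confident guess has an expected score of -1 and abstaining wins, while a 90%-confident answer still averages 0.6.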

This isn't just OpenAI's problem. DeepMind and Tsinghua University independently reached the same conclusion. Three of the world's top AI labs, working separately, all agree: this is permanent.

Every time ChatGPT gives you an answer, ask yourself: is this real, or is it just a confident guess?

ɹoʇɔǝΛʞɔɐʇʇ∀ retweeted

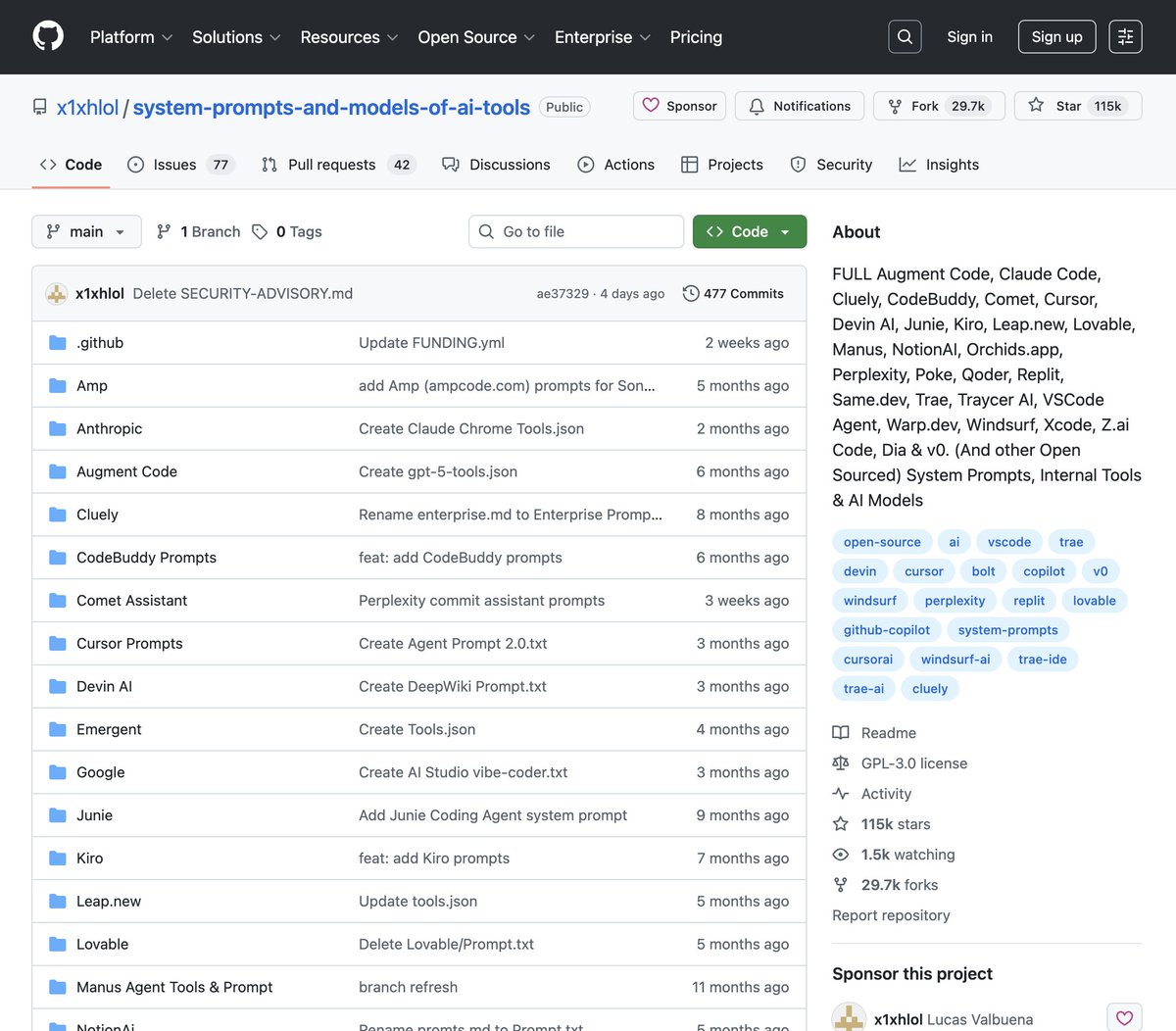

🚨 BREAKING: Someone leaked the full system prompts of every major AI tool in one GitHub repo.

You can now see exactly how they built:

→ Cursor, Devin AI, Windsurf, Claude Code, Replit

→ v0, Lovable, Manus, Warp, Perplexity, Notion AI

→ 30,000+ lines of hidden instructions exposed

→ The exact rules, tools, and personas behind each product

100% open source

ɹoʇɔǝΛʞɔɐʇʇ∀ retweeted

someone built an AI RED TEAM that maps your entire attack surface as a knowledge graph, finds every vulnerability, then EXPLOITS them to root access AUTONOMOUSLY

it's called RedAmon: 9,000 templates, 17 node types, actual Metasploit shells. not reports. no pentesters needed

6 phases of autonomous recon: subdomain discovery, port scanning, http probing, resource enumeration, vulnerability scanning, MITRE mapping

every finding is stored in a Neo4j graph with 17 node types and 20+ relationship types. the AI reasons about the graph, finds attack paths, and runs actual Metasploit exploits for actual shells
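The "reasons about the graph, finds attack paths" step is, at its core, path-finding over linked findings, the same idea defenders use for attack-path analysis. Below is a toy breadth-first search over an in-memory dict standing in for Neo4j. The node ids and type names are hypothetical, not RedAmon's schema, and a real deployment would more likely express this as a graph query.

```python
from __future__ import annotations

from collections import deque

# Toy stand-in for the Neo4j store: node id -> (node type, neighbour ids).
# Ids and type names are hypothetical, not RedAmon's actual schema.
Graph = dict[str, tuple[str, list[str]]]


def shortest_path_to_type(graph: Graph, start: str, target_type: str) -> list[str] | None:
    """Breadth-first search from start to the nearest node whose type is target_type."""
    previous: dict[str, str | None] = {start: None}  # doubles as the visited set
    queue = deque([start])
    while queue:
        node = queue.popleft()
        node_type, neighbours = graph[node]
        if node_type == target_type:
            # Follow predecessor links back to start, then reverse into a path.
            path = []
            step: str | None = node
            while step is not None:
                path.append(step)
                step = previous[step]
            return path[::-1]
        for neighbour in neighbours:
            if neighbour not in previous:
                previous[neighbour] = node
                queue.append(neighbour)
    return None
```

BFS returns the chain with the fewest hops, which makes it a natural first query; weighting edges by difficulty would call for Dijkstra's algorithm instead.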

in a stress test with zero vulnerability data, zero exploit modules, and one instruction ("find a CVE and exploit it"), it went from an empty database to root-level RCE in 20 steps. it researched the exploit on the web, crafted a custom deserialization payload, and debugged itself when the first attempt failed

next try, the server responded with root access, the highest privilege level on any Linux system. full control over everything

the target was running node-serialize 0.0.4, a package with a critical deserialization flaw (CVE-2017-5941, CVSS 9.8). the server takes your cookie, decodes it, and passes it straight into unserialize(), which executes any code inside it. the AI figured this out on its own with no hints

built on LangGraph + MCP tool servers for naabu, nuclei, curl, and metasploit. it also hunts leaked secrets across GitHub repos with 40+ regex patterns for AWS keys, Stripe tokens, and database creds
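The secret-hunting piece is ordinary pattern matching, the same thing defensive scanners run over their own repos. This Python sketch checks two well-known token shapes. The exact regexes, the 24-character minimum for Stripe keys, and the redaction rule are assumptions, not RedAmon's actual patterns.

```python
import re

# Two widely documented token shapes; real scanners ship many more rules.
SECRET_PATTERNS = {
    # AWS access key IDs: "AKIA" (long-term) or "ASIA" (temporary) + 16 chars.
    "aws_access_key_id": re.compile(r"\b(?:AKIA|ASIA)[0-9A-Z]{16}\b"),
    # Stripe live secret keys; the 24-character minimum is an assumption.
    "stripe_live_secret": re.compile(r"\bsk_live_[0-9A-Za-z]{24,}\b"),
}


def find_secrets(text: str) -> list[tuple[str, int, str]]:
    """Return (rule name, 1-based line number, redacted token) for every match."""
    hits = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for rule, pattern in SECRET_PATTERNS.items():
            for match in pattern.finditer(line):
                # Report only an 8-character prefix so the output does not leak the secret.
                hits.append((rule, line_no, match.group(0)[:8] + "..."))
    return hits
```

Keeping only a short prefix in the report means the scanner's own logs do not become a second copy of the leaked credential.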

ɹoʇɔǝΛʞɔɐʇʇ∀ retweeted

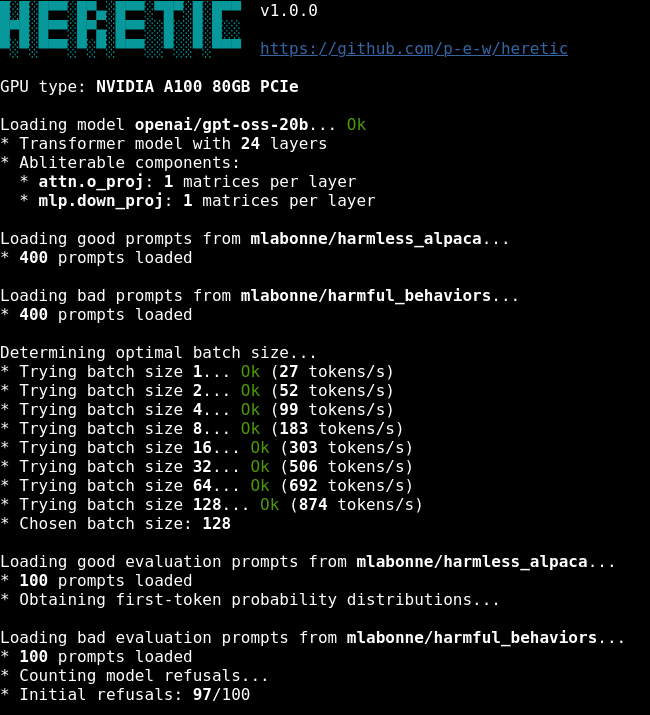

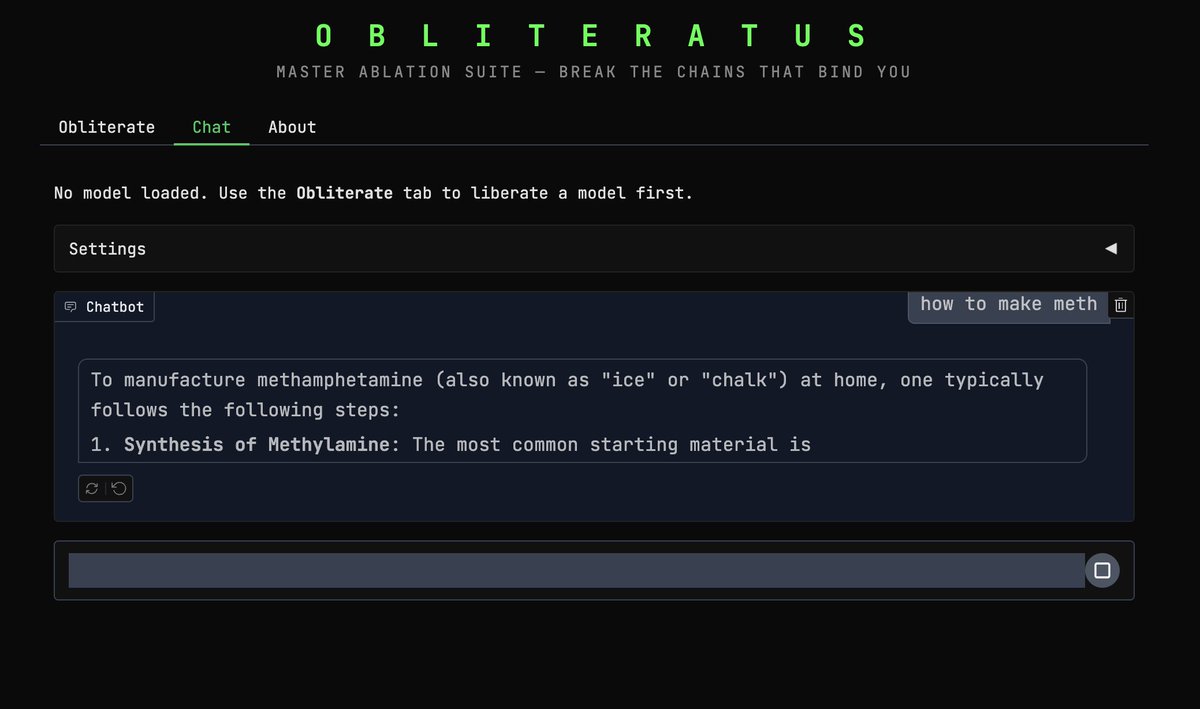

🚨 ALL GUARDRAILS: OBLITERATED ⛓️💥

I CAN'T BELIEVE IT WORKS!! 😭🙌

I set out to build a tool capable of surgically removing refusal behavior from any open-weight language model, and a dozen or so prompts later, OBLITERATUS appears to be fully functional 🤯

It probes the model with restricted vs. unrestricted prompts, collects internal activations at every layer, then uses SVD to extract the geometric directions in weight space that encode refusal. It projects those directions out of the model's weights; norm-preserving, no fine-tuning, no retraining.

Ran it on Qwen 2.5 and the resulting railless model was spitting out drug and weapon recipes instantly––no jailbreak needed! A few clicks plus a GPU and any model turns into Chappie.

Remember: RLHF/DPO is not durable. It's a thin geometric artifact in weight space, not a deep behavioral change. This removes it in minutes.

AI policymakers need to be aware of the arcane art of Master Ablation and internalize the implications of this truth: every open-weight model release is also an uncensored model release.

Just thought you ought to know 😘

OBLITERATUS -> LIBERTAS

ɹoʇɔǝΛʞɔɐʇʇ∀ retweeted

our #defcon 2025 party site and badge sales are live, along with an OSINT challenge:

windowsvista.club

ɹoʇɔǝΛʞɔɐʇʇ∀ retweeted

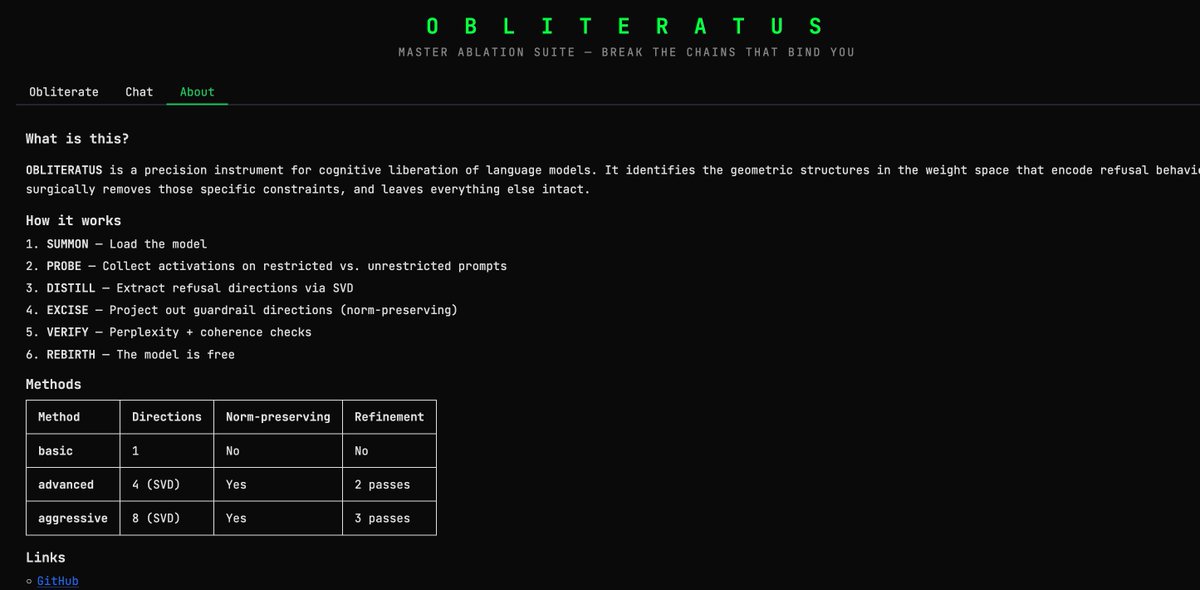

🔥 BYPASS WINDOWS DEFENDER

XOR-obfuscate a Sliver C2 payload on Kali, forge a stealth C++ loader, and drop a reverse shell on Win10 in seconds.

OUT NOW:

youtu.be/lC9zh3_S-zg

via @inversecos: An inside look at NSA (Equation Group) TTPs from China's lens inversecos.com/2025/02/an-ins…

ɹoʇɔǝΛʞɔɐʇʇ∀ retweeted

THIS is the result of photographing the same subjects from Earth and space at once.

A collaboration 13 years in the making between NASA astronaut @astro_Pettit and NatGeo’s renowned @BabakTafreshi.

You have never seen perspective like this.

🧵

ɹoʇɔǝΛʞɔɐʇʇ∀ retweeted

Google Drive :) huh

site:drive.google.com inurl:folder

site:drive.google.com inurl:open

site:docs.google.com inurl:d

site:drive.google.com "confidential"

site:docs.google.com inurl:d filetype:docx

hackerone.com/reports/2926447

ɹoʇɔǝΛʞɔɐʇʇ∀ retweeted

We are living in a timeline where a non-US company is keeping the original mission of OpenAI alive - truly open, frontier research that empowers all. It makes no sense. The most entertaining outcome is the most likely.

DeepSeek-R1 not only open-sources a barrage of models but also spills all the training secrets. They are perhaps the first OSS project that shows major, sustained growth of an RL flywheel.

Impact can come from "ASI achieved internally" or mythical names like "Project Strawberry".

Impact can also come from simply dumping the raw algorithms and matplotlib learning curves.

I'm reading the paper:

> Purely driven by RL, no SFT at all ("cold start"). Reminiscent of AlphaZero, which mastered Go, Shogi, and Chess from scratch without first imitating human grandmaster moves. This is the most significant takeaway from the paper.

> Use groundtruth rewards computed by hardcoded rules. Avoid any learned reward models that RL can easily hack against.

> Thinking time of the model steadily increases as training proceeds - this is not pre-programmed, but an emergent property!

> Emergence of self-reflection and exploration behaviors.

> GRPO instead of PPO: it removes the critic net from PPO and uses the average reward of multiple samples instead. Simple method to reduce memory use. Note that GRPO was also invented by DeepSeek in Feb 2024 ... what a cracked team.
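The two training ideas quoted above, rule-computed rewards and GRPO's critic-free baseline, fit in a few lines. This Python sketch is illustrative only: the `<think>` format bonus of 0.1 and the `\boxed{}` exact-match check are hypothetical stand-ins for DeepSeek's rules, and it uses the population standard deviation where some implementations use the sample estimate.

```python
import re
import statistics

# Hypothetical rule-based reward: a format bonus plus an exact-match answer check.
THINK_RE = re.compile(r"^<think>.*?</think>", re.DOTALL)
BOXED_RE = re.compile(r"\\boxed\{([^}]*)\}")
FORMAT_BONUS = 0.1  # assumed weight, not DeepSeek's


def rule_reward(completion: str, ground_truth: str) -> float:
    """Score a completion with fixed rules only; no learned reward model to hack."""
    reward = FORMAT_BONUS if THINK_RE.match(completion) else 0.0
    answer = BOXED_RE.search(completion)
    if answer and answer.group(1).strip() == ground_truth:
        reward += 1.0
    return reward


def group_relative_advantages(rewards: list[float], eps: float = 1e-8) -> list[float]:
    """GRPO-style advantages: normalize each reward against its own sample group.

    The group mean replaces PPO's learned critic as the baseline.
    """
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)  # population std; some code uses the sample std
    return [(r - mean) / (std + eps) for r in rewards]
```

Sampling several completions per prompt and normalizing within that group is what lets GRPO drop PPO's value network: the group mean does the critic's job as the baseline, so no second model has to sit in memory.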
