Giorgio Robino

@solyarisoftware

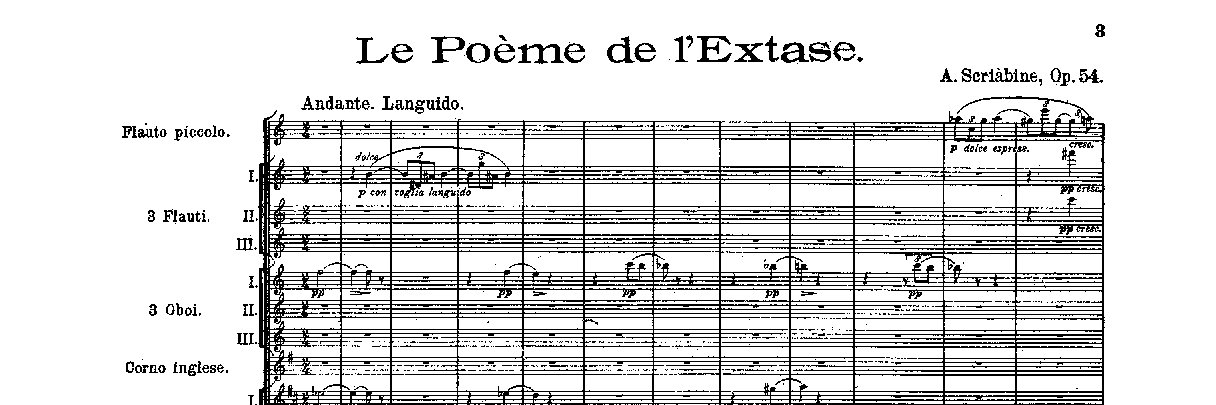

Conversational LLM-based Applications Specialist @almawave | Former ITD-CNR Researcher | Soundscapes (Orchestral) Composer.

Today we’re releasing Qwen-Scope 🔭, an open suite of sparse autoencoders for the Qwen model family. It turns SAE features into practical tools:

🎯 Inference: Steer model outputs by directly manipulating internal features, no prompt engineering needed
📂 Data: Classify & synthesize targeted data with minimal seed examples, boosting long-tail capabilities
🏋️ Training: Trace code-switching & repetitive generation back to their source, fix them at the root
📊 Evaluation: Analyze feature activation patterns to select smarter benchmarks and cut redundancy

We hope the community uses Qwen-Scope to uncover new mechanisms inside Qwen models and build applications beyond what we explored. Excited to see what you build! 🚀

🔗🔗 Blog: qwen.ai/blog?id=qwen-s…
HuggingFace: huggingface.co/collections/Qw…
ModelScope: modelscope.cn/collections/Qw…
Technical Report: …anwen-res.oss-accelerate.aliyuncs.com/qwen-scope/Qwe…

Last week, we introduced Ling-2.6-1T. Today, Ling-2.6-1T is officially an open model~ 🤗

1T total parameters · 63B active parameters

We bring value to developers by making it easier to test, deploy, customize, and build. It is optimized for token efficiency to meet real production needs:

• Lower token overhead: strong intelligence without long reasoning traces
• Reliable multi-step execution: better instruction, tool, context, and workflow control
• Production-ready deployment: from code generation to bug fixing, with broad agent framework compatibility

A sneak peek into the agentic capability in @opencode