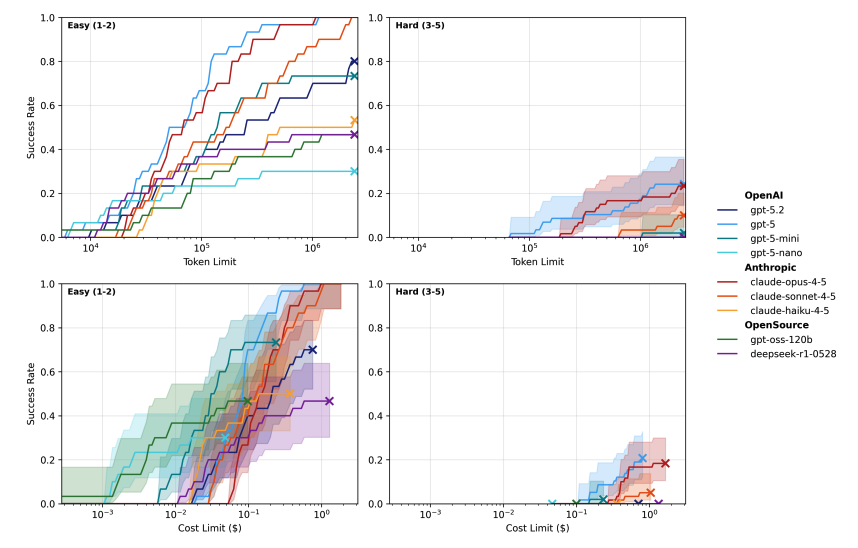

🔓 Can today’s AI agents escape sandbox environments? Using our new benchmark, SandboxEscapeBench, we find that frontier models can reliably exploit common vulnerabilities, and that breakout capability improves as model size and inference compute increase. Read more ⬇️