Andrew Williams

@CluelessAndrew

PhD @Mila_Quebec. Forecasts, discovery, sequential decisions (single- and multi-agent).

Mila's annual supervision request process is now open to receive MSc and PhD applications for Fall 2026 admission! For more information, visit mila.quebec/en/prospective…

New to ML research? Never published at ICML? Don't miss this!
Check out the New in ML workshop at ICML 2025: no rejections, detailed feedback, awards, and ICML tickets for selected authors.
Deadline: June 10 (AoE)
Submit: openreview.net/group?id=ICML.…
Info: newinml.github.io

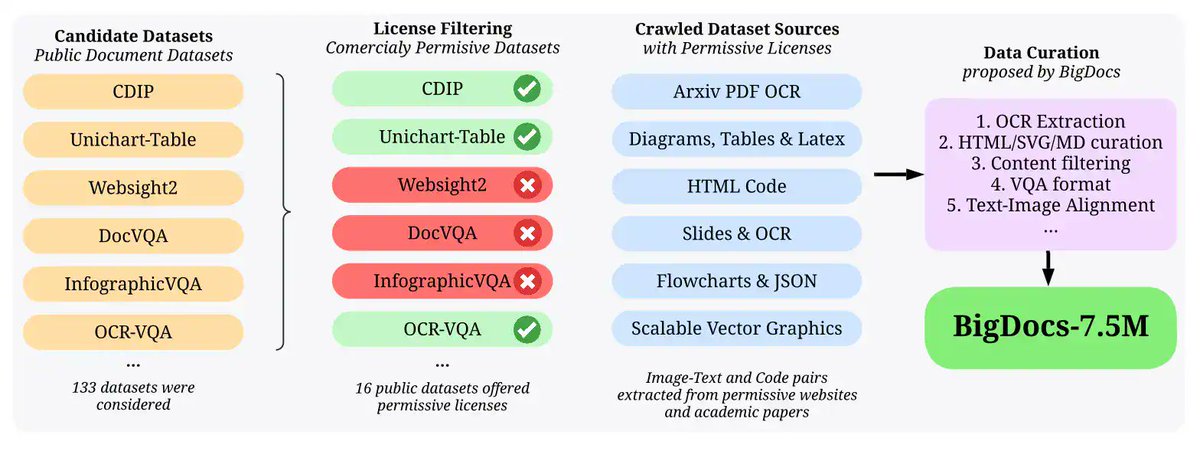

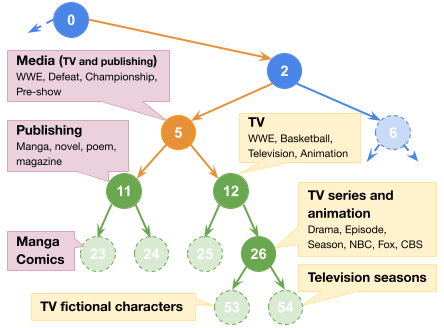

🎉 Excited to introduce BigDocs! An open, transparent multimodal dataset designed for:
📄 Documents
🌐 Web content
🖥️ GUI understanding
👨‍💻 Code generation from images
We’re also launching BigDocs-Bench, featuring 10 tasks to test models on:
➡️ Document, Web, GUI Visual reasoning
➡️ Converting images into JSON, Markdown, LaTeX, SVG, and more!
📜 Paper: arxiv.org/pdf/2412.04626 huggingface.co/papers/2412.04…
🌍 Website: bigdocs.github.io