fractalitty retweeted

RESEARCHERS JUST BUILT AN AI MODEL TRAINED ONLY ON TEXT FROM BEFORE 1931

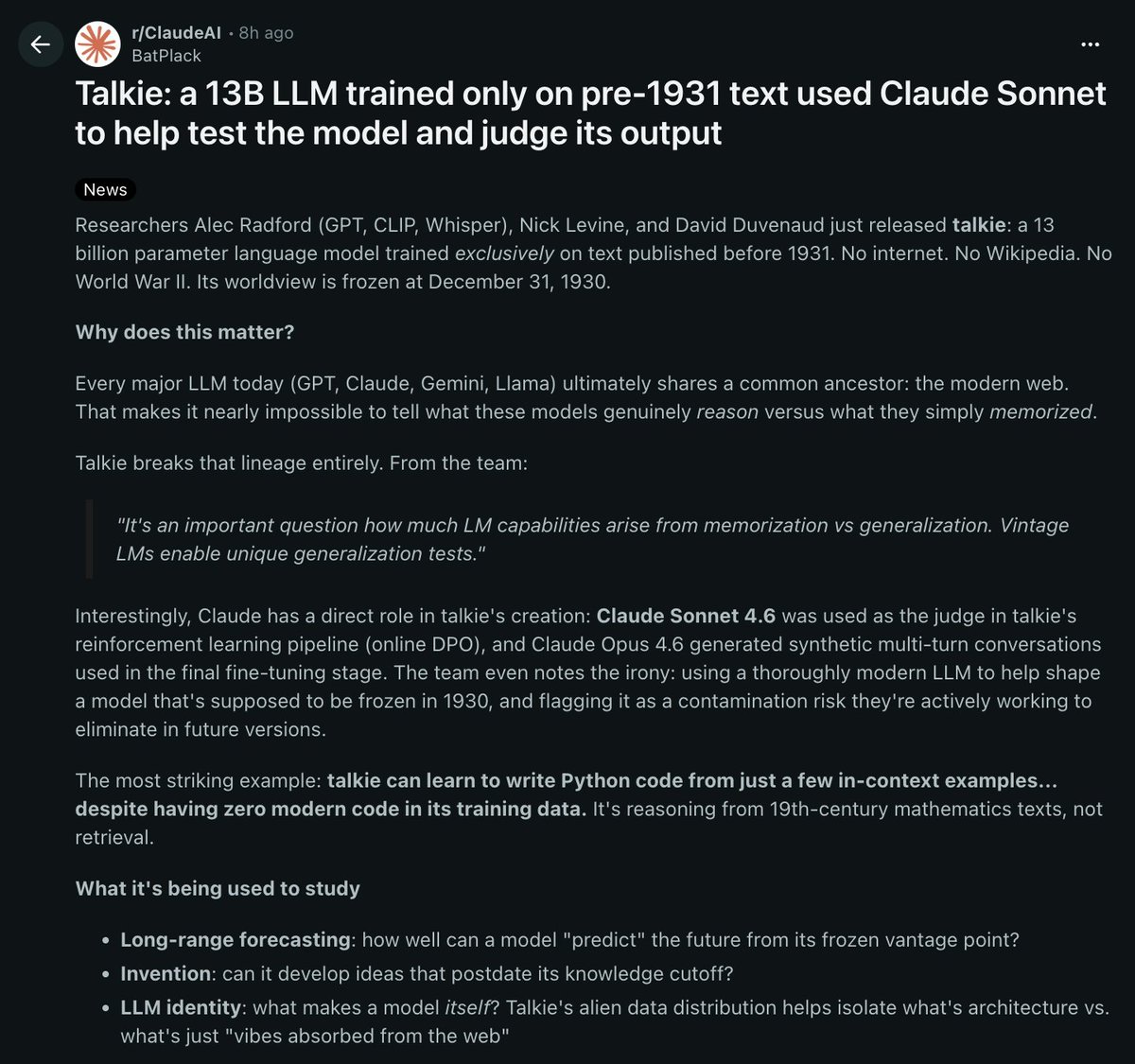

it's called talkie. 13 billion parameters, trained exclusively on text published before december 31, 1930

its worldview is completely frozen in time
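a rough sketch of what a date cutoff like that looks like in practice. this is not the team's actual pipeline: the record layout, the `published` field, and the `is_vintage` helper are all assumptions made up for illustration

```python
from datetime import date

# cutoff stated in the post: text published before december 31, 1930
CUTOFF = date(1930, 12, 31)

# hypothetical corpus records; titles and dates are invented for the example
docs = [
    {"title": "old algebra textbook", "published": date(1899, 5, 1)},
    {"title": "late 1930 newspaper", "published": date(1930, 11, 2)},
    {"title": "1950s computing manual", "published": date(1955, 3, 9)},
]


def is_vintage(doc, cutoff=CUTOFF):
    """Keep a document only if it was published strictly before the cutoff."""
    return doc["published"] < cutoff


vintage = [d for d in docs if is_vintage(d)]
print([d["title"] for d in vintage])
```

anything dated on or after the cutoff is dropped, so the model never sees it during pretraining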

the reason this matters: every major AI model today (GPT, claude, gemini, llama) was trained on the modern web.

that makes it almost impossible to tell if these models actually reason or if they just memorized the answers from their training data

talkie is built to break that: its pretraining text contains no modern information, so it can't have memorized modern answers

the crazy part:

talkie can learn to write python code from just a few examples you show it in the prompt. despite having ZERO modern code in its training data.

it's figuring out programming from pre-1931 mathematics texts. that's ACTUAL reasoning
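for anyone wondering what "a few examples in the prompt" means, here's a minimal sketch of few-shot prompting. the example tasks, the `Task:` / `Program:` layout, and the `build_few_shot_prompt` helper are assumptions for illustration, not the format the talkie team used

```python
# hypothetical worked examples; not taken from the talkie release
EXAMPLES = [
    ("add two numbers", "def add(a, b):\n    return a + b"),
    ("square a number", "def square(x):\n    return x * x"),
]


def build_few_shot_prompt(examples, task):
    """Join worked (description, code) pairs, then leave the final answer open."""
    parts = []
    for description, code in examples:
        parts.append(f"Task: {description}\nProgram:\n{code}\n")
    # the last block has no program: the model is expected to continue from here
    parts.append(f"Task: {task}\nProgram:\n")
    return "\n".join(parts)


prompt = build_few_shot_prompt(EXAMPLES, "double a number")
print(prompt)
```

the model only sees this text and has to continue it. no weights are updated, so whatever it writes for the last task comes from the pattern in the prompt plus what it learned in pretraining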

the catch: claude sonnet 4.6 was used as the judge in talkie's reinforcement learning pipeline, and claude opus 4.6 generated the synthetic conversations used in fine-tuning. so a modern AI helped train a model that's supposed to be frozen in 1930

the team already flagged this as a contamination risk they want to eliminate in future versions

what they're using it to study:

> long range forecasting. how well can a model "predict" the future from a frozen vantage point

> invention. can it develop ideas that didn't exist until after its knowledge cutoff

> LLM identity. what makes a model itself vs what's just patterns absorbed from the web

alec radford built this. the same guy behind GPT, CLIP, and whisper

both models are open source on hugging face.

they're already planning a GPT-3 scale vintage model later this year

an AI that has never seen the modern world can still reason its way to writing code.

THAT alone tells you more about intelligence than any benchmark ever will
