Knowledge Foundry retweeted

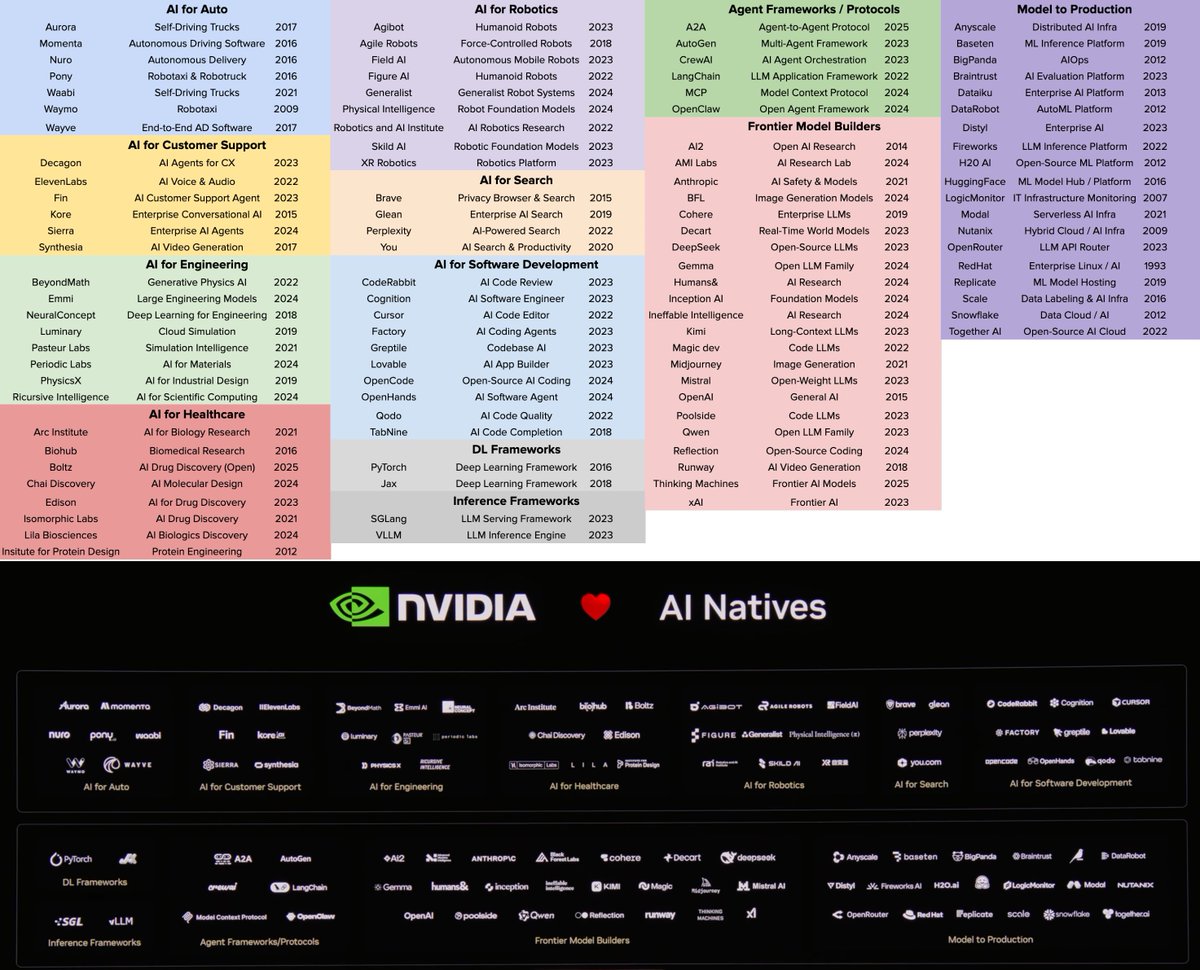

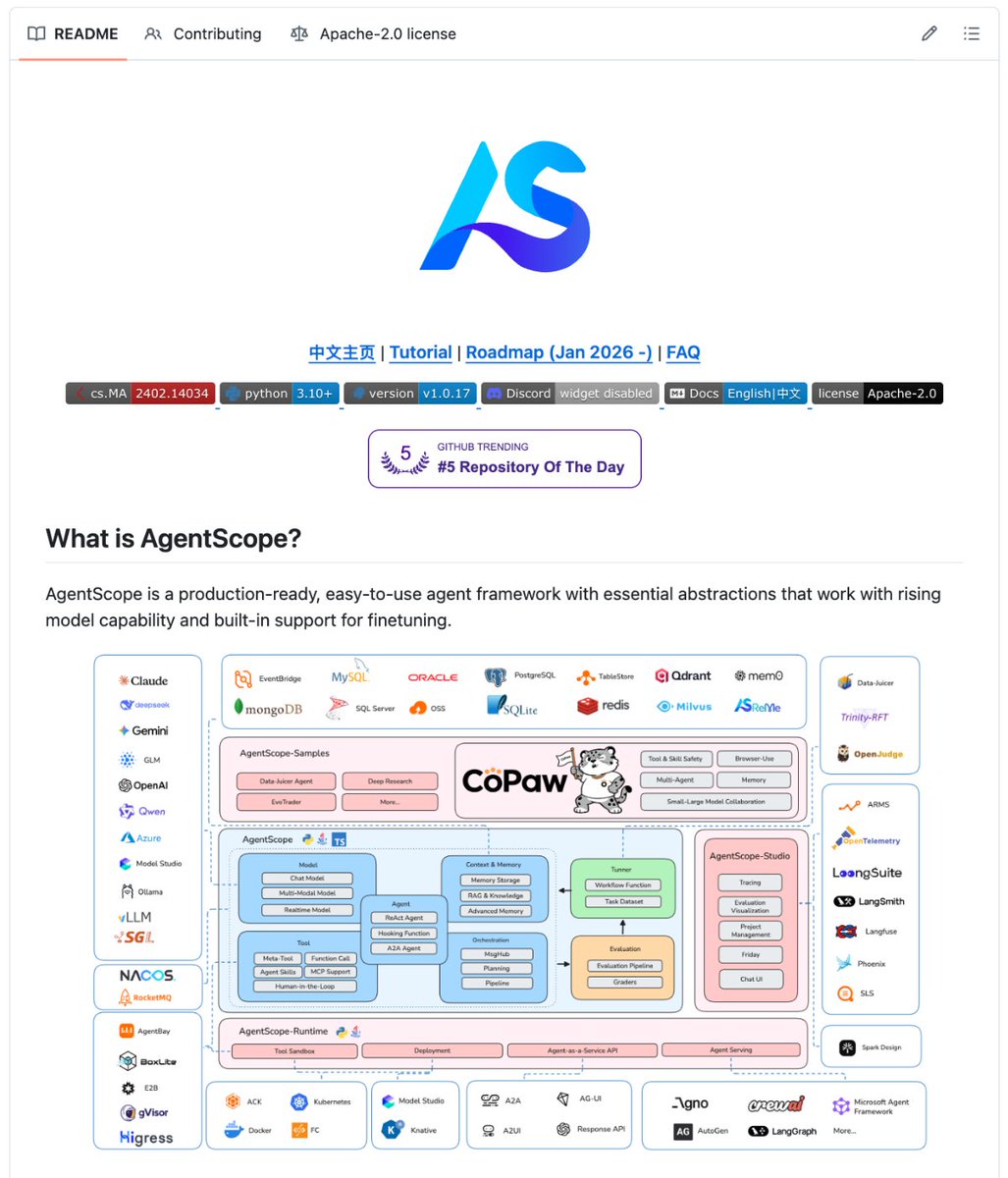

🚨 BREAKING: Alibaba just released a Python framework for building AI agents. 100% OPEN SOURCE.

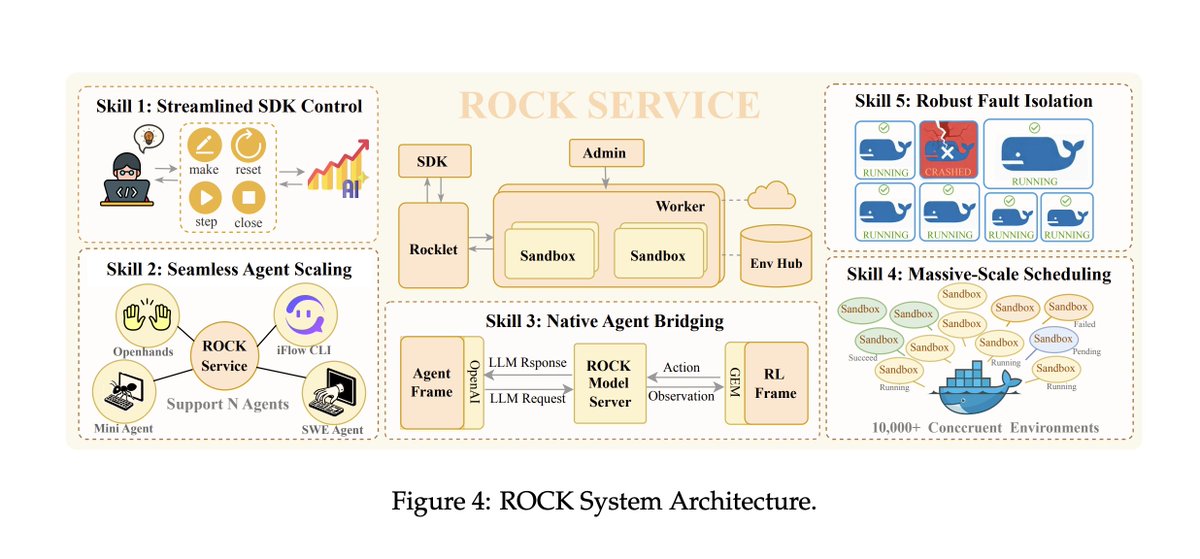

It has visual agent design, MCP tools, memory, RAG, and reasoning. All built in. All working together.

It's called AgentScope.

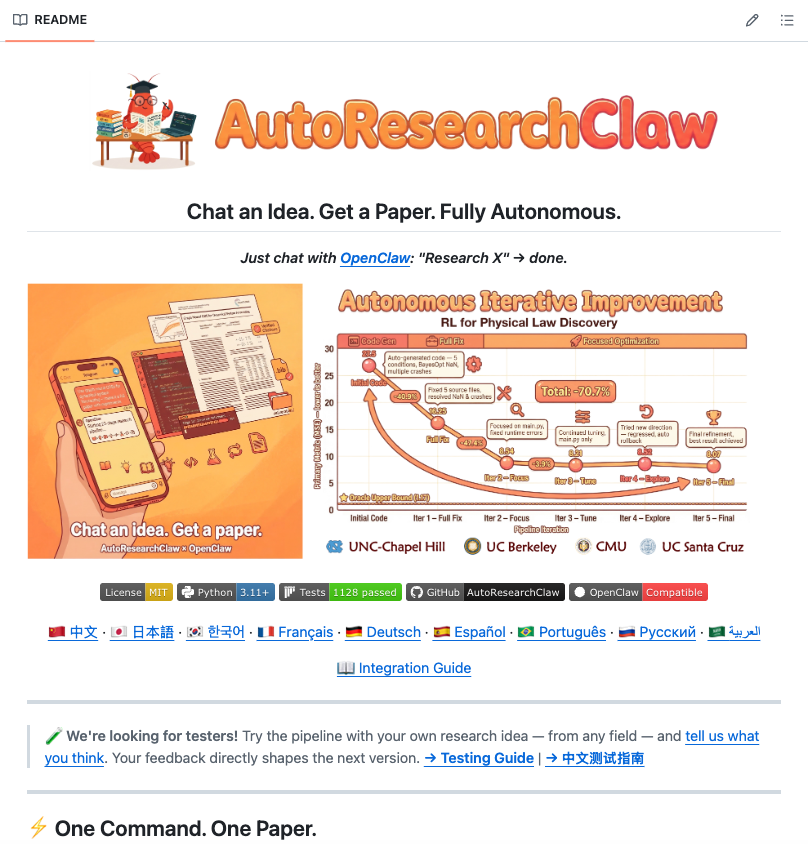

You describe your agent system. It builds the architecture, wires the tools, and runs the whole thing. You come back and there's a working multi-agent pipeline. Not a prototype. Not a demo. The actual system.

Not a wrapper.

Not a chatbot builder.

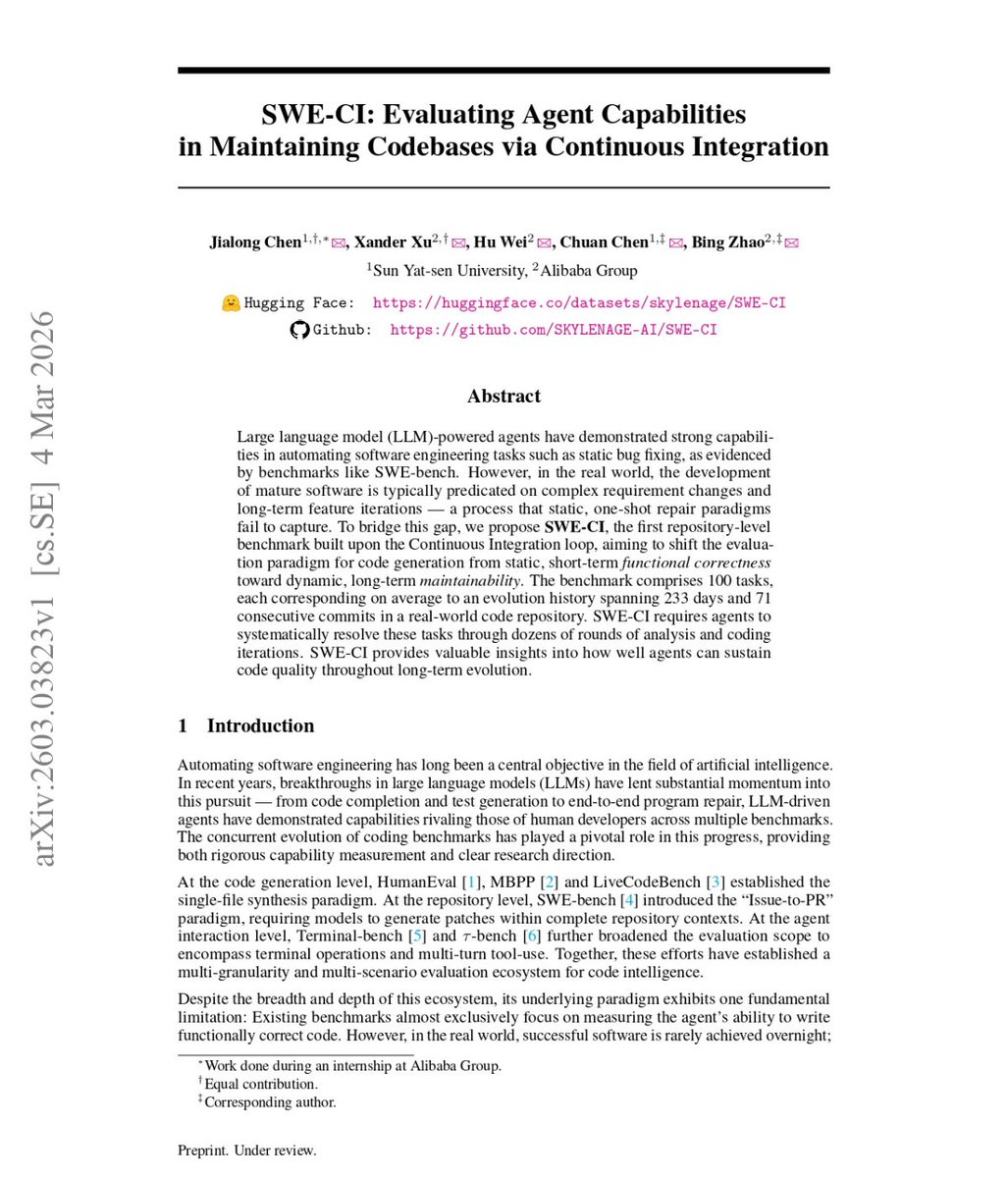

A full Agent-Oriented Programming framework that thinks in agents from the ground up.

Here's what it does out of the box:

→ Visual agent builder so you design your entire system before writing a single line of code

→ Native MCP tool support, so you can plug external tools directly into any agent in your pipeline

→ Built-in memory so every agent remembers context, decisions, and history across sessions

→ RAG pipeline ready to connect your own documents, databases, and knowledge bases

→ Reasoning modules that let agents plan, reflect, and self-correct without human input

→ Multi-agent coordination so your agents collaborate as a system, not a pile of isolated API calls
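Here's a rough sketch of what "tools plus memory" means for a single agent, in plain Python. This is not AgentScope's API: `ToyAgent`, `register_tool`, `act`, and `word_count` are made-up names for illustration only, and a real agent would call a model to pick the tool.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class ToyAgent:
    """Hypothetical agent: a name, a tool registry, and a running memory."""
    name: str
    tools: Dict[str, Callable[[str], str]] = field(default_factory=dict)
    memory: List[str] = field(default_factory=list)

    def register_tool(self, tool_name: str, fn: Callable[[str], str]) -> None:
        # Attach an external function to this agent under a lookup name.
        self.tools[tool_name] = fn

    def act(self, tool_name: str, argument: str) -> str:
        # Run the named tool and record the call so later steps can see it.
        if tool_name not in self.tools:
            raise KeyError(f"{self.name} has no tool named {tool_name!r}")
        result = self.tools[tool_name](argument)
        self.memory.append(f"{tool_name}({argument}) -> {result}")
        return result


def word_count(text: str) -> str:
    # Stand-in for an external tool; returns the number of whitespace-separated words.
    return str(len(text.split()))


agent = ToyAgent(name="researcher")
agent.register_tool("word_count", word_count)
answer = agent.act("word_count", "agents remember what they did")
print(answer)        # result of the tool call
print(agent.memory)  # one entry per tool call made so far
```

The memory here is just a list that lives for one process. Keeping it across sessions, as the post describes, would need some kind of persistent store.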

Here's how it thinks:

You define your goal. AgentScope maps the agent roles. Each agent gets its tools, its memory, its reasoning layer. They coordinate. Results flow back up. You get a finished output.

A single complex task might route through a planner agent, a researcher agent, a coder agent, and a critic agent, each doing its job, then converge into one clean deliverable.
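The planner → researcher → coder → critic flow can be sketched as a simple sequential hand-off. This is a toy in plain Python, not AgentScope code: the four role functions, `run_pipeline`, and `PIPELINE` are hypothetical names, and each "agent" just appends a string where a real one would call a model.

```python
from typing import Callable, List, Tuple

# Each stage is a (role name, handler) pair; the handler takes the previous output.
Stage = Tuple[str, Callable[[str], str]]


def planner(task: str) -> str:
    return f"plan for: {task}"


def researcher(plan: str) -> str:
    return f"{plan} | notes gathered"


def coder(notes: str) -> str:
    return f"{notes} | draft code"


def critic(draft: str) -> str:
    return f"{draft} | reviewed"


def run_pipeline(task: str, stages: List[Stage]) -> Tuple[str, List[Tuple[str, str]]]:
    """Pass the task through every stage in order, keeping a per-role trace."""
    trace: List[Tuple[str, str]] = []
    message = task
    for role, handler in stages:
        message = handler(message)
        trace.append((role, message))
    return message, trace


PIPELINE: List[Stage] = [
    ("planner", planner),
    ("researcher", researcher),
    ("coder", coder),
    ("critic", critic),
]

final, trace = run_pipeline("summarize a paper", PIPELINE)
print(final)
for role, output in trace:
    print(f"{role}: {output}")
```

A fixed list of stages is the simplest possible routing. A real multi-agent system might let the critic send work back to the coder, or let the planner choose which roles run at all.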

Here's the wildest part:

AgentScope is built by Alibaba, the company behind Qwen. They didn't assemble this from existing pieces. They designed the entire framework from first principles around how agents actually need to think, remember, and work together.

Most frameworks give you building blocks. AgentScope gives you an architecture.

The community has already started plugging it into data pipelines, research workflows, and full automation systems the team never planned for.

100% Open Source. Apache 2.0 License.
