Michael Cho - Rbt/Acc



@micoolcho

I ❤️ robots, cheap hardware, steam engines, XGBoost, Liverpool FC & SG 🇸🇬 | Plane crash survivor | Building @BitRobotNetwork @frodobots

First blog post up on Robotics Simulation Infrastructure! I give a high-level overview, followed by an elementary example of better infrastructure for pose management. Link in thread below

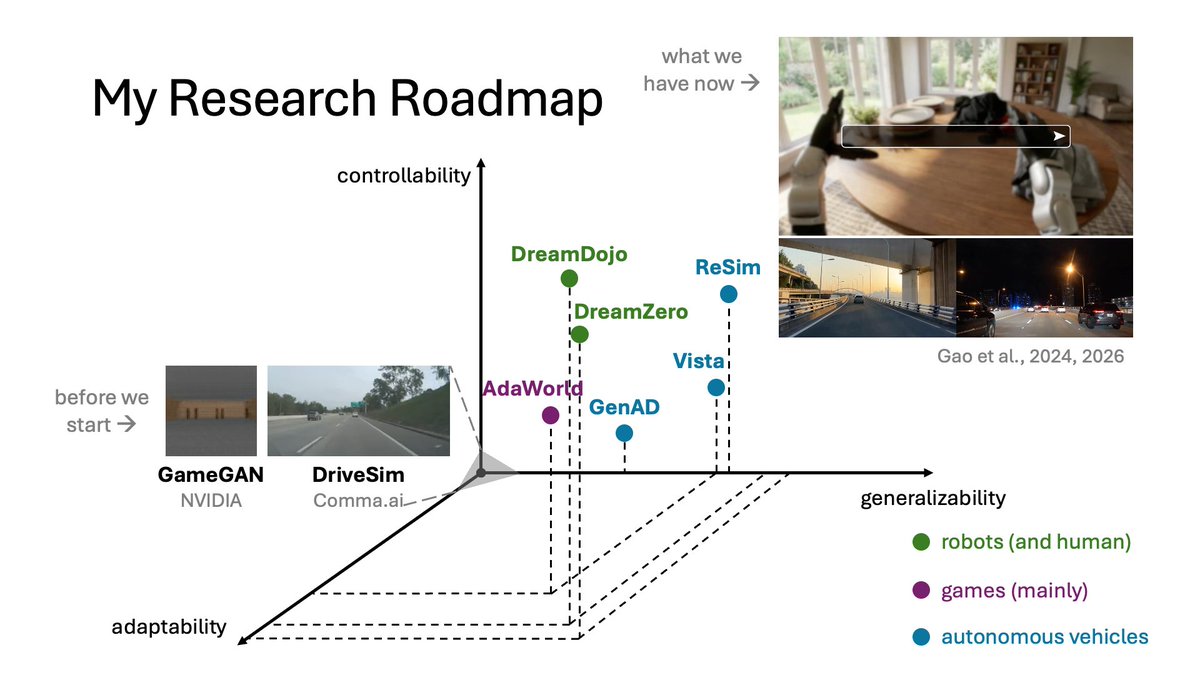

Launching my research group, MAGIC (Manipulation and General Intelligence Control) Lab @NUSComputing, Singapore! We focus on building the next generation of human-centric models for robotic manipulation — deployable safely, reliably, and easily in the real world. Our research spans MLLM reasoning, 3D vision, robot learning, simulation, dexterous manipulation, and cross-embodiment learning. Interested in joining? Sign up here and I'll send a reminder email: forms.gle/oJPLR2pLTt8kLC…

**One of the most exciting shifts in robotics right now is that robots are learning not only from data, but also from “imagined” futures. ✨World models are making this possible, and the field is moving incredibly fast.** World models, predictive representations of how environments evolve under actions, are quickly becoming one of the central building blocks of modern robotics. They allow robots not only to act, but also to imagine, predict, plan, simulate, and evaluate future outcomes before taking actions in the real world. What makes this field especially exciting is how rapidly it is evolving. In a short time, we have seen the rise of foundation-scale robotic video generation, controllable simulation, learned physics, and world-guided robot policies. At the same time, the literature has become highly fragmented across architectures, paradigms, and embodied applications. To help the community keep up, our MARS lab organized and led a comprehensive survey together with an amazing group of researchers, including @HaoranGeng2, @ZeYanjie, @pabbeel, @JitendraMalikCV, @jiajunwu_cs, @du_yilun, @liuzhuang1234, @mapo1, @philiptorr, @oier_mees, and Tatsuya Harada, across @UCBerkeley, @Stanford, @Harvard, @Princeton, @ETH, @UniofOxford, @UTokyo_News, and @MSFTResearch. The survey reviews how world models are used for robot policy learning, planning, reinforcement learning, simulation, navigation, autonomous driving, and large-scale embodied video generation, and it also summarizes datasets, benchmarks, evaluation protocols, and future research directions. 📖 “World Model for Robot Learning: A Comprehensive Survey” Paper: arxiv.org/abs/2605.00080 Project: ntumars.github.io/wm-robot-surve… Updated GitHub: github.com/NTUMARS/Awesom… We will continuously maintain the repository to track newly emerging papers, benchmarks, and resources for the community. #EmbodiedAI #RobotLearning #WorldModel #PhysicalAI #Robotics #FoundationModels

SONIC is now open-source! Generalist whole-body teleoperation for EVERYONE! Our team has long been building comprehensive pipelines for whole-body control, kinematic planning, and teleoperation, and we will share all of them. This will be a continuous update: the inference code and model are already available, and the training code and GR00T integration are coming soon! Code: github.com/NVlabs/GR00T-W… Docs: nvlabs.github.io/GR00T-WholeBod… Site: nvlabs.github.io/GEAR-SONIC/

Training robot foundation models faces two key hurdles: how to get enough data to train an effective model, and how to make sure that new skills can be acquired quickly. The team at @RhodaAI believes that the answer is training Direct Video Action models from web data. Web data is plentiful, to the point where Rhoda can train their base model on hundreds of years of video data. Then, with the addition of robot data, they can quickly adapt it to new tasks with as little as 20 hours of in-domain data, performing complex, multi-step manipulation tasks with their purpose-built video foundation model. @tongzhou_mu, @ericryanchan, and @changanvr joined us to talk more about their approach. Watch Episode #79 of RoboPapers, with @micoolcho, @chris_j_paxton, and @DJiafei, to learn more!


We are back. After one year of quiet building. Introducing GENE-26.5, our first robotic brain that takes a major step toward human-level capability. For years, robotics has struggled to learn from the world’s largest and most valuable data source: humans. Solving it means rethinking the whole stack from the ground up: a robotics-native foundation model; a 1:1 human-like robotic hand; a noninvasive data-collection glove for motion, force, and touch; and a simulator that turns weeks of experiments into minutes. GENE-26.5 is trained across language, vision, proprioception, tactile, and action. We designed a set of tasks to test how far we can go with this new paradigm. Fully autonomous, 1x speed, one model, same weights. (Enjoy with sound on.) We are approaching the endgame for robotics. And this is just the beginning.

Robotics has changed dramatically over the last eight years. @xiao_ted has been at the cutting edge of robot learning throughout this period, spending those eight years at Google Brain/Google DeepMind, and he has identified three eras of robot learning: the Era of Existence Proofs (trying different methods like QT-Opt and on-robot RL); the Era of Foundation Models (transitioning to data collection and clean objectives, i.e., supervised learning); and the Era of Scaling (orders of magnitude more data and larger models, enabling reasoning, long-horizon actions, and cross-embodiment transfer). Watch Episode 78 of RoboPapers, with @micoolcho and @DJiafei, to learn more!

Hear what we at @DynaRobotics learnt from the bitter lesson over the past 10 months in production.
