Built Different Certified Elite

775 posts

Pinned Tweet

SpaceX ticker should be $SEXX Elon @elonmusk $TSLA

Had to take a break from @ChatCharge prep and go pick up this beauty! Got it home and immediately packed it to the gills with event materials ready to head out first thing in the morning! Fingers crossed no rock chips before we get it back and have PPF applied!

This baby is going to look good in black and white photos!

@chattybird0306

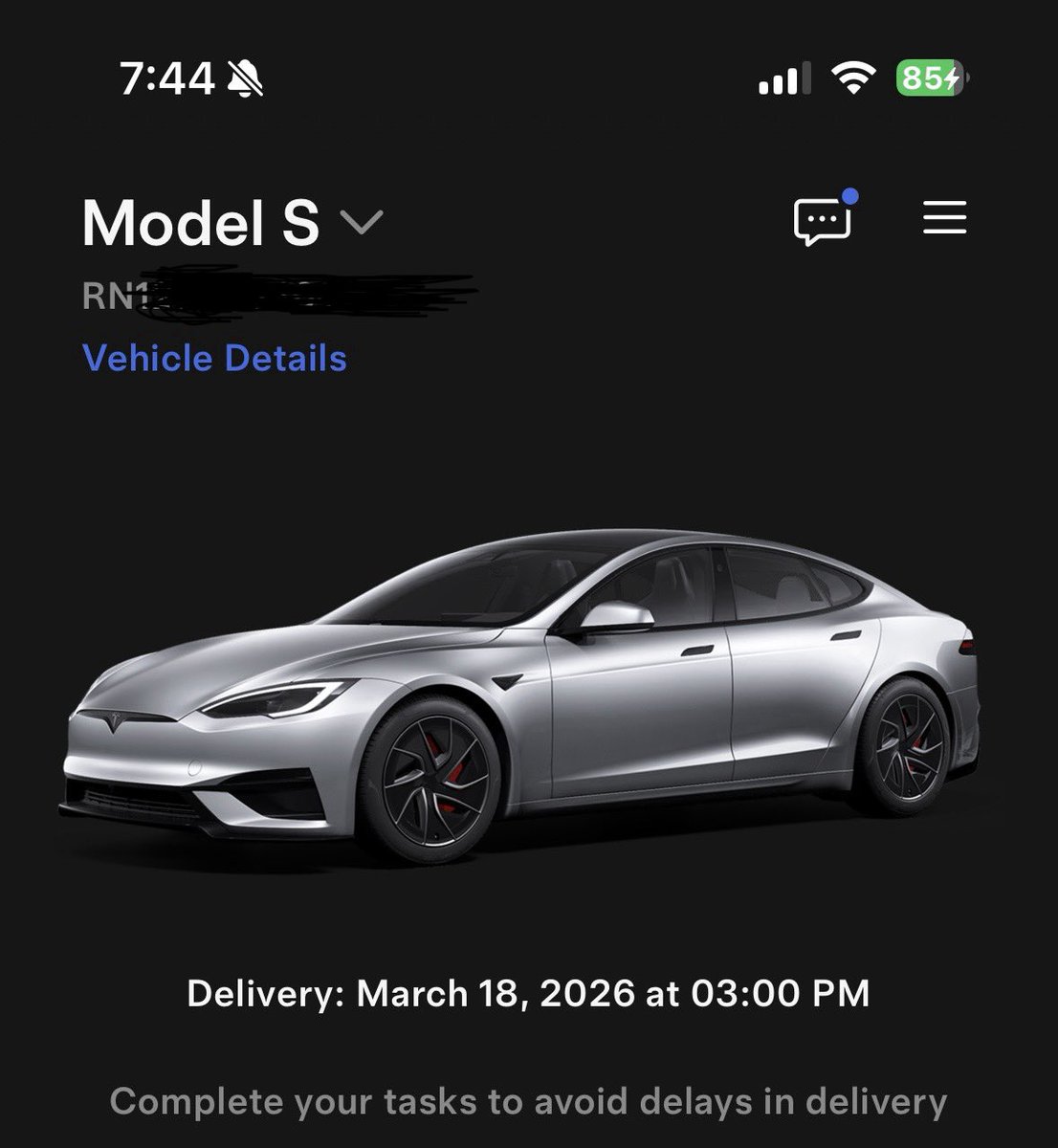

We pick up the new Model S Plaid tomorrow!! I’m so excited and can’t wait to take it on its first road trip to Chattanooga!

@JEHazel75 @ChatCharge

Built Different Certified Elite retweeted

Rocket garden at Starbase.

You can see this from the public highway.

Dima Zeniuk@DimaZeniuk

SpaceX Starship rocket garden

Built Different Certified Elite retweeted

Digital Optimus

Digital Optimus could take several forms.

At the very least, it will exist as software installed on a Mac or Windows machine. As such, it will have access to screen pixels (output) and be able to drive the keyboard and mouse (input).

It could run in a stand-alone mode using your computer’s local inference compute. Alternatively, that compute could be offloaded to a Tesla AI4 processor (and later AI5).

This offloading could occur in several ways, but most likely through a network connection to available AI4 compute. In a simple case, if your Tesla vehicle is parked in your garage, your home computer could connect over Wi-Fi to the vehicle’s compute, specifically its two AI4 chips.

Because the model is network-based, Digital Optimus could just as easily connect to any available compute node. That might be another user’s vehicle in their garage, any parked Tesla added to the network, or a cluster of AI4 compute blocks located at a Supercharger site.

This form of remote inference would allow Digital Optimus to seamlessly select the best available compute node.
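To make the node-selection idea concrete, here is a minimal sketch of how a client might pick a compute node. Everything in it is an assumption for illustration: the `ComputeNode` type, the node names, the latency figures, and the "lowest latency among available nodes" rule are hypothetical and do not come from Tesla or from the post.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ComputeNode:
    name: str          # hypothetical label, e.g. "garage-car"
    latency_ms: float  # assumed measured round-trip time to the node
    available: bool    # whether the node is currently accepting work


def select_best_node(nodes: list[ComputeNode]) -> Optional[ComputeNode]:
    """Return the available node with the lowest latency, or None if none is available."""
    candidates = [n for n in nodes if n.available]
    if not candidates:
        return None
    return min(candidates, key=lambda n: n.latency_ms)


# Illustrative values only; none of these numbers are from the source.
nodes = [
    ComputeNode("local-pc", 1.0, False),
    ComputeNode("garage-car", 8.0, True),
    ComputeNode("supercharger-cluster", 35.0, True),
]
best = select_best_node(nodes)
print(best.name if best else "no node available")
```

A real scheduler would likely weigh more than latency (load, cost, power), but the same filter-then-rank shape would apply.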

Tesla/xAI could also produce a dedicated compute device, similar in concept to the NVIDIA DGX Spark. If such a device were built, it would simply join the network as another inference node. When located locally, it could even connect directly to a PC to minimize latency.

In effect, Elon’s idea implies that AI4 inference compute could function as a distributed network of compute devices. Users who own AI4 hardware (such as a Tesla vehicle) might even be compensated for contributing their compute capacity.

Finally, this distributed inference model could be the most energy-efficient approach. By spreading workloads across many nodes, it distributes power consumption and delivers intelligence at the lowest cost per watt. In doing so, it breaks the centralized data-center power logjam.

Elon Musk@elonmusk

Oh and it works in all AI4-equipped cars, so your car can do office work for you when not driving. We’re also deploying millions of dedicated Digital Optimus units in the field at Superchargers where we have ~7 gigawatts of available power.

Built Different Certified Elite retweeted

Woah!

Take a look at what Tesla’s Principal Engineer for the Cybercab just said:

Eric@EricETesla

@SecDuffy, thank you and your team for meeting with us this week at the AV Safety Forum. Innovative, American made AVs, like Cybercab, will vastly improve roadway safety and we look forward to working with you on launching them at scale this year.

@KoncreteOshare @JEHazel75 @chattybird0306 @FthePump1 @Dogetothemoon @BLKMDL3 Yep. Should have said hi

@JEHazel75 @chattybird0306 @Brand0n @FthePump1 @Dogetothemoon @BLKMDL3 lol I did, and @FthePump1 had the t shirt on. You guys seemed like a great bunch. I need more reasons to drive the Y performance more. So June 6th fits the bill.

@KoncreteOshare @chattybird0306 @Brand0n @FthePump1 @Dogetothemoon @BLKMDL3 Oh you have jumped in and said hi!

@FthePump1 Me and my new Y performance will be there.

@FthePump1 thanks for the info today on the June 6th event.

@chattybird0306 @Brand0n @JEHazel75 @FthePump1 @Dogetothemoon @BLKMDL3 Saw you there as well. You guys seemed like a good bunch. Will look to see you guys at the June 6th event.

@Brand0n I think I saw you at the Tesla diner today.

Just did a thing. The kicker: FSD transfer and EOL for Model S. LFG! 🔥

Whole Mars Catalog@wholemars

Order a Tesla today, no need to wait for anything. It will change your life.

Built Different Certified Elite retweeted

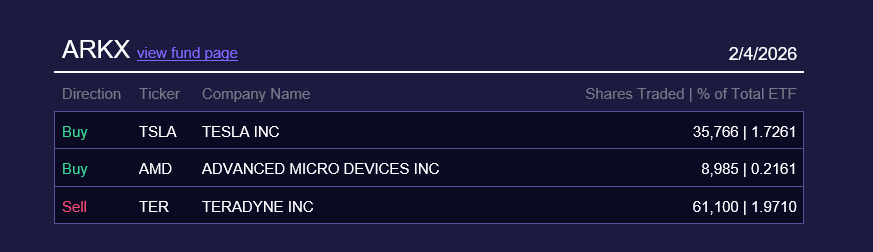

Holy smokes, another sign 🔥

ARKX (ARK Space & Defense Innovation ETF) bought $TSLA for the first time!!!!!!!!!

In the comments I posted their portfolio as of this morning, before they purchased close to $15M of $TSLA shares, instantly making it the #19 position out of 35.

Built Different Certified Elite retweeted

@JoNationLive @SawyerMerritt Cybercab is cool but I’m going to space when I grow up