Linjie (Lindsey) Li

189 posts

@LINJIEFUN

researching @Microsoft, @UW, contributed to https://t.co/VzcJa9Skx3

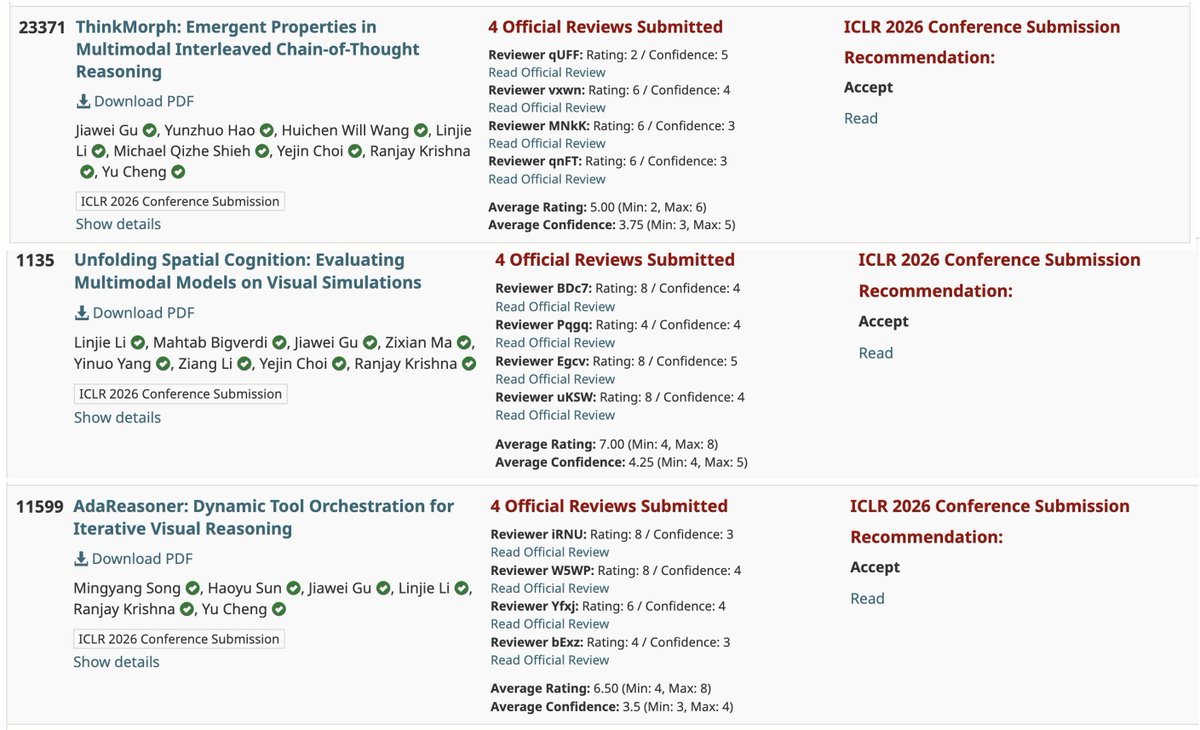

🚨Sensational title alert: we may have cracked the code to true multimodal reasoning. Meet ThinkMorph — thinking in modalities, not just with them. And what we found was... unexpected. 👀 Emergent intelligence, strong gains, and …🫣 🧵 arxiv.org/abs/2510.27492 (1/16)

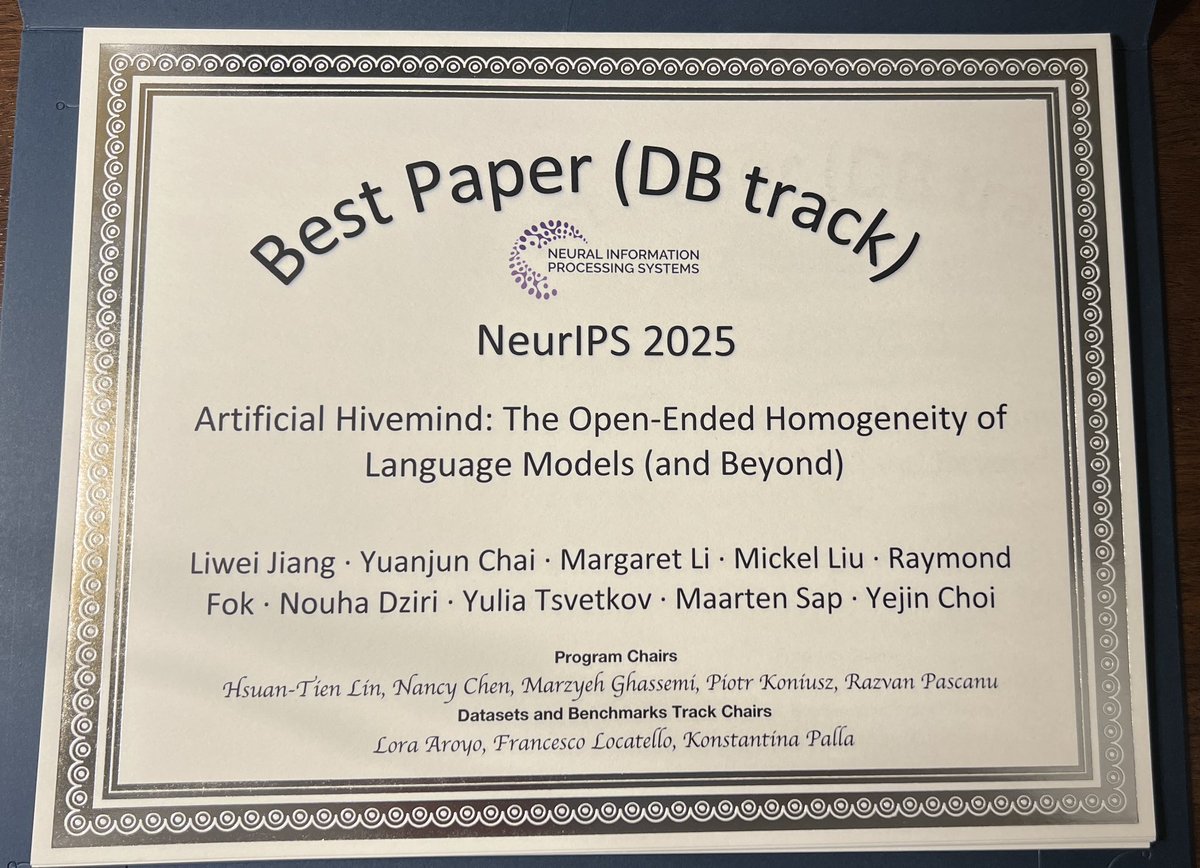

⚠️Different models. Same thoughts.⚠️ Today’s AI models converge into an 𝐀𝐫𝐭𝐢𝐟𝐢𝐜𝐢𝐚𝐥 𝐇𝐢𝐯𝐞𝐦𝐢𝐧𝐝 🐝, a striking case of mode collapse that persists even across heterogeneous ensembles.
Our #neurips2025 𝐃&𝐁 𝐎𝐫𝐚𝐥 𝐩𝐚𝐩𝐞𝐫 (✨𝐭𝐨𝐩 𝟎.𝟑𝟓%✨) dives deep into this phenomenon, introducing 𝐈𝐧𝐟𝐢𝐧𝐢𝐭𝐲-𝐂𝐡𝐚𝐭, a dataset of 26K real-world open-ended user queries spanning 17 open-ended categories + 31K dense human annotations (𝟐𝟓 𝐢𝐧𝐝𝐞𝐩𝐞𝐧𝐝𝐞𝐧𝐭 𝐚𝐧𝐧𝐨𝐭𝐚𝐭𝐨𝐫𝐬 𝐩𝐞𝐫 𝐞𝐱𝐚𝐦𝐩𝐥𝐞) to push AI’s creative and discovery potential forward. Now you can build your favorite models to be truly original, diverse, and impactful in the open-ended real world.
📍Paper: arxiv.org/abs/2510.22954
📍Data: huggingface.co/collections/li…
We also systematically reveal Artificial Hivemind across:
💥 𝐆𝐞𝐧𝐞𝐫𝐚𝐭𝐢𝐯𝐞 𝐚𝐛𝐢𝐥𝐢𝐭𝐢𝐞𝐬: not only do individual LLMs repeat themselves, but different models produce strikingly similar content, even when asked fully open-ended questions.
💥 𝐃𝐢𝐬𝐜𝐫𝐢𝐦𝐢𝐧𝐚𝐭𝐢𝐯𝐞 𝐚𝐛𝐢𝐥𝐢𝐭𝐢𝐞𝐬: LLMs, LM judges, and reward models are systematically miscalibrated when rating alternative responses to open-ended queries.
(1/N)

It began with a 🤯🤯 observation: when LMs are given text tokenized at the *character* level, their generation seems virtually unaffected, even though these token sequences are provably never seen in training! This suggests functional char-level understanding, and tokenization as a test-time control.
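To make the observation concrete, here is a minimal sketch of how the same string can reach a model as two very different token sequences. The vocabulary and both tokenizer functions are hypothetical toys for illustration, not the tokenizer or code used in the work described above.

```python
# Toy vocabulary (hypothetical): multi-character tokens plus single characters.
VOCAB = ["hello", "hell", "world", " ", "h", "e", "l", "o", "w", "r", "d"]


def greedy_tokenize(text, vocab):
    """Longest-match-first segmentation, a simple stand-in for a subword tokenizer."""
    by_length = sorted(vocab, key=len, reverse=True)
    tokens, i = [], 0
    while i < len(text):
        match = next((t for t in by_length if text.startswith(t, i)), None)
        if match is None:
            raise ValueError(f"no token covers {text[i]!r}")
        tokens.append(match)
        i += len(match)
    return tokens


def char_tokenize(text, vocab):
    """Character-level segmentation: every character becomes its own token."""
    chars = list(text)
    missing = [c for c in chars if c not in vocab]
    if missing:
        raise ValueError(f"characters not in vocabulary: {missing}")
    return chars


text = "hello world"
canonical = greedy_tokenize(text, VOCAB)   # few, long tokens
char_level = char_tokenize(text, VOCAB)    # many single-character tokens

# Both sequences decode to the same string, yet they differ as token sequences.
print(canonical)
print(char_level)
```

The point of the sketch is only that both segmentations are valid under one vocabulary, so the choice between them can be made at inference time without retraining.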

VAGEN poster 𝐭𝐨𝐦𝐨𝐫𝐫𝐨𝐰 at #NeurIPS! 🎮🧠
- 🕚 11am–2pm Wed
- 📍 Exhibit Hall C,D,E #5502
We had a lot of fun exploring:
• How 𝐰𝐨𝐫𝐥𝐝 𝐦𝐨𝐝𝐞𝐥𝐢𝐧𝐠 helps VLM RL agents learn better policies
• 𝐌𝐮𝐥𝐭𝐢-𝐭𝐮𝐫𝐧 𝐏𝐏𝐎 credit assignment via a 𝐭𝐰𝐨-𝐥𝐞𝐯𝐞𝐥 𝐚𝐝𝐯𝐚𝐧𝐭𝐚𝐠𝐞 𝐞𝐬𝐭𝐢𝐦𝐚𝐭𝐨𝐫 (Bi-Level GAE) for turn-level and token-level critic learning
Come chat about agents, RL, and world models 👀

🚀Excited to share our NeurIPS 2025 paper VAGEN, a scalable RL framework that trains VLM agents to reason as world models. VLM agents often act without tracking the world: they lose state, fail to anticipate effects, and RL wobbles under sparse, late rewards. Our solution is clear:
- VAGEN guides the VLM to structure its thinking into StateEstimation (what is the current visual state?) and TransitionModeling (what happens after an action?).
- Further, we optimize with Bi-Level GAE for long-horizon credit assignment (turn level to token level) and a WorldModeling Reward that scores each turn’s observation and prediction against ground truth.
On five agentic tasks, from games and navigation to robotic manipulation, we boost a 3B model from 0.21 -> 0.82 and outperform proprietary reasoning models like GPT-5!
Paper & project: mll.lab.northwestern.edu/VAGEN
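For readers who want a feel for "turn level to token level" credit assignment, here is a rough sketch. It uses standard GAE twice: once across turns, then once inside each turn. Treating the turn-level advantage as a reward on the turn's final token is an assumption made for illustration, as are all function and parameter names; see the paper for the actual Bi-Level GAE formulation.

```python
def gae(rewards, values, gamma, lam, bootstrap=0.0):
    """Standard GAE over one sequence; `bootstrap` is the value after the last step."""
    advantages = [0.0] * len(rewards)
    running = 0.0
    next_value = bootstrap
    for t in reversed(range(len(rewards))):
        delta = rewards[t] + gamma * next_value - values[t]  # TD residual
        running = delta + gamma * lam * running
        advantages[t] = running
        next_value = values[t]
    return advantages


def bi_level_advantages(turn_rewards, turn_values, token_values,
                        gamma_turn=1.0, lam_turn=1.0,
                        gamma_tok=1.0, lam_tok=1.0):
    """Two-level sketch: GAE across turns, then GAE across tokens within each turn.

    token_values[i] holds the critic values for the tokens of turn i
    (each turn needs at least one token).
    """
    turn_adv = gae(turn_rewards, turn_values, gamma_turn, lam_turn)
    token_adv = []
    for adv, values in zip(turn_adv, token_values):
        # Assumption: the turn's advantage arrives as a reward on its last token.
        rewards = [0.0] * (len(values) - 1) + [adv]
        token_adv.append(gae(rewards, values, gamma_tok, lam_tok))
    return turn_adv, token_adv


# Two turns with a sparse reward that only arrives at the end of the episode.
turn_adv, token_adv = bi_level_advantages(
    turn_rewards=[0.0, 1.0],
    turn_values=[0.0, 0.0],
    token_values=[[0.0, 0.0], [0.0, 0.0, 0.0]],
    gamma_turn=0.5,
)
print(turn_adv)   # the late reward is discounted back to the first turn
print(token_adv)  # each turn's advantage is spread over its tokens
```

With a zero critic, the first turn receives half the credit of the second (the turn-level discount is 0.5), and every token inherits its turn's advantage.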

Glance: Accelerating Diffusion Models with 1 Sample

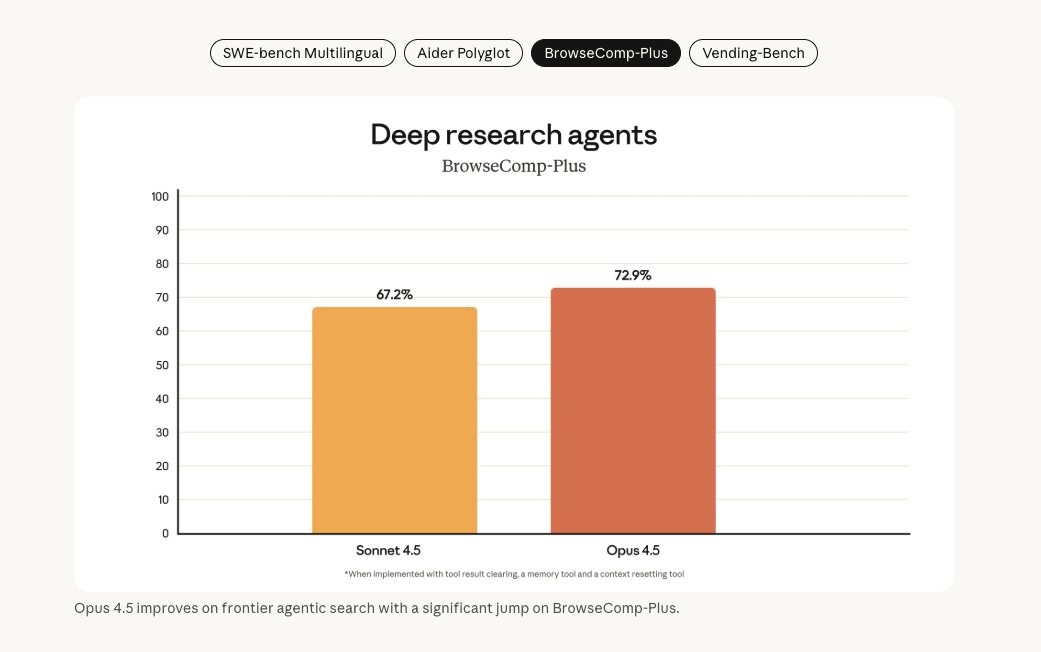

🚀 Introducing BrowseComp-Plus: A More Fair and Transparent Evaluation Benchmark of Deep-Research Agent. It is a new Deep-Research evaluation benchmark built on top of BrowseComp. It features:
- 📚 a fixed, carefully curated corpus of web documents
- ✅ human-verified positive documents
- ⚔️ web-mined challenging hard negatives
With BrowseComp-Plus, you can thoroughly evaluate and compare the performance of different components in a deep-research system, e.g., GPT-5 + Qwen3-Embedding. Code, dataset, and leaderboard links are provided at the end of this thread.
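As a small illustration of why a fixed corpus with verified positives helps, the retriever can be scored on its own, separately from the LLM. The sketch below computes a generic recall@k; the document IDs and the function name are made up for the example, and the post does not say which metrics the benchmark itself reports.

```python
def recall_at_k(ranked_doc_ids, positive_doc_ids, k):
    """Fraction of the known positive documents that appear in the top-k results."""
    positives = set(positive_doc_ids)
    if not positives:
        raise ValueError("need at least one positive document")
    top_k = set(ranked_doc_ids[:k])
    return len(top_k & positives) / len(positives)


# Hypothetical retriever output for one query, best match first.
ranked = ["d7", "d2", "d9", "d4", "d1"]
# Hypothetical human-verified positives for the same query.
positives = {"d2", "d1"}

print(recall_at_k(ranked, positives, k=3))  # only d2 is in the top 3
print(recall_at_k(ranked, positives, k=5))  # both positives are retrieved
```

Because the corpus and labels stay fixed, two retrievers scored this way are compared on exactly the same documents.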