Weiwei Sun (@sunweiwei12)

🚨 Modern LLMs are trained on trillions of tokens, but for any given output, only a tiny subset of training examples really matters. Training Data Attribution (TDA) is about finding those examples and measuring their influence.

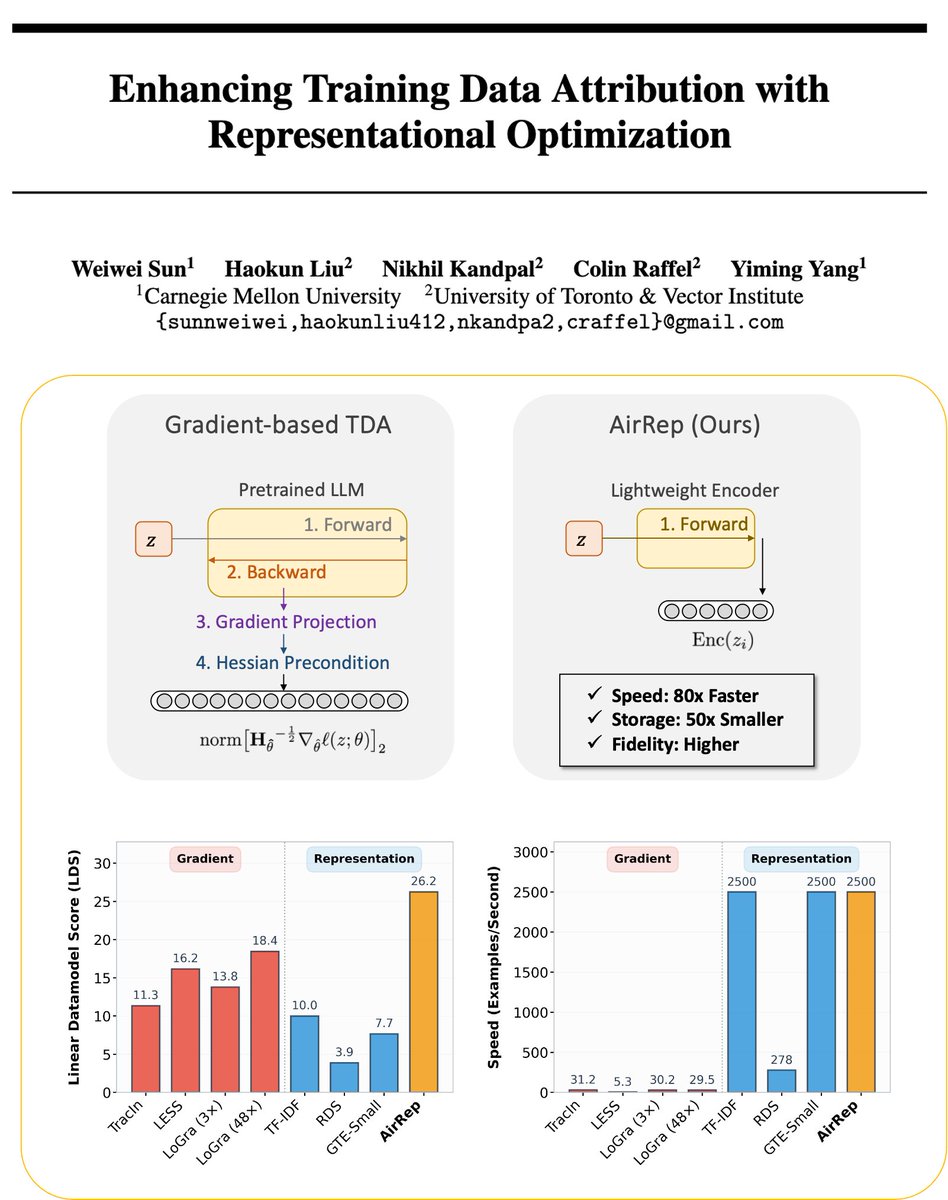

Gradient-based approaches, while well-founded, are extremely costly for LLMs because they require computing and storing gradients for the training examples.

💡 We introduce AirRep, a small representation model trained to predict how training data influences model behavior.
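A minimal sketch of the general idea behind representation-based attribution, not AirRep itself: assume some encoder has already mapped each training example and the query to a vector, then rank training examples by cosine similarity to the query. The toy vectors and the helper names (`normalize`, `influence_scores`, `top_k`) are hypothetical, chosen only for illustration.

```python
import math

def normalize(v):
    """Scale a vector to unit length (assumes it is non-zero)."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def influence_scores(train_embs, query_emb):
    """Cosine similarity between the query and every training embedding."""
    q = normalize(query_emb)
    return [sum(a * b for a, b in zip(normalize(e), q)) for e in train_embs]

def top_k(scores, k):
    """Indices of the k highest-scoring training examples, best first."""
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]

# Toy embeddings standing in for the output of a trained representation model.
train_embs = [
    [1.0, 0.0, 0.0],
    [0.0, 1.0, 0.0],
    [0.7, 0.7, 0.0],
    [0.0, 0.0, 1.0],
]
query_emb = [0.9, 0.1, 0.0]

scores = influence_scores(train_embs, query_emb)
ranking = top_k(scores, 2)
print(ranking)
```

Scoring here is a single dot product per training example, with no per-example gradient to compute or store, which is the kind of cost saving a representation-based approach is after.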

The result: as accurate as gradient-based methods (and often more accurate), 80× faster, and with 50× less storage. On a single GPU, AirRep can process 2500 examples per second, while a well-optimized gradient-based method can handle only 30.

#neurips2025