OpenDriveLab

213 posts

@OpenDriveLab

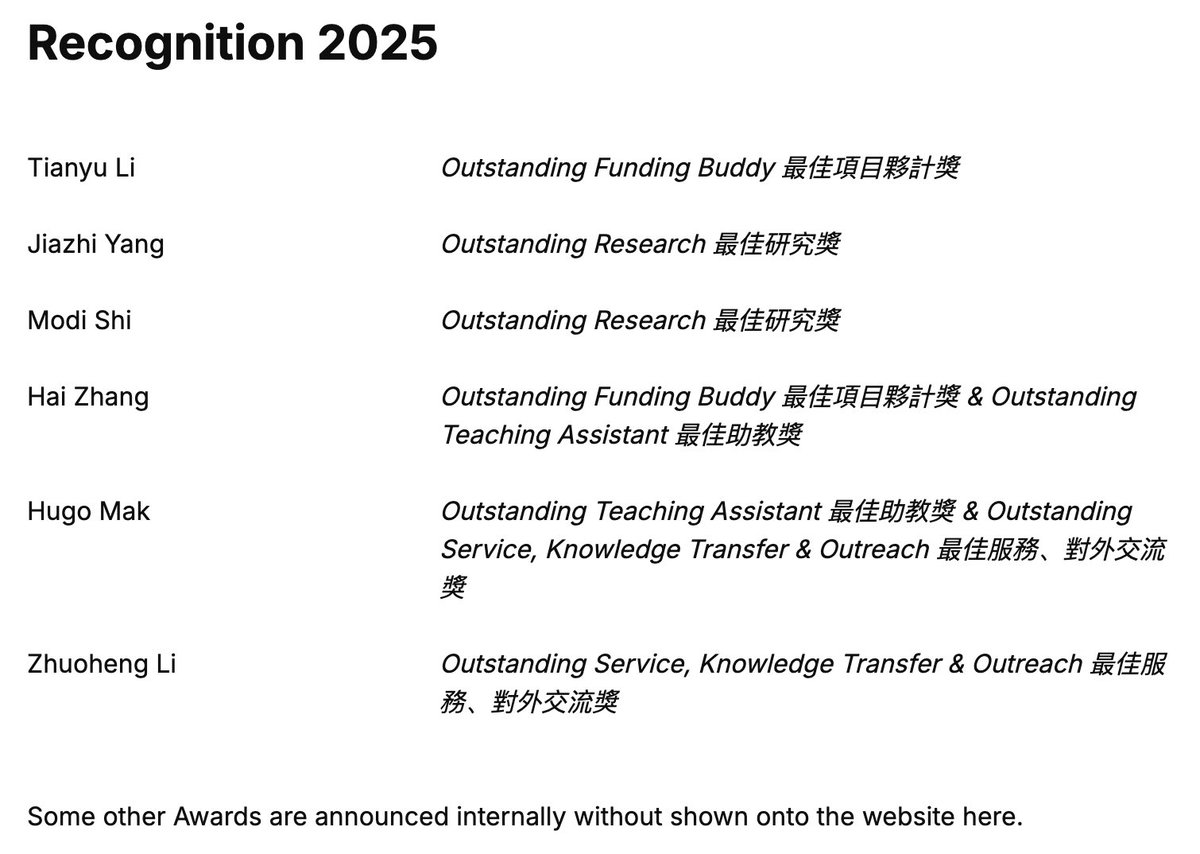

Official account for OpenDriveLab @hkuniversity and Beyond. We do cutting-edge research in Robotics and Autonomous Driving. Email: [email protected]

SONIC is now open-source! Generalist whole-body teleoperation for EVERYONE! Our team has long been building comprehensive pipelines for whole-body control, kinematic planner, and teleoperation, and they will all be shared. This will be a continuous update; inference code + model already there, training code and gr00t integration coming soon!
Code: github.com/NVlabs/GR00T-W…
Docs: nvlabs.github.io/GR00T-WholeBod…
Site: nvlabs.github.io/GEAR-SONIC/

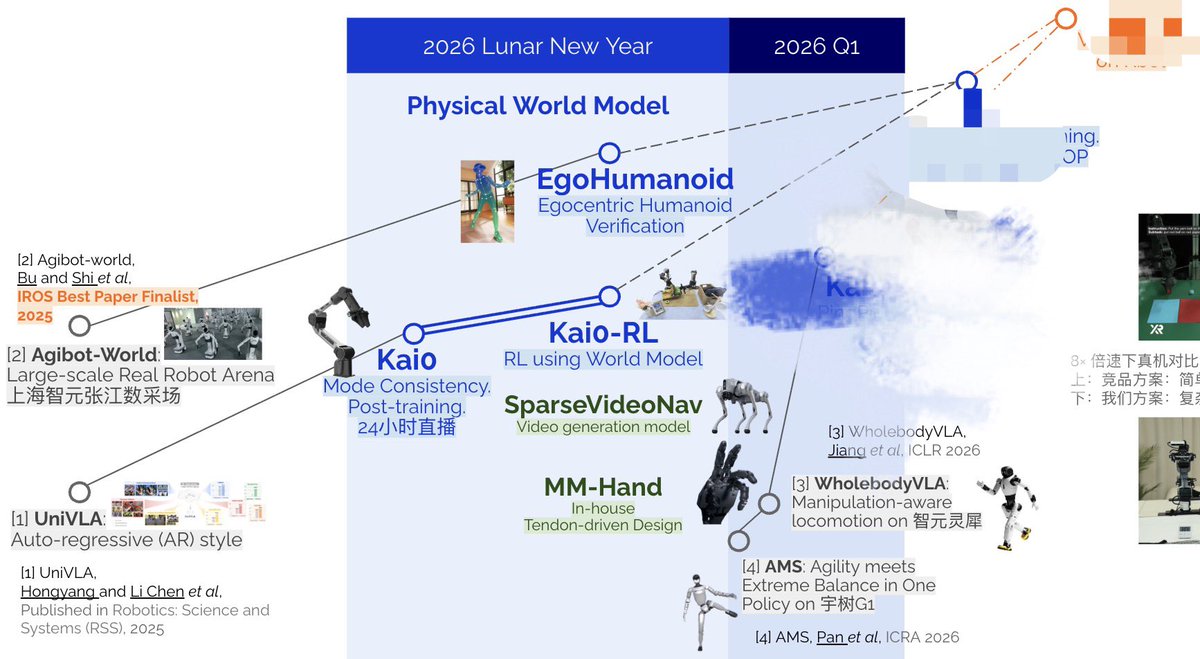

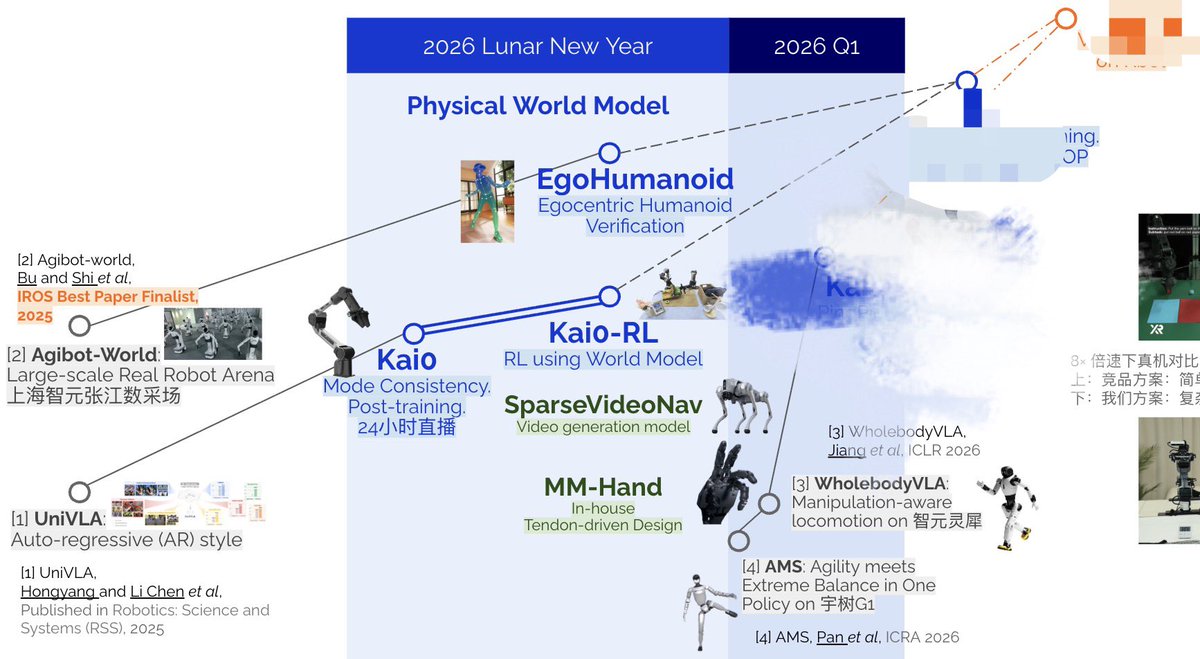

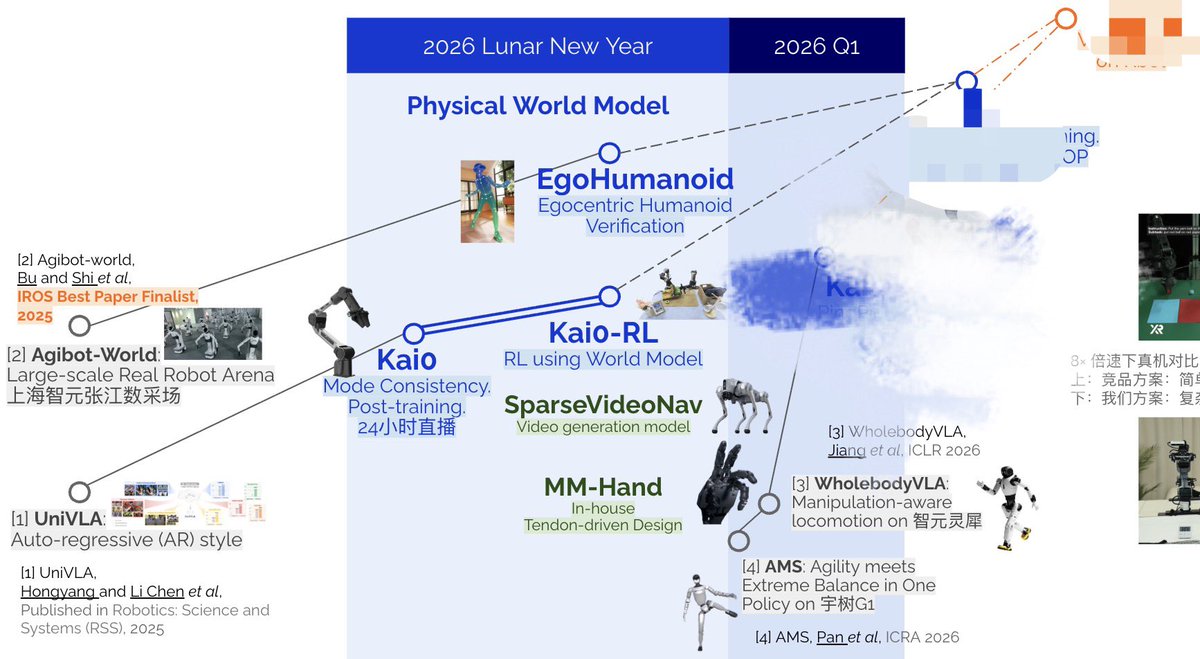

Humanoid robots have been prisoners of the lab. We set them free — with human data. We present EgoHumanoid: The first endorsement of human-to-humanoid transfer for whole-body loco-manipulation. 🔗 Home: opendrivelab.com/EgoHumanoid 📑 Arxiv: arxiv.org/abs/2602.10106 🧵👇

🧥 Live-stream robotic teamwork that folds clothes. 6 clothes in 3 minutes straight.
χ₀ = 20hrs data + 8 A100s + 3 key insights:
- Mode Consistency: align your distributions
- Model Arithmetic: merge, don't retrain
- Stage Advantage: pivot wisely
🔗 mmlab.hk/research/kai0