Russ Tedrake

@RussTedrake

Professor at MIT, studying robotics. Vice President of Robotics Research, Toyota Research Institute.

Meet SceneSmith: An agentic system that generates entire simulation-ready environments from a single text prompt. VLM agents collaborate to build scenes with dozens of objects per room, articulated furniture, and full physics properties. We believe environment generation is no longer the bottleneck for scalable robot training and evaluation in simulation. Website: scenesmith.github.io 👇🧵(1/8)

Want to scale robot data with simulation, but don’t know how to get large numbers of realistic, diverse, and task-relevant scenes? Our solution: ➊ Pretrain on broad procedural scene data ➋ Steer generation toward downstream objectives 🌐 steerable-scene-generation.github.io 🧵1/8

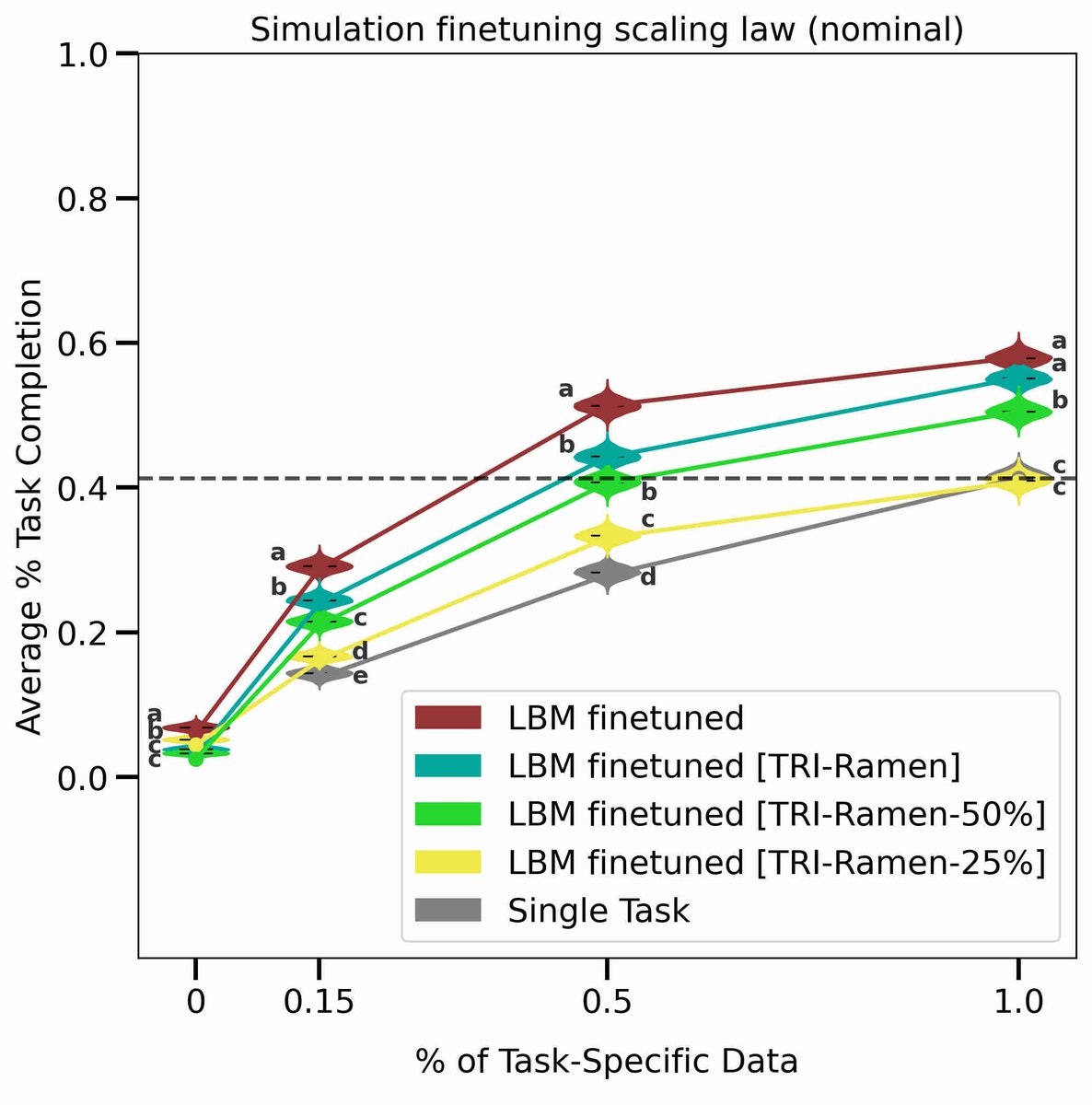

Learning from both sim+real data could scale robot imitation learning. But what are the scaling laws & principles of sim+real cotraining? We study this in the first focused analysis of sim+real cotraining spanning 250+ policies & 40k+ evals arxiv.org/abs/2503.22634 (1/6)