Bytefer

157 posts


@TBytefer

Building Vidpai, a local‑first AI content creation studio for Mac that runs open‑source models on your machine. https://t.co/7IN7nJTOl8

Joined August 2022

140 Following, 303 Followers

@TBytefer My pleasure!

Yes, it does output progress if you stream


mlx-audio v0.4.0 is here 🚀

What's new:

→ Qwen3-TTS: fastest generation on Apple silicon and first batch support.

> Sequential (<80 ms TTFB at 2.75x realtime)

> Batch support (<210 ms TTFB at 4.12x for batch of 4-8)

→ Audio separation UI & server

→ nvfp4, mxfp4, mxfp8 quantization

→ Streaming /v1/audio/speech endpoint

→ Realtime STT streaming toggle

New models:

→ Echo TTS

→ Voxtral Mini 4B

→ MingOmni TTS (MoE + Dense)

→ KittenTTS

→ Parakeet v3

→ MedASR

→ Spoken language identification (MMS-LID)

→ Sortformer diarization + Smart Turn v3 semantic (VAD)

Plus fixes for Kokoro Chinese TTS, Pocket TTS, Whisper, Qwen3-ASR, and more.

Thank you very much to @lllucas, @beshkenadze, @KarnikShreyas, @andimarafioti, @mnoukhov, and welcome to the 13 new contributors 🙌🏽

Get started today:

> pip install -U mlx-audio

Leave us a star ⭐

github.com/Blaizzy/mlx-au…


Chatterbox Turbo beating ElevenLabs Turbo v2.5 & Cartesia Sonic 3 is unbelievable.

1. Paralinguistic tags

2. <150ms time-to-first-sound

3. Instant voice cloning from just 5 seconds of audio.

@Prince_Canuma Thanks to mlx-audio, you can easily use it in Vidpai.


npx skills add vercel-labs/agent-browser --skill dogfood

dogfood — Systematic exploratory testing. Navigates an app like a real user, finds bugs and UX issues, and produces a structured report with screenshots and repro videos.

agent-browser.dev/skills


@DnuLkjkjh You can build upon the FluidAudio library and utilize the NVIDIA Parakeet model for transcription, which offers both high speed and greater accuracy. For purely English scenarios, use the V2 model; for multilingual scenarios, use the V3 model.


mlx-audio is seriously underrated. I've been running Whisper models through MLX for on-device transcription on M-series Macs. The performance jump from M4 to M5 should make real-time transcription of 2+ hour recordings completely smooth. Right now the M4 handles it, but you can feel the memory pressure on long sessions with the larger models.


@Prince_Canuma The new era of MLX has arrived, and mlx-audio is my favorite open-source project. Thanks to the MLX King.


M5 Pro & M5 Max just dropped!

Here are the specs:

> M5 Pro: 18-core CPU, 20-core GPU, 307 GB/s bandwidth

> M5 Max: 18-core CPU, 40-core GPU, 614 GB/s bandwidth

> Neural Accelerators in every GPU core. 4x AI performance jump. Up to 128GB unified memory.

Running LLMs locally on Apple Silicon using MLX has never looked this good.


the creator who's banking $47K monthly from TikTok asked me not to share this automation...

these brain-rot style videos are pulling 10M+ views consistently on TikTok and YouTube Shorts

faceless channels are banking $15K-$50K monthly from this exact format

here's what my n8n workflow does automatically:

- generates viral Peter Griffin & Stewie conversations

- clones their actual voices using AI

- overlays them on Minecraft parkour gameplay

- adds perfectly timed subtitles

- renders complete videos ready to upload

these videos exploit dopamine triggers

the background gameplay keeps viewers glued while the AI-generated dialogue delivers the actual content

it's psychological manipulation at its finest

I spent 40+ hours perfecting this:

- gathering rare audio samples for voice cloning

- training custom AI voice models for both characters

- building the complete automation workflow

- testing render quality and timing

the results speak for themselves

channels using this format are going from 0 to 100K followers in weeks

some are hitting 50M+ monthly views with zero effort after setup

the workflow handles everything:

- content ideation

- script generation

- voice synthesis

- video composition

- final rendering

you literally press one button and get viral-ready content

while everyone's still manually editing videos, smart creators are scaling with automation like this

the opportunity window is MASSIVE right now

these formats are exploding across all platforms

Comment "VIDEO" + repost this + follow me (MUST DO ALL) and I'll send you the complete JSON file plus setup guide

a lot of people will ignore this and lose yet another chance to finally make it

don't sleep on it.


@tombielecki @xenovacom @deepseek_ai Download the model on first use, then use the Cache API to save the downloaded model: developer.mozilla.org/en-US/docs/Web….
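
The "download once, then serve from the Cache API" pattern described above could look roughly like the sketch below. This is only an illustration, not how Transformers.js itself caches models: the function name `fetchModelWithCache`, the `CacheLike` interface, and the cache name and URL in the usage note are all hypothetical. The only browser calls it relies on are the standard `caches.open`, `cache.match`, `cache.put`, and `fetch`.

```typescript
// Minimal subset of the browser Cache interface that this sketch needs.
// A real `Cache` object returned by `caches.open(...)` satisfies it.
interface CacheLike {
  match(url: string): Promise<Response | undefined>;
  put(url: string, response: Response): Promise<void>;
}

// Hypothetical helper: return the model bytes from the cache if present,
// otherwise download them once and store a copy for later visits.
async function fetchModelWithCache(
  url: string,
  cache: CacheLike,
  fetchFn: (url: string) => Promise<Response> = (u) => fetch(u),
): Promise<ArrayBuffer> {
  // Cache hit: no network request is made.
  const cached = await cache.match(url);
  if (cached) {
    return cached.arrayBuffer();
  }

  // Cache miss: download the file.
  const response = await fetchFn(url);
  if (!response.ok) {
    throw new Error(`Model download failed with status ${response.status}`);
  }

  // A Response body can be read only once, so store a clone
  // and read the original.
  await cache.put(url, response.clone());
  return response.arrayBuffer();
}
```

In a browser, a call might look like `const cache = await caches.open("model-cache-v1"); const weights = await fetchModelWithCache(modelUrl, cache);`, where the cache name and `modelUrl` are placeholders. Passing the cache and fetch function in as parameters is a design choice made here so the helper can be exercised outside a browser.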


@xenovacom @deepseek_ai Are the models somehow cached locally? Or do users have to wait for the model to download each time they open the page?


We just released Transformers.js v3.1 and you're not going to believe what's now possible in the browser w/ WebGPU! 🤯 Let's take a look:

🔀 Janus from @deepseek_ai for unified multimodal understanding and generation (Text-to-Image and Image-Text-to-Text)

👁️ Qwen2-VL from @alibaba_qwen for dynamic-resolution image understanding

🔢 JinaCLIP from @JinaAI_ for general-purpose multilingual multimodal embeddings

🌋 LLaVA-OneVision from @ByteDanceOSS for Image-Text-to-Text generation

🤸‍♀️ ViTPose for pose estimation

📄 MGP-STR for optical character recognition (OCR)

📈 PatchTST & PatchTSMixer for time series forecasting

That's right, everything running 100% locally in your browser (no data sent to a server)! 🔥 Huge for privacy!

Check out the release notes for more information. 👇


@xenovacom @deepseek_ai It supports mathematical formulas and has the capability to understand images with dynamic resolution, which is truly impressive.


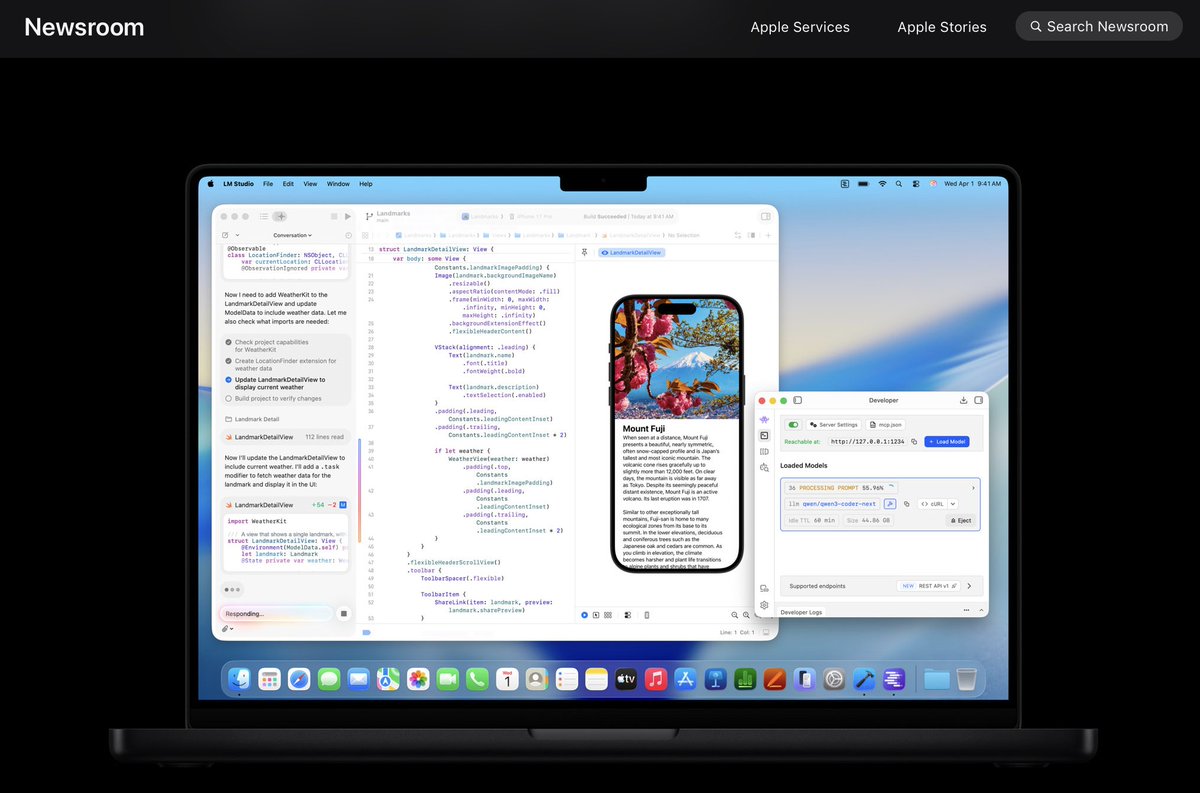

@ollama 👏 Ollama is so powerful; with it I was able to develop ollama-ocr: github.com/bytefer/ollama…


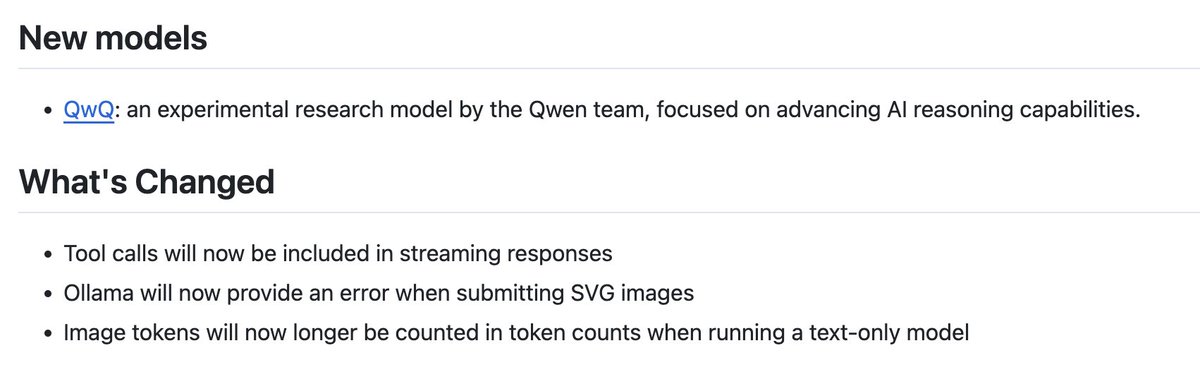

We've made tool calls work with streaming responses in Ollama 0.4.6!

Happy Thanksgiving!

github.com/ollama/ollama/…


Discover how to leverage Llama 3.2-Vision with Ollama-OCR for precise optical character recognition, preserving text structure and format.

{ author: @TBytefer } #DEVCommunity #JavaScript

dev.to/bytefer/ollama…
