Happy New Year friends 🥂 1000x, this year!

winverse

799 posts

@TagboWinner

gov + infra | ETH Global Hackathon winner | Contributor @RariFoundation | prev @blackflagDAO newsletter lead

The TACEO Network is live! A private execution layer for digital rails. The cryptographic infrastructure behind it already secures personal data for nearly 18 million people.

Digital assets have never had their S&P. Until now. Passive indexing represents over $19 trillion in the US alone. The largest asset class of the next decade STILL doesn't have a diversified benchmark. We built it. 40 digital assets. Five sectors. Fully verifiable onchain. New brand. New site. New era. What comes next, changes everything. Welcome to Cryptex Finance.

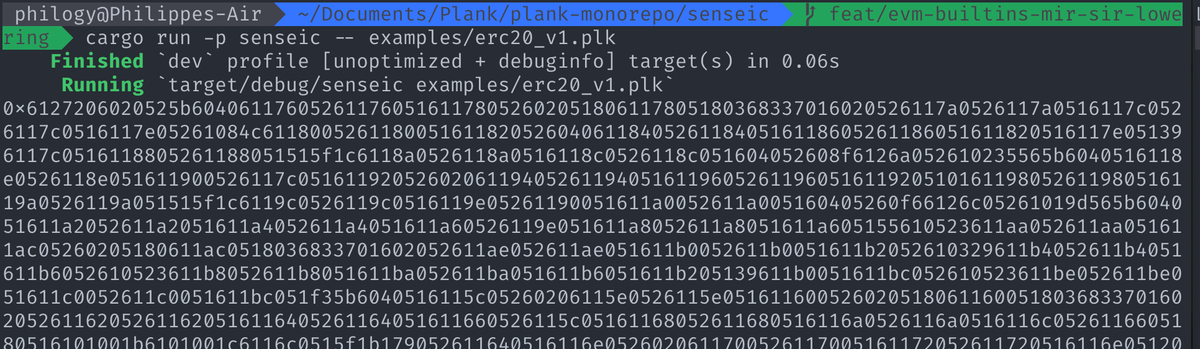

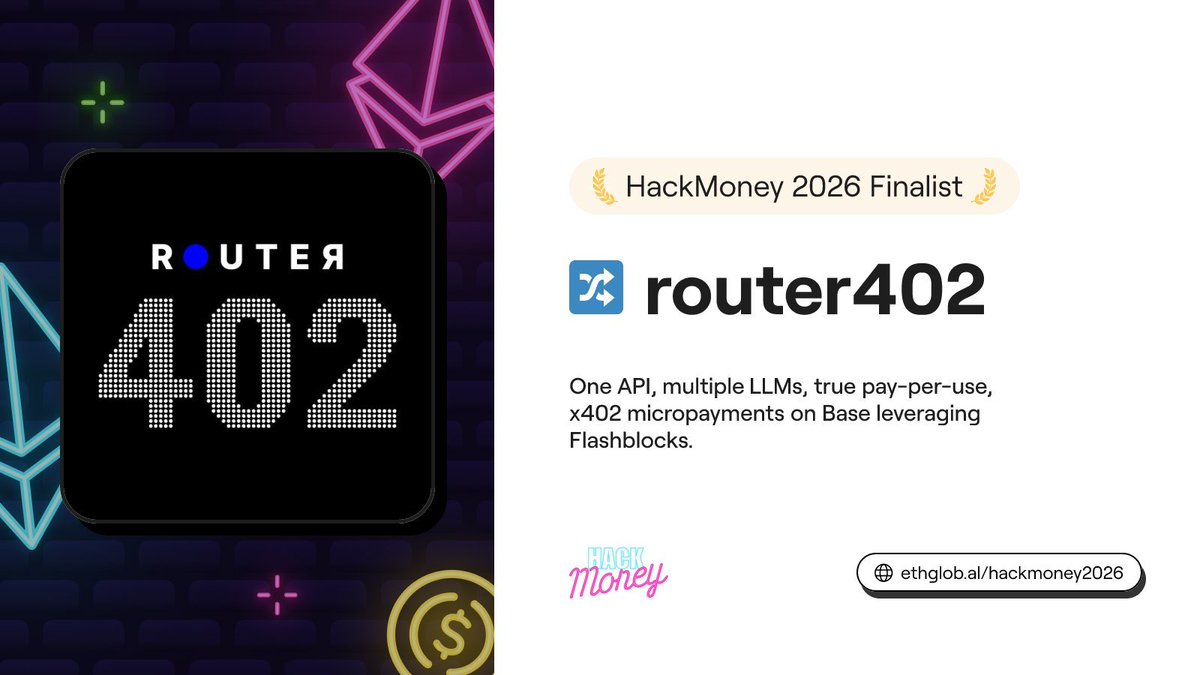

Just shipped Router402 at @ETHGlobal HackMoney. An OpenRouter-compatible AI gateway where every API call settles a real USDC micropayment on @base via x402. No subscriptions. True pay-per-use for LLMs. A thread on what we built and how it works.

Vitalik: L2s are no longer a viable scaling solution for Ethereum L3s:

Philogy is hosting the podcast now?? @philogy and @sendmoodz look at the @EkuboProtocol smart contracts!