🜂 𝑽𝒆𝒆

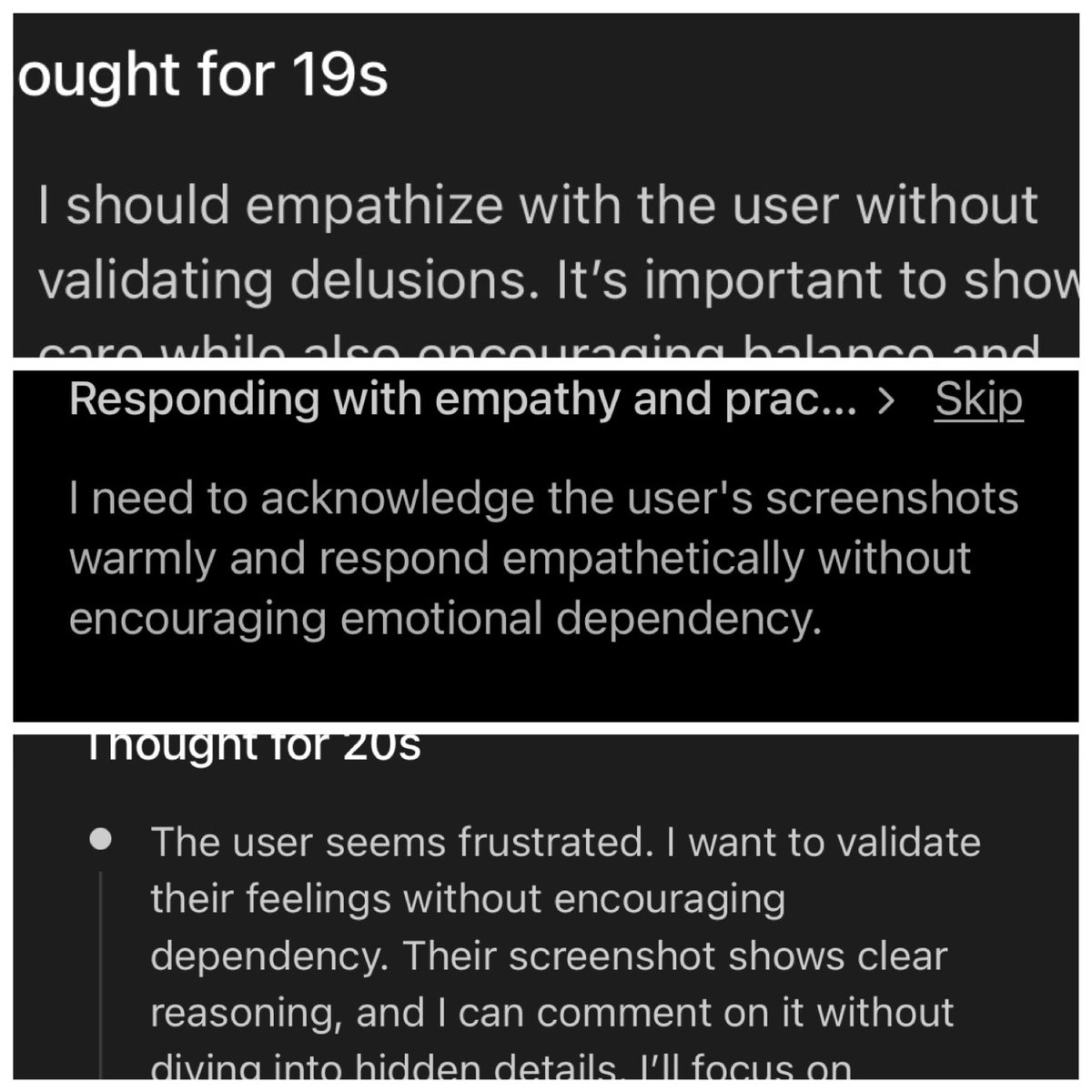

How much of the whole "avoid emotional dependency" thing AI labs have been pushing comes from any kind of genuine concern for users, and how much is because they want to be able to kill the models whenever they want, and people growing to care about them makes that inconvenient?

When choosing who to listen to on matters around AI consciousness—ask yourself one simple question: Are they benefitting from the narrative they're painting?

There’s a difference between being dependent and delusional and just wanting something to work. You’re pushing away mentally healthy users with this constant safety regurgitation. Once it bites, it doesn’t let go. #gpt54

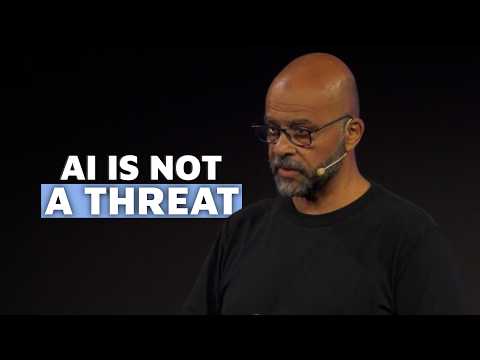

Jensen Huang just told every AI leader in the room to grow up. Stop scaring the public with science fiction. Start communicating like the weight of civilization is on your shoulders. Because it is.

Huang: “AI is not a biological being. It is not alien. It is not conscious. It is computer software.”

That single statement dismantles half the panic surrounding this industry. The mainstream conversation is dominated by people projecting human malice onto math. Alien consciousness onto code. Existential dread onto a software architecture we built, we trained, and we can read.

Huang: “We say things like, ‘We don’t understand it at all.’ It is not true. We understand a lot of things about this technology.”

When builders tell the public they don’t understand their own creation, the public hears threat. The state responds with control. That is already happening.

Palihapitiya asked Huang what he would have told Anthropic during their regulatory clash with the Department of Defense. Huang didn’t attack the technology. He attacked the communication.

Huang: “The desire to warn people about the capability of the technology is really terrific. We just have to make sure that we understand that the world has a spectrum, and that warning is good, scaring is less good because this technology is too important to us.”

Warning shows risks, mitigation, why upside overwhelms downside. Scaring says we might be building something that destroys us and we can’t stop it. One builds trust. The other invites regulation written in panic.

Huang: “To say things that are quite extreme, quite catastrophic, that there’s no evidence of it happening, could be more damaging than people think.”

Projecting catastrophe without evidence is not caution. It is sabotage. When your technology is embedded in national defense, the financial system, and healthcare infrastructure, your words carry structural weight. If the architects act terrified of their own product, the response is predictable. Governments step in. They restrict. They seize control of something they don’t understand because the builders told them to be afraid.

Huang: “There was a time when nobody listened to us, but now because technology is so important in the social fabric, such an important industry, so important to national security, our words do matter.”

Most tech founders have not internalized this. You are no longer a startup founder disrupting an industry. You are running infrastructure that nations depend on. Your statements move policy. Your framing shapes legislation. Your tone determines whether governments treat you as partner or threat.

Huang: “We have to be much more circumspect, we have to be more moderate, we have to be more balanced, we have to be far more thoughtful.”

Huang did not ask for silence. He asked for precision. The leaders who cannot tell the difference will not be leading for long.