Christopher Agia

94 posts

@agiachris

PhD candidate in Computer Science @Stanford. My interests span learning for robotic planning, control, vision systems, and their interfacing representations.

The degree to which you are awed by AI is perfectly correlated with how much you use AI to code.

🤖 Can a single robot policy manipulate diverse tools without ever seeing them before? Introducing SimToolReal 🔨: a generalist dexterous manipulation policy that transfers zero-shot sim→real to unseen tools + unseen tasks. All videos are 1x speed (60 Hz control) 🧵👇

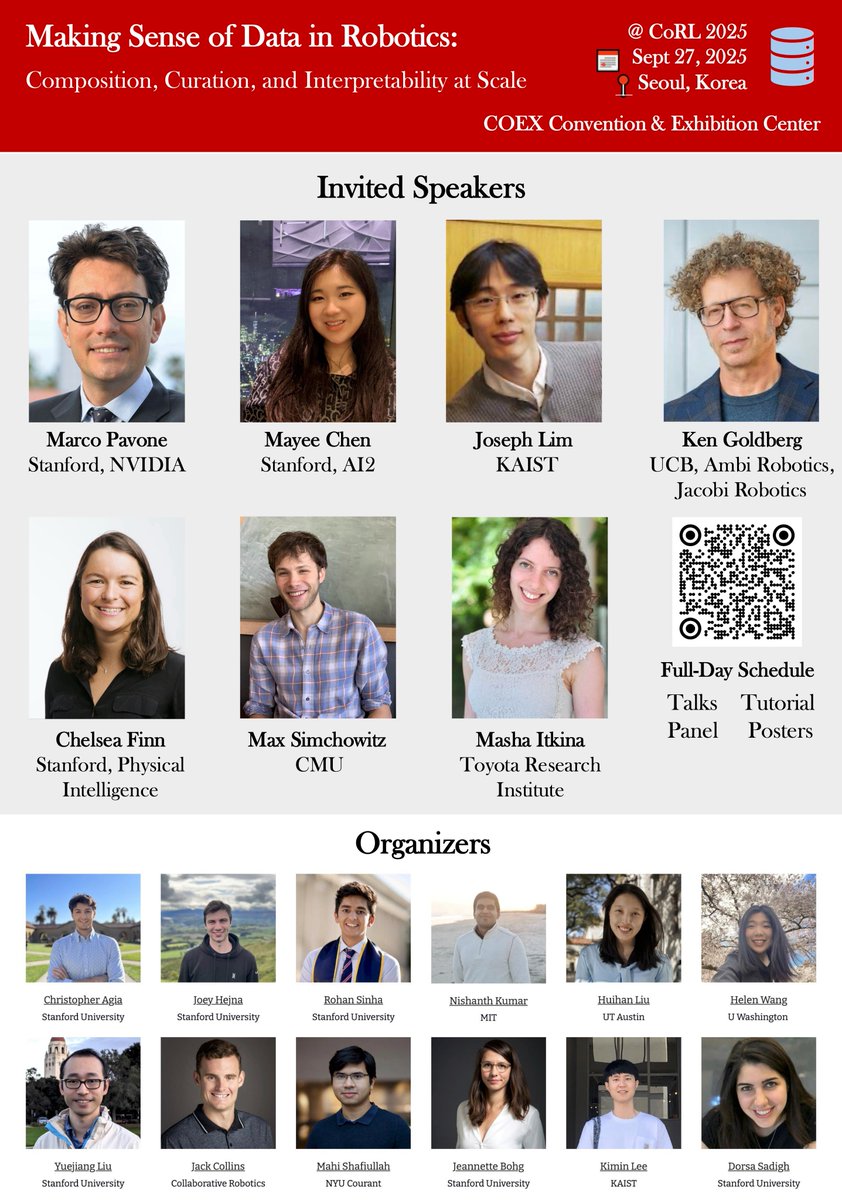

It's almost time for #CoRL 2025! A reminder that we're hosting the Data in Robotics workshop this Saturday Sept 27th. We have a packed schedule and are also attempting to livestream the event for those who can't attend in person.

What makes data “good” for robot learning? We argue: it’s the data that drives closed-loop policy success! Introducing CUPID 💘, a method that curates demonstrations not by "quality" or appearance, but by how they influence policy behavior, using influence functions. (1/6)

We're hosting the 1st workshop on Making Sense of Data in Robotics at @corl_conf this year! We'll investigate what makes robot learning data "good" by discussing: 🧩 Data Composition 🧹 Data Curation 💡 Data Interpretability. Paper submissions are due 8/22/2025! 🧵(1/3)