Andrew Trice

7.8K posts

@andytrice

Distinguished Engineer & Solution Architect @ Capital One

Joined June 2009

720 Following · 2.7K Followers

Andrew Trice retweeted

This is huge: Llama-v2 is open source, with a license that authorizes commercial use!

This is going to change the landscape of the LLM market.

Llama-v2 is available on Microsoft Azure and will be available on AWS, Hugging Face and other providers.

Pretrained and fine-tuned models are available with 7B, 13B and 70B parameters.

Llama-2 website: ai.meta.com/llama/

Llama-2 paper: ai.meta.com/research/publi…

A number of personalities from industry and academia have endorsed our open source approach: about.fb.com/news/2023/07/l…

Andrew Trice retweeted

A 2-minute introduction to the fundamental building block behind Large Language Models:

Text Embeddings

(This is the most helpful explanation you'll read online today. I promise.)

The Internet is mainly text.

For centuries, we've captured most of our knowledge using words, but there's one problem:

Neural networks hate text.

Judging by how good language models are today, this might not be obvious, but turning words into numbers is more complex than you think.

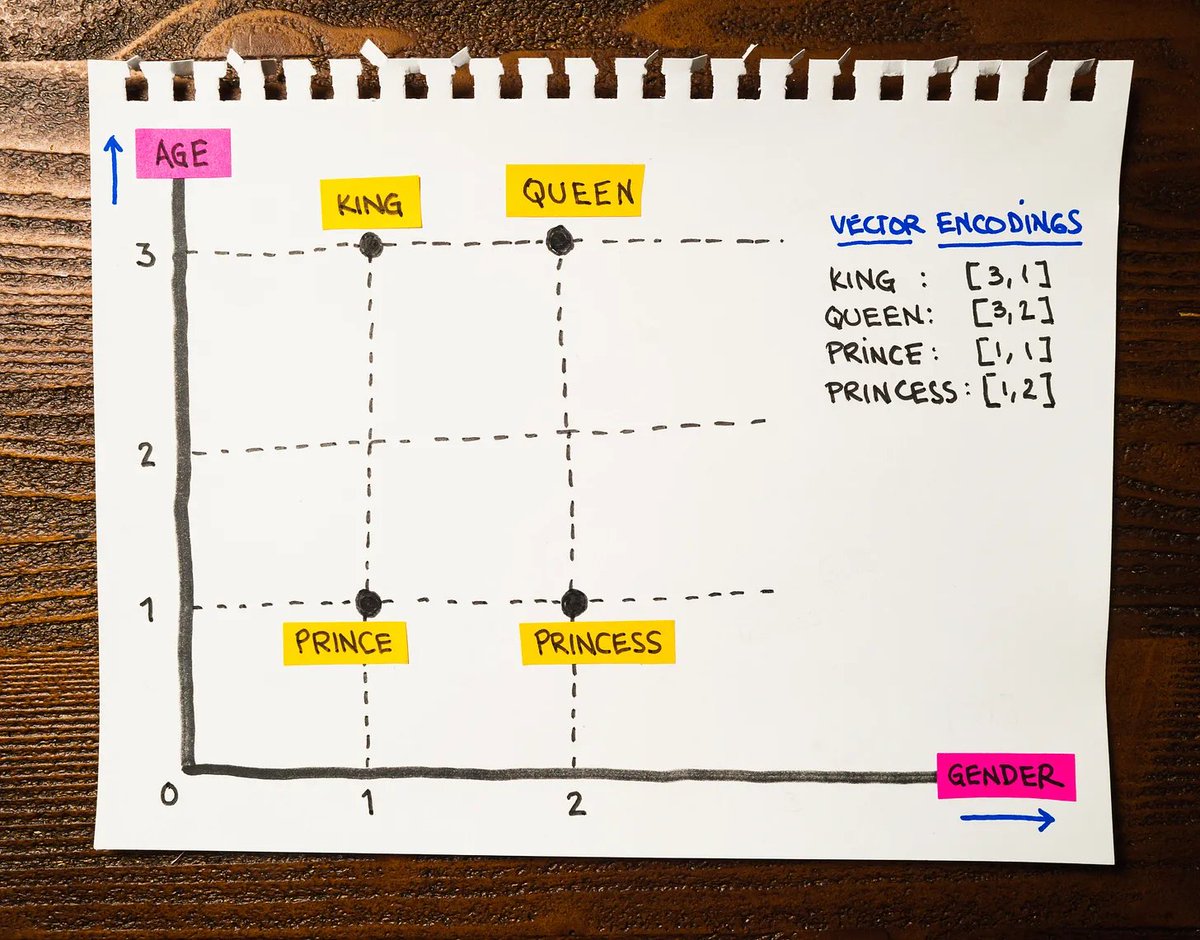

Imagine a 4-word vocabulary: King, Queen, Prince, and Princess.

The most straightforward approach to converting our vocabulary into numbers is to use consecutive values:

• King → 1

• Queen → 2

• Prince → 3

• Princess → 4

Unfortunately, neural networks tend to see what's not there. Is a Princess four times as important as a King? Of course not, but the values say otherwise: Princess is "worth" 4 while a King is "worth" 1.

We don't know how a neural network will interpret this, so we need a better representation.

Instead of using numerical values, we can use vectors. We call this particular representation "one-hot encoding," where we use ones and zeros to differentiate each word:

• King → [1, 0, 0, 0]

• Queen → [0, 1, 0, 0]

• Prince → [0, 0, 1, 0]

• Princess → [0, 0, 0, 1]

This encoding fixes the problem of a network misinterpreting ordinal values but introduces a new one:

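A one-hot vector needs one component per word in the vocabulary. According to the Oxford English Dictionary, there are 171,476 words in use, so every vector would have 171,476 components, all but one of them zero. We certainly don't want to deal with vectors that large and that sparse.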
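To make the one-hot idea concrete, here is a minimal Python sketch. The names `VOCAB`, `one_hot`, and `dot` are made up for illustration and are not from the thread. It shows that each vector is as long as the vocabulary and that any two different words have a dot product of zero, so the encoding carries no notion of relatedness.

```python
# Hypothetical four-word vocabulary taken from the example above.
VOCAB = ["King", "Queen", "Prince", "Princess"]


def one_hot(word, vocab=VOCAB):
    """Return a list with a single 1 at the word's index and 0 elsewhere."""
    vector = [0] * len(vocab)
    vector[vocab.index(word)] = 1
    return vector


def dot(a, b):
    """Plain dot product of two equal-length lists."""
    return sum(x * y for x, y in zip(a, b))


print(one_hot("Queen"))                        # [0, 1, 0, 0]
print(len(one_hot("Queen")) == len(VOCAB))     # True: one slot per word
print(dot(one_hot("King"), one_hot("Queen")))  # 0: no similarity signal
```

With a 171,476-word vocabulary, the same function would return a list of 171,476 numbers for every word, which is the size problem described above.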

Here is where the idea of "word embeddings" enters the picture.

We know that the words King and Queen are related, just like Prince and Princess are. Word embeddings have a simple characteristic: related words should be close to each other, while words with different meanings should lie far away.

The attached image is a two-dimensional chart where I placed the words from our vocabulary.

Look at the image and something critical will become apparent:

King and Queen are close to each other, just like the words Prince and Princess are. This encoding captures a crucial characteristic of our language: related concepts stay together!

And this is just the beginning.

Notice what happens when we move on the horizontal axis from left to right: we go from masculine (King and Prince) to feminine (Queen and Princess). Our embedding encodes the concept of "gender"!

And if we move on the vertical axis, we go from a Prince to a King and from a Princess to a Queen. Our embedding also encodes the concept of "age"!

We can derive the new vectors from the coordinates of our chart, writing the vertical (age) value first and the horizontal (gender) value second:

• King → [3, 1]

• Queen → [3, 2]

• Prince → [1, 1]

• Princess → [1, 2]

The first component represents the concept of "age": King and Queen have a value of 3, indicating they are older than Prince and Princess with a value of 1.

The second component represents the concept of "gender": King and Prince have a value of 1, indicating male, while Queen and Princess have a value of 2, indicating female.
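As a rough illustration, the four vectors above can be compared in Python. This sketch assumes Euclidean distance as the measure of "closeness"; the thread does not name a metric, and production systems often use cosine similarity instead. The names `EMBEDDINGS`, `distance`, `nearest`, and `analogy` are hypothetical.

```python
import math

# The toy two-dimensional embedding from the thread: (age, gender).
EMBEDDINGS = {
    "King": (3, 1),
    "Queen": (3, 2),
    "Prince": (1, 1),
    "Princess": (1, 2),
}


def distance(a, b):
    """Euclidean distance between two words (an assumed metric)."""
    return math.dist(EMBEDDINGS[a], EMBEDDINGS[b])


def nearest(word):
    """Return the other word whose vector lies closest to `word`."""
    others = [w for w in EMBEDDINGS if w != word]
    return min(others, key=lambda w: distance(word, w))


def analogy(a, b, c):
    """Compute a - b + c component-wise and return the closest word."""
    target = tuple(
        x - y + z
        for x, y, z in zip(EMBEDDINGS[a], EMBEDDINGS[b], EMBEDDINGS[c])
    )
    return min(EMBEDDINGS, key=lambda w: math.dist(EMBEDDINGS[w], target))


print(distance("King", "Queen"))             # 1.0
print(nearest("Prince"))                     # Princess
print(analogy("King", "Prince", "Princess")) # Queen
```

The last call shows why the layout matters: subtracting Prince from King isolates the "age" offset, and adding it to Princess lands exactly on Queen.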

I used two dimensions for this example because we only have four words, but using more would allow us to represent other practical concepts besides gender and age.

For instance, GPT-3 uses 12,288 dimensions to encode its vocabulary. That's a lot!

Text Embeddings are the backbone of some of the most impressive generative AI models we use today.

I love explaining Machine Learning and Artificial Intelligence ideas. If you enjoy in-depth content like this, follow me @svpino so you don't miss what comes next.

While I'm on the topic of AI/GPT (see last tweet), if you haven't tried GitHub Copilot, you should. Natural language -> source code in seconds. (Ctrl+Enter to browse results.) Plus, it can translate code between languages, or document your code & more github.com/features/copil…

Highly recommended viewing to understand the impact AI/LLMs are going to have on computing in the coming years. Feels like we're at a tipping point: youtube.com/watch?v=qbIk7-…

IBM Wazi as a Service: Automate, Accelerate and Manage Your zOS Dev Test Cloud Infrastructure ibm.com/cloud/blog/ann…

@csantanapr A TV. Seriously, I use an Insignia 43". It is standard 4K, so it's not "ultra wide", but it's the equivalent of four 1080p monitors in a 2×2 grid. Just make sure you can force the TV HDMI mode to "HDMI2", otherwise you won't get high resolution.

Andrew Trice retweeted

Suggested Read: 5 Best Terraform Tools That You Need in 2022 buff.ly/3N6O7nx

@swelljoe @sandofsky Isn’t that how all databases/ledgers work? It’s just a decentralized ledger/db

@louwrentius @sandofsky I can see use cases for a secure, decentralized/distributed ledger (that’s all it is). Just without all the crypto hype.

. @github it seems like there have been a lot of issues/degraded performance in Actions this month, which is impacting team productivity and eroding trust. Can you share a post-mortem of the issues? githubstatus.com/history

Time-lapse & demo of my latest guitar build... a cigar box style guitar made from reclaimed wood from an old piano: youtu.be/SyMad356Alw

@thibault_imbert All the time. Problem is, I now have a sea of unread messages.
