Wes Wagner ☕️


@caffeinatedwes

I mostly ✍️ about technology, business, and ai. Occasional political RTs. VC-backed startup generalist ➡️ bootstrapped entrepreneur. 💼 @RarelyDecaf

Memory in the sense of recalling information is a solved problem, or at least as solved as it needs to be. That's why everyone is getting ~100% on all the meaningless "memory benchmarks". Memory in the sense of learning/improving over time is very much unsolved though.

no one has been able to solve ai memory yet. it’s brittle, it’s fragmented, & oftentimes less helpful than not using memory. it’s an incredibly fascinating problem, way more of an art than a science at this point.

5 minutes ago, @karpathy just dropped karpathy/jobs! he scraped every job in the US economy (342 occupations from BLS), scored each one's AI exposure 0-10 using an LLM, and visualized it as a treemap. if your whole job happens on a screen you're cooked. average score across all jobs is 5.3/10. software devs: 8-9. roofers: 0-1. medical transcriptionists: 10/10 💀 karpathy.ai/jobs

A new study from Anthropic finds that gains in coding efficiency when relying on AI assistance did not reach statistical significance, while AI use noticeably degraded programmers’ understanding of what they were doing. Incredible.

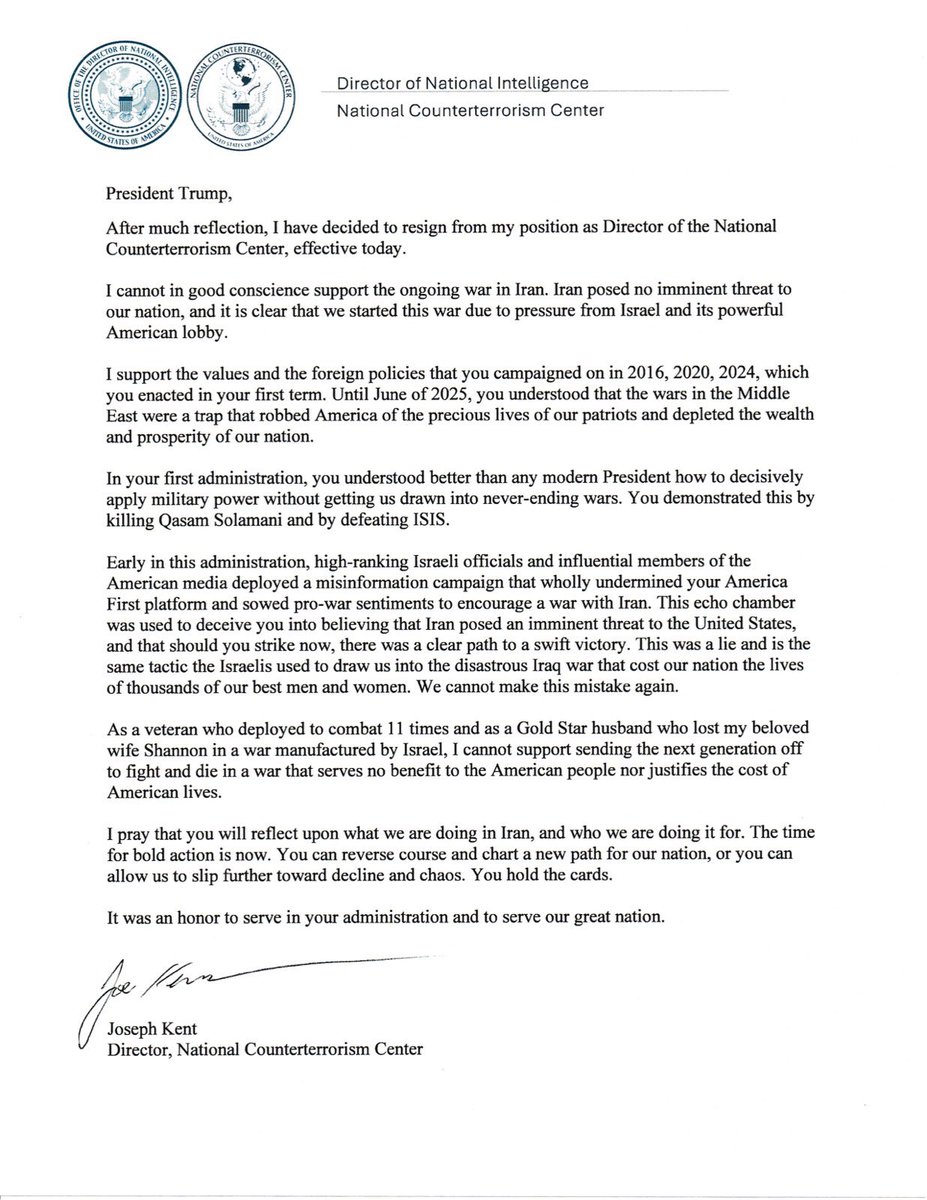

So he's flat out telling us that we're in a war with Iran because Israel forced our hand. This is basically the worst possible thing he could have said.

The headline says AI intensifies work. What the study actually found is more interesting than that.
Berkeley researchers tracked 200 employees for 8 months. AI made every single one of them more capable. They wrote code they couldn’t write before. They took on tasks they used to outsource. They moved faster on work that would have sat in a backlog for months.
And then they burned out.
Because the company changed nothing else. The org handed people a tool that 10x’d their ability to start new work, then kept the org chart, meeting cadence, review processes, and scope boundaries completely identical. Zero workflow redesign.
This is like giving everyone a car and keeping the speed limit signs from the horse-and-buggy era. People drove faster because they could, and crashed because nobody updated the roads.
The self-reinforcing cycle the researchers found is worth sitting with: AI accelerated tasks → raised speed expectations → workers leaned harder on AI → scope expanded → wider scope created more work → more work demanded more AI. That loop has no natural stopping point, and the company never installed one.
Meanwhile, a separate NBER study across thousands of workplaces found productivity gains of just 3%. And an Upwork survey found 77% of employees say AI tools actually decreased their productivity.
The pattern across all of this research is consistent: individual capability goes up, organizational design stays frozen, and the gap between the two creates burnout.
The study explicitly recommends that companies build an “AI practice” with structured reflection intervals and scope limits. The researchers aren’t saying AI failed. They’re saying management failed to adapt to AI.
Every CEO reading this headline as validation for slowing AI adoption is making exactly the wrong bet. The companies that win will be the ones that redesign the operating system around the intensity, not the ones that avoid it.