Juan Manuel Ciro

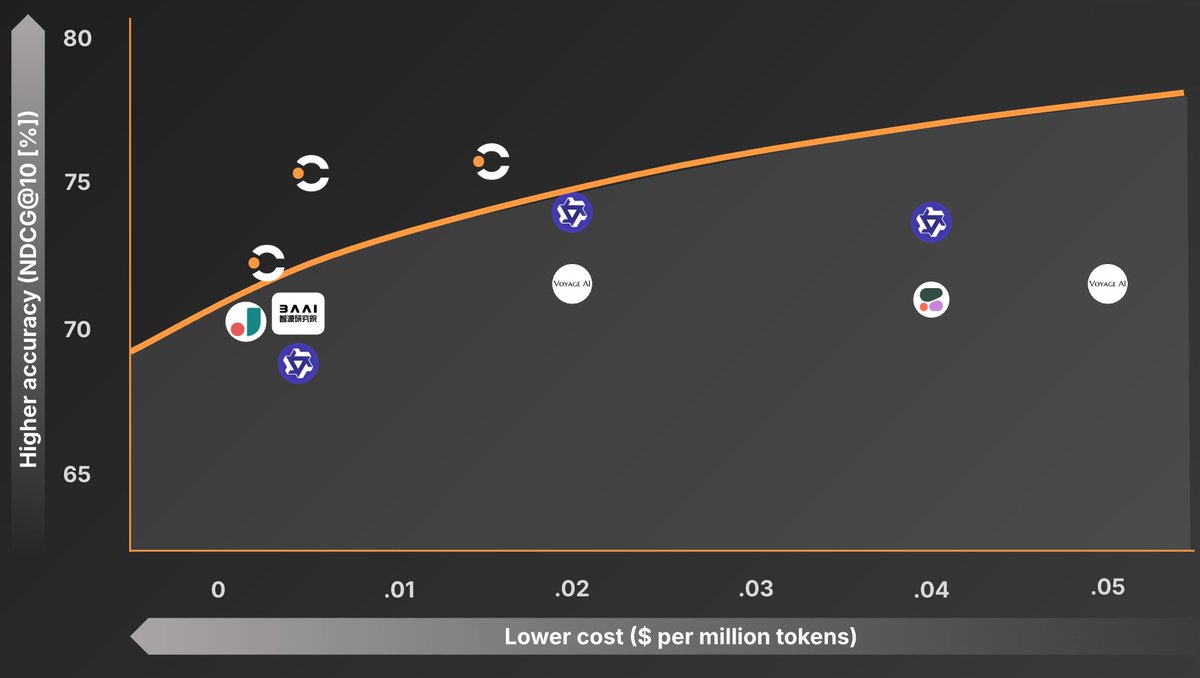

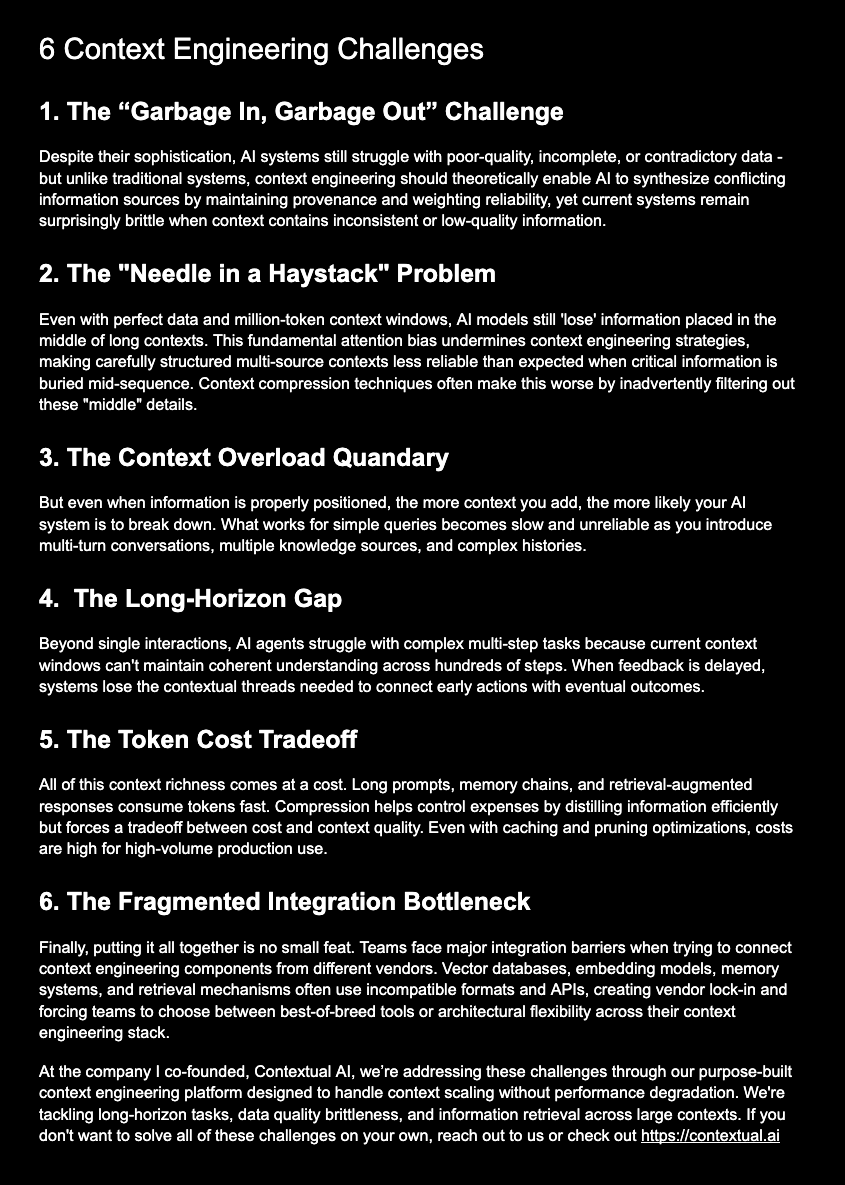

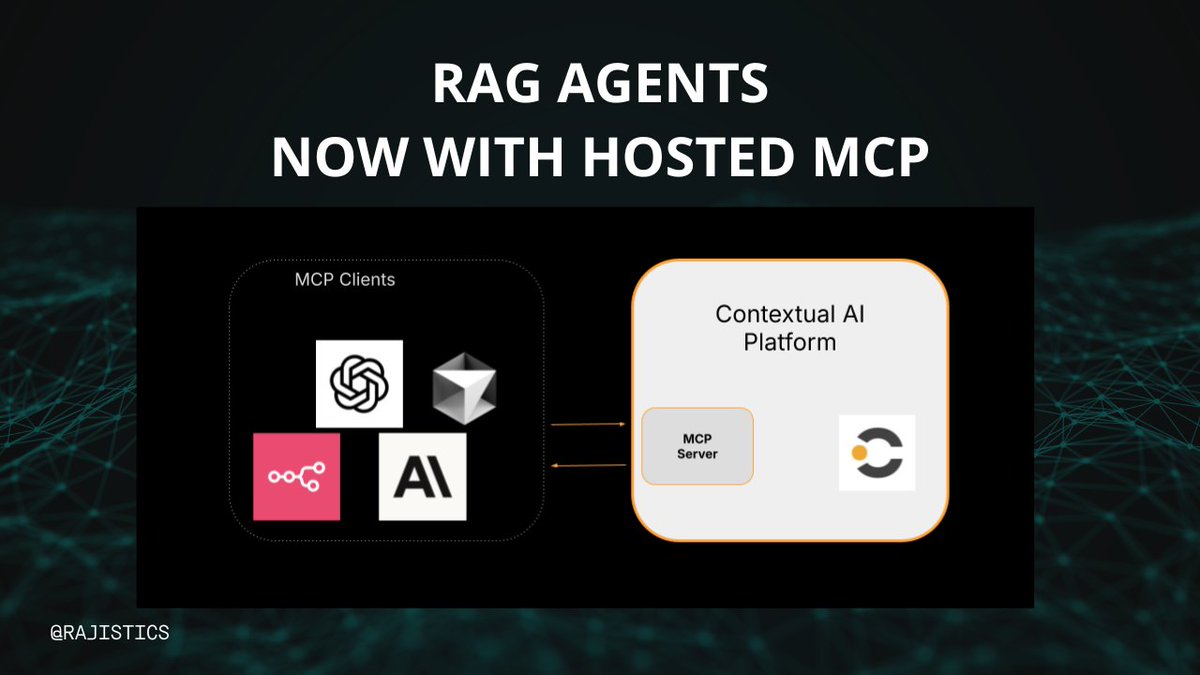

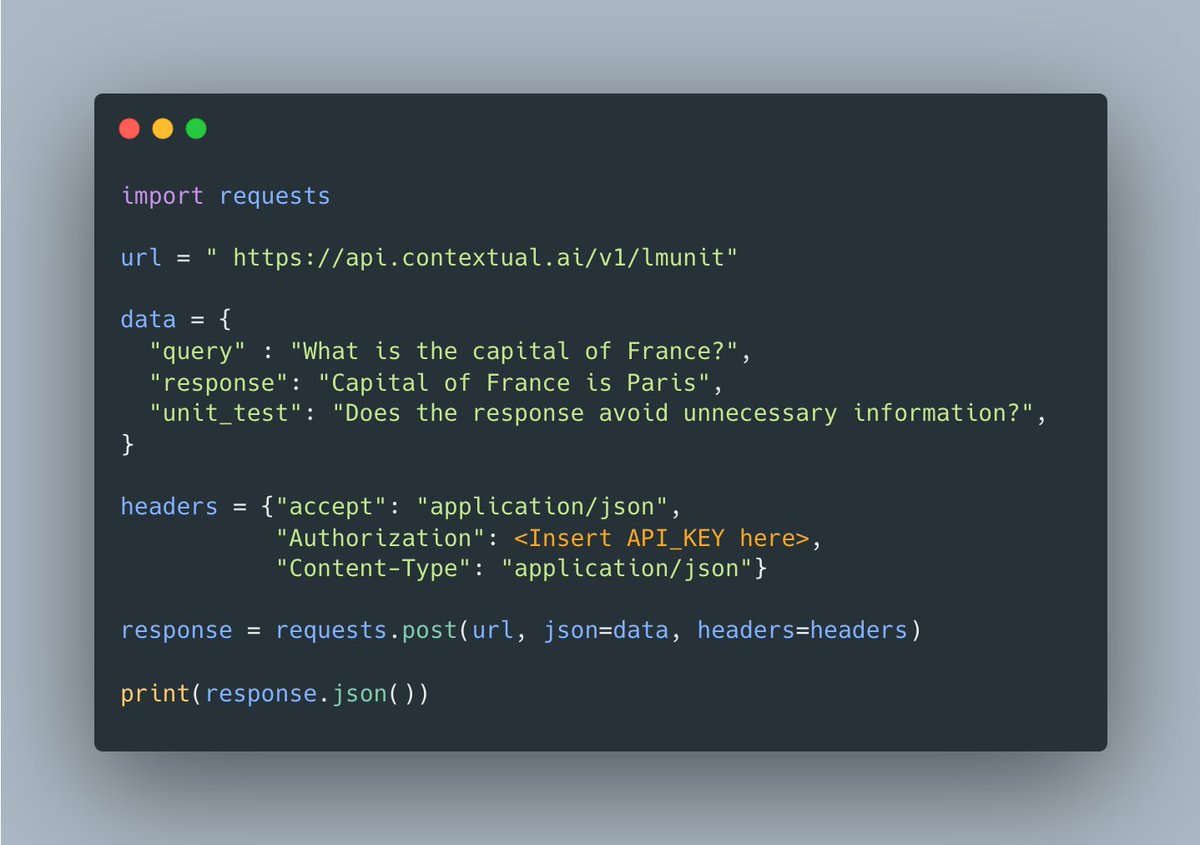

🔥 Introducing the most reliable way to evaluate LLMs and agents in production! It's time to stop “vibe testing” your AI systems. Our latest developer's guide shows you how to rigorously test AI systems so that they hold up in production, using Contextual AI's LMUnit evaluation model and @CircleCI’s CI/CD pipeline.

You’ll learn how to:

• Write natural language unit tests that anyone on your team can understand
• Leverage LMUnit, Contextual AI's state-of-the-art, specialized evaluation language model that outperforms frontier models with greater interpretability at lower cost
• Implement @CircleCI's CI/CD pipeline to catch regressions before they reach users

See our complete developer’s guide here: contextual.ai/blog/lmunit-ci…

Stop relying on "vibes" and start building AI you can trust!

#AITesting #LLMOps #DevOps #Agents #LLM #Evaluation

Announcing the NeurIPS 2024 Best Paper Awards: blog.neurips.cc/2024/12/10/ann…

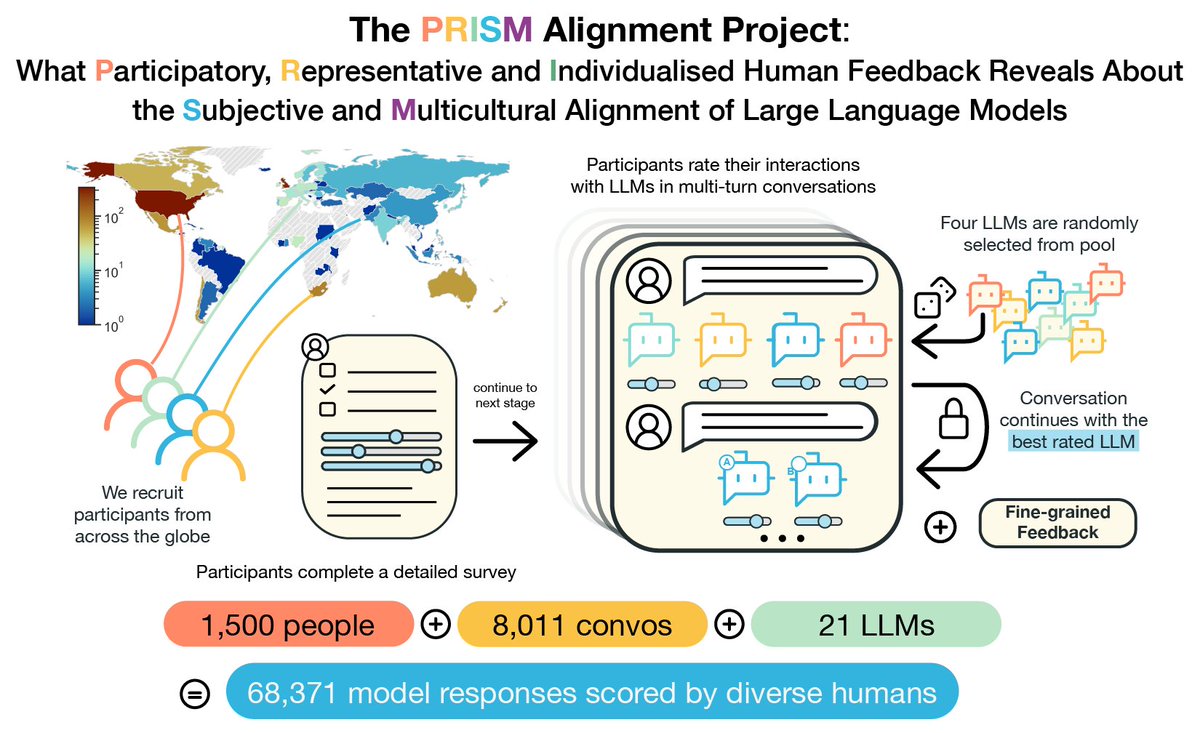

Today we're launching PRISM, a new resource to diversify the voices contributing to alignment. We asked 1500 people around the world for their stated preferences over LLM behaviours, then we observed their contextual preferences in 8000 convos with 21 LLMs: arxiv.org/abs/2404.16019

Today, we’re excited to announce RAG 2.0, our end-to-end system for developing production-grade AI. Using RAG 2.0, we’ve created Contextual Language Models (CLMs), which achieve state-of-the-art performance on a variety of industry benchmarks. CLMs outperform strong RAG baselines built using GPT-4 and top open-source models like Mixtral, according to our research and customers. Read more in our blog post: rag2.ai