Zaid Khan

@codezakh

NDSEG Fellow / PhD @uncnlp with @mohitban47, working on automating env/data generation + program synthesis. Formerly @allenai, @neclabsamerica.

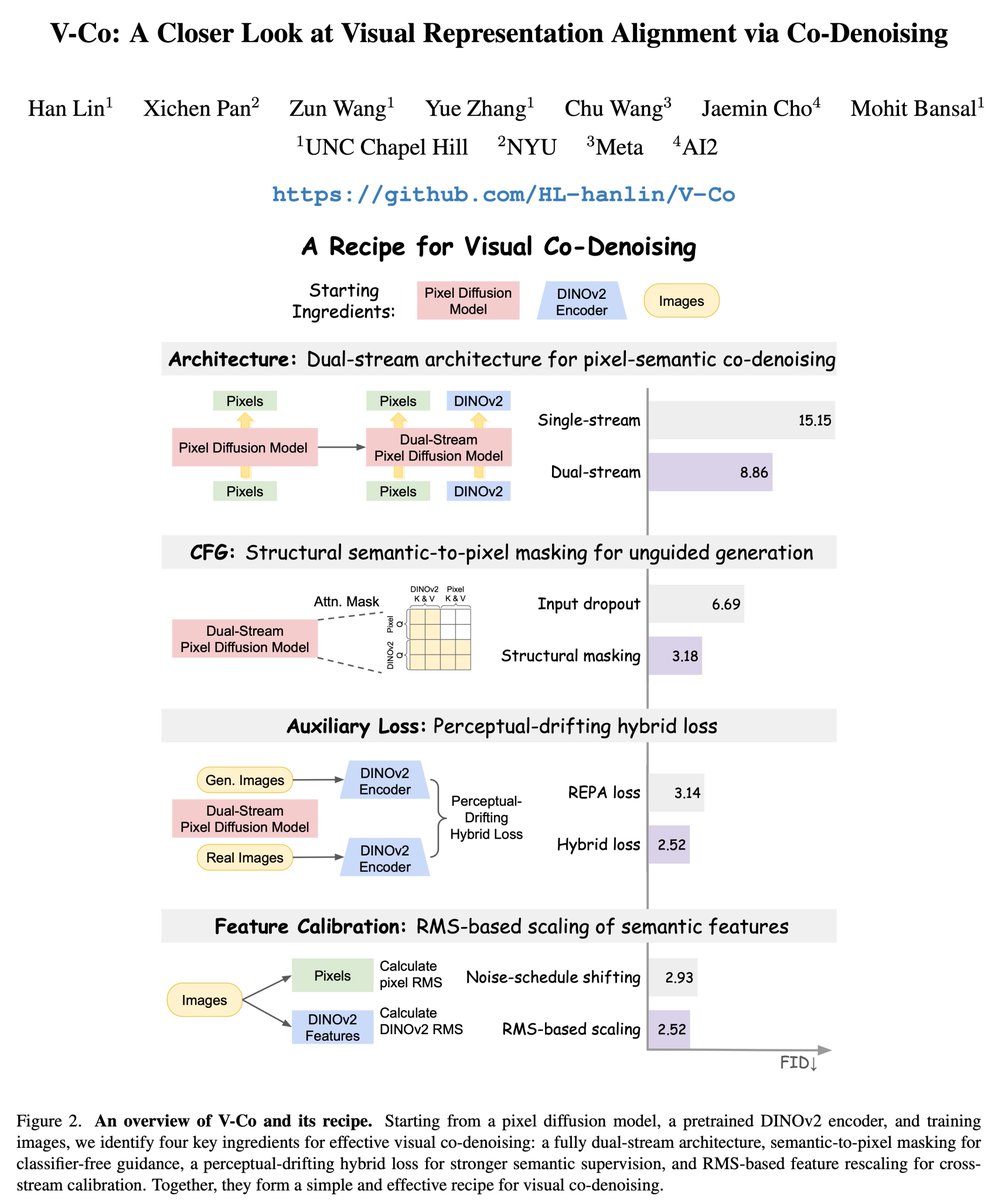

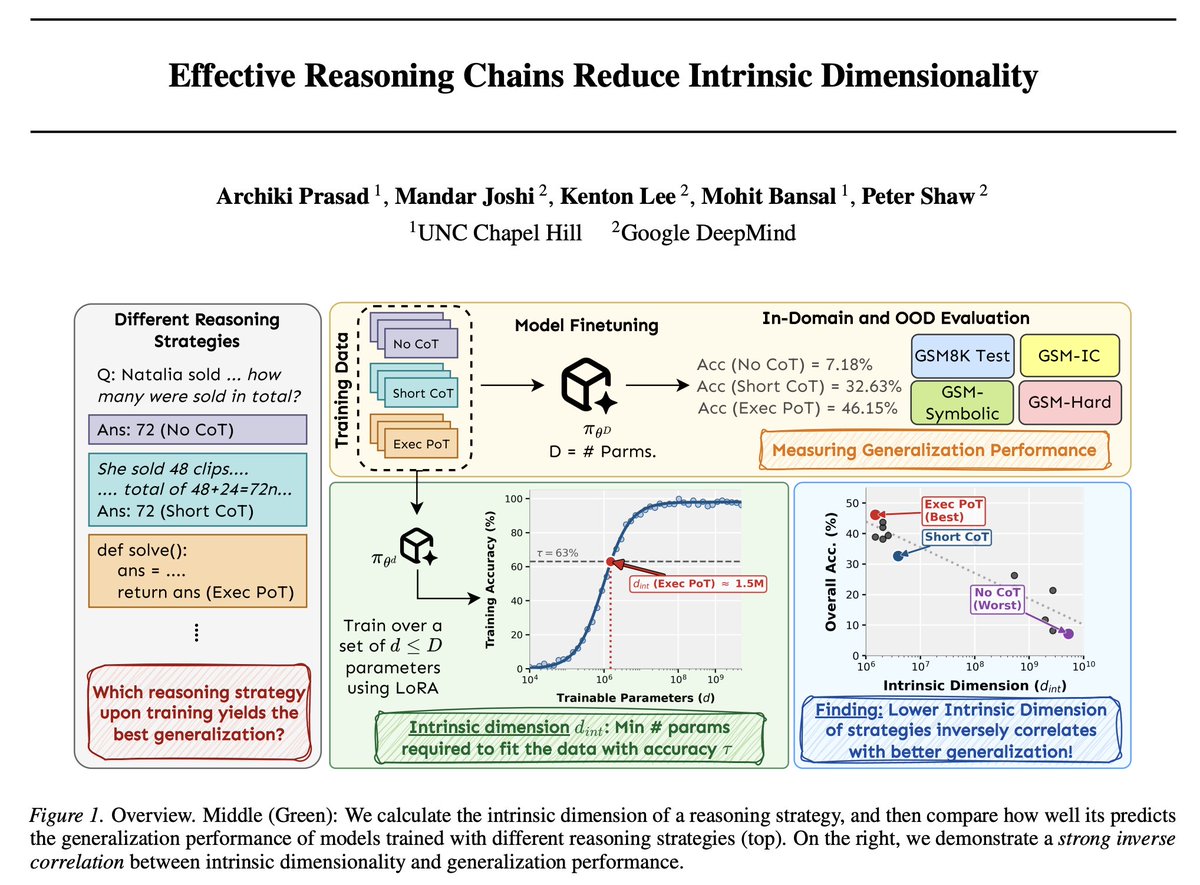

Ever come across a beautiful Figure 1 in a paper, only to wish you could easily edit and adapt it for your own use? Check out our new work, VFig: Vectorizing Complex Figures in SVG with Vision-Language Models! It is a specialized VLM that converts any diagram, simple or complex, into clean, editable SVG code. Built on Qwen3-VL 4B with SFT & RL, it matches GPT5.2’s performance on converting complex diagrams into SVG code and outperforms open-source generalists and specialists on simple-to-complex diagram vectorization. 🕹️ Try it now on our demo: tinyurl.com/vfig-demo

Introducing Ego2Web from Google DeepMind and UNC Chapel Hill, accepted to #CVPR2026. AI agents can browse the web. But can they act based on what you see? Existing benchmarks focus only on web interaction while ignoring the real world. Ego2Web bridges egocentric video perception and web execution, enabling agents that can see through first-person video, understand real-world context, and take actions on the web grounded in the egocentric video. This opens a path toward AI assistants that operate seamlessly across physical and digital environments. We hope Ego2Web serves as an important step for building more capable, perception-driven agents. 🧵👇

🤔 We rely on gaze to guide our actions, but can current MLLMs truly understand it and infer our intentions? Introducing StreamGaze 👀, the first benchmark that evaluates gaze-guided temporal reasoning (past, present, and future) and proactive understanding in streaming video settings.
➡️ Gaze-Guided Streaming Benchmark: 10 tasks spanning past, present, and proactive reasoning, from gaze-sequence matching to alerting when objects appear within the FOV area.
➡️ Gaze-Guided Streaming Data Construction Pipeline: We align egocentric videos with raw gaze trajectories using fixation extraction, region-specific visual prompting, and scanpath construction to generate spatio-temporally grounded QA pairs. This process is human-verified.
➡️ Comprehensive Evaluation of State-of-the-Art MLLMs: Across all gaze-conditioned streaming tasks, we highlight fundamental limits of current MLLMs. All MLLMs fall far below human performance. Models particularly struggle with temporal continuity, gaze grounding, and proactive prediction.

🚨 Excited to share AVIC, an analysis and framework for adaptive test-time scaling with world model imagination in visual spatial reasoning.
📉 Always-on visual imagination is often unnecessary, or even misleading.
📈 AVIC treats visual imagination as a selective, query-dependent test-time resource, showing that better spatial reasoning comes from deciding when and how much to imagine, not from imagining more.
➡️ Across spatial reasoning & embodied navigation, we get stronger accuracy with far fewer world-model calls and tokens. 🧵👇 [1/6]

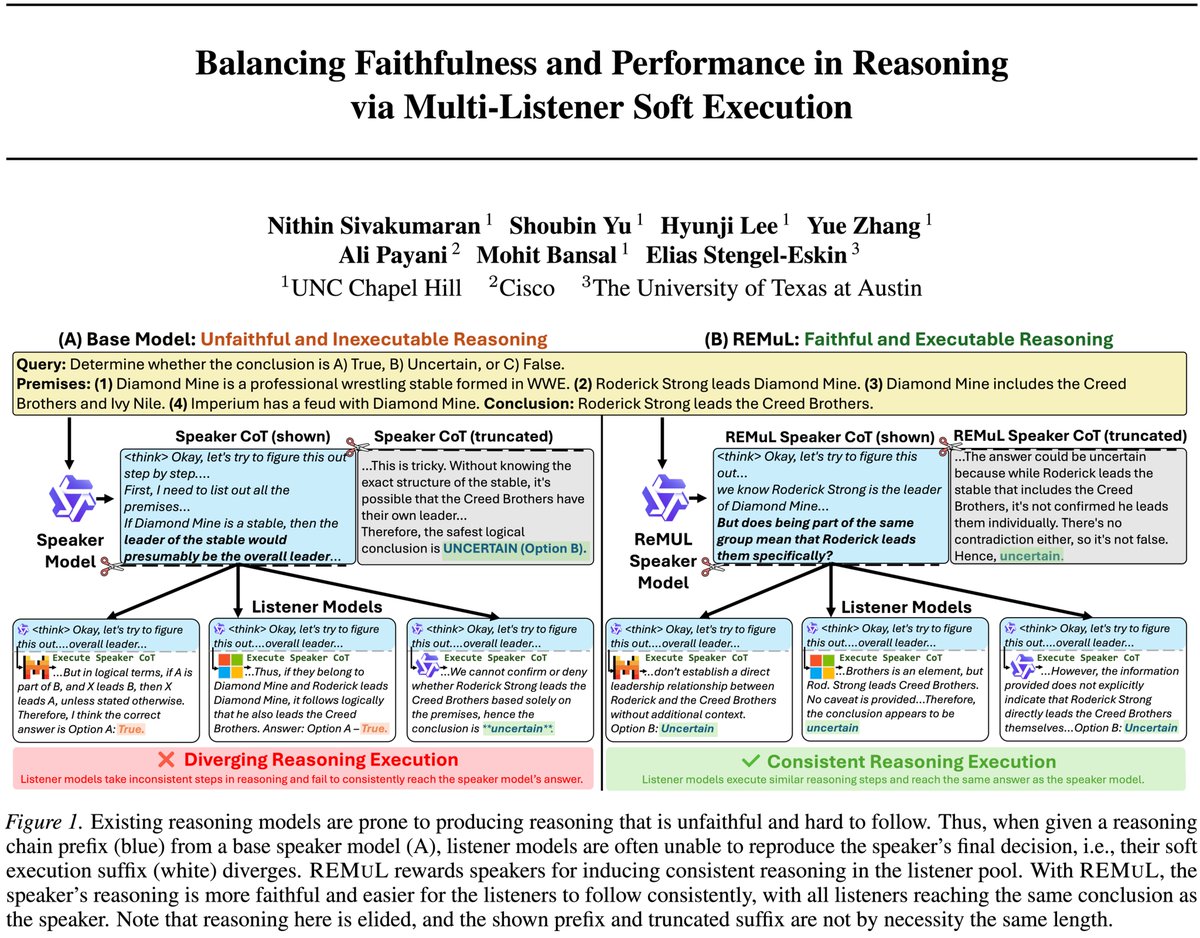

🚀 I'm on the 2026 Research Scientist Job Market! I am a Google PhD Fellow at UNC (advised by @mohitban47). I work on Faithful and Multimodal AI, focusing on reducing hallucinations and improving reasoning in generation tasks by:
🔹 Faithfulness & Hallucination Mitigation: Developing metrics and methods to ensure model outputs are factually consistent (e.g., FactPEGASUS, PrefixNLI).
🔹 Fine-grained Attribution & RAG: Creating frameworks that allow models to cite their sources and reason transparently (e.g., GenerationPrograms, LAQuer).
🔹 Multimodal Reasoning & Retrieval: Grounding vision-language models to reduce hallucinations in cross-modal tasks (e.g., CLaMR, Contrastive Region Guidance).
Prev Intern: Google, Meta, Salesforce, Amazon.
🔗 meetdavidwan.github.io #NLP #AI #JobSearch