Daniel Bryan Goodman

2.5K posts

@dbgoodman

Immuno/Synth Bio/ML. PI @PennMedicine/@parkerici. Using DNA synth, multiplex assays & generative models to understand & engineer immune cells. @geochurch alum.

telling students in my class about the history of genomics

this is actually insane
> be tech guy in australia
> adopt cancer riddled rescue dog, months to live
> not_going_to_give_you_up.mp4
> pay $3,000 to sequence her tumor DNA
> feed it to ChatGPT and AlphaFold
> zero background in biology
> identify mutated proteins, match them to drug targets
> design a custom mRNA cancer vaccine from scratch
> genomics professor is “gobsmacked” that some puppy lover did this on his own
> need ethics approval to administer it
> red tape takes longer than designing the vaccine
> 3 months, finally approved
> drive 10 hours to get rosie her first injection
> tumor halves
> coat gets glossy again
> dog is alive and happy
> professor: “if we can do this for a dog, why aren’t we rolling this out to humans?”
one man with a chatbot, and $3,000 just outperformed the entire pharmaceutical discovery pipeline. we are going to cure so many diseases. I don't think people realize how good things are going to get

A small thank you to everyone using Claude: We’re doubling usage outside our peak hours for the next two weeks.

Empty drops in scRNA-seq uncover the surprising prevalence of sequestered neuropeptide mRNA and pervasive sequencing artifacts biorxiv.org/content/10.648… #biorxiv_bioinfo

I packaged up the "autoresearch" project into a new self-contained minimal repo if people would like to play over the weekend. It's basically nanochat LLM training core stripped down to a single-GPU, one file version of ~630 lines of code, then:
- the human iterates on the prompt (.md)
- the AI agent iterates on the training code (.py)

The goal is to engineer your agents to make the fastest research progress indefinitely and without any of your own involvement. In the image, every dot is a complete LLM training run that lasts exactly 5 minutes. The agent works in an autonomous loop on a git feature branch and accumulates git commits to the training script as it finds better settings (of lower validation loss by the end) of the neural network architecture, the optimizer, all the hyperparameters, etc. You can imagine comparing the research progress of different prompts, different agents, etc.

github.com/karpathy/autor…

Part code, part sci-fi, and a pinch of psychosis :)

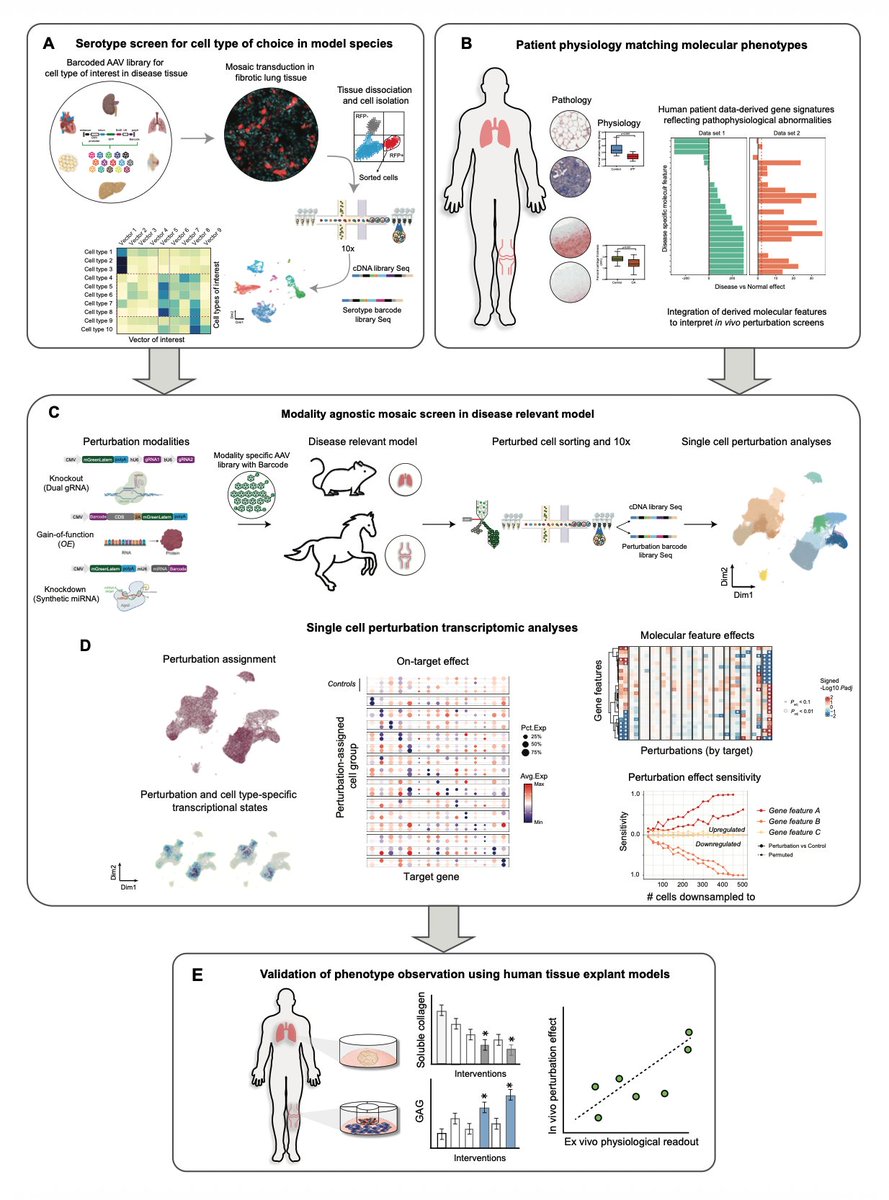
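
The loop described in the post above (run a fixed-budget experiment, keep the change only if validation loss drops) can be sketched roughly as follows. This is a toy illustration, not code from the repo: `run_experiment`, `propose`, and `autoresearch_loop` are hypothetical names, the "training run" is a made-up quadratic objective instead of a 5-minute LLM run, and the "agent" is random perturbation of two invented settings instead of an LLM editing a script on a git branch.

```python
import random


def run_experiment(config):
    """Hypothetical stand-in for one fixed-budget training run.

    Returns a fake validation loss that is lowest at lr=0.01, width=256.
    """
    return (config["lr"] - 0.01) ** 2 * 1e4 + (config["width"] - 256) ** 2 / 1e4


def propose(config, rng):
    """Hypothetical stand-in for the agent editing the training code:
    perturb exactly one setting of the current best configuration."""
    new = dict(config)
    if rng.random() < 0.5:
        new["lr"] = max(1e-5, new["lr"] * rng.choice([0.5, 0.8, 1.25, 2.0]))
    else:
        new["width"] = max(32, new["width"] + rng.choice([-64, -32, 32, 64]))
    return new


def autoresearch_loop(initial, n_runs, seed=0):
    """Greedy keep-if-better loop over experiments."""
    rng = random.Random(seed)
    best = dict(initial)
    best_loss = run_experiment(best)
    history = [best_loss]  # plays the role of the accumulated commits
    for _ in range(n_runs):
        candidate = propose(best, rng)
        loss = run_experiment(candidate)
        if loss < best_loss:  # "commit" only strict improvements
            best, best_loss = candidate, loss
            history.append(loss)
    return best, best_loss, history


if __name__ == "__main__":
    best, best_loss, history = autoresearch_loop({"lr": 0.1, "width": 64}, n_runs=200)
    print(best, best_loss, len(history))
```

Because only improvements are kept, `history` is strictly decreasing, which loosely mirrors the staircase of accepted runs one would expect in a plot of such a search.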

Excited to share VIPerturb-seq! New tech from my lab which aims to improve the cost, data quality, and efficiency of single-cell CRISPR screens so that they are accessible to any lab - even at genome-wide scale

Preprint and 🧵 (1/): biorxiv.org/content/10.648…