Kimi K2.6 is now available in Hermes Agent. Simply run `hermes update` and use `hermes model` to select a compatible provider hosting the model!

🇵🇪 Scandal in Peru: Piero Corvetto arrested for massive electoral fraud. Sabotage and conspiracy charges could put him in prison for 30 years. He prevented more than 1 million Lima residents from voting in order to favor the left-wing candidate @RobertoSanchP. Game Over.

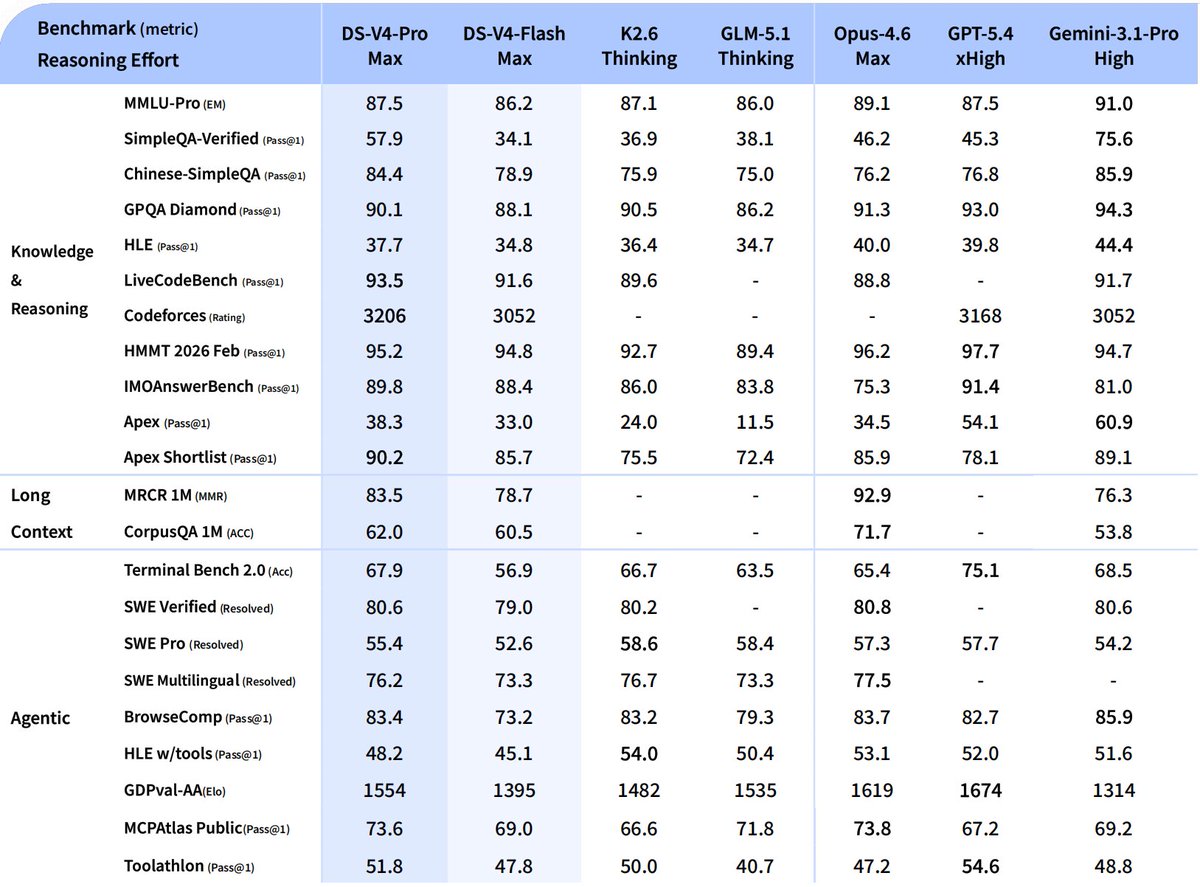

🚀 DeepSeek-V4 Preview is officially live & open-sourced! Welcome to the era of cost-effective 1M context length. 🔹 DeepSeek-V4-Pro: 1.6T total / 49B active params. Performance rivaling the world's top closed-source models. 🔹 DeepSeek-V4-Flash: 284B total / 13B active params. Your fast, efficient, and economical choice. Try it now at chat.deepseek.com via Expert Mode / Instant Mode. API is updated & available today! 📄 Tech Report: huggingface.co/deepseek-ai/De… 🤗 Open Weights: huggingface.co/collections/de… 1/n
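
To put the total/active parameter counts in perspective, here is a short Python sketch that computes the share of weights used per token and a rough per-token forward-pass cost. The "about 2 FLOPs per active parameter per token" rule is a common back-of-the-envelope approximation, not something the announcement states, and it ignores attention cost; the function names are hypothetical.

```python
# Parameter counts quoted in the announcement: (total, active per token).
MODELS = {
    "DeepSeek-V4-Pro": (1.6e12, 49e9),
    "DeepSeek-V4-Flash": (284e9, 13e9),
}


def active_fraction(total_params: float, active_params: float) -> float:
    """Share of the MoE weights that are used for each token."""
    return active_params / total_params


def approx_forward_flops_per_token(active_params: float) -> float:
    """Rule of thumb (assumption): ~2 FLOPs per active parameter per token.

    Ignores attention, so it underestimates cost at long context.
    """
    return 2.0 * active_params


for name, (total, active) in MODELS.items():
    print(f"{name}: {active_fraction(total, active):.1%} active, "
          f"~{approx_forward_flops_per_token(active):.2e} FLOPs/token")
```

Under these assumptions roughly 3.1% of V4-Pro's weights and 4.6% of V4-Flash's weights are active per token, although all of them still have to sit in memory.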

DeepSeek V4 hits it out of the park and addresses the HBM shortage. DeepSeek proves why it is such a fundamental research lab. In addition to exceeding Opus 4.6 on Terminal Bench and virtually matching it on other performance metrics, its most notable advance is this statement: "In the 1M-token context setting, DeepSeek-V4-Pro requires only 27% of single-token inference FLOPs and 10% of KV cache compared with DeepSeek-V3.2." To understand the significance of this point, consider the diagram below, which shows the memory layout for Prefill and Decode nodes. If you implement Decode with data and expert parallelism (DEP16) across 16 GPUs on a GB200 or GB300 NVL72 rack with DeepSeek V3.2, you are left with 104 GB or 176 GB of HBM per GPU, respectively. Here we assume the MoE parameters are in NVFP4. The remaining HBM per GPU dictates how large a batch you can run for inference, which determines how many concurrent requests you can serve. Consider GB300 with 176 GB left:

1. For 128K context, you need 4.45 GB of HBM for KV cache, and you can serve only 36 concurrent requests.
2. For 256K context, you need 8.90 GB of HBM for KV cache, and you can serve only 18 concurrent requests.
3. For 512K context, you need 17.80 GB of HBM for KV cache, and you can serve only 9 concurrent requests.
4. For 1M context, you need 35.60 GB of HBM for KV cache, and you can serve only 4 concurrent requests.

You see the point. Now imagine you somehow needed only a tenth of the KV cache at 1M! That basically lets you serve 10 times more requests with the same resources. Recall that Decode is memory-bound, not compute-bound, unlike Prefill. This is probably the most important contribution of DeepSeek V4. @teortaxesTex @jukan05 @zephyr_z9
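
The capacity arithmetic in the post can be sketched in Python, assuming capacity is simply free HBM divided by the per-request KV cache size. The 176 GB figure and the per-context KV sizes come from the post; the function and constant names are hypothetical. Plain division gives 39 and 19 requests at 128K and 256K, slightly more than the post's 36 and 18, so the post presumably reserves some headroom (for example for activations or other buffers), which this sketch does not model.

```python
import math

# Per-request KV cache sizes for DeepSeek-V3.2 quoted in the post (GB of HBM),
# for DEP16 decode on a GB300 NVL72 rack.
KV_GB_PER_REQUEST = {
    "128K": 4.45,
    "256K": 8.90,
    "512K": 17.80,
    "1M": 35.60,
}

FREE_HBM_GB = 176.0  # HBM left per GB300 GPU after weights, per the post


def max_concurrent(free_gb: float, kv_gb_per_request: float) -> int:
    """Upper bound on concurrent requests: whole KV caches that fit in free HBM.

    Ignores activations, fragmentation and other runtime buffers.
    """
    return math.floor(free_gb / kv_gb_per_request)


def scaled_kv(kv_gb: float, ratio: float) -> float:
    """KV cache size after a reduction ratio (e.g. 0.10 for '10% of KV cache')."""
    return kv_gb * ratio


if __name__ == "__main__":
    for ctx, kv in KV_GB_PER_REQUEST.items():
        print(f"{ctx:>5}: {max_concurrent(FREE_HBM_GB, kv)} requests")
    # V4-Pro case from the quoted claim: 10% of V3.2's KV cache at 1M context.
    v4_kv = scaled_kv(KV_GB_PER_REQUEST["1M"], 0.10)
    print(f"1M with 10% KV: {max_concurrent(FREE_HBM_GB, v4_kv)} requests")
```

In this simplified model, applying the 10% ratio at 1M context raises the bound from 4 to 49 concurrent requests, roughly the order-of-magnitude gain the post describes.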

“The whole world should use a 1.6T model for free.” — Liang Wenfeng

Codeforces 3052 · SWE-bench Pro 52.6 · HLE 45.1 · long-context > Gemini 3.1 Pro

The API pricing for this model is: input $0.028 (cache hit) / $0.14 (cache miss), output $0.28. It supports up to 1M tokens, with no additional charges based on context length. Unless they have told some horrific benchmarking lies, this is insane.
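
A small Python cost estimator using the prices quoted above. The post does not state the billing unit; this sketch assumes USD per 1M tokens, the convention DeepSeek normally uses for API pricing, and the function name is hypothetical. Following the post, there is no context-length surcharge.

```python
# Prices quoted in the post, in USD.
# ASSUMPTION: prices are per 1M tokens; the post does not state the unit.
PRICE_INPUT_CACHE_HIT = 0.028
PRICE_INPUT_CACHE_MISS = 0.14
PRICE_OUTPUT = 0.28
TOKENS_PER_PRICE_UNIT = 1_000_000


def request_cost(input_tokens: int, cached_input_tokens: int, output_tokens: int) -> float:
    """Estimated USD cost of one request (no context-length surcharge)."""
    if not 0 <= cached_input_tokens <= input_tokens:
        raise ValueError("cached_input_tokens must be between 0 and input_tokens")
    uncached = input_tokens - cached_input_tokens
    cost = (
        cached_input_tokens * PRICE_INPUT_CACHE_HIT
        + uncached * PRICE_INPUT_CACHE_MISS
        + output_tokens * PRICE_OUTPUT
    )
    return cost / TOKENS_PER_PRICE_UNIT


if __name__ == "__main__":
    # A 1M-token prompt with 900K tokens served from cache and 10K output tokens.
    print(f"${request_cost(1_000_000, 900_000, 10_000):.4f}")
```

Under that per-1M-token assumption, a full 1M-token prompt with 900K cached tokens and 10K output tokens costs about $0.042.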