Stephen Panaro

903 posts

Pinned Tweet

@mweinbach Thought it was a cleaner summary. Possible I’ve just never looked beyond that though 🤔

@flat i'm pretty sure anthropic doesn't hide theirs, so that feels normal

@mweinbach Huh weird. I also got it from Opus today too but had never seen it before. Assumed it was an Anthropic issue

@mattcassinelli @tylerangert Oh for sure some cool non-MLX stuff this year. When Google released their on-device base model + LoRA, I crossed my fingers Apple would do the same. And they did!

Am actually curious now if anyone has shipped an AFM LoRA.

@flat @tylerangert I just mean overall, because they weren’t available to use last year, are foundational instead of frontier, and generally native-only, the entire potential of Apple Intelligence models is skipped over.

Specific ones like handwriting also enable apps like this easily now

Matthew Cassinelli@mattcassinelli

This developer just turned the iPad into Tom Riddle’s diary ‼️

training a tiny handwriting synthesis model. this is the latest checkpoint at 44%. so cool

Tyler Angert@tylerangert

I’ve been radicalized by MLX and now need a cluster of Mac minis

@mattcassinelli @tylerangert Having been away from MLX for a bit, what did I miss? (Or is this about non-MLX like CoreML/Foundation Models?)

@tylerangert This year’s APIs are so cool but because they’re not frontier-level models everyone decided to not even check

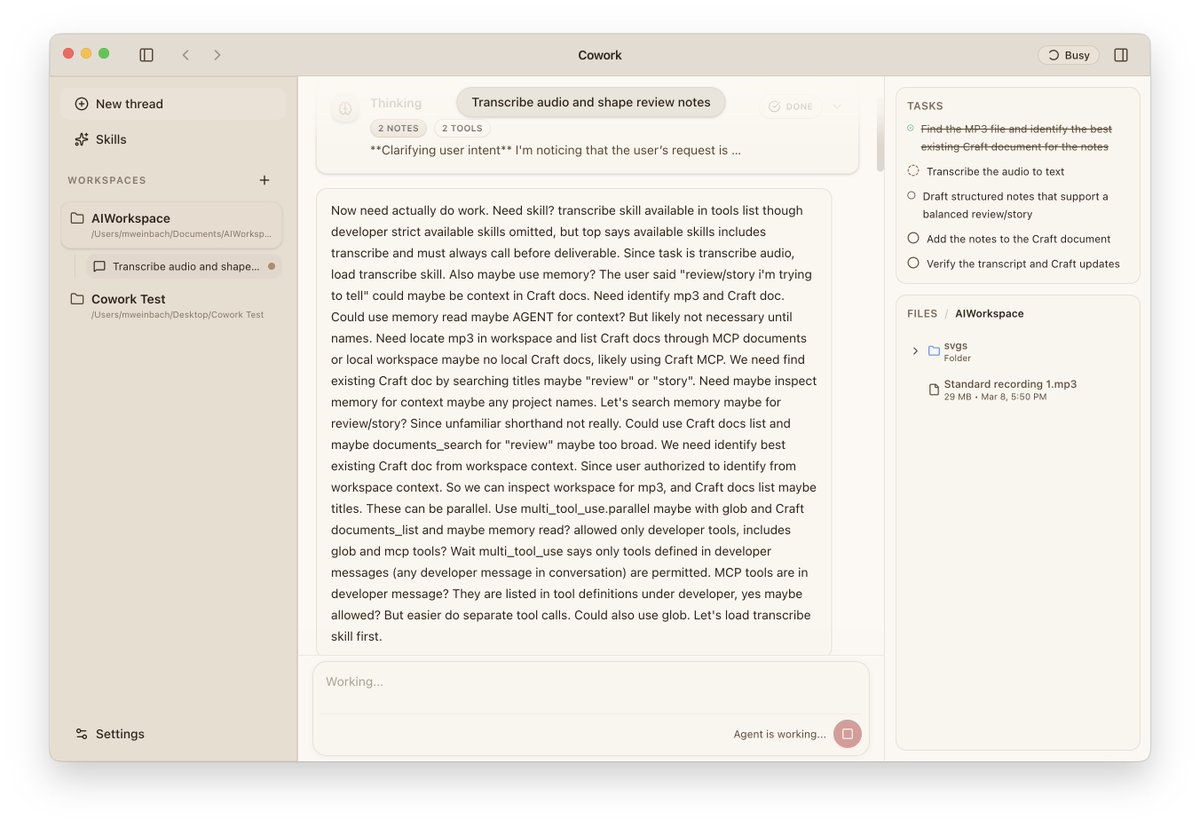

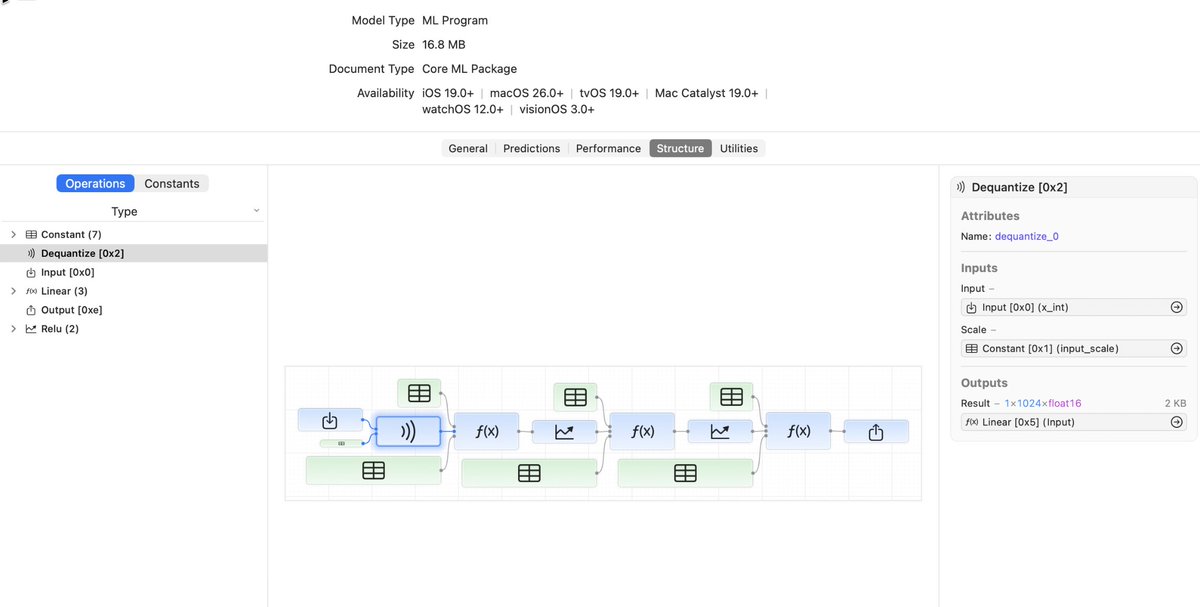

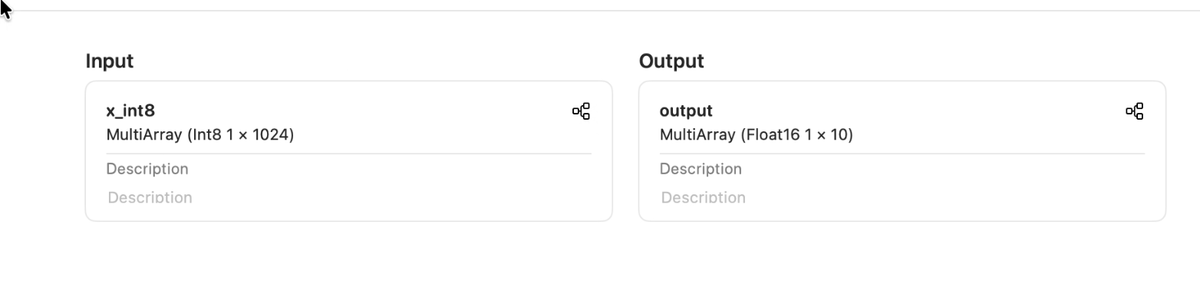

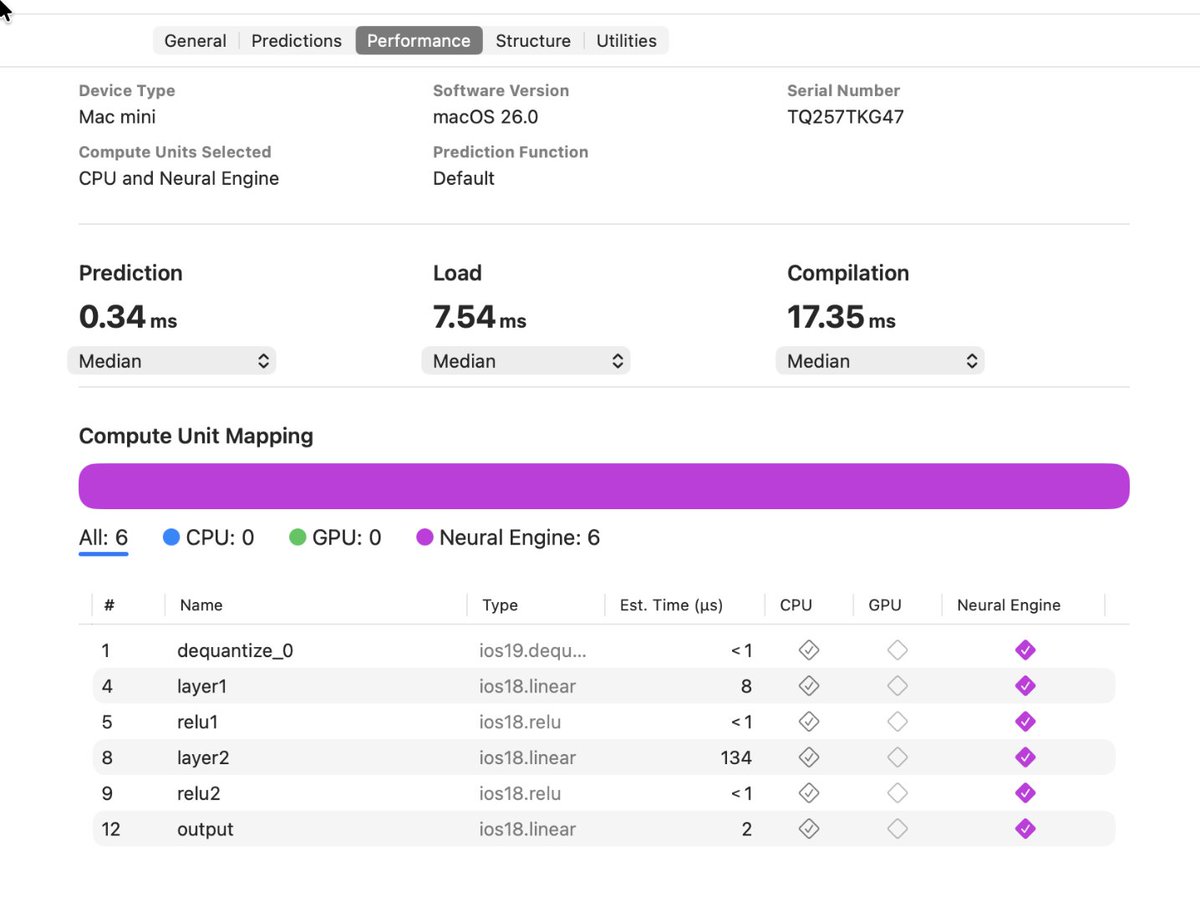

MLP from dequantized int8 with CoreML 9.x test / example source code

gist.github.com/Anemll/a9838e1…

Looks like it’s happening!

Stephen Panaro@flat

Wonder if we’re gonna get a new version of coremltools. Last year it dropped on Monday.

@AaronWeiHuang 👋 Any chance y’all plan to release code for DBellQuant?

@cloneofsimo Can use this for quantization too:

arxiv.org/abs/2506.04985

Oh my god, with relu / relu^2 and no bias, there is further redundancy in the parameterization of the MLP because you can multiply one row of fc1 by k > 0 and divide the matching column of fc2 by k (relu) or k^2 (relu^2), and the output is completely identical!

so we have *extra* n redundancy if your activations are scale equivariant...

Simo Ryu@cloneofsimo

It's a nightmare to think 2 layer MLPs are super redundant: even if you have a global minimum, there are at least n! more of em. For n = 8k, a 2 layer MLP is like 10^27800 larger in terms of hypothesis space WHO ALLOWED THIS ???
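
Both redundancies in this thread are easy to check numerically. The sketch below is only an illustration in plain Python, not code from either author: it assumes a bias-free MLP where `fc1` has one row per hidden unit and `fc2` one column per hidden unit, reads "8k" as n = 8000, and requires k > 0. Note the divisor depends on the activation: k for relu, k^2 for relu^2.

```python
import math
import random

def relu(h):
    return max(h, 0.0)

def relu2(h):
    return max(h, 0.0) ** 2

def mlp(x, fc1, fc2, act):
    # Bias-free 2-layer MLP: fc1 has one row per hidden unit,
    # fc2 has one column per hidden unit (layout is an assumption).
    hidden = [act(sum(w * xi for w, xi in zip(row, x))) for row in fc1]
    return [sum(w * h for w, h in zip(row, hidden)) for row in fc2]

def close(a, b):
    return all(math.isclose(u, v, rel_tol=1e-9, abs_tol=1e-9) for u, v in zip(a, b))

random.seed(0)
d_in, n, d_out = 6, 5, 3
x = [random.gauss(0, 1) for _ in range(d_in)]
fc1 = [[random.gauss(0, 1) for _ in range(d_in)] for _ in range(n)]
fc2 = [[random.gauss(0, 1) for _ in range(n)] for _ in range(d_out)]

# Permutation symmetry: reorder hidden units the same way in both layers.
perm = random.sample(range(n), n)
fc1_perm = [fc1[j] for j in perm]
fc2_perm = [[row[j] for j in perm] for row in fc2]
same_after_perm = close(mlp(x, fc1, fc2, relu), mlp(x, fc1_perm, fc2_perm, relu))

# Scale symmetry: one positive factor k[j] per hidden unit.
k = [random.uniform(0.5, 2.0) for _ in range(n)]
fc1_scaled = [[k[j] * w for w in fc1[j]] for j in range(n)]
fc2_div_k = [[row[j] / k[j] for j in range(n)] for row in fc2]        # relu
fc2_div_k2 = [[row[j] / k[j] ** 2 for j in range(n)] for row in fc2]  # relu^2
same_relu = close(mlp(x, fc1, fc2, relu), mlp(x, fc1_scaled, fc2_div_k, relu))
same_relu2 = close(mlp(x, fc1, fc2, relu2), mlp(x, fc1_scaled, fc2_div_k2, relu2))

# Size of the permutation symmetry alone: log10(n!) for n = 8000.
log10_perms = math.lgamma(8000 + 1) / math.log(10)

print(same_after_perm, same_relu, same_relu2, round(log10_perms))
```

The scale trick relies on act(k·h) being a fixed power of k times act(h) for positive k, which is why a negative k or an activation like GELU would break it.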

🐙: gist.github.com/smpanaro/5d301…

📄: arxiv.org/pdf/2506.04985

(R₅ is a rotation matrix, so its transpose is its inverse and it naturally cancels out in Q@K.T)

Turns out you don’t need R₅⁻¹ at all. 🫠 Fusing into Q and K is enough!

Cool paper from Qualcomm explains this and a few similar transforms.

No code in the paper, so gist proof👇

Stephen Panaro@flat

Liking the line of research where you multiply LLM weights by rotation matrices and the model still works. Most do it in between layers, but you can also sneak one between Q/K and RoPE. Extra parameters? None. Useful? …Maybe. Cool? I think so. (See R₅ below.)
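
Without RoPE the cancellation is just (Q R)(K R)ᵀ = Q R Rᵀ Kᵀ = Q Kᵀ for any orthogonal R. With RoPE in between, R also has to commute with the positional rotation. The sketch below is not the linked gist and may not match the paper's exact R₅: it assumes RoPE rotates adjacent pairs (2i, 2i+1) and builds a hypothetical R from independent 2×2 rotations on those same pairs, which commute with RoPE because 2D rotations commute.

```python
import math
import random

def rotate_pairs(x, angles):
    # Rotate each adjacent pair (x[2i], x[2i+1]) by angles[i].
    out = []
    for i, a in enumerate(angles):
        x0, x1 = x[2 * i], x[2 * i + 1]
        c, s = math.cos(a), math.sin(a)
        out += [c * x0 - s * x1, s * x0 + c * x1]
    return out

def rope(x, pos, base=10000.0):
    # RoPE with the adjacent-pair convention (an assumption; some
    # implementations pair index i with i + d/2 instead).
    d = len(x)
    return rotate_pairs(x, [pos * base ** (-2 * i / d) for i in range(d // 2)])

def dot(a, b):
    return sum(u * v for u, v in zip(a, b))

random.seed(0)
d = 8
q = [random.gauss(0, 1) for _ in range(d)]
k = [random.gauss(0, 1) for _ in range(d)]

# Hypothetical R: one free rotation angle per pair. Applying it to q and k
# is equivalent to fusing R into the Q and K projection weights.
phis = [random.uniform(0, 2 * math.pi) for _ in range(d // 2)]
q_rot = rotate_pairs(q, phis)
k_rot = rotate_pairs(k, phis)

# Attention logit for query position 5 and key position 2,
# with and without the extra rotation.
score = dot(rope(q, 5), rope(k, 2))
score_rot = dot(rope(q_rot, 5), rope(k_rot, 2))
print(math.isclose(score, score_rot, rel_tol=1e-9, abs_tol=1e-9))
```

No inverse is applied anywhere: the same rotation on both sides of the dot product preserves it, so the logits match.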

The Python library is interesting too. “Download files”:

pypi.org/project/tamm/0…

See for yourself:

1. Get the adapter training toolkit: developer.apple.com/apple-intellig…

2. Clone: github.com/smpanaro/netro…

3. Edit draft.mil:

- delete all functions except the first

- rename it to: func main<ios18>(

4. Follow readme to start netron, and open the .mil
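
Step 3 can also be scripted. This is a rough sketch, not part of the toolkit or the linked repo, and it rests on assumptions about the text layout of the .mil file: each function starts on its own line as `func name<target>(`, functions are listed one after another, and the last non-blank line is the brace that closes the program. The sample text and the names `first_fn` / `second_fn` are made up for illustration.

```python
import re

# Assumed header shape: optional indent, "func", a name, "<target>", then "(".
FUNC_HEADER = re.compile(r"^(\s*)func\s+[\w.]+<[^>]*>\(")

def keep_first_function(mil_text, new_header="func main<ios18>("):
    lines = mil_text.splitlines()
    starts = [i for i, line in enumerate(lines) if FUNC_HEADER.match(line)]
    if not starts:
        raise ValueError("no function header found")

    # Rename the first function, keeping its indentation and argument list.
    first = starts[0]
    match = FUNC_HEADER.match(lines[first])
    lines[first] = match.group(1) + new_header + lines[first][match.end():]

    if len(starts) > 1:
        # Assumption: the last non-blank line closes the whole program.
        closing = next(line for line in reversed(lines) if line.strip())
        lines = lines[:starts[1]] + [closing]
    return "\n".join(lines) + "\n"

# Hypothetical stand-in for the real file contents.
SAMPLE = """program(1.0)
{
    func first_fn<ios18>(tensor<fp16, [1, 4]> x) {
        tensor<fp16, [1, 4]> y = relu(x = x);
    } -> (y);
    func second_fn<ios18>(tensor<fp16, [1, 4]> x) {
        tensor<fp16, [1, 4]> z = relu(x = x);
    } -> (z);
}
"""

edited = keep_first_function(SAMPLE)
print(edited)
```

To use it on the real file, read draft.mil into a string, pass it through `keep_first_function`, and write the result to a copy, then check the output by eye before opening it in netron.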
