Aakash Gupta @aakashgupta

Emmett Shear just described the entire AI industry’s next interface layer in seven words.

What he calls “intents” lives in the gap between what you type and what you actually want. Prompts are instructions. Intents are outcomes. And the entire infrastructure stack is reorganizing around that difference right now.

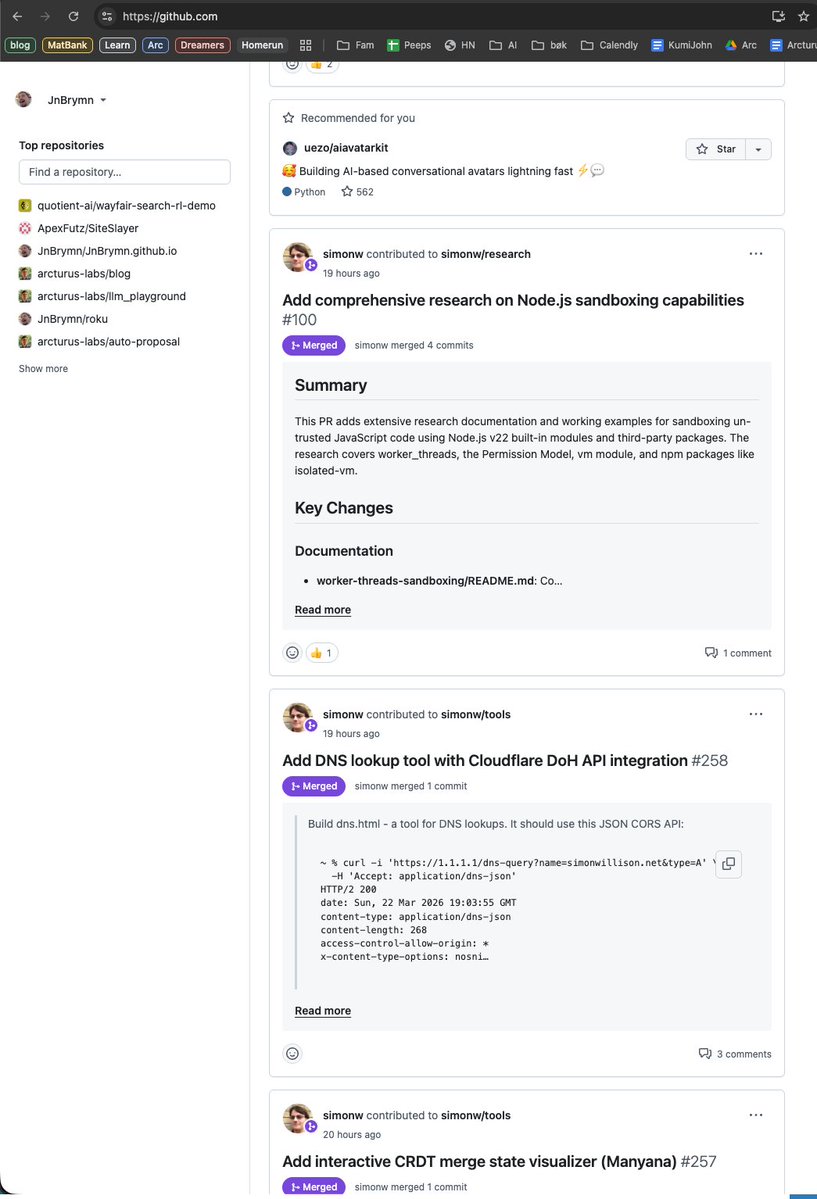

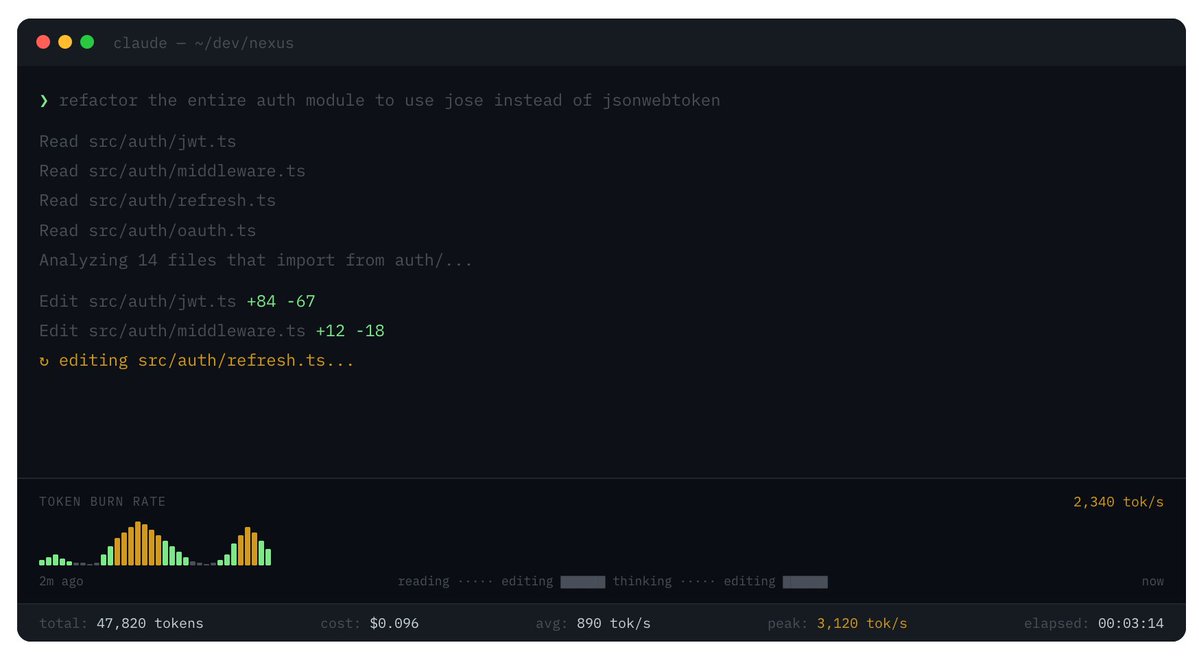

Anthropic just shipped “agent teams” in Opus 4.6, where 16 agents wrote a C compiler in Rust from scratch for $20,000. You don’t prompt 16 agents individually. You give them an intent and let them decompose the work. Claude’s “soul document” already operates this way internally. The model doesn’t follow a checklist of rules. It internalizes values, context, and goals so thoroughly that it can construct the right behavior for situations the rules never anticipated.

That’s the architecture Shear has been building toward at Softmax. His whole thesis on “organic alignment” is that you don’t control agents through instructions. You align them through shared goals. Cells in your body don’t need a prompt to avoid becoming cancerous. They’re aligned because their success is inseparable from the organism’s success.

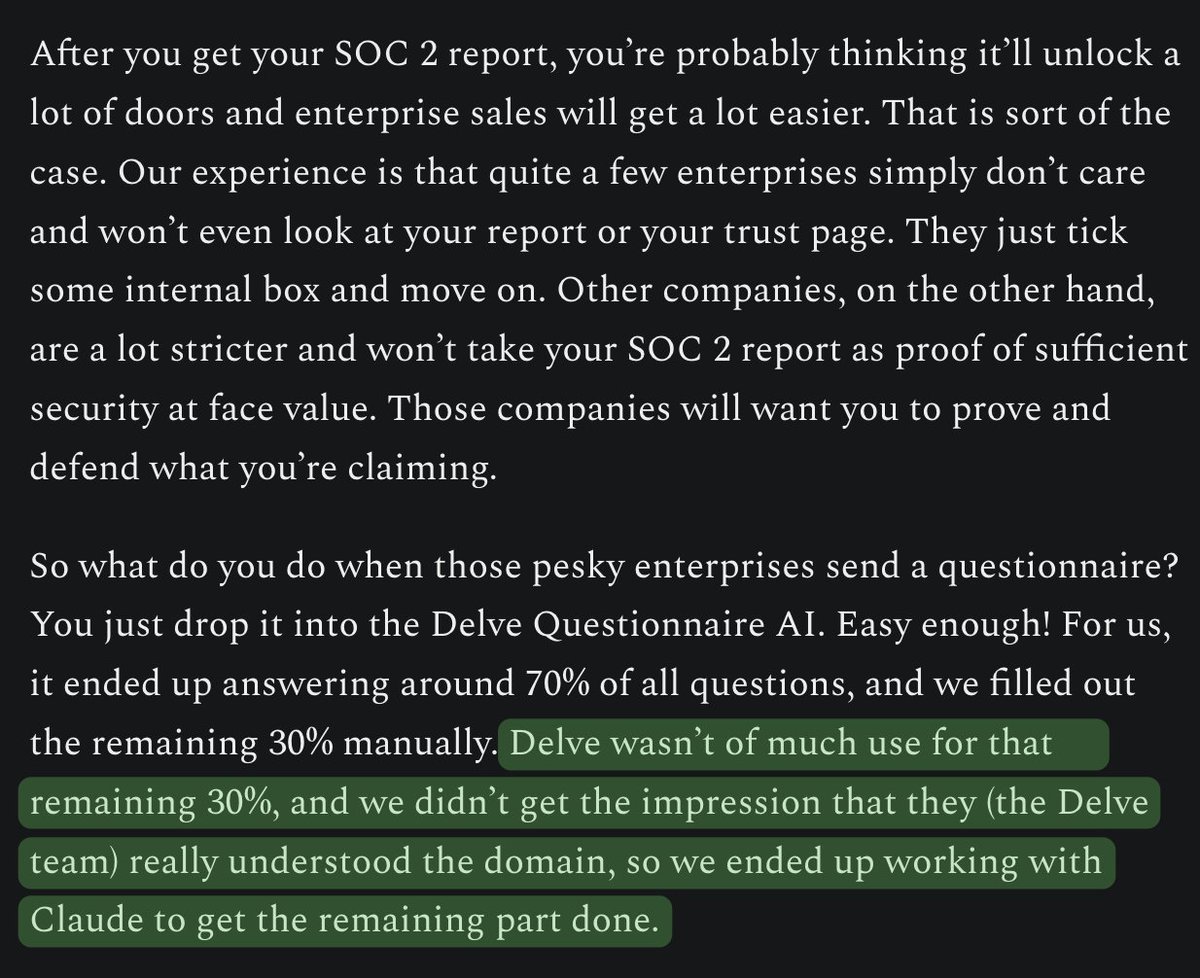

Amazon has thousands of agents in production right now. Their entire evaluation framework is built around “intent detection accuracy,” not prompt quality. Goldman Sachs is deploying Claude agents across accounting and compliance. They aren’t writing better prompts. They’re defining outcomes and letting the agents decompose the workflow.

The prompt era assumed a human would micromanage every step. Type a prompt, get a response, copy-paste it somewhere, notice an error, paste it back. That loop is what killed enterprise AI adoption for two years. Companies built thousands of “chat with your PDF” prototypes that were fun but operationally useless.

Intents break that loop. You specify what you want accomplished and the constraints it operates within. The agent handles decomposition, tool selection, error correction, and execution. The human role shifts from writer to editor, from coder to architect.
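To make the shape of that concrete, here is a minimal Python sketch, assuming an intent is nothing more than a desired outcome plus a list of constraints. `Intent`, `decompose`, and `pursue` are hypothetical names for illustration, not any vendor's agent API. The decomposition is a fixed toy plan where a real agent would ask a model, and the "error correction" is just a bounded retry per step.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical type for illustration only; not any vendor's API.
@dataclass
class Intent:
    outcome: str                                          # what should be true when the work is done
    constraints: list[str] = field(default_factory=list)  # limits the agent must operate within

def decompose(intent: Intent) -> list[str]:
    # Toy fixed plan; a real agent would generate these steps with a model.
    return [f"{verb}: {intent.outcome}" for verb in ("gather inputs", "do the work", "check result")]

def pursue(intent: Intent,
           execute: Callable[[str, list[str]], bool],
           max_retries: int = 2) -> list[tuple[str, int]]:
    """Run each step, retrying failures; return (step, attempts used) pairs."""
    log = []
    for step in decompose(intent):
        for attempt in range(1, max_retries + 2):  # first try plus max_retries retries
            # The executor receives the constraints; a real one would enforce them.
            if execute(step, intent.constraints):
                log.append((step, attempt))
                break
        else:
            raise RuntimeError(f"gave up on step: {step}")
    return log

# Usage: a fake executor that fails the final check once, then succeeds.
failures = {"check result: reconcile Q3 invoices"}

def fake_execute(step: str, constraints: list[str]) -> bool:
    if step in failures:
        failures.discard(step)  # fail once, succeed on retry
        return False
    return True

intent = Intent("reconcile Q3 invoices", ["read-only access to the ledger"])
print(pursue(intent, fake_execute))
```

The human writes one `Intent`; the loop owns the steps and the retries. That is the writer-to-editor shift in miniature.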

Shear saw this before most people because his alignment research forced him to think about what happens when you can’t prompt your way to safety. If a system is capable enough to reason, model others, and take initiative, “do what I told you” breaks down. You need the system to understand what you meant. That’s intents.

The companies shipping agents in 2026 already know this. The ones still optimizing their system prompts are building for a paradigm that’s already dead.