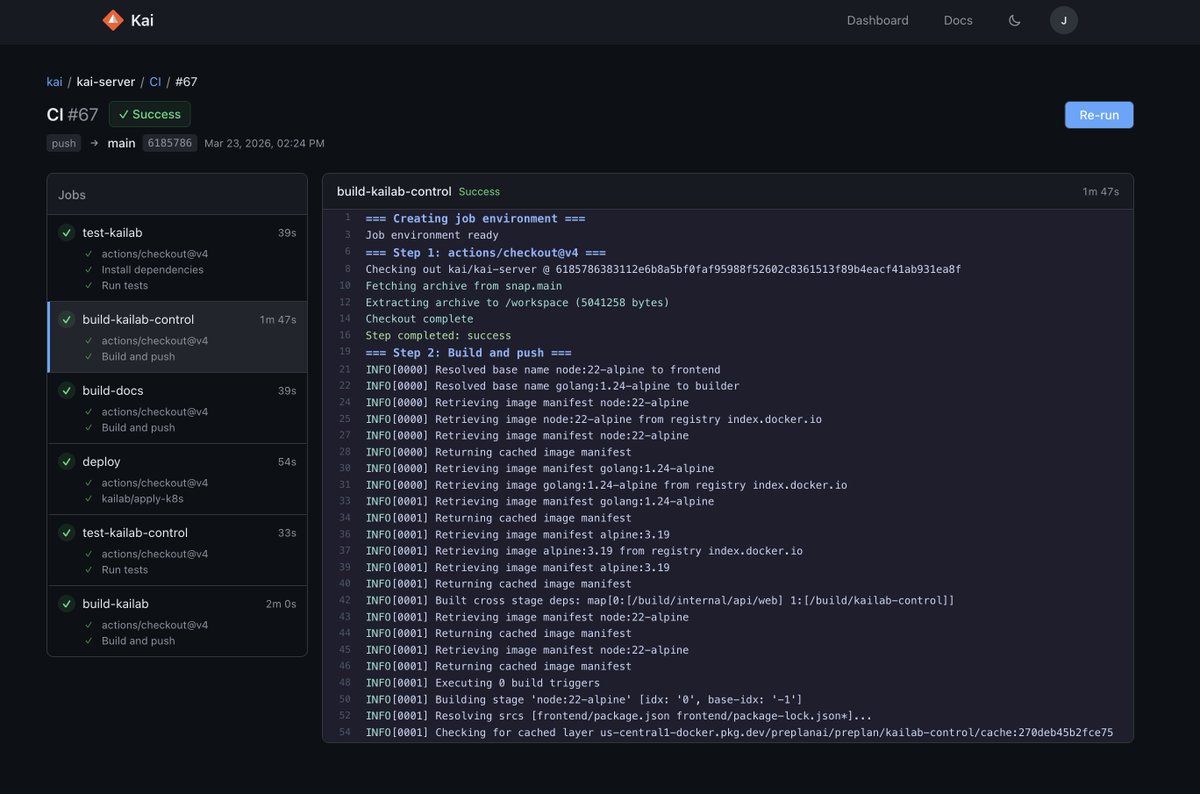

Shipped 10 releases for Kai this week (v0.9.14→0.9.23).

Now:

• capture/push = change-aware (not O(all files))

• CI skips unaffected work

• tracks AI vs human code

You + AI can actually understand your codebase.

github.com/kaicontext/kai…

Jacob

@jakecodes

Building Kai and 1Medium https://t.co/7FFToZ0W6Q https://t.co/6OIaxvug3H