Cheng-I Jeff Lai

@jefflai108

Voice AI @Meta | Ex @WaveFormsAI @GoogleDeepMind | PhD @MIT_CSAIL

After 2 wonderful years, I left Meta this week. During this time, I worked on several projects related to speech and LLMs:

- Built the first multi-channel audio foundation model with M-BEST-RQ (arxiv.org/abs/2409.11494)
- Made ASR with SpeechLLMs faster (arxiv.org/abs/2409.08148) and more accurate (ieeexplore.ieee.org/document/10890…)
- Shipped the first production-ready full-duplex voice assistant (about.fb.com/news/2025/04/i…)
- Improved Moshi’s reasoning capability with chain-of-thought (arxiv.org/abs/2510.07497)

I am grateful to my managers for having my back on critical projects, and fortunate to have collaborated with several brilliant researchers and engineers during this time. As to what's next, I am still in NYC and continuing to do speech research. More on that later!

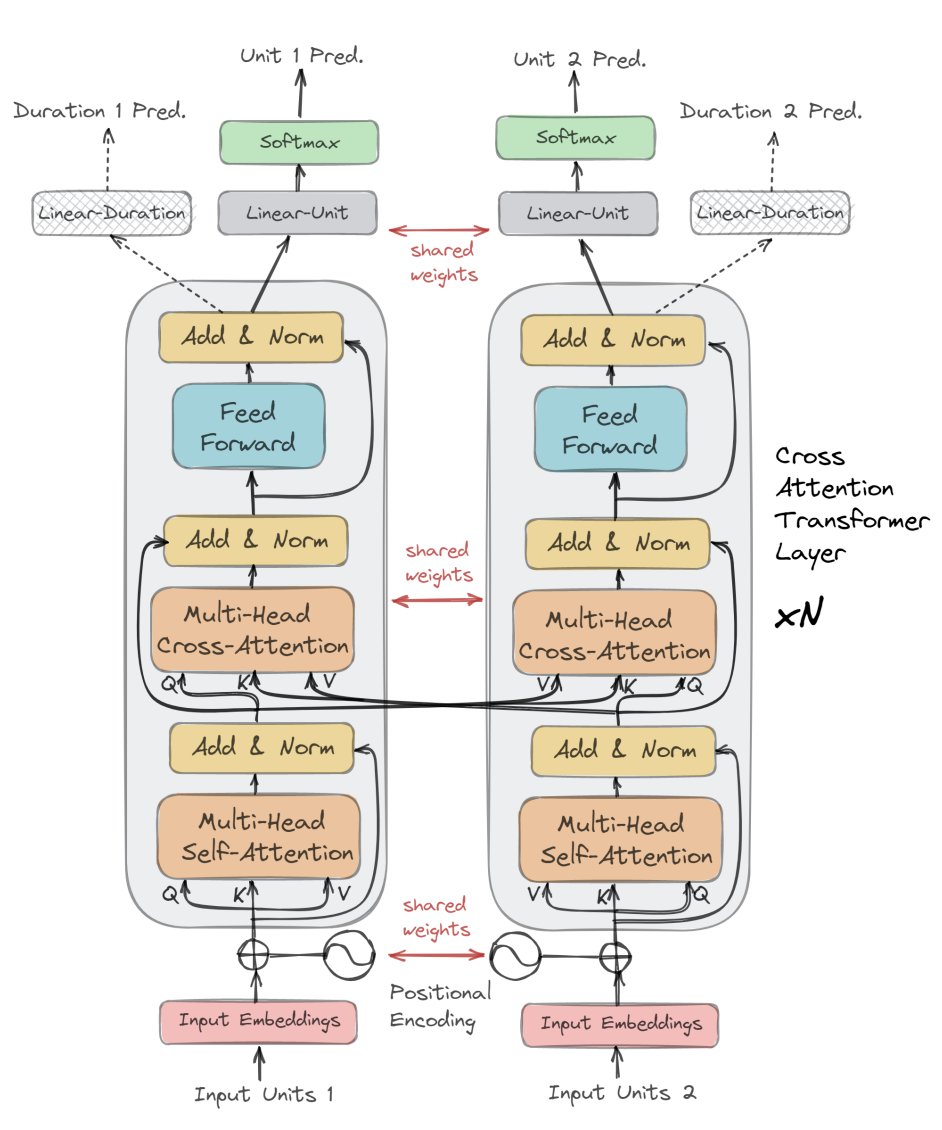

🚀 Full-duplex (FDX) models are all the rage in voice AI right now — but what is “full-duplex”?

Think of it like the difference between a normal phone call 📞 and a walkie-talkie 🎙️:

• Half-duplex = only one side talks at a time

• Full-duplex = both sides can speak simultaneously

User (U): u u u u u u - - - - - - - - - - - u u u

System (S): - - - - - - - - s s s s s s s s s s s --
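To make the timeline concrete, here is a minimal Python sketch that labels each frame by who is speaking. The frame counts are an assumption shaped like the diagram above (trimmed so both channels have 20 frames), and `classify_frames` and `is_half_duplex` are hypothetical helper names, not part of any library.

```python
from collections import Counter


def classify_frames(user: str, system: str) -> Counter:
    """Count frames by who is speaking; '-' means silence on that channel."""
    if len(user) != len(system):
        raise ValueError("both channels must cover the same number of frames")
    counts = Counter()
    for u, s in zip(user, system):
        user_on, system_on = u != "-", s != "-"
        if user_on and system_on:
            counts["overlap"] += 1
        elif user_on:
            counts["user_only"] += 1
        elif system_on:
            counts["system_only"] += 1
        else:
            counts["silence"] += 1
    return counts


def is_half_duplex(user: str, system: str) -> bool:
    """A conversation is half-duplex if the two channels never overlap."""
    return classify_frames(user, system)["overlap"] == 0


# Assumed frame counts, shaped like the diagram: the user barges in
# while the system is still talking.
user = "u" * 6 + "-" * 11 + "u" * 3
system = "-" * 8 + "s" * 11 + "-" * 1
counts = classify_frames(user, system)
print(dict(counts), "half-duplex:", is_half_duplex(user, system))
```

The overlapping frames are exactly what a walkie-talkie style system cannot represent, since it would have to wait for one side to finish.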

In theory, an FDX model tries to learn the joint probability P(U, S) — basically a language model over "user+system" token pairs.

Speech isn’t naturally discrete, so we use neural codecs 🎧 to turn waveforms into tokens (this itself is an entire field of research).
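As a rough illustration of what "turning waveforms into tokens" means, the sketch below maps each feature frame to the index of its nearest codebook vector. This is plain nearest-neighbour vector quantization with a hand-written codebook, a deliberately simplified stand-in and not a neural codec; the `quantize` helper, the codebook, and the frames are all made up for the example.

```python
from typing import List, Sequence, Tuple

Vector = Tuple[float, ...]


def quantize(frames: Sequence[Vector], codebook: Sequence[Vector]) -> List[int]:
    """Replace each frame with the index of the closest codebook entry."""
    tokens = []
    for frame in frames:
        # Squared Euclidean distance from this frame to every codebook entry.
        distances = [
            sum((x - c) ** 2 for x, c in zip(frame, code)) for code in codebook
        ]
        tokens.append(distances.index(min(distances)))
    return tokens


# Hypothetical 2-D codebook and feature frames, for illustration only.
codebook = [(0.0, 0.0), (1.0, 1.0), (-1.0, 1.0)]
frames = [(0.1, -0.1), (0.9, 1.2), (-0.8, 0.7), (0.2, 0.1)]
tokens = quantize(frames, codebook)
print(tokens)
```

Real codecs learn the encoder and the codebooks jointly, but the output has the same shape: a sequence of integer tokens that a language model can consume.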

👉 In practice, we don’t model the full joint probability. Since the user audio is available, we instead learn the conditional distribution: P(s_t | U_{<=t}, S_{
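The conditional above is cut off in the source. The sketch below assumes it continues as S_{<t}, meaning each system token is predicted from the user tokens up to and including step t and the system's own earlier tokens. `conditional_log_likelihood` and `toy_model` are hypothetical names, and the toy model is a hand-written rule, not a trained network.

```python
import math
from typing import Callable, Dict, Sequence

Dist = Dict[str, float]
Model = Callable[[Sequence[str], Sequence[str]], Dist]


def conditional_log_likelihood(
    model: Model, user: Sequence[str], system: Sequence[str]
) -> float:
    """Sum over t of log P(s_t | U_{<=t}, S_{<t}) (the S_{<t} part is assumed)."""
    if len(user) != len(system):
        raise ValueError("user and system streams must be frame-aligned")
    total = 0.0
    for t in range(len(system)):
        # The model sees user tokens up to t and system tokens before t only.
        dist = model(user[: t + 1], system[:t])
        total += math.log(dist[system[t]])
    return total


def toy_model(user_prefix: Sequence[str], system_prefix: Sequence[str]) -> Dist:
    """Hypothetical rule: mostly stay silent while the user is talking."""
    if user_prefix[-1] == "u":
        return {"-": 0.9, "s": 0.1}
    return {"-": 0.2, "s": 0.8}


user = list("uu--")
system = list("--ss")
ll = conditional_log_likelihood(toy_model, user, system)
print(f"log-likelihood: {ll:.4f}")
```

Training a real model would amount to maximizing this quantity over many conversations, with the rule-based `toy_model` replaced by a learned network.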

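Neural audio codec papers are nothing but comparisons of models trained on completely different data, each claiming "our model is the strongest!! 😤😤😤", and my MOS score for 😩😩😩😩😩😩😩😩😩😩😩 has hit 1000000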

We've raised $100M from Kleiner Perkins, Index Ventures, Lightspeed, and NVIDIA. Today we're introducing Sonic-3 - the state-of-the-art model for realtime conversation.

What makes Sonic-3 great:

- Breakthrough naturalness - laughter and full emotional range
- Lightning fast -

Several of my team members and I were impacted by today's layoff. Feel free to connect :)