Luis Chamba-Eras 🇪🇨

@lachamba

Prof. @UNLoficial @CisUnl | Leader @GiticUnl @dia4k12 | #running #trail🏃| I love my 👨👩👧👧

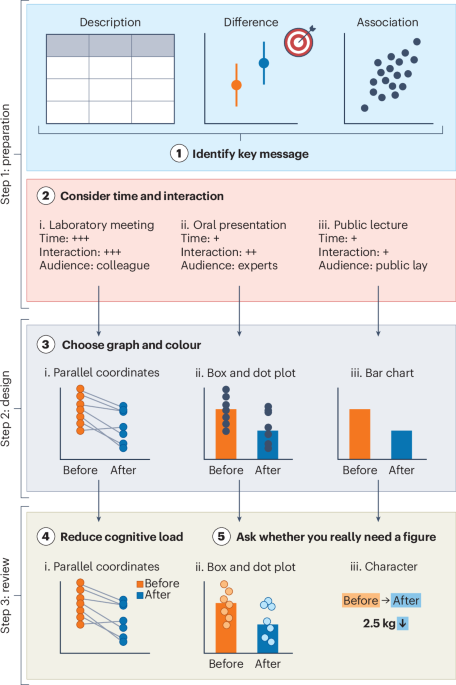

I’m thrilled to announce that my new comment, “How to design effective scientific figures”, has now been published in @NatureHumBehav. It provides five keys to making your graphical items attractive 🎨 Hope this is useful for your project! nature.com/articles/s4156…

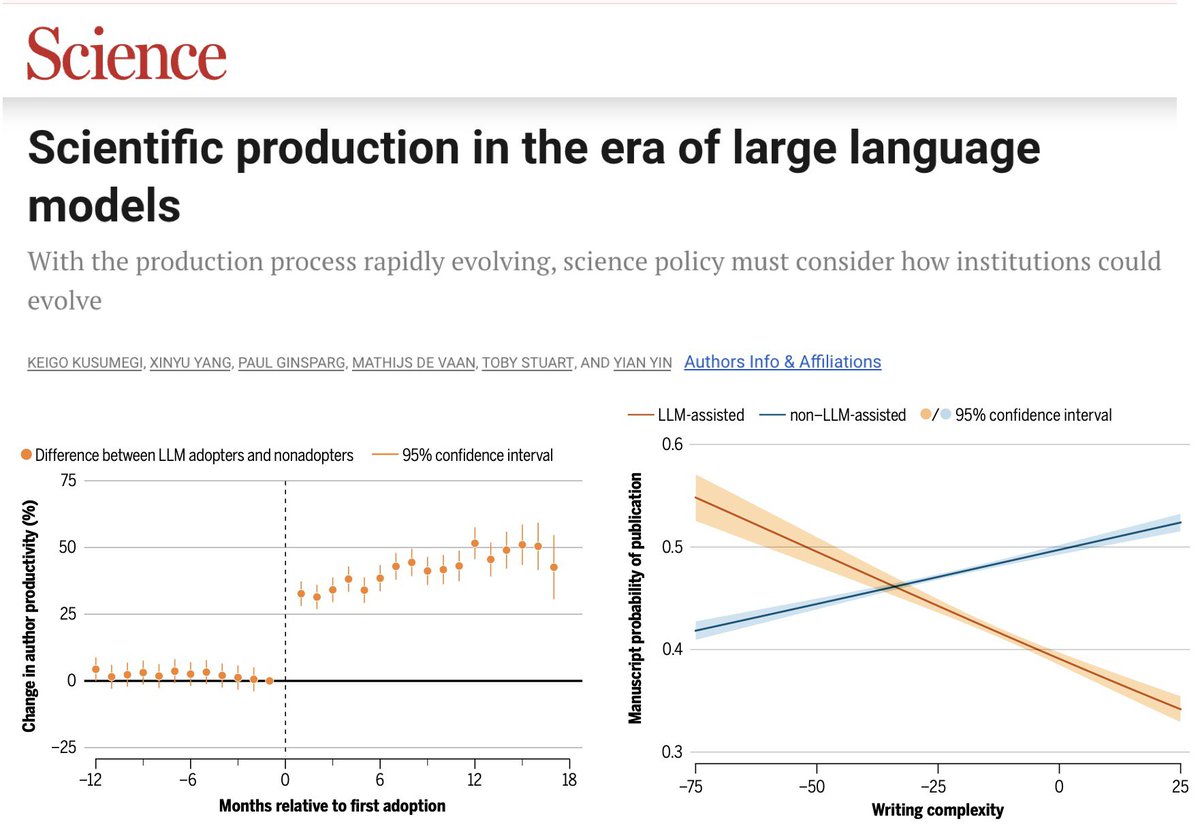

How are large language models impacting the submission and review process at high-impact journals? Severely.

Since the release of ChatGPT in 2022, AI-generated and AI-assisted papers, identified by Pangram, drove a 42% increase in submission volume at Organization Science (figure below). While the journal rejected the majority of these submissions, there is a human cost to reviewing papers, which volunteer reviewers are shouldering.

AI-generated content is also showing up in reviews, which similarly suffer in quality because of it -- editors at Organization Science found that AI-generated reviews are lower quality, less specific, and less topically diverse than human-written ones.

The problem is not isolated. Earlier this year, ICML desk-rejected 497 papers from authors who submitted AI-generated reviews, after those authors opted into a policy that disallowed the use of AI. Grant funders also saw a surge in applications: the Marie Skłodowska-Curie Actions, a set of major research fellowships for the EU, received 142% more proposals in 2025 compared to 2022.

Many scientific and academic systems implicitly rely on friction as a barrier to entry. LLMs have removed that friction, allowing for a deluge of AI slop that is straining the capacity of these institutions.