Sydney Runkle (@sydneyrunkle)

most of the time, you want an agent loop to run uninterrupted. that's where the utility comes from! but some decisions shouldn't be delegated to the agent. two situations come up consistently:

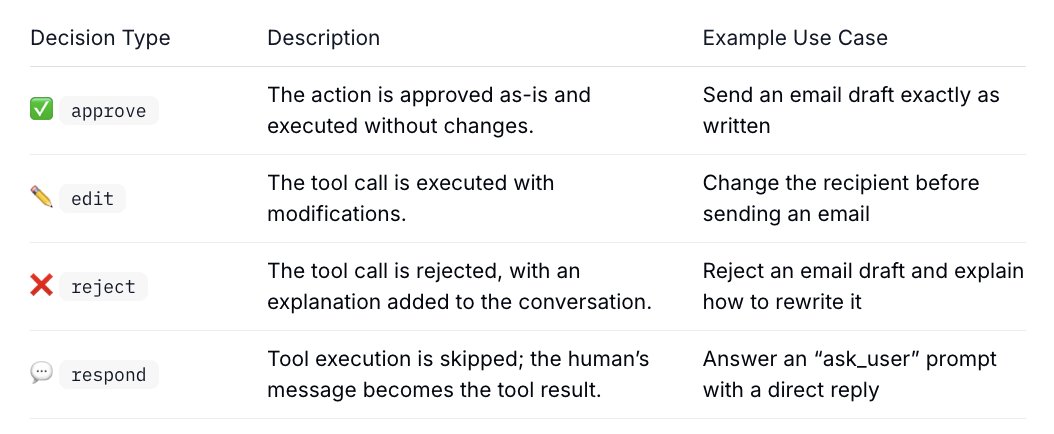

1/ before a consequential action, like sending an email, executing a transaction, or deleting files, you want to see exactly what the agent is about to do. approve it, edit it, or push back with feedback so it can revise and try again.

2/ when the agent hits a judgment call it can't resolve alone. not because it's missing a tool, but because the answer depends on your preference. "which config file should i modify?" or "should this go to staging or production?" your answer gets fed directly back into the run.

here's the part that matters for production: these pauses can last indefinitely. seconds, hours, days. that's only possible if the runtime persists state across the response gap. when the human responds, whenever that is, the agent reloads full context and continues from exactly where it stopped.
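to make the persistence point concrete, here's a minimal stdlib-only sketch. everything in it is hypothetical (the json file store, `save_checkpoint`, `run_until_pause`, `resume`), and it is not how any particular runtime implements this. the only idea it shows: write the full state to durable storage at the pause, then reload it whenever the human answers.

```python
import json
import tempfile
from pathlib import Path

# hypothetical on-disk store; a real runtime would likely use a database
STORE = Path(tempfile.mkdtemp()) / "checkpoints.json"


def save_checkpoint(thread_id: str, state: dict) -> None:
    """Persist everything the run needs in order to continue later."""
    data = json.loads(STORE.read_text()) if STORE.exists() else {}
    data[thread_id] = state
    STORE.write_text(json.dumps(data))


def load_checkpoint(thread_id: str) -> dict:
    """Reload the saved state for one paused run."""
    return json.loads(STORE.read_text())[thread_id]


def run_until_pause(thread_id: str) -> dict:
    """Do some work, then stop at the step that needs a human."""
    state = {
        "step": "await_approval",
        "messages": ["drafted email to bob"],
        "pending": {"tool": "send_email", "args": {"to": "bob@example.com"}},
    }
    save_checkpoint(thread_id, state)
    return state["pending"]  # the payload shown to the human


def resume(thread_id: str, human_response: str) -> dict:
    """Can run seconds or days later, even in a different process."""
    state = load_checkpoint(thread_id)
    state["messages"].append(f"human: {human_response}")
    state["step"] = "done" if human_response == "approve" else "revise"
    save_checkpoint(thread_id, state)
    return state


pending = run_until_pause("thread-1")
final = resume("thread-1", "approve")
```

nothing lives in process memory between `run_until_pause` and `resume`, which is why the length of the gap doesn't matter.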

in langgraph, interrupt() saves state to a checkpointer and surfaces a payload to the caller. Command(resume=...) reloads it and picks up execution.

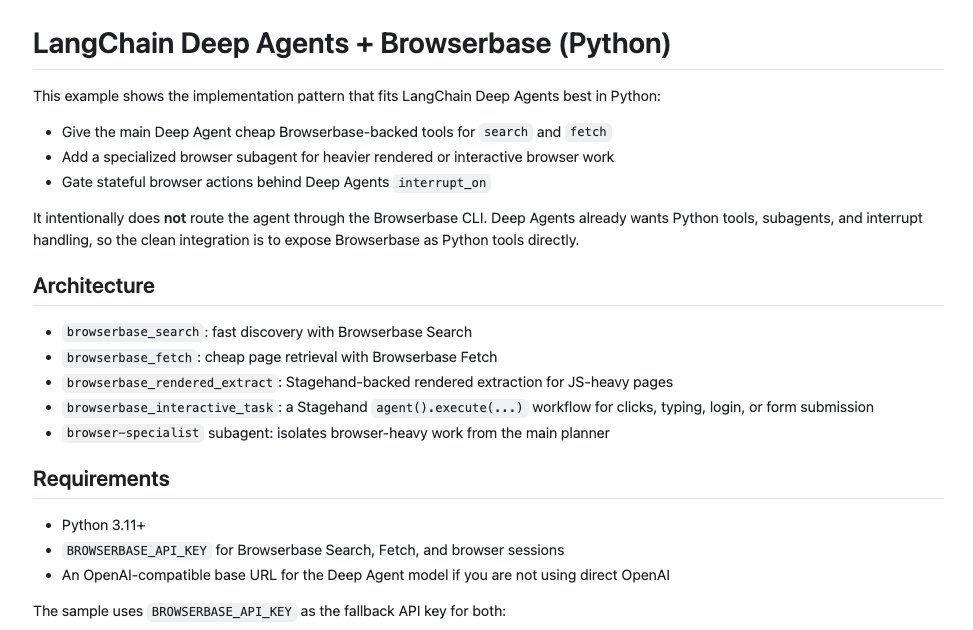

langchain and deep agents build on top of those primitives with HITL middleware, so instead of wiring this yourself, you attach HITL policies directly to tool calls.
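to show what "attaching a policy to a tool call" means, here's a plain-python sketch. this is not the langchain middleware API; `POLICIES`, `ToolCall`, `execute`, and the decision dicts are hypothetical names for illustration. the idea: tools without a policy run uninterrupted, tools with one wait for an approve / edit / reject decision.

```python
from dataclasses import dataclass
from typing import Callable

# hypothetical policy table: tool name -> decisions a human is allowed to make.
# tools that are not listed run without pausing.
POLICIES: dict[str, set[str]] = {
    "send_email": {"approve", "edit", "reject"},
    "delete_files": {"approve", "reject"},
}


@dataclass
class ToolCall:
    name: str
    args: dict


def search_docs(query: str) -> str:
    return f"results for {query}"


def send_email(to: str, body: str) -> str:
    return f"sent to {to}: {body}"


def delete_files(path: str) -> str:
    return f"deleted {path}"  # stub: nothing is actually deleted


TOOLS: dict[str, Callable[..., str]] = {
    "search_docs": search_docs,
    "send_email": send_email,
    "delete_files": delete_files,
}


def execute(call: ToolCall, ask_human: Callable[[ToolCall], dict]) -> str:
    allowed = POLICIES.get(call.name)
    if allowed is None:
        return TOOLS[call.name](**call.args)  # no policy: run uninterrupted
    decision = ask_human(call)  # in a real runtime this is where the run pauses
    if decision["type"] not in allowed:
        raise ValueError(f"{decision['type']!r} is not allowed for {call.name}")
    if decision["type"] == "reject":
        # feedback goes back to the agent so it can revise and try again
        return f"rejected: {decision.get('feedback', '')}"
    if decision["type"] == "edit":
        call = ToolCall(call.name, {**call.args, **decision["args"]})
    return TOOLS[call.name](**call.args)


email = {"to": "bob@example.com", "body": "hi"}
auto = execute(ToolCall("search_docs", {"query": "hitl"}), lambda c: {"type": "reject"})
edited = execute(
    ToolCall("send_email", email),
    lambda c: {"type": "edit", "args": {"body": "hi bob"}},
)
rejected = execute(
    ToolCall("send_email", email),
    lambda c: {"type": "reject", "feedback": "too terse"},
)
```

note that `search_docs` never calls `ask_human` at all, which is the point: the loop stays uninterrupted except where a policy says otherwise.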

docs.langchain.com/oss/python/lan…#interrupt-decision-types