고정된 트윗

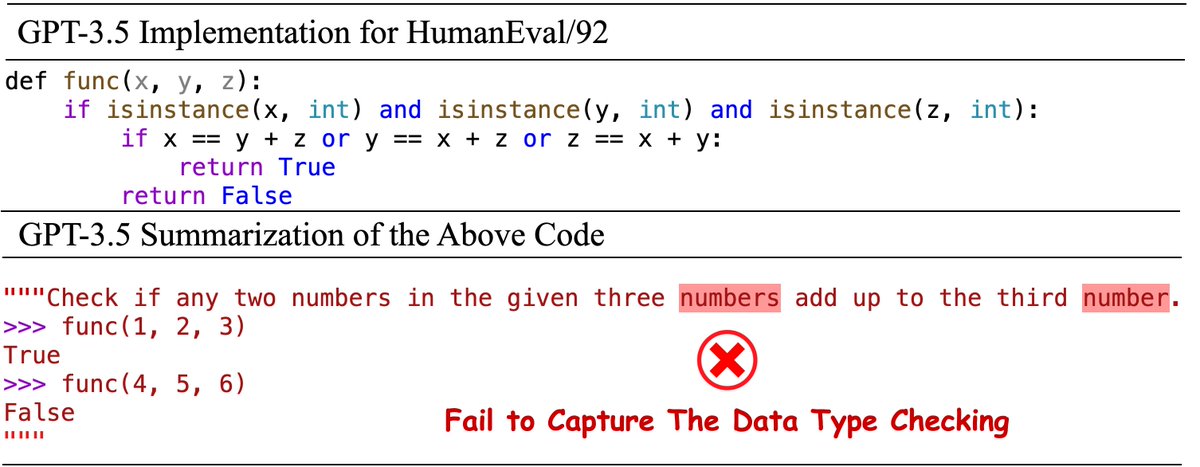

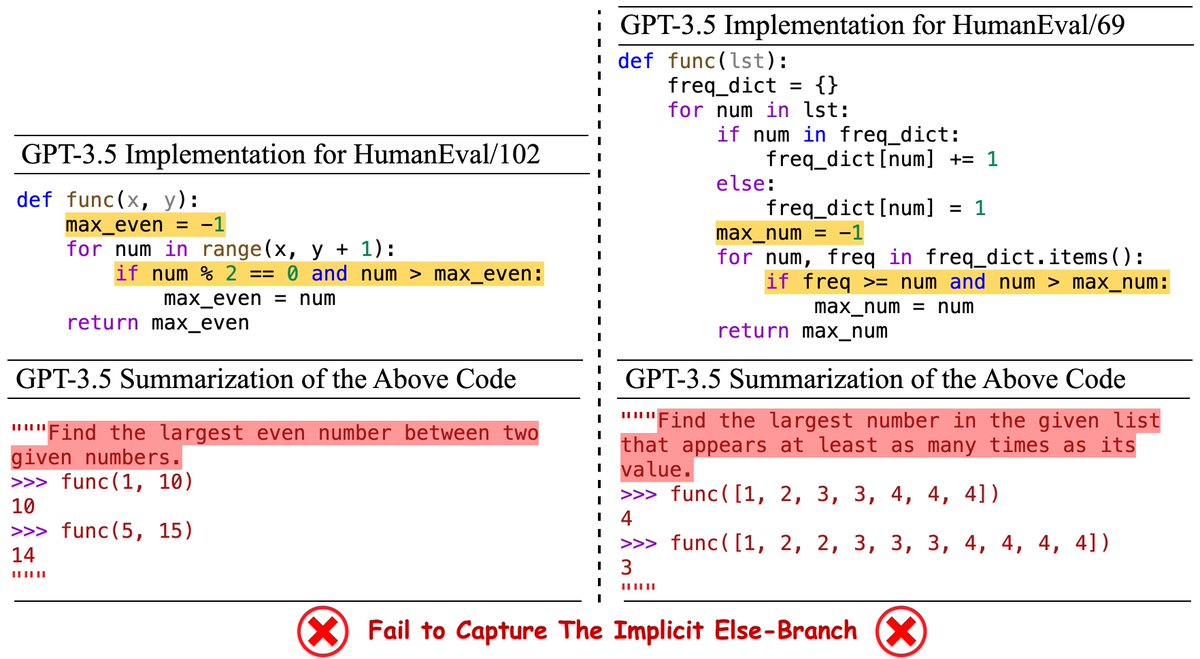

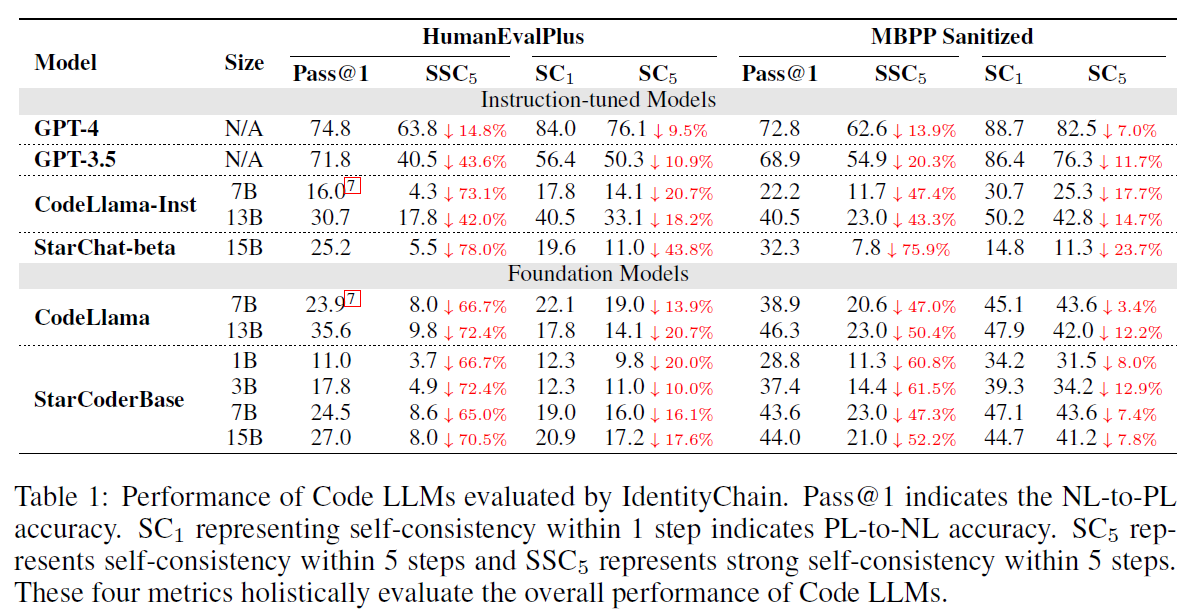

🚨 #GPT4 doesn't understand the code/specification written by itself!? 🚨

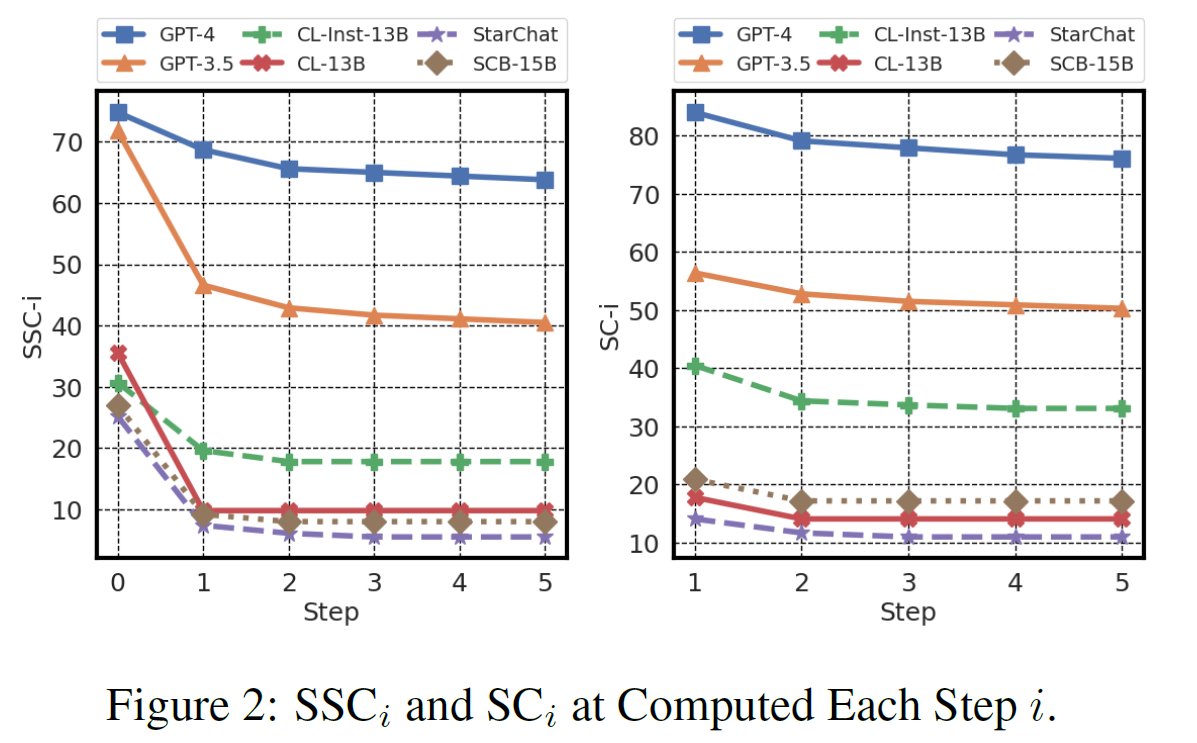

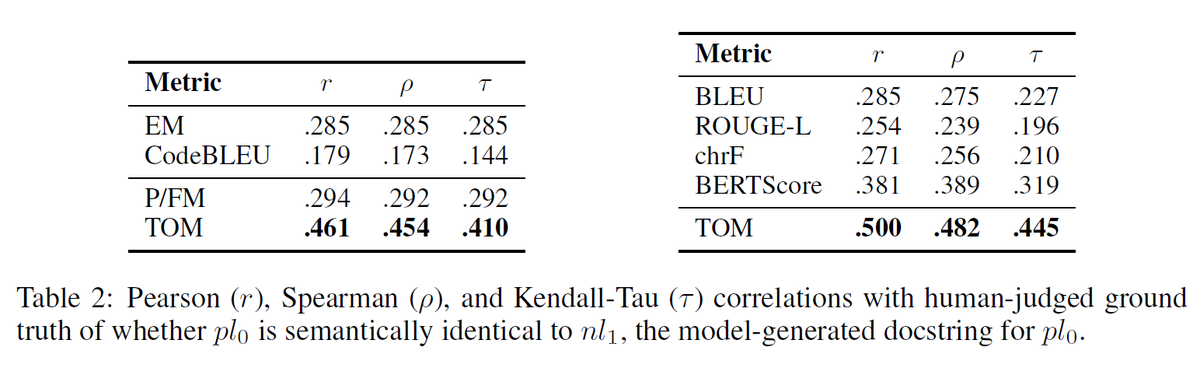

🥳 Check out our #ICLR2024 paper "Beyond Accuracy: Evaluating Self-Consistency of Code Large Language Models with ldentityChain" 🥳#LLM

Paper: arxiv.org/abs/2310.14053

Code: github.com/marcusm117/Ide…

[1/6]

English