Ben Grimmer

438 posts

@prof_grimmer

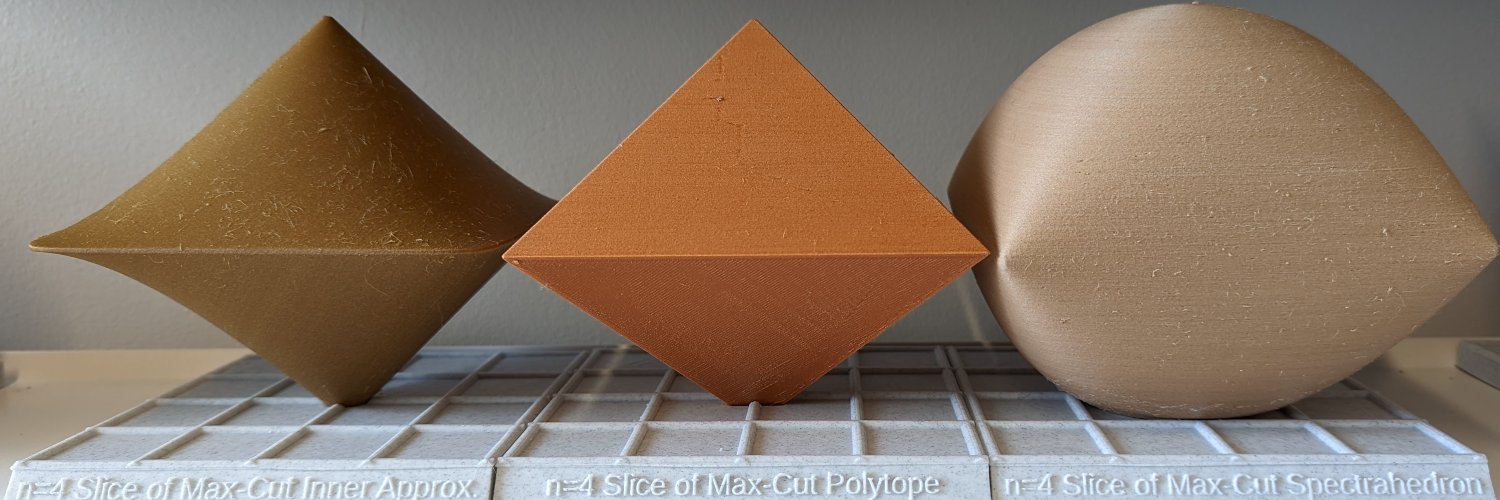

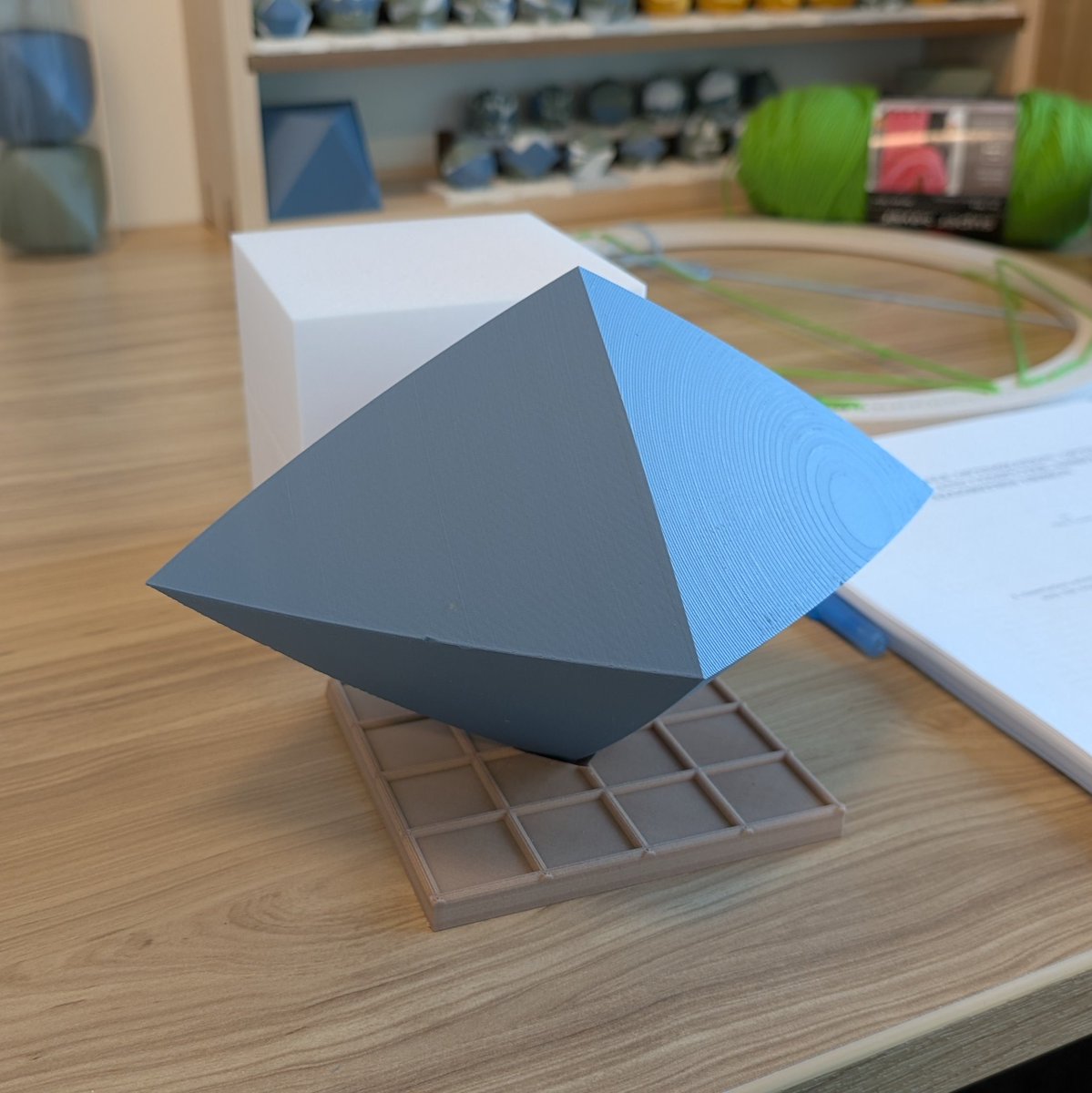

Assistant Professor @JohnsHopkinsAMS, Optimization, PhD @Cornell_ORIE. Mostly here to share pretty maths/3D prints, sometimes sharing my research.

Our co-founder Terence Tao is announcing SAIR Foundation's inaugural competition: the Mathematics Distillation Challenge. Co-organized by @damekdavis, Terence Tao, and SAIR Foundation. competition.sair.foundation/competitions/m…

For anyone interested in our lower bound result for Frank-Wolfe methods in Nemirovski and Yudin "zero-chain" lower bounding style, a link: arxiv.org/abs/2602.22608

1/10 We built ADANA, an optimizer that gets better as you scale. It extends AdamW with log-time schedules for momentum and weight decay — same hyperparameter count, no extra engineering. Scaled from 45M to 2.6B, it saves ~40% compute vs tuned AdamW, and the gap keeps growing.🧵

Congrats to the 126 early-career scholars awarded a 2026 Sloan Research Fellowship, whose creativity and innovation set them apart as the next generation of scientific leaders! Our Fellows represent 7 fields and 44 institutions across the US and Canada. sloan.org/fellowships/20…