Shunsuke Saito

941 posts

@psyth91

Director, Research Scientist @AIatMeta. Digital Human/Vision/Graphics. I do research on digital humans. #pifuhd #sapiens All opinions are my own.

Back to working on exo + ego in @rerundotio. This is a big jump in progress! One of my main pain points has been getting the ego and exo views aligned in the same coordinate system, but I finally got it all working. This means I now have:

1. SLAM working for ego
2. Calibrated exo views
3. 3D keypoints for the full human body
4. 6DoF wrist poses
5. Temporally aligned videos
6. Spatially aligned multi-camera views

Now it's time to scale it up 🙂
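The post does not say how the ego and exo views were brought into one coordinate system. As a rough illustration of what "aligned in the same coordinate system" means, here is a minimal sketch that assumes each camera already has a known rigid pose relative to a shared world frame (for example, from SLAM for the ego camera and calibration for the exo camera). All poses, point values, and helper names (`rigid`, `invert`, `compose`, `apply`, `rot_z`) are hypothetical and chosen only for the example.

```python
import math

def rigid(rotation, translation):
    """Build a 4x4 homogeneous transform from a 3x3 rotation and a 3-vector."""
    rows = [list(rotation[i]) + [translation[i]] for i in range(3)]
    return rows + [[0.0, 0.0, 0.0, 1.0]]

def invert(T):
    """Invert a rigid transform: rotation becomes R^T, translation becomes -R^T t."""
    Rt = [[T[j][i] for j in range(3)] for i in range(3)]
    t = [T[i][3] for i in range(3)]
    new_t = [-sum(Rt[i][k] * t[k] for k in range(3)) for i in range(3)]
    return rigid(Rt, new_t)

def compose(A, B):
    """Matrix product A @ B: apply B first, then A."""
    return [[sum(A[i][k] * B[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]

def apply(T, p):
    """Map a 3D point p through the rigid transform T."""
    return [sum(T[i][k] * p[k] for k in range(3)) + T[i][3] for i in range(3)]

def rot_z(deg):
    """Rotation about the z axis by `deg` degrees."""
    c, s = math.cos(math.radians(deg)), math.sin(math.radians(deg))
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]

# Hypothetical poses: where each camera sits in the shared world frame.
world_from_ego = rigid(rot_z(90), [1.0, 0.0, 0.0])  # e.g. from SLAM (assumed)
world_from_exo = rigid(rot_z(0), [0.0, 2.0, 0.0])   # e.g. from calibration (assumed)

# Chain the two poses to go directly from ego coordinates to exo coordinates.
exo_from_ego = compose(invert(world_from_exo), world_from_ego)

# A wrist position observed in the ego camera frame (made-up value).
wrist_in_ego = [0.5, 0.0, 0.0]
wrist_in_world = apply(world_from_ego, wrist_in_ego)
wrist_in_exo = apply(exo_from_ego, wrist_in_ego)
```

Once every camera has a pose relative to the same world frame, any keypoint or wrist pose can be moved between views by chaining transforms like this. Estimating those poses in the first place is the hard part and is not covered by this sketch.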

Introducing SAM 3D, the newest addition to the SAM collection, bringing common-sense 3D understanding of everyday images. SAM 3D includes two models:

🛋️ SAM 3D Objects for object and scene reconstruction
🧑‍🤝‍🧑 SAM 3D Body for human pose and shape estimation

Both models achieve state-of-the-art performance, transforming static 2D images into vivid, accurate reconstructions.

🔗 Learn more: go.meta.me/305985

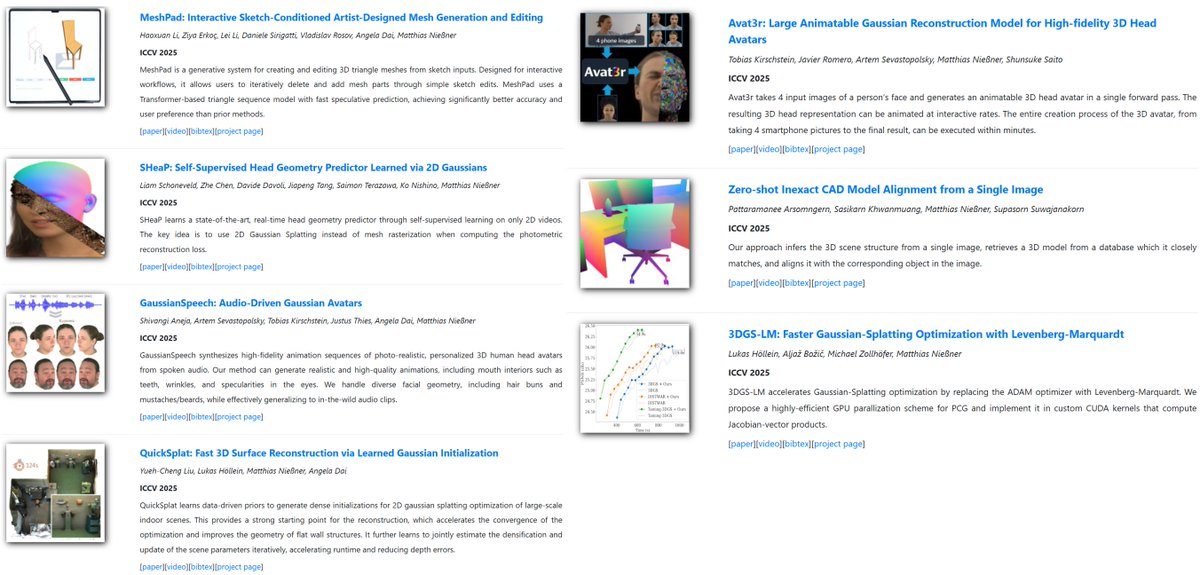

📢📢 𝐀𝐯𝐚𝐭𝟑𝐫 📢📢

Avat3r creates high-quality 3D head avatars from just a few input images in a single forward pass with a new dynamic 3DGS reconstruction model.

Video: youtu.be/P3zNVx15gYs
Project: tobias-kirschstein.github.io/avat3r

Our core idea is to make Gaussian Reconstruction Models animatable. We find that a simple cross-attention to an expression code sequence is already sufficient to model complex facial expressions.

We then incorporate position maps from DUSt3R and feature maps from Sapiens to facilitate the prediction task. DUSt3R's position maps act as a pixel-aligned initialization for the Gaussians' positions, while the Sapiens feature maps help the cross-view transformer match corresponding image tokens across the 4 input images.

One major challenge in creating a 3D head avatar from smartphone images is inconsistent facial expressions, which arise when the subject cannot remain perfectly static during capture. We eliminate this static requirement by simply showing our model input images with different facial expressions during training. This makes the model robust to inconsistent input images at inference time.

Finally, we show that although the model was trained with 4 input images, one can still create a 3D head avatar when only a single image is available. To achieve this, we employ a pre-trained 3D GAN to lift the single image to 3D and then render the 4 input images for our model. This allows us to create 3D head avatars from single images, including highly out-of-distribution examples like AI-generated faces, paintings, or statues.

Great work by @TobiasKirschst1 from his internship at Meta with Javier Romero, @ASevastopolsky, and @psyth91
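To make the "cross-attention to an expression code sequence" idea concrete, here is a minimal single-head sketch in plain Python. It is not Avat3r's implementation. It assumes identity projections (no learned query/key/value weights), a residual connection, and toy 2-dimensional tokens, and the names `cross_attention`, `tokens`, and `expression_codes` are hypothetical.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def cross_attention(queries, keys, values):
    """Single-head scaled dot-product cross-attention with a residual add.

    queries: tokens being updated, shape [n_tokens][d]
    keys, values: the conditioning sequence, shape [n_codes][d]
    """
    d = len(queries[0])
    outputs = []
    for q in queries:
        # Similarity of this token to every expression code.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d)
                  for k in keys]
        weights = softmax(scores)
        # Weighted average of the expression codes.
        attended = [sum(w * v[j] for w, v in zip(weights, values))
                    for j in range(d)]
        # Residual connection: the token keeps its content, plus expression info.
        outputs.append([qj + aj for qj, aj in zip(q, attended)])
    return outputs

# Toy data (made up): two image tokens attend to two expression codes.
tokens = [[1.0, 0.0], [0.0, 1.0]]
expression_codes = [[0.5, 0.5], [-0.5, 0.5]]
updated = cross_attention(tokens, expression_codes, expression_codes)
```

Each token ends up with the same shape it started with, now mixed with whichever expression codes it is most similar to. A real model would add learned projections, multiple heads, and normalization on top of this.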