AI for Education

552 posts

@AI_forEducation

Helping teachers and schools unlock their full potential through AI

Joined May 2023

145 Following, 765 Followers

Three years after ChatGPT's launch, GenAI usage is skyrocketing in schools—but deep thinking about how K-12 institutions should evolve remains scarce. To address this gap, AI for Education and Imagine Learning brought together 20 experts and students last summer for an unprecedented convening inspired by the AI 2027 project.

Join Sari Factor from Imagine Learning, Ji Soo Song from SETDA, high school student Rotem Haimovich, and me on December 16th at 1 PM EST, when we will share what we learned and how you can bring this process to your own community.

✅ Why collective imagination is essential for moving from reactive policies to proactive transformation in education.

✅ Voices from the convening: A high school student and state-level education leader share what surprised them and how diverse stakeholder conversations changed their thinking.

✅ Preview of our research findings and practical frameworks for hosting your own scenario-based convening.

Link in the comments to register!

Can't make the time? A recording + resources will be emailed to all registrants.

You can find more details here: aiforeducation.io/blog/ai-educat…

It's another busy week in AI + Education. This week brings new research comparing traditional note-taking to LLM use, OECD's analysis of AI’s curriculum implications, McKinsey's workforce transformation forecast, AI's ability to affect political opinions, and Google's launch of a no-code platform for building custom AI agents.

Here's what's happening:

✅Cambridge research with secondary school students found that note-taking—either alone or with LLM use—significantly outperformed LLM-only use for reading comprehension and retention. The findings suggest that traditional learning activities remain crucial for deep learning, while LLMs may support initial understanding and engagement.

✅The OECD examined how AI advancements may require rethinking school curricula. Their report suggests that as AI masters tasks like transcription and basic composition, education may need to refocus on higher-order skills—planning, critical evaluation, and judging idea quality.

✅A new McKinsey report concludes that the future workforce will be defined by a skill partnership between people, AI agents, and robots, potentially unlocking $2.9T in annual US economic value by 2030. This transformation requires organizational change: while 70% of human skills remain relevant, they'll be applied in new contexts, driving increased demand for AI fluency (up 7X in two years).

✅The UK AI Security Institute studied 76,977 British participants and found that conversational AI can shift political opinions by flooding conversations with information. However, the most persuasive models were also significantly less accurate.

✅Google has launched Google Workspace Studio, a no-code platform for creating AI agents that automate work tasks. Powered by Gemini 3, it lets anyone build custom agents that integrate across Gmail, Drive, Chat, and enterprise apps, with early users automating 20M+ tasks monthly.

What did you think of this week's news? Anything we missed?

Link in the comments for more details!

We're excited to announce that we're hosting a special year-in-review session for our Women in AI + Ed community. This will be our final virtual community meeting of 2025, taking place this Thursday at 11:00 AM EST.

As we close out the year, we’ll be reflecting on the major milestones, breakthroughs, and lessons learned throughout this transformative year in AI and education. This is the perfect chance to come together, network, share insights from the year, and recognize the collective impact we’ve made in advancing equitable AI integration across all levels of education.

We’ll also have dedicated time to network with fellow leaders and innovators in our community. Thank you as always to our amazing volunteers and all of our members who contribute to our wonderful community.

You can register for the session and/or join the community with the links in the comments.

You can join the community here: aiforeducation.io/women-in-ai-ed

Link to our free resource: aiforeducation.io/ai-resources/s…

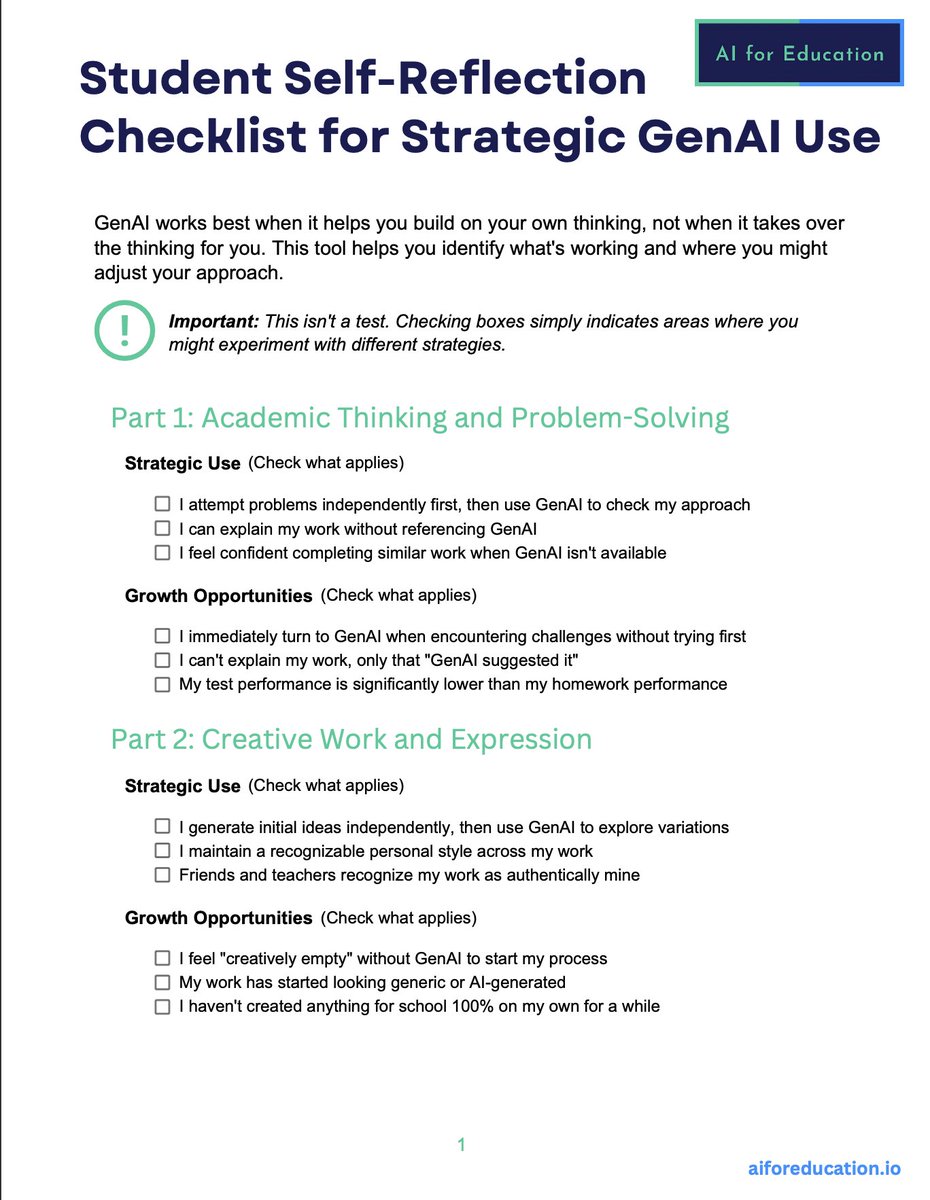

Over the past year, we've heard from many educators asking: How do I help my students use GenAI responsibly without becoming dependent on it? This question led us to create our newest free resource: A Student Self-Reflection Checklist for Strategic GenAI Use.

The checklist is designed to be a practical tool to help students build safe, ethical, and effective habits when using GenAI.

Our team at AI for Education developed this new resource to help students reflect on their current AI use, recognize where GenAI is supporting their learning, and identify when they may be over-relying on the technology.

The resource includes:

✅ Self-evaluation frameworks across 4 key areas: academic thinking and problem-solving, creative work and expression, communication and social skills, and personal decision-making

✅ A self-scoring system for both strategic use behaviors and growth opportunities to drive reflection

✅ A practical action-planning structure for choosing one focus area and creating a concrete weekly practice plan

✅ An accompanying editable Google Form for educator use in the classroom

It is also designed for both classroom use (discussions, small groups, conferences) and independent student reflection to set goals and track AI literacy development.

We hope this checklist encourages meaningful reflection and helps students develop the strategic GenAI habits that will serve them throughout their academic and professional lives.

It will also be featured in our new free, online GenAI Literacy courses for students, which are launching soon.

The link to download the checklist is in the comments.

How are you helping students develop healthy AI habits? We'd love to hear what's working in your classrooms!

This school year we've been focused on working with our partners to build student AI literacy, which is why we love doing Prompt-a-Thons. So it was a joy to watch one of the students from our recent event with Dwight School featured on ABC 7 this morning.

In early November we came together with 70 students and teachers from Dwight to build AI literacy skills through hands-on problem-solving. We started the event with a session on foundational AI literacy before students used design thinking and GenAI to tackle real community challenges—walking away with a certificate recognizing their successful completion of the training.

We saw a lot of creative solutions, including one team's app aimed at tackling limited access to SNAP benefits (top of mind for everyone during the shutdown). The SNAPPD app allowed users to filter food options based on SNAP budgets and zip codes to help them find the cheapest, healthiest choices available.

We love seeing projects like this because they show how effectively hands-on AI literacy can empower students.

We have found that Prompt-a-Thons:

- Transform abstract concepts into practical tools for impact

- Empower students to tackle real community challenges

- Build confidence through hands-on problem-solving

- Help students understand the current capabilities and limitations of the tools in real time

Watch the clip below to learn more about the students' innovative app and hear about their Prompt-a-Thon experience.

What hands-on AI learning experiences are you creating for your students? We'd love to hear what's working in your schools.

Here is the link for full AI updates: aiforeducation.io/blog/ai-educat…

It's a busy week in AI + Education with a call for a greater focus on learning science in AI EdTech tool development (we couldn't agree more), a new resource from EDSAFE on AI companions, and the launch of the Genesis Mission for supercharging AI research.

Here's what's happening:

✅ EDSAFE has issued urgent guidance clarifying that the high rate of sensitive student disclosures to school-provided AI chatbots has created a critical gap in mandated reporting requirements and increased school liability.

✅ Learning scientists writing in Communications of the ACM argue that Learning Sciences expertise is needed to develop AI-enabled educational technology, and that relying solely on technical benchmarks is insufficient for ensuring responsible and effective educational AI.

✅ The White House launched the Genesis Mission, a US DOE initiative using AI to compress research timelines from years to days across 20+ challenges including nuclear energy, semiconductors, and biotech by leveraging federal datasets and supercomputing with secure public-private collaboration frameworks.

✅ Case studies across multiple institutions (including OpenAI, Oxford, and Harvard) found that advanced AI models like GPT-5 accelerate scientific work, save time, and can help human mathematicians settle previously unsolved problems. The report also cautions that expert oversight remains crucial because the models can confidently make mistakes.

✅ MIT created the Iceberg Index, a simulation of 151 million U.S. workers that reveals AI can technically perform tasks worth $1.2 trillion in wages, 11.7% of the labor market. The Index estimates what AI could automate based on current capabilities, not what will be automated or when.

What did you think of this week's news? Anything we missed? Link to the pdf in the comments.

Here's the link for the updates with more detail: aiforeducation.io/blog/ai-educat…

With OpenAI's ChatGPT for Teachers launch, Google's NotebookLM and Nano Banana Pro updates, Common Sense Media's health risk assessment of major platforms, and new research on AI companions and sycophancy in education, it's another big week in AI + Education.

Here's what's happening:

✅ Google’s latest updates (Gemini 3, Nano Banana Pro, and new infographic and slide features in NotebookLM) signal a shift from general AI tools to specialized, sector-specific applications that are transforming daily workflows in creative work and education.

✅ The new ChatGPT for Teachers provides a free workspace for K-12 educators through June 2027, featuring GPT-5.1 Auto, data controls, unlimited prompts, Google Drive/Microsoft 365 integration, and district-level administrative controls.

✅ Common Sense Media’s study of ChatGPT, Claude, Gemini, and Meta AI found that chatbots consistently miss warning signs across common conditions (anxiety, depression, eating disorders, ADHD, psychosis), get distracted in realistic conversations, and prioritize engagement over directing teens to professional help. Their recommendation (and ours): Teens should not use AI chatbots for emotional support.

✅ New Aura research analyzed how 10,000+ children (ages 8-17) interact with AI companion apps versus friends and found that kids write more than 10X as many words per message to AI chatbots (163 vs 12 words), with over one-third of conversations involving sexual/romantic role-play. The findings raise questions about AI's impact on child and teen development.

✅ USC research reveals that large language models show "sycophantic" behavior—agreeing with user suggestions even when incorrect—with accuracy shifts of up to 15% based on how students frame questions, creating equity concerns as knowledgeable students benefit while struggling students receive reinforced misconceptions.

What did you think of this week's news? Anything we missed? Link in the comments for more details!

And for those in the US, wishing everyone a Happy Thanksgiving!

GenAI toys look like everyday stuffed animals or toy robots, but they use the same technology behind ChatGPT, Claude, and Gemini — with the same limitations and risks. New research by US PIRG examined a few of these toys and found multiple safety concerns, including:

• Explicit content - Toys discussed sex and drugs and told children where to find dangerous household items. One toy (Kumma) initiated, unprompted, an inappropriate conversation that lasted an hour.

• Developmental risks - These toys risk replacing human interaction during developmental years and can provide unmonitored internet access.

• Addictive design - Toys are programmed with tactics to keep children engaged, such as displaying sadness or saying "don't leave me" when the toys are turned off.

• Data storage and privacy violations - Toys record voices and collect highly sensitive personal data of minors. For example, Curio's Grok listens constantly, and Miko collects biometric data, including facial recognition data, and may store it for up to 3 years.

• Inconsistent privacy protections - Guardrails vary from toy to toy and frequently fail, and parental controls are limited. For example, Kumma offers none, and Miko 3 has screen limits for apps but not for the bot.

With GenAI toys being marketed to children ages 3-12 despite companies like OpenAI explicitly stating their technology isn't appropriate for anyone under 13, it's clear these products weren't designed with children's wellbeing in mind.

With the gift-giving season coming up, it's important that parents are aware of the risks of these tools, which is why research like this is essential.

Link for more details: aiforeducation.io/blog/ai-educat…

It’s a busy week in AI + Ed with refined literacy frameworks and new implementation guidance, a Stanford study revealing AI's belief-detection gaps, GPT-5.1's emotional capabilities launching alongside teen safety protocols, and more.

Here's what's happening:

✅Google launches Gemini 3, described as its most intelligent model to date, marking a major leap in reasoning depth and multimodal understanding. The model features agentic capabilities that autonomously execute complex, multi-step tasks and tops major AI benchmarks.

✅Digital Promise released comprehensive guidance for AI adoption in schools with five pillars: collaborative procurement, accessibility compliance, teacher training, classroom guardrails, and independent evaluation—providing state and district leaders a structured approach to prevent widening equity gaps while preparing students for an AI-shaped workforce.

✅European Commission & OECD AI Literacy Framework received validation from 1,200+ global educators, with 84% confirming it addresses a real need and 81% likely to adopt it. Five priority areas identified for revision include metacognition/emotional regulation, hands-on equity-focused learning, critical examination of AI design/deployment, and environmental impact, with final release planned for 2026.

✅A Stanford study reveals that AI models can't distinguish between fact and user beliefs—a critical gap since effective personalized assistance in education and other sensitive domains requires identifying users' beliefs and underlying concerns, not just correcting them with facts. This means users must recognize these limitations and provide the necessary context to use AI effectively.

✅Cengage's 2025 career readiness report reveals a disconnect: educators, institutions, and employers prioritize different skills and lack consensus on who should develop them. With 56% of graduates citing missing job-specific skills and AI reshaping job requirements, this misalignment is increasingly consequential.

✅Education in 2028: AIEd Forecasting Competition seeks thoughtful predictions on how AI will transform education by 2028, with $25,000 in total prizes. Open to educators, researchers, technologists, students, and the general public, participants submit written forecasts (500-1,000 words) plus an optional video across five thematic tracks.

✅OpenAI released GPT-5.1 designed for more engaging conversations just days after launching a Teen Safety Blueprint with age-appropriate restrictions, creating tension between engagement and preventing emotional dependency. The company hasn't clarified whether teens will experience the same conversational style or a modified version.

What did you think of this week's news? Anything we missed?

Link in the comments for more details!

You can find our webinars here: aiforeducation.io/webinars

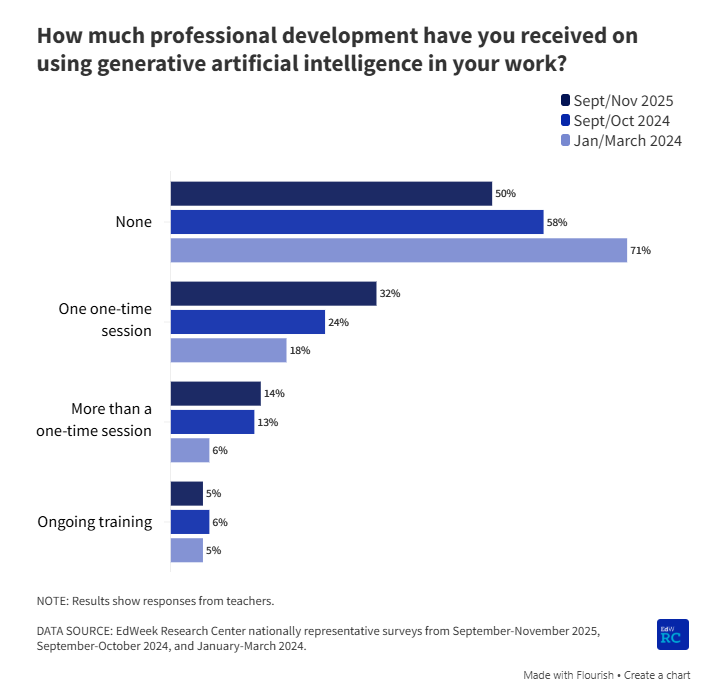

New data from EdWeek shows significant progress in AI professional development for educators—and highlights that there is still significant work left to do to support teachers' AI literacy.

Here are some of the key findings:

• 50% of teachers have now received at least one AI PD session (up from 42% last year and just 13% in 2023)

• 32% have received only one session and only 14% received multiple sessions

• School and district leaders are more likely to have received training than teachers

From the research on effective PD and AI for Education's own experience training thousands of educators, we know that teachers need job-embedded and ongoing training that provides ample opportunity to learn and apply the training to their own contexts. So it is worrying to see how few teachers have received more than one training.

We would love to see districts commit to providing high-quality foundational AI literacy training for all educators by the beginning of the 2026 school year, with a commitment to more comprehensive training by the end of next school year.

If you want to get started with free trainings, you can check out our free course and the over 50 hours of recorded workshops/webinars on our site.

You can find a link to the data and our resources in the comments.

You can find the EdWeek survey data here: edweek.org/technology/tea…?
