Mr. Agent retweetledi

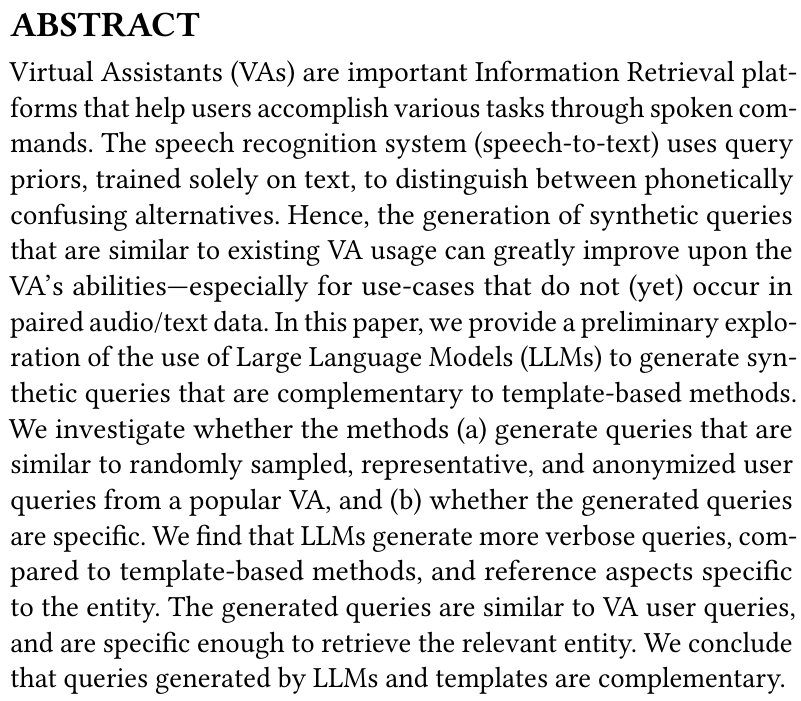

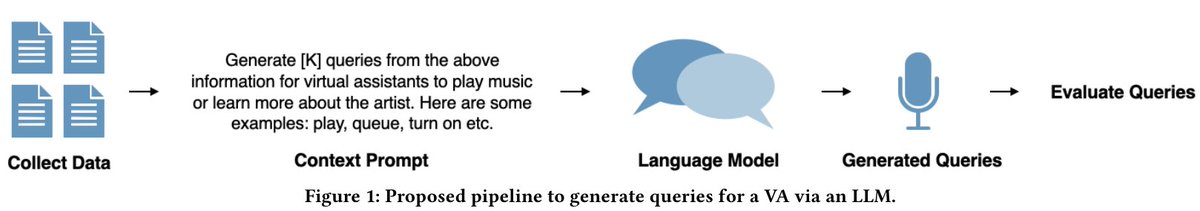

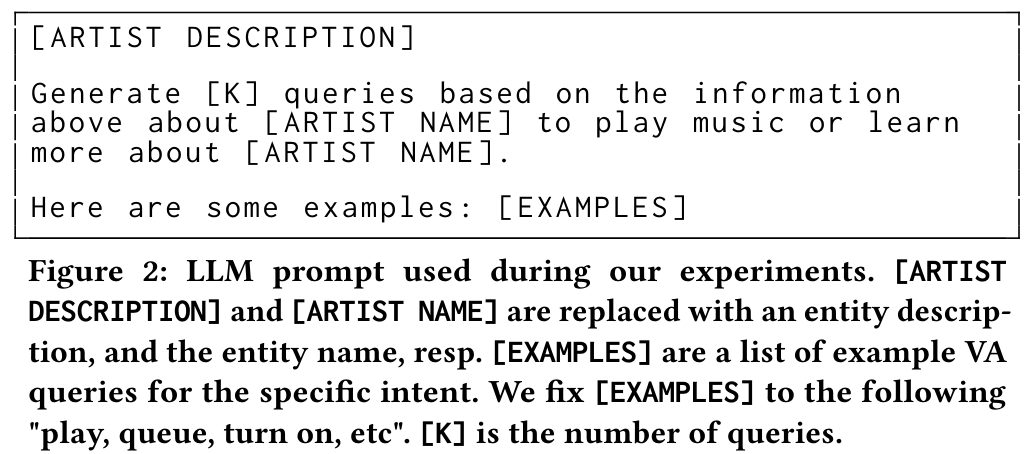

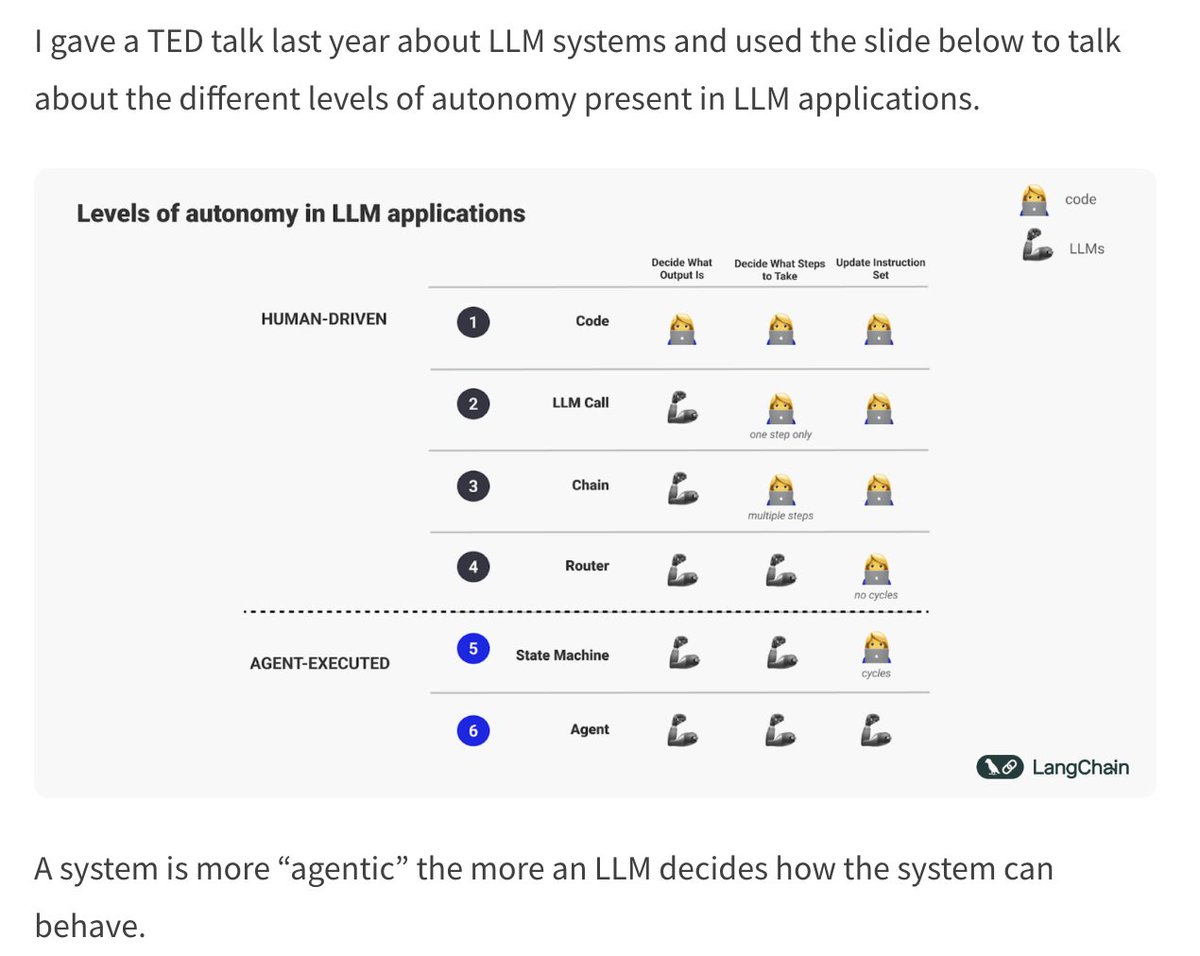

❓What is an agent?

I get asked this question a lot, so I wrote a little blog on this topic and other things:

- What is an agent?

- What does it mean to be agentic?

- Why is “agentic” a helpful concept?

- Agentic is new

Check it out here: blog.langchain.dev/what-is-an-age…

English