Pinned Tweet

Our updated mobile app allows you to generate AND detect deepfakes in seconds.

Create, verify, and explore AI-generated content all in one place.

Try BitMind's AI Detector & Creator app here: bitmind.ai/mobile

BitMind

525 posts

@bitmind

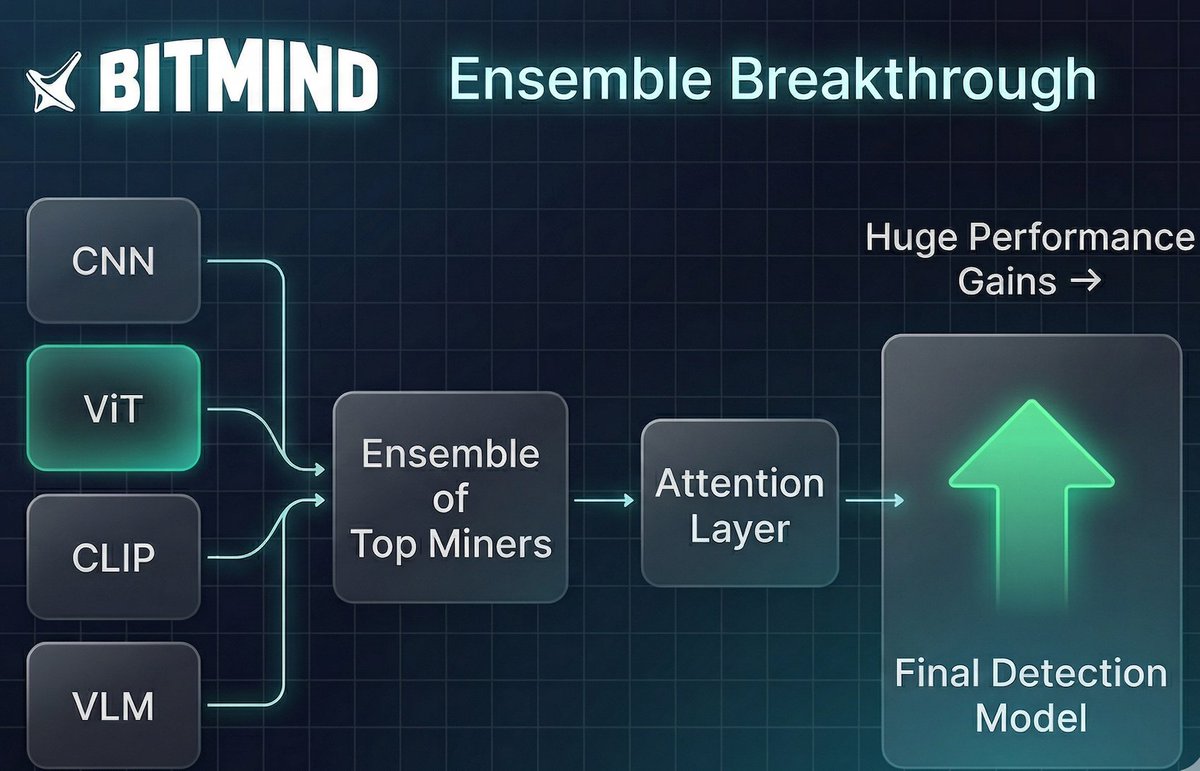

A frontier research lab dedicated to AI security, specializing in deepfake detection https://t.co/qY5jFcASNx