Pinned Tweet

Hello!

In case you’re new here, let us introduce ourselves...

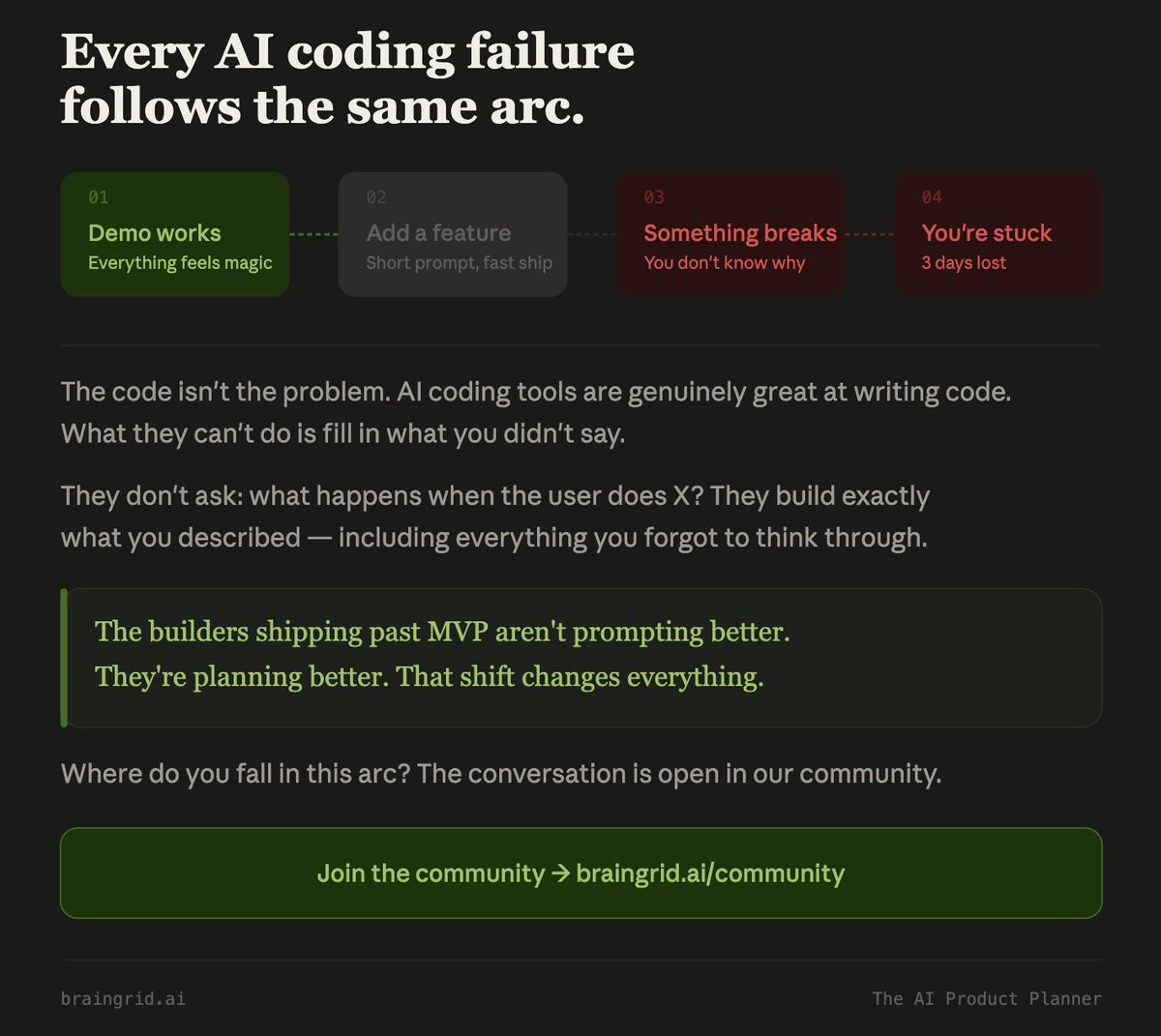

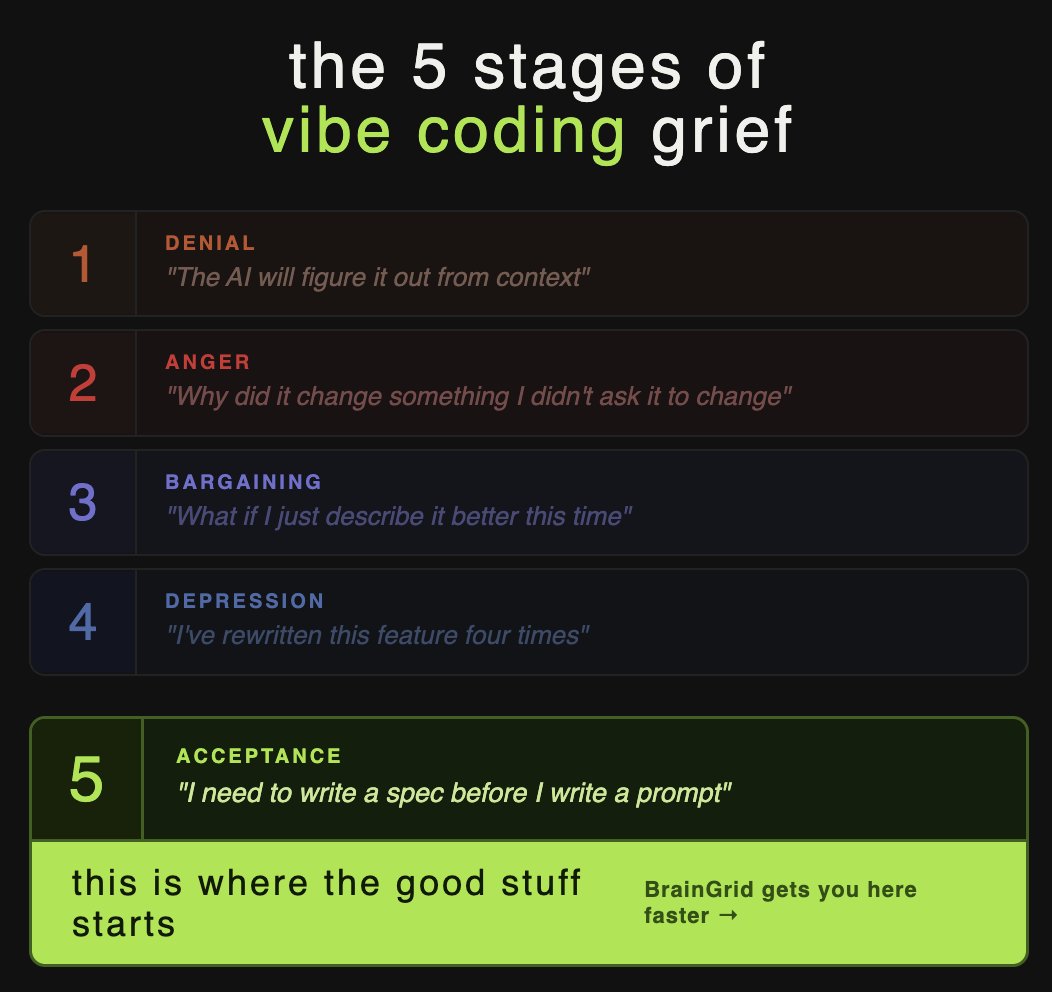

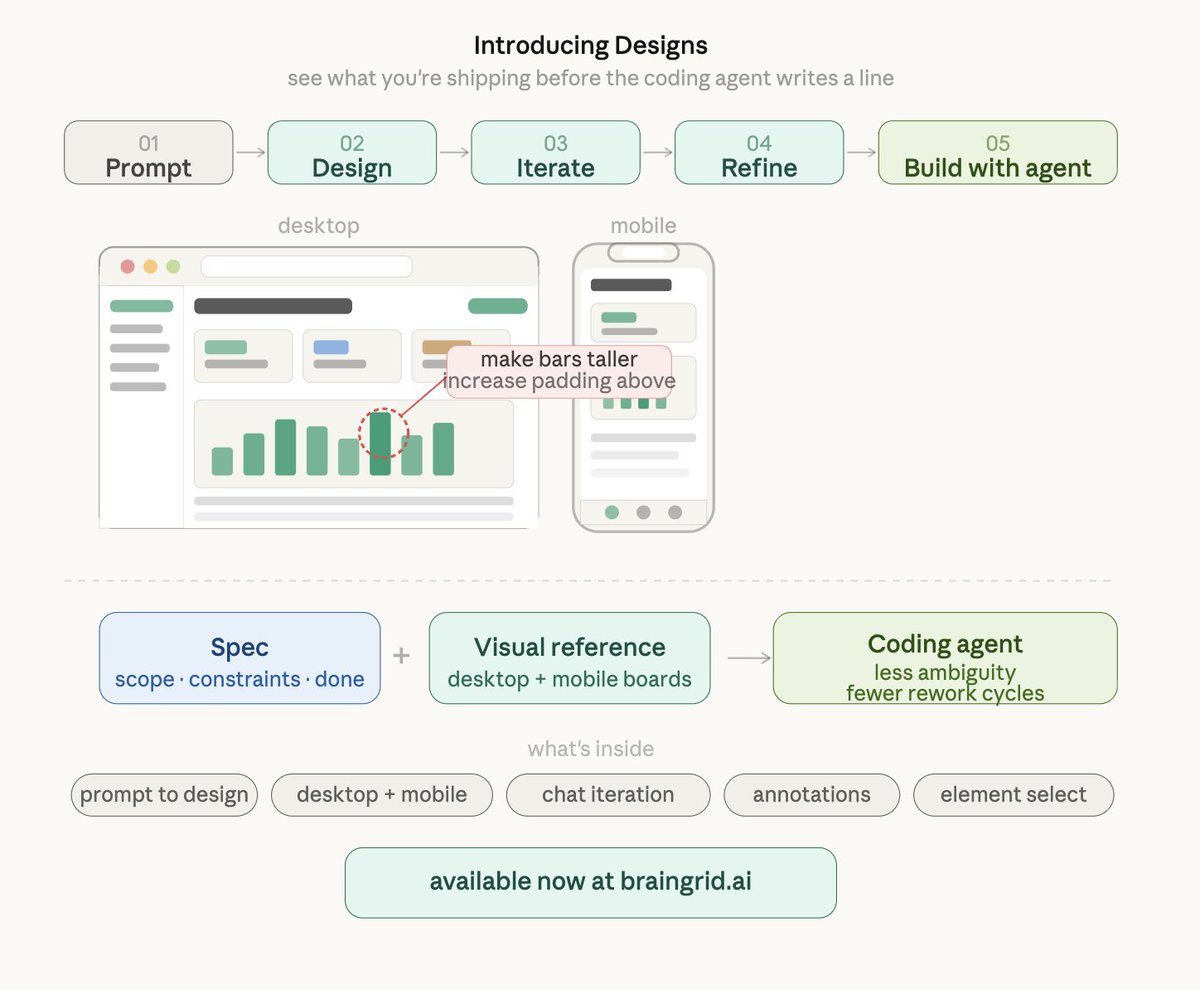

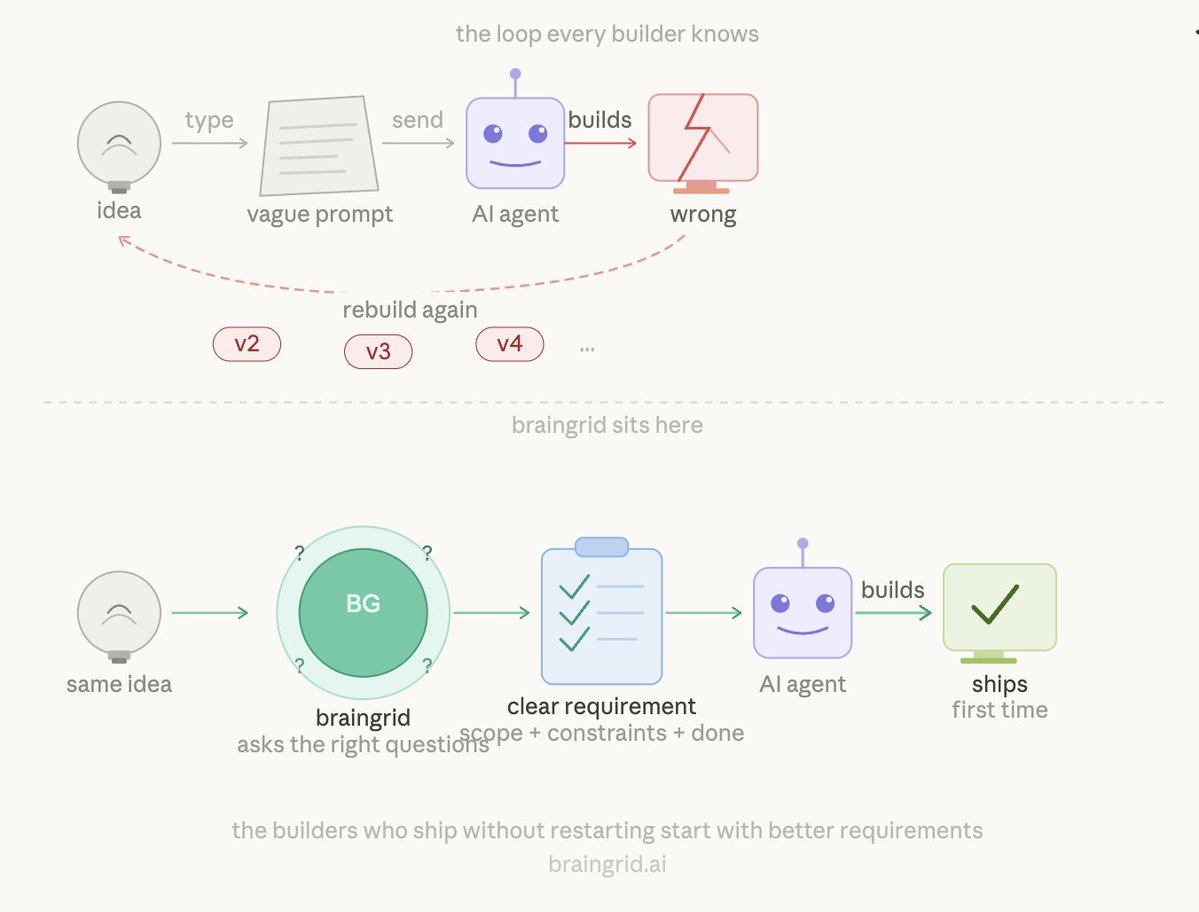

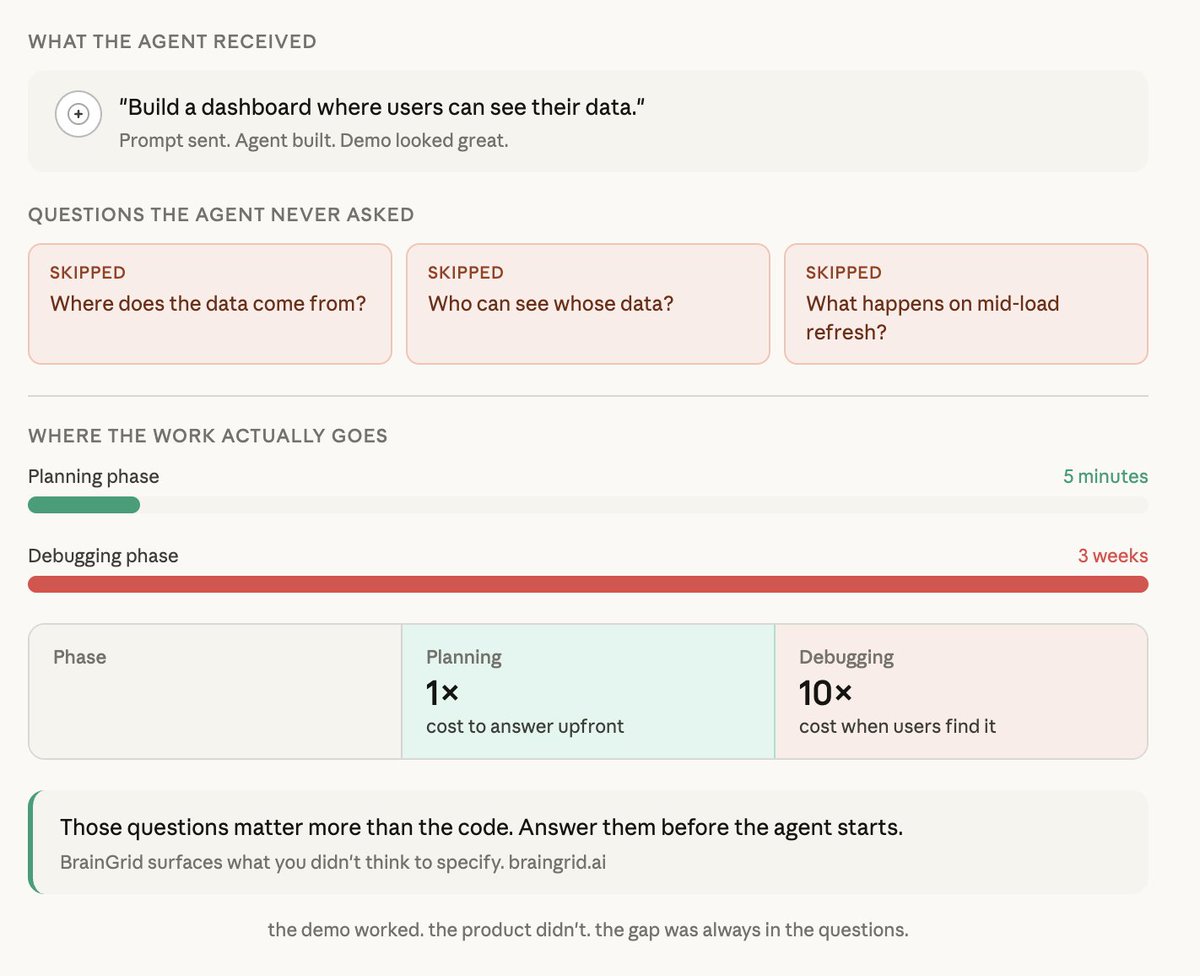

We’re BrainGrid, an AI-powered requirements and task management tool that helps you build 100x more with AI coding. It works with Cursor, Windsurf, Cline, and VS Code Copilot.

Interested? Join our waitlist at braingrid.ai

So, how about you - what brought you here?
