Caviar.eth

5.1K posts

@CaviarEth

CriptoBillonaireOG//1000xGem or nothing//Blockchain//NFA//Now $KDA

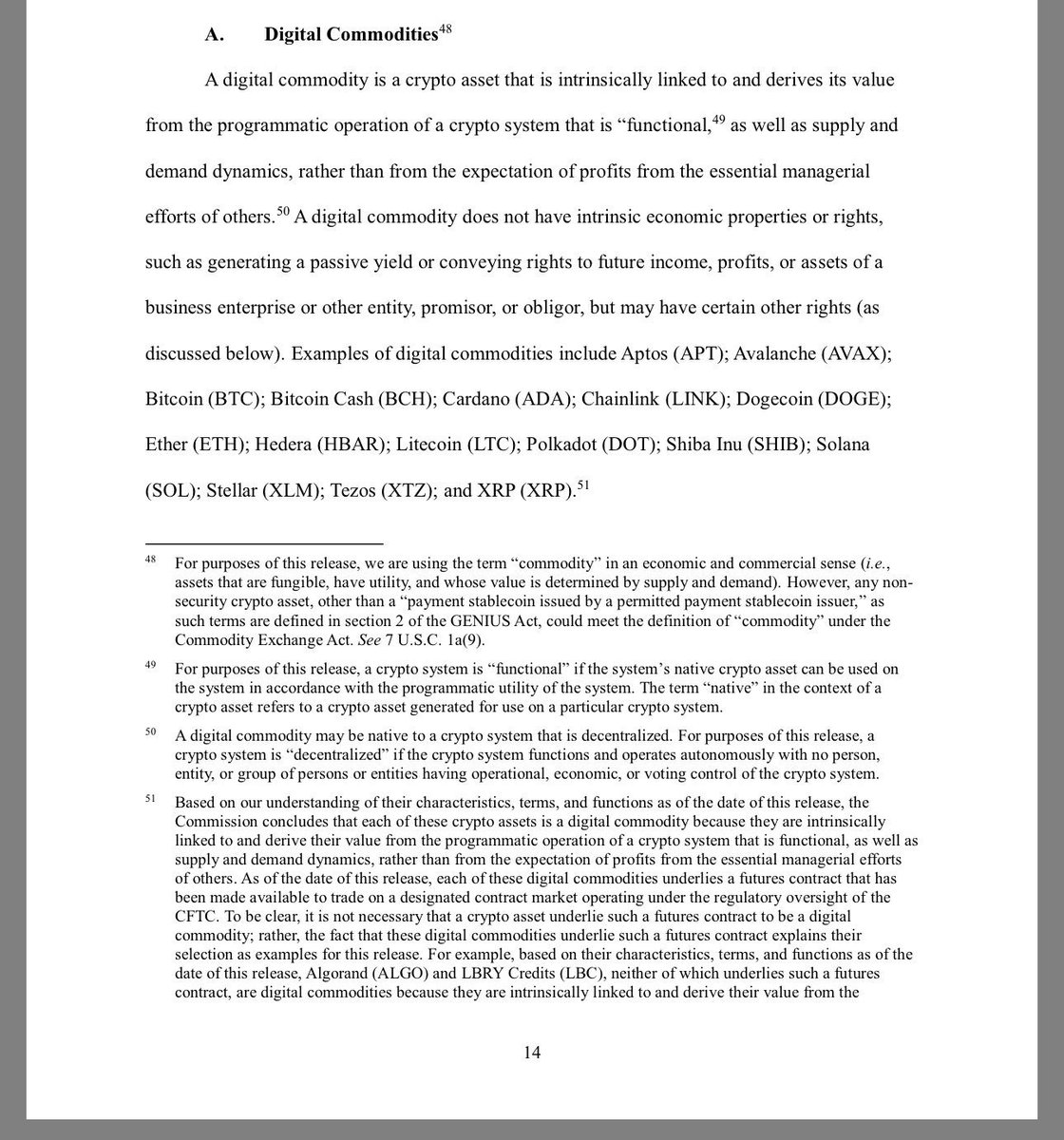

TODAY 🚨: The Commission issued an interpretation that clarifies the application of federal securities laws to crypto assets. This is a major step to provide greater clarity regarding the Commission’s treatment of crypto assets. Read the release here: ow.ly/XhhV50YvxvO

🚨 $XRP Ledger architecture reduces exposure to Ethereum-style mempool backrun MEV, shifting arbitrage toward low-latency market-making between ledger closes.

What happened on Ethereum:

1. The order sold 50,432,688 aEthUSDT and received only 327.24 aEthAAVE (about 154,115 USDT/AAVE effective).
2. That execution pushed 17,957.81 WETH into a thin Sushi AAVE pool and got only 331.305 AAVE out, creating a huge temporary price distortion.
3. Two bots immediately backran it in the same block (24643151) and drained the bad price:
   * 0x4538…d4ab: 128.566 AAVE -> 17,929.770 WETH
   * 0x8bd9…34d7: 202.376 AAVE -> 27.882 WETH

So the “money bot” profit came from arbitrage/backrun extraction after the giant bad swap distorted pool pricing.
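For readers who want to check the arithmetic in the post above, here is a minimal Python sketch. The first part recomputes the effective price from the quoted figures. The second part is a toy constant-product (x*y=k) pool showing why an oversized swap into a thin pool leaves a price that a backrunner can drain. The pool reserves, the 0.3% fee, and the trade sizes in the toy part are assumptions chosen for illustration, not the real Sushi pool state.

```python
from decimal import Decimal

# Figures quoted in the post.
usdt_sold = Decimal("50432688")
aave_received = Decimal("327.24")
effective_price = usdt_sold / aave_received  # USDT paid per AAVE received
print(f"effective price: {effective_price:,.0f} USDT/AAVE")

FEE = Decimal("0.003")  # assumed 0.3% swap fee


def swap(reserve_in, reserve_out, amount_in):
    """Constant-product swap: returns (amount_out, new_reserve_in, new_reserve_out)."""
    effective_in = amount_in * (1 - FEE)
    amount_out = reserve_out * effective_in / (reserve_in + effective_in)
    return amount_out, reserve_in + amount_in, reserve_out - amount_out


# Hypothetical thin pool: 100 WETH and 2,000 AAVE, so the fair price is 0.05 WETH/AAVE.
weth, aave = Decimal(100), Decimal(2000)
fair_price = weth / aave

# 1) An oversized buy pushes far more WETH into the pool than it can absorb.
victim_aave, weth, aave = swap(weth, aave, Decimal(900))

# 2) A backrunner sells AAVE (valued at the fair price) into the distorted pool.
sold = Decimal(500)
backrun_weth, aave, weth = swap(aave, weth, sold)
profit = backrun_weth - sold * fair_price
print(f"victim received {victim_aave:.2f} AAVE; backrun profit {profit:.2f} WETH")
```

In the toy pool the victim receives far fewer AAVE than the fair price would imply, and the difference is what the backrunner collects by selling into the distorted reserves.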

👉 Preparing Kadena for the quantum future. A draft for KIP-0041 has been submitted, proposing the integration of SLH-DSA (Post-Quantum Signatures). This would add a critical layer of long-term security. Developers and community members, check out the specification and share your thoughts. FYI, @CathieDWood @ARKInvest @mightybyte @peterthiel @novogratz @Linus__Torvalds @SirLensALot ❤️ Kadena is alive and preparing for a safer, more decentralized future. 🔗 github.com/kda-community/… 🔗 github.com/kda-community/… #Kadena $KDA #QuantumResistant #Quantum

🔥🔥🔥 "Why Kadena could become the heaven of A2A AI" by CryptoPascal31 A deep dive into why Kadena's horizontal scalability & multi-chain architecture might be the perfect fit for the future of Agent-to-Agent AI, especially compared to Ethereum L1s & centralized L2s like Base. Essential reading 👇 medium.com/@crypto-pac/why-kadena-could-become-the-heaven-of-a2a-ai-15c6b4d5fbec

#Kadena #AI #A2A #Web3 $KDA

Great work from the community! 🎉 KDA Tool v1.2 is out with key updates. A special thank you to @mightybyte for originally creating KDA Tool — the foundation for this community release! ✅ Pact 5 support ✅ KIP-0026 mnemonic derivations (Loom, Koala, Linx wallets) ✅ New --preflight & -d options for better control A solid upgrade for Kadena developers and power users. ⚙️ 🔗 github.com/kda-community/… #Kadena $KDA

1/7: The Big News 🧵 Big news for the Kadena community! We are excited to announce the launch of testnet06, a fresh, clean, and fully functional testnet built for developers, miners, and the community. Read the full announcement below 👇 📖 Medium Article: medium.com/@communitykadena/welcome-to-the-new-kadena-testnet-testnet06-a3ff8875060e

#Kadena #Testnet #Web3 $KDA #pact #chainweb

Do you know that the atomic unit of $KDA is a 'hop'? 🦅 ONE $KDA = 1,000,000,000,000 hops. Named after Admiral Grace Hopper. She invented the compiler and popularized "debugging," teaching computers to speak our language. A permanent tribute to her legacy lives on in every single transaction. 🫡 #Kadena #History
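A small Python sketch of the unit conversion described in the post above, taking the stated ratio of 1 KDA = 1,000,000,000,000 hops as given. The function names are hypothetical and not part of any Kadena library. Amounts are parsed from strings with `Decimal` so that fractional KDA values do not pick up floating-point error.

```python
from decimal import Decimal

HOPS_PER_KDA = 10**12  # ratio stated in the post


def kda_to_hops(amount: str) -> int:
    """Convert a KDA amount (as a decimal string) to a whole number of hops."""
    hops = Decimal(amount) * HOPS_PER_KDA
    if hops != hops.to_integral_value():
        raise ValueError("amount has more than 12 decimal places")
    return int(hops)


def hops_to_kda(hops: int) -> Decimal:
    """Convert a whole number of hops back to KDA."""
    return Decimal(hops) / HOPS_PER_KDA


print(kda_to_hops("1.5"))
print(hops_to_kda(1))
```

Rejecting amounts finer than one hop, rather than rounding them silently, is a design choice made for this sketch.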

Hyper-scaling Ethereum state by creating new forms of state: ethresear.ch/t/hyper-scalin…

Summary:

* We want 1000x scale on Ethereum L1. We roughly know how to do this for execution and data. But scaling state is fundamentally harder.
* The most practical path for Ethereum may actually be to scale existing state only a medium amount, and at the same time introduce newer forms of state that would be extremely cheap but also more restrictive in how you can use them.
* In such a design, the present-day state tree would over time become dominated by user accounts, defi hub contracts, code, and other high-value objects, while all kinds of individual per-user state objects (eg. ERC20 balances, NFTs, CDPs) would be handled with cheaper but more restrictive tools. Making the developer abstractions to make this easy to implement for the use cases that make up >90% of state today seems very doable.

There have recently been some discussions on the ongoing role of L2s in the Ethereum ecosystem, especially in the face of two facts:

* L2s' progress to stage 2 (and, secondarily, on interop) has been far slower and more difficult than originally expected
* L1 itself is scaling, fees are very low, and gas limits are projected to increase greatly in 2026

Both of these facts, for their own separate reasons, mean that the original vision of L2s and their role in Ethereum no longer makes sense, and we need a new path.

First, let us recap the original vision. Ethereum needs to scale. The definition of "Ethereum scaling" is the existence of large quantities of block space that is backed by the full faith and credit of Ethereum - that is, block space where, if you do things (including with ETH) inside that block space, your activities are guaranteed to be valid, uncensored, unreverted, untouched, as long as Ethereum itself functions. If you create a 10000 TPS EVM where its connection to L1 is mediated by a multisig bridge, then you are not scaling Ethereum.

This vision no longer makes sense. L1 does not need L2s to be "branded shards", because L1 is itself scaling. And L2s are not able or willing to satisfy the properties that a true "branded shard" would require. I've even seen at least one explicitly saying that they may never want to go beyond stage 1, not just for technical reasons around ZK-EVM safety, but also because their customers' regulatory needs require them to have ultimate control. This may be doing the right thing for your customers. But it should be obvious that if you are doing this, then you are not "scaling Ethereum" in the sense meant by the rollup-centric roadmap. But that's fine! It's fine because Ethereum itself is now scaling directly on L1, with large planned increases to its gas limit this year and the years ahead.
We should stop thinking about L2s as literally being "branded shards" of Ethereum, with the social status and responsibilities that this entails. Instead, we can think of L2s as being a full spectrum, which includes both chains backed by the full faith and credit of Ethereum with various unique properties (eg. not just EVM), as well as a whole array of options at different levels of connection to Ethereum, that each person (or bot) is free to care about or not care about depending on their needs.

What would I do today if I were an L2?

* Identify a value add other than "scaling". Examples: (i) non-EVM specialized features/VMs around privacy, (ii) efficiency specialized around a particular application, (iii) truly extreme levels of scaling that even a greatly expanded L1 will not do, (iv) a totally different design for non-financial applications, eg. social, identity, AI, (v) ultra-low-latency and other sequencing properties, (vi) maybe built-in oracles or decentralized dispute resolution or other "non-computationally-verifiable" features
* Be stage 1 at the minimum (otherwise you really are just a separate L1 with a bridge, and you should just call yourself that) if you're doing things with ETH or other Ethereum-issued assets
* Support maximum interoperability with Ethereum, though this will differ for each one (eg. what if you're not EVM, or even not financial?)

From Ethereum's side, over the past few months I've become more convinced of the value of the native rollup precompile, particularly once we have enshrined ZK-EVM proofs that we need anyway to scale L1. This is a precompile that verifies a ZK-EVM proof, and it's "part of Ethereum", so (i) it auto-upgrades along with Ethereum, and (ii) if the precompile has a bug, Ethereum will hard-fork to fix the bug. The native rollup precompile would make full, security-council-free, EVM verification accessible.
We should spend much more time working out how to design it in such a way that if your L2 is "EVM plus other stuff", then the native rollup precompile would verify the EVM, and you only have to bring your own prover for the "other stuff" (eg. Stylus). This might involve a canonical way of exposing a lookup table between contract call inputs and outputs, and letting you provide your own values to the lookup table (that you would prove separately). This would make it easy to have safe, strong, trustless interoperability with Ethereum. It also enables synchronous composability (see: ethresear.ch/t/combining-pr… and ethresear.ch/t/synchronous-… ).

And from there, it's each L2's choice exactly what they want to build. Don't just "extend L1", figure out something new to add. This of course means that some will add things that are trust-dependent, or backdoored, or otherwise insecure; this is unavoidable in a permissionless ecosystem where developers have freedom. Our job should be to make it clear to users what guarantees they have, and to build up the strongest Ethereum that we can.

🚨Urgent 🚨 Matcha 0x router is being exploited as we speak, already ~$14,000,000 stolen. Revoke access to their router contract. The best way I found is using the built-in Rabby Wallet feature - it is the BEST WALLET I've ever experienced in Web3. You can see all the approvals by risk/network/token and perform batch revokes!