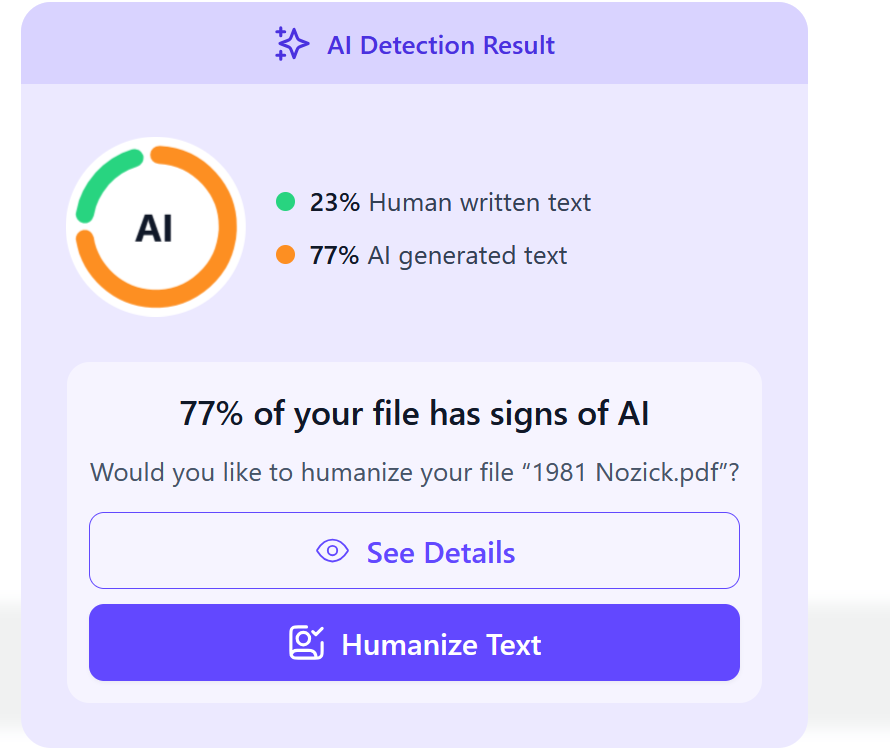

I ran some of my writing through an AI checker. 29.7% robot-generated! Thing is, it obviously wasn't. Ethical and creative reasons aside, the book in question is nearly a decade old - well before technology could do this. 1/3

Houcemeddine Turki

@Csisc1994

Medical Student (@Univ_Sfax, ASc '18). CS Student (@UoPeople, ASc '25, BSc '26). Former Board Member (@WikimediaTN, @WikiLibrary, @WikimediaAffCom, @DES_Unit)

Exact same issue for me - I know my previous books and articles have been used to train AI (looking at you, Anthropic), and when I run older articles (written pre-AI) through AI checkers, they can come back as high as 90% AI. It's not artificial intelligence - it's collective human intelligence.