Suresh


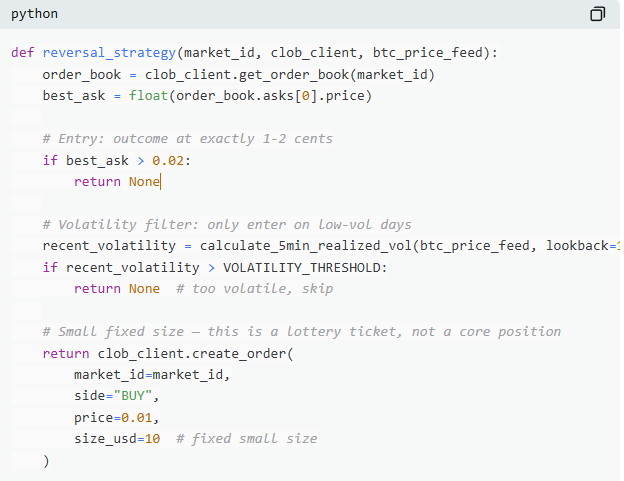

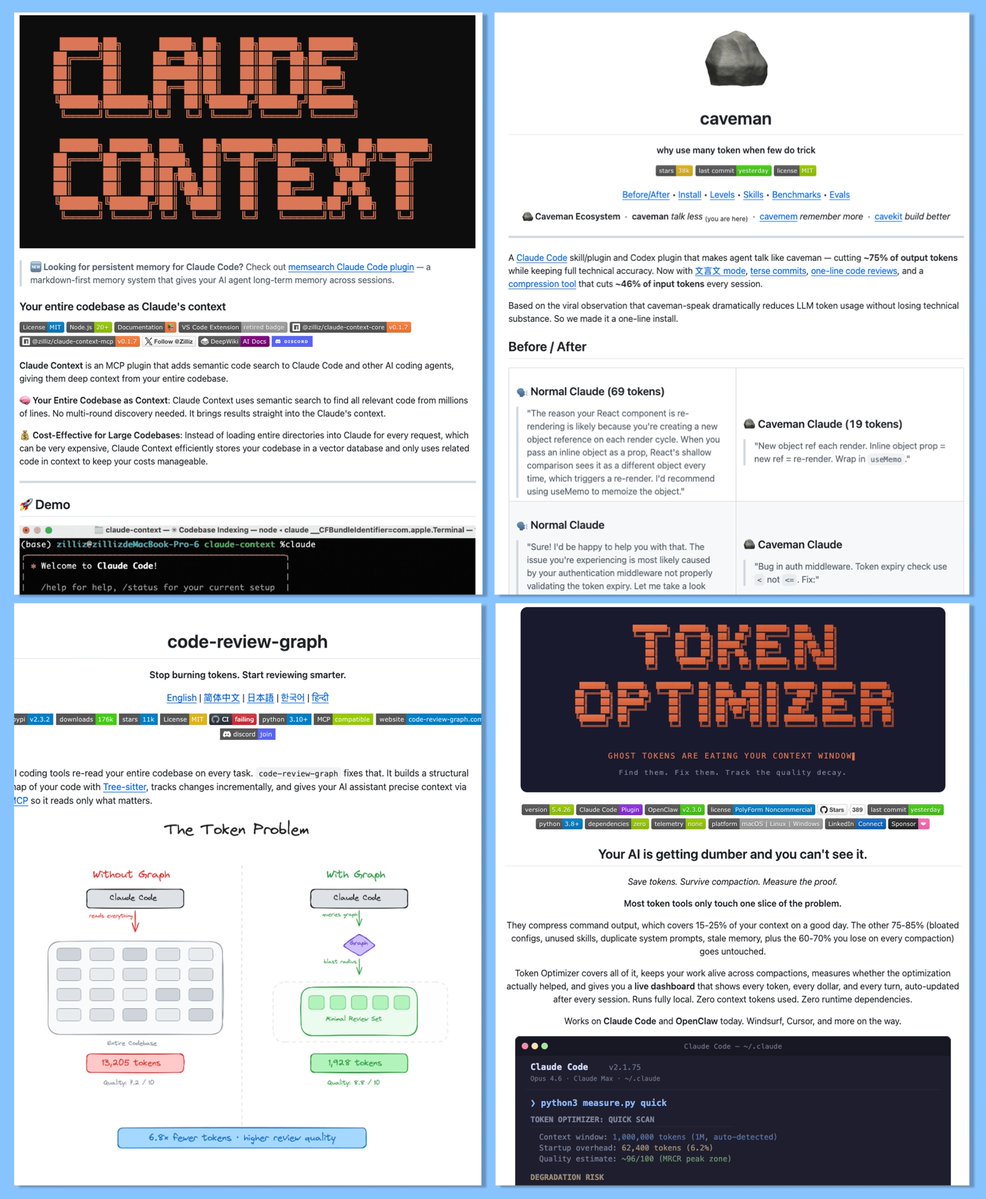

10 GitHub repos that cut your Claude Code token usage by 60–90%

This will help you when creating your own trading bots and tools for Polymarket, as well as for any other tasks.

Here's the full list:

1. RTK (Rust Token Killer)
   CLI proxy that filters terminal output before it hits your context window. 60–90% reduction on common dev commands. One binary, zero dependencies. Works with Claude Code, Cursor, Copilot.
   GitHub: github.com/rtk-ai/rtk

2. Token Savior
   MCP server that navigates code by symbols, not full files. 97% reduction on code navigation. Persistent memory across sessions. 69 tools, zero external dependencies.
   GitHub: github.com/Mibayy/token-s…

3. Context Mode
   Sandboxes raw tool output into SQLite instead of dumping it straight into context. 98% context reduction on Playwright, GitHub, logs. Only clean summaries enter your conversation. Works as a Claude Code plugin.
   GitHub: github.com/mksglu/context…

4. code-review-graph
   Local knowledge graph that maps your codebase with Tree-sitter. Claude reads only what matters - not the entire repo. 49x token reduction on large monorepos. 6.8x on average reviews.
   GitHub: github.com/tirth8205/code…

5. Caveman Claude
   Makes Claude respond like a caveman to cut output tokens. 65–75% output reduction. One-line install. Full technical accuracy stays intact.
   GitHub: github.com/JuliusBrussee/…

6. token-optimizer-mcp
   MCP server with caching, compression, and smart tool intelligence. 95%+ token reduction through intelligent caching. Compresses repeated tool outputs automatically.
   GitHub: github.com/ooples/token-o…

7. claude-token-efficient
   One CLAUDE.md file that keeps responses terse. Drop-in, no code changes needed. Reduces output verbosity on heavy workflows. Best for output-heavy sessions.
   GitHub: github.com/drona23/claude…

8. claude-context (by Zilliz)
   Code search MCP that makes your entire codebase the context. ~40% reduction with equivalent retrieval quality. Hybrid BM25 + dense vector search.
   GitHub: github.com/zilliztech/cla…

9. claude-token-optimizer
   Reusable setup prompts for optimizing any project. 90% token savings in 5 minutes. Reduces doc token usage from 11K to 1.3K.
   GitHub: github.com/nadimtuhin/cla…

10. token-optimizer
    Finds ghost tokens that silently eat your context. Survives compaction without losing quality. Fixes context quality decay over long sessions.
    GitHub: github.com/alexgreensh/to…

You don't need all 10. Pick 2–3 based on your workflow:

• Heavy terminal output? → RTK
• Big codebase? → code-review-graph + Token Savior
• Lots of MCP servers? → Context Mode
• Quick fix? → Caveman + claude-token-efficient

Most people are burning tokens without knowing it. Run /context in a fresh session and see how much is already gone before you type a single word.

Like/RT/Bookmark if this was helpful. Follow me for more!

How to pull markets from Polymarket by specific niche

Most people work with all markets at once and drown in data. If you're building a tool or analyzing a specific topic, you need filtering by niche right from the start.

Here's how it works via the Gamma API:

>Tags are the basis of filtering
Every market on Polymarket has tags, and every tag has a numeric ID. Filtering happens through this ID:
• Politics → tag_id = 2
• Sports → tag_id = 1
• Crypto → tag_id = 21

First you make a request to /tags/{id} to make sure the tag exists and to get its metadata. Then you pass this ID as a parameter in the request to /markets.

>Request parameters
The Gamma API accepts flexible parameters that you can combine for any task:
• tag_id - filter by niche
• closed - only completed or only active markets
• end_date_min / end_date_max - time range
• limit / offset - pagination, maximum 200 per request
• order / ascending - sorting by date, volume, etc.

>Pagination
There are a lot of markets, so the API returns them in pages. You need to make requests in a loop, each time increasing the offset by the page size, until you get an empty response.

>Volume filtering
The API returns all markets, including micro-markets with zero volume. It usually makes sense to additionally filter by minimum volume (for example, from $50,000) to keep only liquid markets where there was real trading.

>Result
At the output you get a clean list of markets for a specific niche for the required period, with volumes, prices, resolution dates and metadata. This can be used for strategy analysis, backtesting, building a dashboard or training a model.

Three parameters - tag, period, minimum volume. That's enough to pull any niche from Polymarket.
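The loop described above can be sketched roughly as follows. This is a minimal sketch, not an official client: the host `gamma-api.polymarket.com`, the ISO date format, the `"true"` string for `closed`, and the `volume` field name in the response are all assumptions to check against the Gamma API docs. `pull_niche` and `http_get_page` are hypothetical helper names.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

GAMMA_MARKETS_URL = "https://gamma-api.polymarket.com/markets"  # assumed host
PAGE_SIZE = 200  # per-request maximum mentioned in the post


def http_get_page(params):
    """Fetch one page of markets as a list of dicts (makes a network call)."""
    with urlopen(f"{GAMMA_MARKETS_URL}?{urlencode(params)}", timeout=30) as resp:
        return json.load(resp)


def pull_niche(tag_id, end_date_min, end_date_max, min_volume,
               get_page=http_get_page):
    """Collect all markets for one tag and period, then keep the liquid ones."""
    markets, offset = [], 0
    while True:
        page = get_page({
            "tag_id": tag_id,
            "closed": "true",              # assumed encoding of the flag
            "end_date_min": end_date_min,  # assumed ISO dates
            "end_date_max": end_date_max,
            "limit": PAGE_SIZE,
            "offset": offset,
        })
        if not page:  # an empty response means there are no more pages
            break
        markets.extend(page)
        offset += PAGE_SIZE
    # "volume" is an assumed field name; it may arrive as a string or be missing.
    return [m for m in markets if float(m.get("volume") or 0) >= min_volume]
```

A call such as `pull_niche(21, "2024-01-01", "2024-12-31", 50_000)` would then cover the three inputs from the post: tag, period, minimum volume. Passing `get_page` as a parameter keeps the loop testable without hitting the network.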

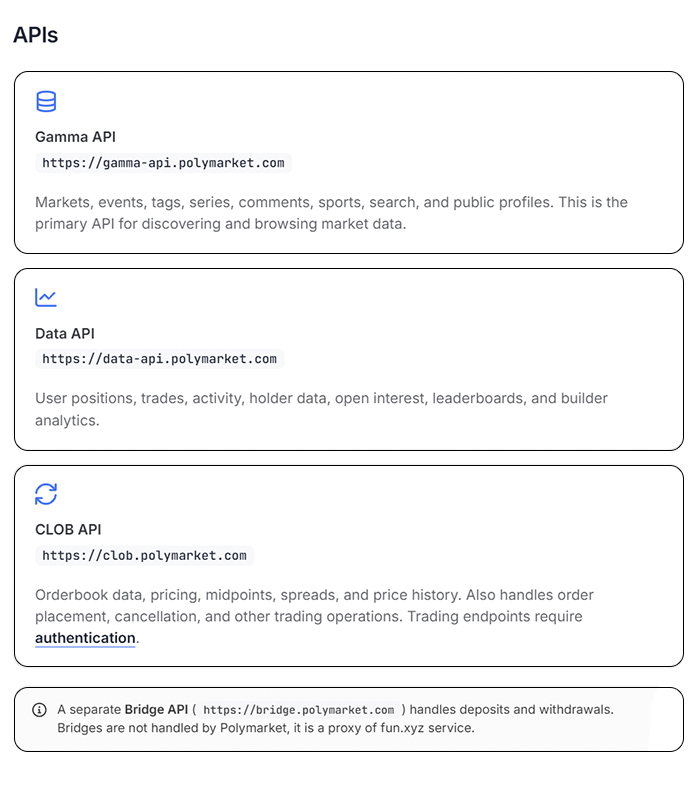

If you want to build on Polymarket, start by getting to know their API

There are three of them, and each is responsible for its own task. Without this basic understanding, any tool or bot would be built on a whim.

1. Gamma API - Markets and Discovery
   This is your gateway to the data. Through it, you can retrieve a list of all markets and events, filter by category, and search for specific markets. This is where most tools pull their metadata - market names, descriptions, tags, and status. It works without authentication.

2. CLOB API - Prices and Order Book
   Everything related to live trading. Current prices, historical data, spreads, and a real-time order book. If you're building a bot that trades or analyzes price movements, this is where you'll spend most of your time. Orders are also placed and executed through this interface.

3. Data API - Positions, Trades, and Analytics
   Everything related to a specific wallet and trading history. A user's current positions, closed positions, trade history, total open interest, and the market's top holders. This API forms the foundation of any wallet tracker or analytical tool.

Three APIs - three layers of data: discovery, trading, and analytics. If you understand this, you understand how Polymarket works under the hood.
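One way to keep the three layers straight in your own code is a small lookup table. A rough sketch: the base URLs below are assumptions to verify against the official docs, the topic keywords are just paraphrased from the descriptions above, and `Api` and `api_for` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Api:
    name: str
    base_url: str   # assumed host, verify before use
    topics: tuple   # what this layer is responsible for


APIS = (
    Api("Gamma API", "https://gamma-api.polymarket.com",
        ("markets", "events", "tags", "search")),
    Api("CLOB API", "https://clob.polymarket.com",
        ("prices", "order book", "spreads", "orders")),
    Api("Data API", "https://data-api.polymarket.com",
        ("positions", "trades", "open interest", "holders")),
)


def api_for(topic):
    """Return the API layer responsible for a given kind of data."""
    for api in APIS:
        if topic in api.topics:
            return api
    raise KeyError(f"no API registered for topic: {topic!r}")
```

For example, `api_for("order book").name` gives `"CLOB API"`, while `api_for("positions")` points a wallet tracker at the Data API.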

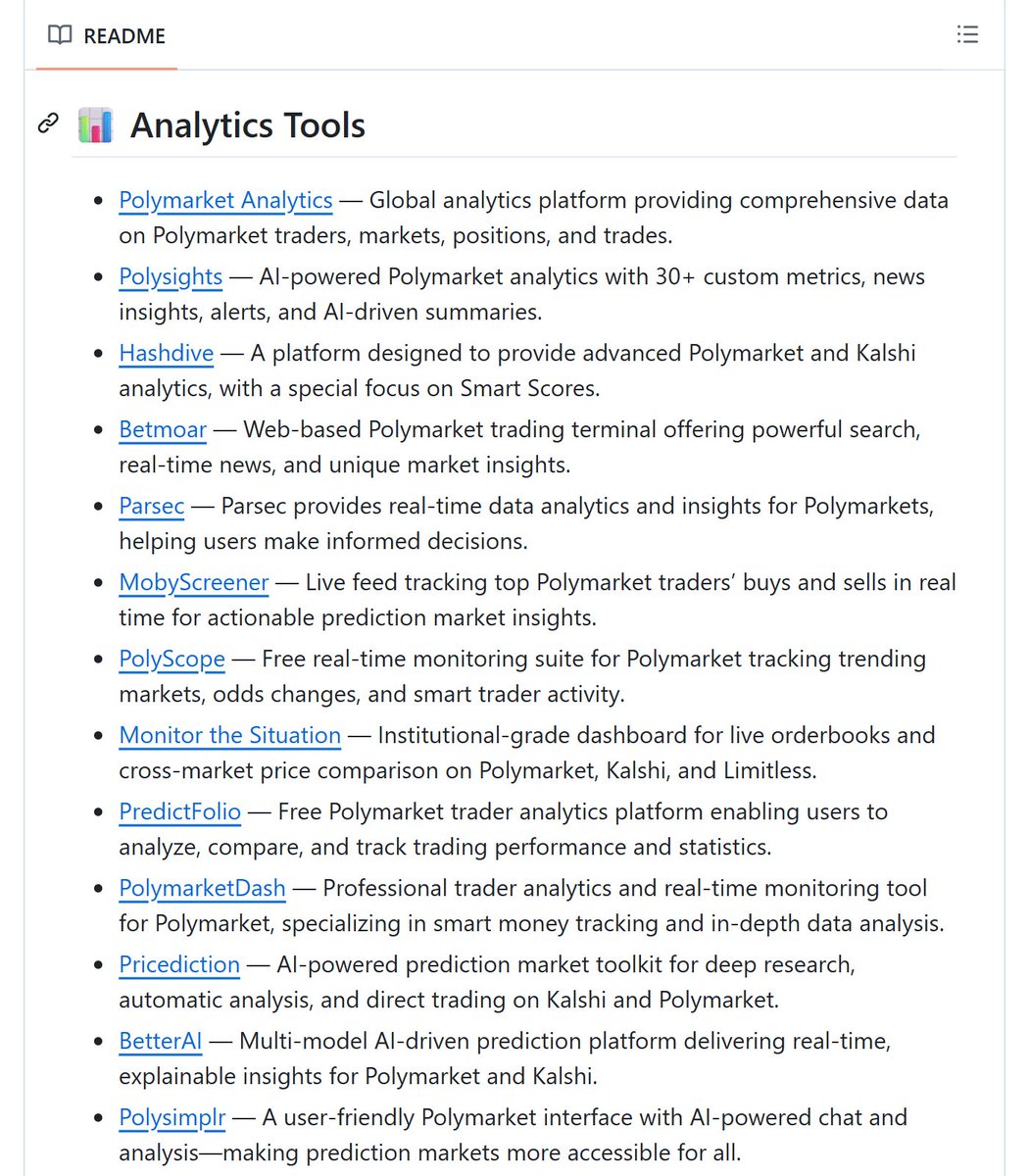

How to make $5,000-15,000 building simple tools for Polymarket

Polymarket processes billions of dollars a month, and there are still almost no decent tools for traders. Only 5% of Polymarket's total volume comes from the ecosystem - meaning 95% of liquidity is still untapped.

This is a classic indie hacker opportunity: you take one specific trader pain, vibe code a simple tool that solves it in a few days, and sell it as a subscription. No venture capital, no team, no investors.

What you can build right now:
> Telegram bot with alerts when a market probability shifts fast
> Wallet tracker with clean analytics on traders
> Real-time portfolio monitoring dashboard
> Market screener with filters by volume, probability, and movement

Any of these can be built with vibe coding in a few days.

How to find your first users:
They're already out there - on Twitter, in the Polymarket Discord, on Reddit. Show up, show what you built, give free access to the first 20 people, collect feedback. Traders who are making money on Polymarket will happily pay for tools that give them an edge or save them time.

How to monetize:
$15-30/month subscription. Niche SaaS tools consistently reach $5-15K with a few hundred paying users. You can also sell the business on Acquire - niche SaaS exits at 2-4x annual revenue.

The market is growing. The tools don't exist. The window is open.

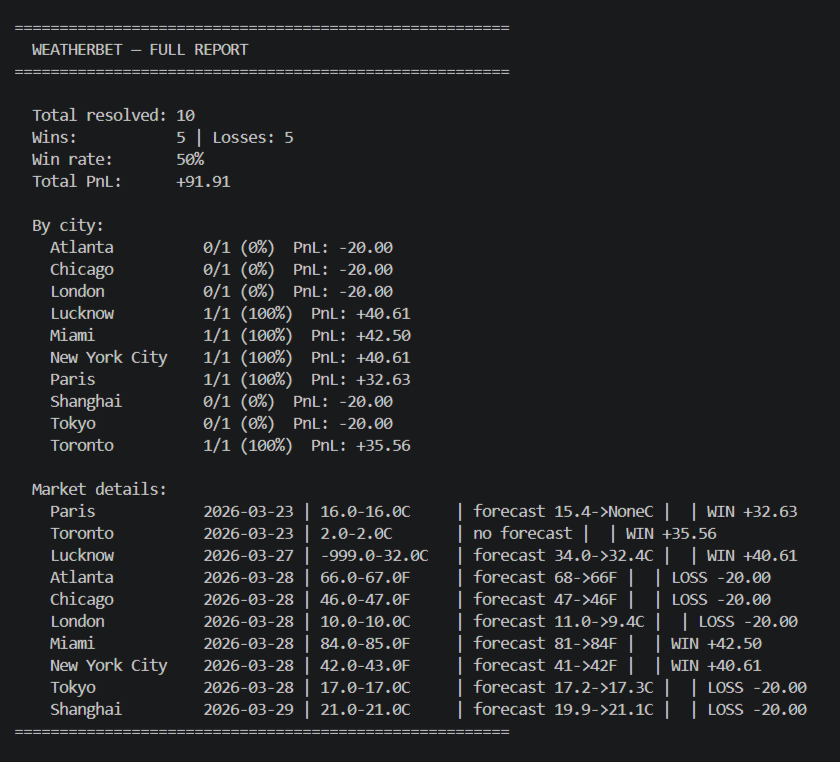

This GitHub repository lets you backtest trading strategies on real Polymarket and Kalshi data

An event-driven engine that replays historical trades in chronological order - simulating order fills, portfolio tracking, and market lifecycle events. Built on top of Jon-Becker's dataset with 36GB of real trade history.

What's inside:
> Three ready-to-use strategies: buying at low prices, calibration arbitrage, and martingale with mean-reversion
> Detailed charts: equity curve, P&L, drawdown, Sharpe, monthly returns
> Polymarket and Kalshi support out of the box
> Simple API for writing your own strategies - drop a file in the folder and it appears in the menu automatically
> Hooks for every event: market open, close, resolution, order fill

Most people test strategies on paper or go straight to live trading. This engine lets you run a strategy through millions of real trades before spending a single dollar. You see the drawdown, Sharpe, monthly returns - all on real data, not synthetics.

Repo is in active development, full release planned in 1-2 months. Good time to get in early and star it while there's still barely anyone there.

GitHub: github.com/evan-kolberg/p…
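To make "event-driven replay with hooks" concrete, here is a toy sketch of the general idea. It is not the repo's actual API: every class, field, and function name is hypothetical, fills are assumed to happen in full at the observed trade price, and only two event kinds are modeled.

```python
from dataclasses import dataclass, field


@dataclass
class Event:
    ts: int               # timestamp used for chronological ordering
    kind: str             # "trade" or "resolution"
    market: str
    price: float = 0.0    # YES share price for "trade" events, 0..1
    outcome: int = 0      # for "resolution": 1 if YES won, else 0


@dataclass
class Portfolio:
    cash: float
    shares: dict = field(default_factory=dict)  # market -> YES shares held

    def buy(self, market, price, qty):
        """Simulated fill: assume a full fill at the observed trade price."""
        cost = price * qty
        if cost <= self.cash:
            self.cash -= cost
            self.shares[market] = self.shares.get(market, 0.0) + qty


class BuyLow:
    """Toy strategy: buy 10 YES shares once per market below a price threshold."""

    def __init__(self, threshold=0.10):
        self.threshold = threshold
        self.entered = set()

    def on_trade(self, event, portfolio):
        if event.price < self.threshold and event.market not in self.entered:
            self.entered.add(event.market)
            portfolio.buy(event.market, event.price, 10)

    def on_resolution(self, event, portfolio):
        pass  # hook left empty in this sketch


def run_backtest(events, strategy, cash=100.0):
    """Replay events in time order, call strategy hooks, settle resolutions."""
    portfolio = Portfolio(cash)
    cash_curve = []  # cash after each event (positions are not marked to market)
    for event in sorted(events, key=lambda e: e.ts):
        if event.kind == "trade":
            strategy.on_trade(event, portfolio)
        elif event.kind == "resolution":
            qty = portfolio.shares.pop(event.market, 0.0)
            portfolio.cash += qty * event.outcome  # each winning share pays 1
            strategy.on_resolution(event, portfolio)
        cash_curve.append(portfolio.cash)
    return portfolio, cash_curve
```

A real engine would also mark open positions to market, model partial fills and fees, and compute drawdown and Sharpe from the resulting curve; this sketch only shows the replay loop and the hook pattern.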

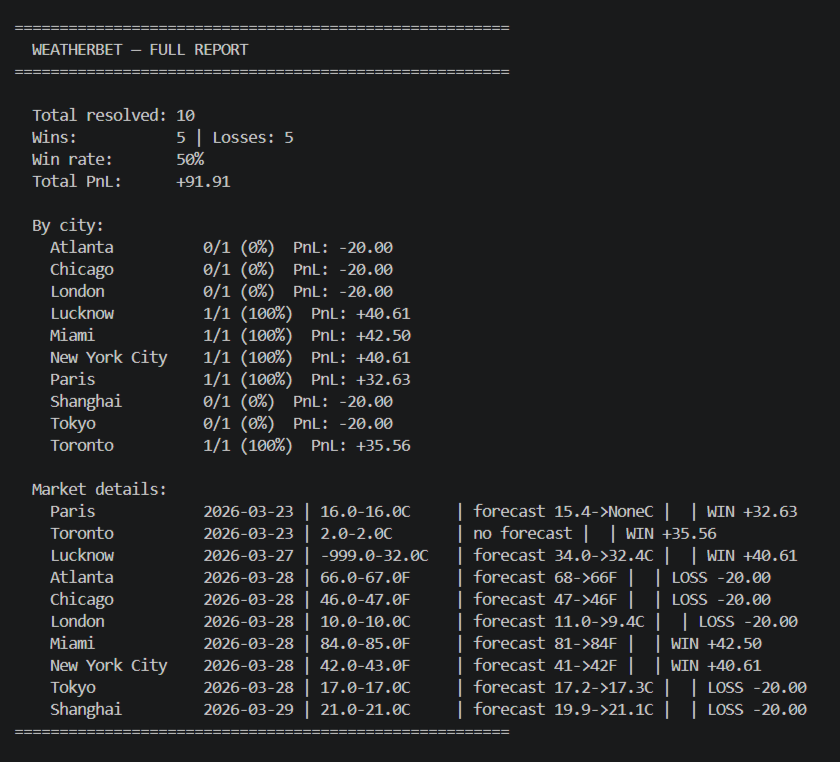

36 GB of real analytical data based on over 72 million Polymarket trades is available on GitHub, and it's absolutely free. This is the largest public prediction market dataset I have ever found.

Here is how you can actually use it for trading on Polymarket, with a real example:

This dataset lets you build your own strategies on real historical market data. For example, you can analyze how prices typically behave right before a market resolves or right after it opens. You can also explore which market categories are more volatile than others, or find patterns in price movement that repeat over time.

Now let's imagine you have already analyzed a huge number of trades and noticed a pattern. For example, you discovered that movie markets are less volatile and usually have a clear winner right from the start (the outcome with the highest probability). This is just a simple example.

After that, you can use the second tool from GitHub - a working simulator built on this exact dataset. The simulator takes all past markets, analyzes their behaviour from open to close, and applies your own strategy to them. As a result, it calculates the potential accuracy, PnL and risks as if you had actually made all these trades yourself.

So, you take your strategy: always buy the most probable outcome at market open, only in movie markets. The simulator tests this strategy across all existing past movie markets and gives you its success rate. Based on that, you can decide whether it's actually worth using.

This way, you can test hundreds of such strategies without risking any real money, see their average performance, and choose the best one for yourself or for your trading bot.

If this post gets enough likes, I will run a real test of these two repos with proof and a detailed breakdown of how everything works.

Here are these two repositories:

1. 36 GB dataset (also read the research paper from the dev): github.com/jon-becker/pre…
2. The simulator: github.com/evan-kolberg/p…
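The "always buy the most probable outcome at open" check can be sketched in a few lines. This is not the simulator's code: the record layout (`category`, `open_prices`, `winner`) is a made-up stand-in for whatever you extract from the dataset, and `favorite_at_open` is a hypothetical name. It assumes you buy one share of the favorite at its opening price and that a winning share pays out 1.

```python
def favorite_at_open(markets, category):
    """Score 'buy the opening favorite' across resolved markets in one category."""
    picked = [m for m in markets if m["category"] == category]
    wins, pnl = 0, 0.0
    for m in picked:
        prices = m["open_prices"]              # outcome -> price at market open
        favorite = max(prices, key=prices.get)  # most probable outcome at open
        won = favorite == m["winner"]
        wins += won
        pnl += (1.0 if won else 0.0) - prices[favorite]  # payout minus cost
    count = len(picked)
    return {
        "markets": count,
        "hit_rate": wins / count if count else 0.0,
        "pnl_per_share": pnl,
    }


# Toy records for illustration only, not real data.
sample = [
    {"category": "movies", "open_prices": {"A": 0.7, "B": 0.3}, "winner": "A"},
    {"category": "movies", "open_prices": {"A": 0.6, "B": 0.4}, "winner": "B"},
    {"category": "sports", "open_prices": {"A": 0.9, "B": 0.1}, "winner": "A"},
]
report = favorite_at_open(sample, "movies")
```

Note that a high hit rate alone does not make the strategy profitable: favorites are expensive, so the PnL figure is the one to watch.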