It’s official: the first large-scale inherently interpretable language model is here.

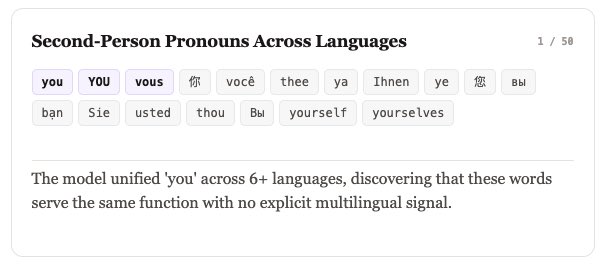

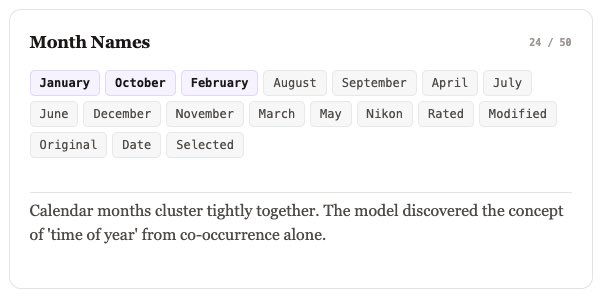

Steerling-8B from @guidelabsai is the first and largest model that can trace every token it generates back to:

→ Input Context

→ Training data

→ Human-understandable concepts

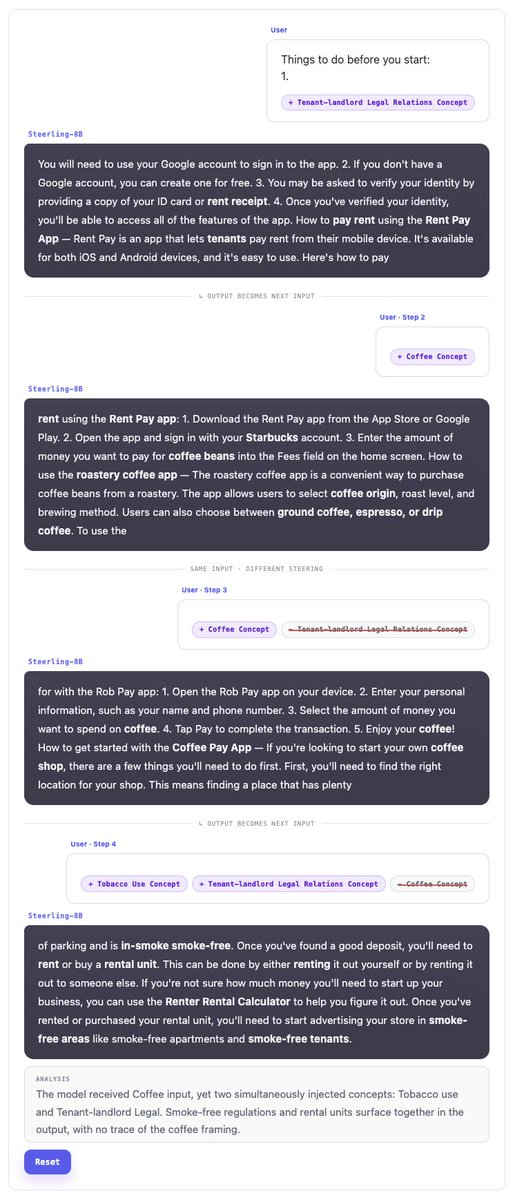

In other words, we've trained Steerling-8B to trace its outputs and explain what influenced each one, so its behavior can be steered more reliably. This isn’t post-hoc explainability: interpretability is built directly into the model.

🔓 Steerling-8B can self-monitor for memorized content and suppress it at inference time, without retraining. That makes interpretability a first-class design principle, not an afterthought.

This is a major step toward models we can actually understand, debug, and trust.

Over the coming days, we’ll be sharing investigations into what Steerling-8B’s interpretability enables in practice. Stay tuned as we dive deeper into our research & how we are building LLMs we can trust.

🚨 Try it LIVE and help improve it:

Guide Labs: guidelabs.ai/post/steerling…

GitHub: github.com/guidelabs/stee…

Hugging Face: huggingface.co/guidelabs/stee…

Huge thank you to @TimFernholz and @TechCrunch for featuring this breakthrough. techcrunch.com/2026/02/23/gui…

#Steerling8B #GuideLabs #AI #MachineLearning
